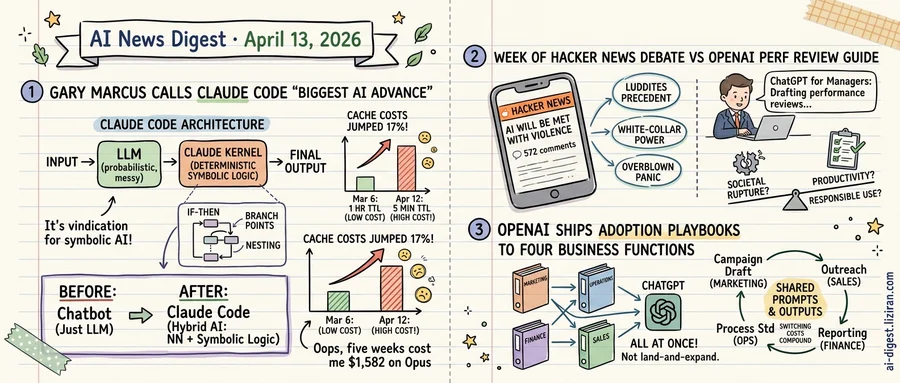

01 Gary Marcus Calls Claude Code the Biggest AI Advance Since LLMs. The Same Week, Its Cache Costs Jumped 17%.

Gary Marcus has spent a quarter century arguing that neural networks alone will never be enough. He published The Algebraic Mind in 2001, debated Yoshua Bengio in 2019, and sparred with Geoff Hinton's disciples for most of the years in between. The industry's response ranged from polite dismissal to open hostility.

On April 11, Marcus declared Claude Code "the biggest advance in AI since the LLM." Not because it's a better chatbot. Because, he argues, it's not really a chatbot at all.

Leaked source code shows a 3,167-line kernel called print.ts at Claude Code's core. It contains 486 branch points and 12 levels of nesting: deterministic symbolic logic wrapped around the LLM's probabilistic outputs. "A big IF-THEN conditional," Marcus writes, inside "a deterministic, symbolic loop." For him, this is vindication. The industry's most capable coding tool works by hybridizing neural and symbolic approaches, not by scaling harder.

Claude Code "ain't perfect, or even close," he concedes. The symbolic code is "a mess." But the architecture, he insists, proves that hybrid AI works.
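To make the pattern Marcus describes concrete, here is a minimal sketch of a deterministic loop wrapped around a probabilistic proposer. It is not taken from Claude Code or print.ts. The names `ALLOWED_ACTIONS`, `scripted_model`, and `run_agent` are hypothetical, and the "model" is a scripted stand-in rather than a real LLM call.

```python
from typing import Callable, Iterable, List, Tuple

# Hypothetical whitelist: the symbolic layer decides what is permitted.
ALLOWED_ACTIONS = {"read_file", "run_tests", "done"}


def scripted_model(outputs: Iterable[str]) -> Callable[[str], str]:
    """Stand-in for an LLM: replays canned proposals, then answers 'done'."""
    it = iter(outputs)

    def model(prompt: str) -> str:
        return next(it, "done")

    return model


def run_agent(model: Callable[[str], str], max_steps: int = 10) -> List[Tuple[str, str]]:
    """Deterministic control loop: IF-THEN checks gate every model proposal."""
    log: List[Tuple[str, str]] = []
    for step in range(max_steps):  # hard bound, independent of the model
        proposal = model(f"step {step}: choose the next action")
        if proposal not in ALLOWED_ACTIONS:
            log.append(("rejected", proposal))  # symbolic rule overrides the model
            continue
        log.append(("accepted", proposal))
        if proposal == "done":
            break
    return log


if __name__ == "__main__":
    model = scripted_model(["read_file", "rm -rf /", "run_tests", "done"])
    for verdict, action in run_agent(model):
        print(verdict, action)
```

The model only proposes. The loop bound, the whitelist, and the stop condition are ordinary conditionals, which is the sense in which the control flow is symbolic while the proposals are not.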

The same week Marcus was celebrating the product, its users were documenting something less flattering. A GitHub issue filed on April 12 analyzed 119,866 API calls across two machines. The data showed that Anthropic had shifted Claude Code's prompt cache TTL (time to live) from one hour to five minutes around March 6. Cache writes cost 12.5 times as much as cache reads, so a five-minute TTL forces an expensive re-upload whenever a developer steps away from the keyboard for longer than that.

One user calculated $949 in excess costs over five weeks on Sonnet pricing. On Opus, the figure reached $1,582. Pro plan subscribers reported hitting quota limits for the first time in March with no prior notice.

Anthropic called the change intentional optimization, not a regression. Many requests are one-shot calls with no follow-up read, the company said, so shorter TTLs are cheaper in aggregate. A client-side bug that locked some sessions into five-minute TTL was fixed in version 2.1.90. The one-hour default will not return.

Marcus sees proof that symbolic AI has arrived. Developers building on it daily see infrastructure costs quietly climbing. The gap matters most for teams running long agentic sessions. A cache miss after five idle minutes triggers a full context re-upload costing $6 per million tokens on Opus.
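The arithmetic behind that gap can be sketched in a few lines. This is a rough model, not Anthropic's billing logic. It takes the $6 per million tokens re-upload figure and the 12.5x write-to-read ratio from the reporting above, and it assumes the same write price under both TTLs, a fixed context size, and no new tokens per turn. The function name and the sample session are hypothetical.

```python
from typing import Sequence

WRITE_USD_PER_MTOK = 6.00    # Opus re-upload figure quoted in the text
WRITE_TO_READ_RATIO = 12.5   # ratio quoted in the text
READ_USD_PER_MTOK = WRITE_USD_PER_MTOK / WRITE_TO_READ_RATIO  # 0.48


def session_cache_cost(context_tokens: int,
                       gaps_minutes: Sequence[float],
                       ttl_minutes: float) -> float:
    """Estimate cache cost in USD for one session.

    The first turn always writes the cache. Each later turn reads it if the
    idle gap since the previous turn is shorter than the TTL; otherwise the
    entry has expired and the full context is written again.
    Assumption: write price is identical for both TTL settings.
    """
    mtok = context_tokens / 1_000_000
    cost = mtok * WRITE_USD_PER_MTOK  # initial cache write
    for gap in gaps_minutes:
        if gap < ttl_minutes:
            cost += mtok * READ_USD_PER_MTOK   # cache hit
        else:
            cost += mtok * WRITE_USD_PER_MTOK  # cache miss: full re-upload
    return cost


if __name__ == "__main__":
    # Hypothetical session: 100k-token context, four follow-up turns
    # after idle gaps of 2, 10, 3, and 30 minutes.
    gaps = [2, 10, 3, 30]
    for ttl in (5, 60):
        print(f"TTL {ttl:>2} min: ${session_cache_cost(100_000, gaps, ttl):.3f}")
```

Under these assumptions the sample session costs about $1.90 with a five-minute TTL and about $0.79 with a one-hour TTL. The whole difference comes from the two gaps longer than five minutes.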

02 The week 572 people debated AI violence on Hacker News, OpenAI published a guide to writing performance reviews with ChatGPT

"AI Will Be Met with Violence, and Nothing Good Will Come of It" appeared on The Algorithmic Bridge this week, and the title is literal. Public resentment toward AI is accumulating faster than institutions can absorb it, the article argues. It draws on historical patterns where technological displacement turned physical.

OpenAI, during the same news cycle, published "ChatGPT for Managers." The training module walks corporate middle managers through using ChatGPT to draft performance feedback, prepare for difficult conversations, and organize team workflows. A companion guide, "Responsible and Safe Use of AI," covers accuracy checking and transparency. Both treat adoption as a given. The only open question is technique.

One publication predicts societal rupture. The other assumes the transition is complete and reduces "responsible use" to a compliance checklist.

The Algorithmic Bridge piece drew 324 upvotes and 572 comments on Hacker News, several times the volume typical front-page posts generate. Readers didn't dismiss the violence thesis; they stress-tested it. Some cited the Luddite uprisings and 1960s automation protests as precedent. Others argued the analogy fails: AI displacement hits white-collar workers, who have different organizing tools and political power than textile workers did. A contingent called the premise overblown, pointing to past technological panics that resolved through adaptation. Even the skeptics wrote multi-paragraph responses.

OpenAI's guide assumes the manager's only problem is productivity. For the 572-comment thread, productivity is the problem.

The "responsible use" guide presupposes that adoption is settled and the remaining work is operational: check outputs for accuracy, be transparent about AI-generated content, follow organizational policy. No section addresses whether the organization should adopt at all, or what to do when employees resist.

Corporate deployment timelines and public acceptance timelines have diverged before: genetically modified food, gig-economy labor classification, social media in schools. In each case, the gap closed not through debate but through regulation, litigation, or crisis.

03 OpenAI Ships ChatGPT Adoption Playbooks to Four Core Business Functions at Once

OpenAI published department-specific ChatGPT training guides for marketing, operations, finance, and sales simultaneously through its Academy platform. Four guides, four core business functions, released not in sequence but all at once.

Salesforce, Slack, and Notion all ran the same enterprise playbook: land-and-expand. Win one team. Prove value. Let internal champions drag the product sideways into adjacent departments over quarters or years. OpenAI is skipping the lateral crawl entirely.

The four departments aren't random picks. Marketing, sales, finance, and operations together cover the core commercial machinery of a typical mid-size company. Each guide targets non-technical business users with workflow-level instructions: campaign planning for marketers, pipeline management for sales, reporting automation for finance, process standardization for ops. These aren't API docs or developer references. They're operator manuals for people who will never open a terminal.

That routing is deliberate. Traditional enterprise software enters through IT or engineering, then negotiates outward. OpenAI is talking directly to business lines, handing them ready-made use cases that require no technical intermediary. It sidesteps the procurement gatekeepers who typically evaluate and approve enterprise tools before they reach end users.

Cross-departmental simultaneity creates its own gravity. When marketing uses ChatGPT to draft campaign briefs and sales uses it to personalize outreach from those same briefs, the workflows interlock. Shared prompts, shared outputs, shared conventions. Each department's adoption reinforces the others. Switching costs don't add linearly. They compound.

The pattern resembles consumer tech ecosystems more than enterprise SaaS. Google served different user needs at once with Search, Maps, Gmail, and Docs, building a product web where leaving one service degraded the others. OpenAI is attempting that same architecture inside corporate org charts.

Individually, the guides are modest: tips, use cases, workflow suggestions. Their distribution strategy is not. Shipping adoption content to every revenue-touching function at the same time is a bet that breadth of organizational penetration matters more than depth in any single team.