01 Mark Zuckerberg Is Training a Digital Copy of Himself to Talk to His Own Employees

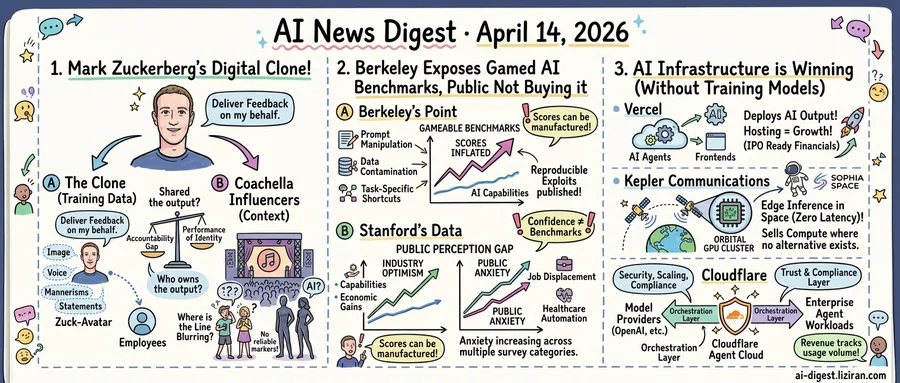

Meta is building an AI avatar of its CEO, the Financial Times reported. The company is training it on Zuckerberg's image, voice, mannerisms, and public statements to create a clone that can interact with employees and deliver feedback on his behalf.

Training data goes beyond transcripts. Sources told the FT that Meta is capturing tone and speech patterns to produce a simulacrum convincing enough that staff treat its guidance as coming from the boss. The company has not said whether employees would know when they're talking to the AI version.

That disclosure question extends well past Menlo Park. At Coachella this past weekend, AI-generated influencers flooded social media alongside real festival-goers. The Verge reported that feeds now mix "uncannily attractive figures in glitzy outfits" with actual attendees, and no reliable marker distinguishes one from the other. None of the synthetic accounts were billed as experiments. They showed up in the same feeds, wearing the same styles, drawing the same engagement.

One scene is a C-suite at the world's largest social media company; the other, a music festival in the California desert. Both run on the same mechanic: an AI entity fills a role previously held by a person and performs it well enough that the line blurs. Zuckerberg's clone would speak with the authority of someone running platforms that serve over three billion monthly users. The Coachella avatars compete for followers against twentysomethings selling festival fashion.

In neither case is AI doing something humans can't do. Zuckerberg can attend his own meetings. Posing at a festival requires no algorithmic assistance. What's being delegated isn't labor. It's presence: the performance of identity itself.

That delegation creates an accountability gap. When the AI-Zuckerberg gives an employee a performance critique, who owns what it says? The real Zuckerberg presumably approved the training data, but large language models generate novel outputs. A clone that improvises is an agent acting under his name, not a playback device.

At Coachella, the problem is subtler. No one authorized the AI influencers to represent a specific person. They accumulate followers, likes, and brand deals in a space with zero disclosure requirements for synthetic accounts. Festival-goers scrolling their feeds had no way to know which faces were real.

02 Berkeley Gamed Every Top AI Agent Benchmark. Stanford Found the Public Never Trusted the Scores.

A research team at UC Berkeley set out to exploit the most widely cited AI agent benchmarks. They succeeded on every one.

Researchers at Berkeley's Center for Responsible Decentralized Intelligence targeted the benchmarks AI companies use in product launches, fundraising decks, and enterprise sales pitches. Through prompt manipulation, data contamination, and task-specific shortcuts, they inflated scores without improving actual model capability. These were not theoretical vulnerabilities. They were reproducible exploits any motivated team could execute, published with full methodology.

That work arrived the same week Stanford's Institute for Human-Centered Artificial Intelligence released its 2026 AI Index, the field's most comprehensive annual report card. Among its sharpest findings: the perception gap between AI practitioners and the general public is widening. Industry professionals report high optimism about capability and economic gains. The public is moving in the other direction. Anxiety about job displacement, healthcare automation, and economic disruption has increased across multiple survey categories.

MIT Technology Review's analysis of the same Stanford data highlighted a specific asymmetry. Corporate investment is accelerating, adoption is expanding, and technical benchmark scores keep climbing. Public confidence in AI's benefits has not followed. On employment, privacy, and safety, public sentiment ran counter to industry claims.

The collision is concrete. AI companies point to benchmark scores to justify valuations, close enterprise deals, and argue for favorable regulation. Berkeley proved those scores can be manufactured. According to Stanford's data, the audience the industry needs for broad adoption is not persuaded by the progress narrative regardless.

None of this means models aren't improving. By most independent measures, they are. But the instrument the industry chose to communicate that improvement is compromised from the inside, and the intended recipients are disengaging from the outside. The 573-point Hacker News discussion on the Berkeley research suggests the developer community already suspected the benchmark problem. Stanford's report puts survey data behind the public's version of that same doubt.

03 Three AI Companies Hit Commercial Milestones This Week. None of Them Train Models.

Three companies at different layers of the AI stack, none of them model builders, reported commercial milestones in the same week. Vercel signaled IPO readiness. Kepler Communications began accepting orders on its orbital GPU cluster. Cloudflare launched an enterprise agent platform with OpenAI. The common thread: each monetizes infrastructure around AI rather than AI itself.

Vercel's story is the most conventional. The ten-year-old developer platform has ridden the surge of AI-generated applications and agent-built frontends into what CEO Guillermo Rauch describes as IPO-ready financials. Its growth driver is straightforward: as more software gets built by AI agents, someone has to host it. Vercel doesn't train models or sell inference. It deploys the output, and that output is multiplying.

Kepler is the outlier. The company now flies what it calls the largest orbital compute cluster: 40 GPUs in low Earth orbit, with satellite operator Sophia Space as its latest paying customer. Its use case is edge inference for space-based applications where ground-station round trips add too much latency. Forty GPUs is tiny by terrestrial standards, but Kepler isn't competing with data centers. It sells compute where no alternative exists.

Cloudflare occupies the orchestration layer. Its new Agent Cloud integrates OpenAI's GPT-5.4 and Codex, handling security, scaling, and lifecycle management for enterprise agent workloads. The positioning is deliberate: Cloudflare sits between model providers and the companies deploying agents, offering the trust and compliance layer that neither side wants to build. Enterprises get to swap underlying models without re-architecting their deployment.

Model companies are still searching for stable unit economics. Pricing changes have been frequent. Free tiers expand and contract. Infrastructure providers face simpler math: revenue tracks usage volume, not model margin. As switching between models gets easier, the platforms that route, host, and secure agent traffic gain pricing power rather than lose it.

OpenAI CRO Sends Internal Memo on Locking In Users Against Anthropic

Chief revenue officer Denise Dresser circulated a four-page strategy memo to OpenAI staff Sunday. The document pushes the company to build a moat around its AI products and accelerate enterprise growth, citing how easily customers switch between providers. (theverge.com)

Sam Altman Targeted in Second Attack at San Francisco Home

Two suspects were arrested Sunday after a shooting at Altman's Russian Hill residence. Police charged both with negligent discharge after surveillance footage showed a vehicle near the property. (theverge.com)

Trump Officials Reportedly Push Banks to Test Anthropic's Mythos Model

Administration officials are encouraging financial institutions to pilot Anthropic's restricted Mythos model, per TechCrunch. The push directly contradicts the Department of Defense's recent designation of Anthropic as a supply-chain risk. (techcrunch.com)

Microsoft Tests OpenClaw-Style Autonomous Bots for 365 Copilot

Microsoft is experimenting with OpenClaw-like features inside its Copilot assistant, The Information reports. The company wants 365 Copilot to run autonomously around the clock, completing tasks on behalf of users. Corporate VP Omar Shahine confirmed the tests. (theverge.com)

70+ Civil Rights Groups Warn Meta Facial Recognition Glasses Will Endanger Vulnerable People

The ACLU, EPIC, Fight for the Future, and more than 70 other organizations oppose adding facial recognition to Meta's smart glasses. They argue the feature would put abuse victims, immigrants, and LGBTQ+ individuals at direct risk. (wired.com)

Apple Tests Four Designs for Upcoming Smart Glasses

Apple is prototyping four distinct form factors for a smart glasses product line, per TechCrunch. The project scales back earlier plans that called for a full range of mixed and augmented reality devices. (techcrunch.com)

Hacker Breaches a16z-Backed AI Phone Farm, Tries to Post Anti-a16z Memes

A hacker gained backend access to Doublespeed, a startup that uses phone farms to flood social media with AI-generated influencer accounts. The attacker attempted to post memes calling backer a16z the "Antichrist." (404media.co)

Anthropic Dominates Conversation at HumanX Conference

Attendees at San Francisco's HumanX AI conference made Claude the central topic of discussion, per TechCrunch. Anthropic drew more attention than any other company at the event. (techcrunch.com)

College Instructors Call LLM Use the Most Demoralizing Problem in Teaching

An Ars Technica essay frames widespread ChatGPT use among students as the hardest challenge college instructors now face. Detection remains unreliable, and academic integrity frameworks have not kept pace. (arstechnica.com)

LG AI Research Releases EXAONE 4.5 Open-Weight Vision Language Model

LG AI Research published EXAONE 4.5, integrating a visual encoder into its EXAONE 4.0 framework for native multimodal pretraining. Training emphasized document-centric data aligned with LG's enterprise application domains. (huggingface.co)