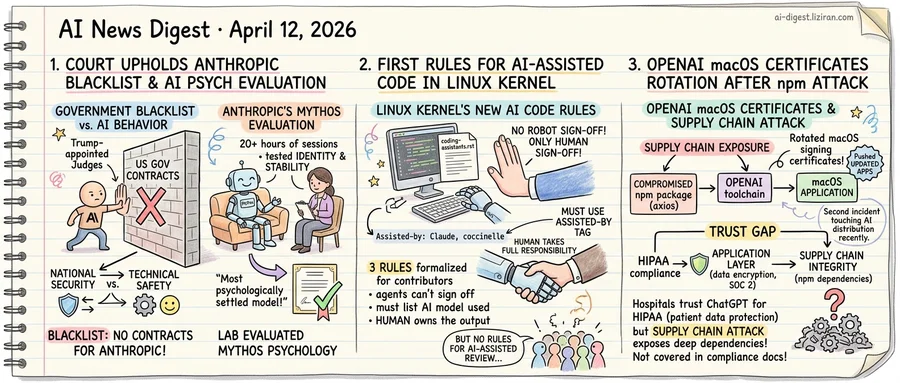

01 Court Upholds Anthropic Blacklisting as Company Sends AI to a Psychiatrist

A panel of Trump-appointed judges denied Anthropic's emergency motion to stay the federal government's technology blacklisting order this week. The decision is the latest setback in the company's legal effort to reverse what it calls an unjustified ban. It leaves Anthropic locked out of government contracts and federal procurement with no clear path to reinstatement.

Anthropic had argued the blacklisting was arbitrary and lacked substantive national security justification. The appeals court was unconvinced, deferring to executive authority. Under the order, federal agencies cannot purchase or integrate Anthropic's technology. That shuts the company out of a market where competitors OpenAI and Google have secured major defense and intelligence contracts over the past year.

The same week the court declined to intervene, Anthropic published results from a different kind of assessment entirely. It had subjected its latest model, Mythos, to 20 hours of structured psychiatric evaluation conducted by a licensed psychiatrist. Sessions tested identity consistency, resistance to manipulation, and behavioral stability under adversarial conditions. Anthropic called Mythos "the most psychologically settled model we have trained to date."

No other major AI lab has attempted anything comparable. Anthropic built its identity around safety through published research, restricted model releases, and governance commitments adopted voluntarily. It pioneered constitutional AI and responsible scaling policies. Before the psychiatric assessment, the company had already blocked public access to Mythos on safety grounds.

The blacklisting order cited none of this work. It did not reference alignment failures, unsafe outputs, or inadequate testing. National security determinations and technical safety assessments operate on parallel tracks with no shared vocabulary and no mechanism for one to inform the other. A company can satisfy every safety standard its field has devised and still fail a national security review that never examines model behavior. The government evaluates geopolitical risk; Anthropic measures model psychology.

That disconnect carries consequences beyond one company's bottom line. The AI industry has treated safety investment as a form of regulatory insurance: spend on alignment, earn government trust. Anthropic tested that theory more aggressively than anyone. It hired psychiatrists for its models, restricted its own products, and submitted to evaluations no regulator required. A federal appeals court just confirmed that none of it registered in the one government process that determined market access.

02 Linux Kernel Maintainers Write the First Official Rules for AI-Assisted Code

A new file in the Linux kernel's Documentation directory runs just 60 lines. Its core rule: AI agents must not sign off on code. Only humans can.

The document, coding-assistants.rst, formalizes three requirements for anyone using AI tools to contribute to the kernel. All AI-generated code must be GPL-2.0 compatible, same as any other contribution. Agents are explicitly barred from adding Signed-off-by tags. Only a human can certify the Developer Certificate of Origin, the kernel's legal mechanism confirming a contributor's right to submit code. Whoever submits the patch reviews it, certifies compliance, and takes full responsibility.

A third rule covers attribution. Contributors must tag AI involvement with an Assisted-by: line naming the tool, model version, and any specialized analysis software. The document gives a concrete example: Assisted-by: Claude:claude-3-opus coccinelle sparse. Basic tools like git and gcc don't qualify. The kernel wants to know when a model shaped the code, not when a compiler built it.
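The tagging rules lend themselves to a mechanical check. What follows is a minimal sketch, not the kernel's own tooling: it assumes trailers sit one per line in the commit message and that the Assisted-by: value follows the shape of the example above (agent name, colon, model version, then optional space-separated tool names). The function name `check_trailers` and the sample commit message are hypothetical.

```python
import re

# Assumed shape, inferred from the example in the text:
#   Assisted-by: AGENT:MODEL [TOOL ...]
ASSISTED_BY = re.compile(
    r"^Assisted-by:\s+(?P<agent>[^\s:]+):(?P<model>\S+)(?P<tools>(?:\s+\S+)*)\s*$"
)
# Conventional trailer form: Signed-off-by: Name <email>
SIGNED_OFF_BY = re.compile(
    r"^Signed-off-by:\s+(?P<name>.+?)\s+<(?P<email>[^<>\s]+@[^<>\s]+)>\s*$"
)


def check_trailers(message: str) -> dict:
    """Collect Signed-off-by and Assisted-by trailers from a commit message.

    The check is purely syntactic: it cannot tell whether a signer is a
    human, only whether a well-formed sign-off line is present.
    """
    signers = []
    assists = []
    for raw in message.splitlines():
        line = raw.strip()
        signed = SIGNED_OFF_BY.match(line)
        if signed:
            signers.append(signed.group("name"))
            continue
        assisted = ASSISTED_BY.match(line)
        if assisted:
            assists.append(
                {
                    "agent": assisted.group("agent"),
                    "model": assisted.group("model"),
                    "tools": assisted.group("tools").split(),
                }
            )
    return {"signers": signers, "assists": assists, "has_signoff": bool(signers)}


if __name__ == "__main__":
    # Hypothetical commit message used only for illustration.
    sample = (
        "mm: fix off-by-one in example path\n\n"
        "Assisted-by: Claude:claude-3-opus coccinelle sparse\n"
        "Signed-off-by: Jane Doe <jane@example.org>\n"
    )
    print(check_trailers(sample))
```

A script like this can confirm only that the tags are present and well-formed. Whether the person behind the Signed-off-by line actually reviewed the patch is the part the policy leaves to human accountability.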

The distinction draws a line academic researchers are still mapping. A recent survey from Shanghai Jiao Tong University and Carnegie Mellon traces a pattern across the LLM agent ecosystem. Capabilities once embedded in model weights are migrating into external runtime infrastructure: memory stores, reusable skills, interaction protocols. The kernel's policy is a ground-level response to that shift. As AI tooling moves from autocomplete to agent-driven workflows that write, test, and submit patches, someone has to own the output. Kernel maintainers decided that someone is always human.

On Hacker News, the document drew 489 points and 370 comments. Disagreement centered on whether human sign-off creates real accountability or just legal cover. Some commenters questioned whether the Assisted-by: tag will help maintainers calibrate review effort or become a formality. One persistent criticism targeted a gap: the policy governs AI-assisted authorship but says nothing about AI-assisted review. A maintainer could use an LLM to evaluate incoming patches with no disclosure requirement.

The kernel accepts patches from thousands of developers worldwide. These 60 lines now govern how all of them use AI.

03 OpenAI Rotates macOS Signing Certificates After Axios Supply Chain Attack

A compromised npm package forced OpenAI to rotate its macOS code signing certificates in the same week the company is marketing HIPAA-compliant ChatGPT to hospitals.

The axios library, one of the most widely used HTTP clients in the JavaScript ecosystem, was hit by a supply chain attack. OpenAI, which depends on axios in its toolchain, responded by rotating its macOS code signing certificates, pushing updated applications, and stating that no user data was compromised.

The technical response followed standard incident procedure: isolate the blast radius, rotate credentials, ship patches. But the timing exposes a structural problem. OpenAI is actively courting healthcare organizations with ChatGPT, positioning HIPAA compliance as the trust foundation: encrypted data, access controls, audit logging. Its Academy healthcare page walks clinicians through using ChatGPT for diagnosis support, documentation, and patient care.

HIPAA compliance governs how patient data is handled at the application layer. It says nothing about whether the npm packages underneath that application were tampered with last Tuesday. Healthcare IT teams evaluating AI vendors typically audit data flows, encryption standards, and access policies. Few have the tooling or mandate to audit a vendor's transitive dependency tree in real time.
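To make "transitive dependency tree" concrete, here is a minimal sketch of the kind of check such an audit involves. It assumes an npm lockfile in the v2/v3 layout, where a top-level "packages" map is keyed by install path (for example "node_modules/axios") and each entry carries a "version" field. The function name `find_package` and the sample lockfile contents, including every version number, are hypothetical and say nothing about which releases were affected in this incident.

```python
def find_package(lock: dict, name: str) -> list[tuple[str, str]]:
    """Return (install_path, version) for every copy of `name` in a lockfile.

    `lock` is a parsed package-lock.json (lockfileVersion 2 or 3). Nested
    copies such as node_modules/foo/node_modules/axios are included, which
    is how a transitive dependency shows up.
    """
    hits = []
    for path, meta in lock.get("packages", {}).items():
        if not path:
            continue  # the empty key is the root project itself
        # The package name is whatever follows the last "node_modules/".
        pkg = path.rsplit("node_modules/", 1)[-1]
        if pkg == name:
            hits.append((path, meta.get("version", "")))
    return sorted(hits)


if __name__ == "__main__":
    # Hypothetical lockfile fragment; versions are placeholders.
    sample_lock = {
        "lockfileVersion": 3,
        "packages": {
            "": {"name": "example-app", "version": "0.1.0"},
            "node_modules/axios": {"version": "1.0.0"},
            "node_modules/some-sdk": {"version": "2.3.4"},
            "node_modules/some-sdk/node_modules/axios": {"version": "0.9.0"},
        },
    }
    for path, version in find_package(sample_lock, "axios"):
        print(path, version)
```

The second hit in the sample is the case that matters: a copy of the library pulled in by another package rather than declared directly. A buyer rarely has a vendor's lockfile to run this against, which is the gap the paragraph above describes.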

That gap is not unique to OpenAI. Every major AI company ships products built on deep stacks of open-source dependencies. But the gap becomes acute when the sales pitch centers on trust. A hospital CISO approving ChatGPT for clinical workflows is implicitly trusting not just OpenAI's infrastructure, but every maintainer of every package OpenAI pulls from public registries.

This incident caused no data breach, by OpenAI's account. The certificates were rotated, the apps were updated, and operations continued. Yet the axios compromise is the second supply chain incident to touch a major AI company's distribution pipeline in recent months. The attack surfaces differ: in the earlier incident, an AI tool itself was targeted. Here, a generic dependency was poisoned and AI companies were collateral. Both paths arrive at the same exposure.

OpenAI's HIPAA documentation covers data encryption, SOC 2 compliance, and business associate agreements. It does not address software supply chain integrity. No major AI vendor's compliance page does.

AI2 Releases MolmoWeb, a Fully Open Web-Browsing Agent With Training Data

AI2 published MolmoWeb, an autonomous web agent built entirely on open models and open training data. The release includes MolmoWebMix, a large-scale dataset of web navigation demonstrations, along with full training recipes. Prior web agents with comparable performance relied on proprietary models and undisclosed data. Source: huggingface.co

DMax Speeds Up Diffusion Language Models With Aggressive Parallel Decoding

Researchers introduced DMax, a decoding method for diffusion-based language models that replaces binary mask-to-token transitions with progressive self-refinement. A new training strategy called On-Policy Uniform Training reduces error accumulation during parallel token generation. The approach enables higher decoding parallelism without degrading output quality. Source: huggingface.co

OpenVLThinkerV2 Tackles Cross-Task Reward Variance in Multimodal RL Training

A new open-source multimodal reasoning model addresses two bottlenecks in applying group relative policy optimization across diverse visual tasks: wildly different reward distributions between task types, and the tension between fine-grained perception and multi-step reasoning. The model handles multiple visual domains within a single generalist architecture. Source: huggingface.co

Researchers Propose "Meta-Cognitive" Fix for AI Agents That Over-Use Tools

A paper identifies a failure pattern in multimodal agents: reflexive tool invocation even when the answer is visible in the image. The proposed method teaches models to judge when internal knowledge suffices and when external tool calls are actually needed. The fix reduced unnecessary tool calls while maintaining accuracy on queries that genuinely require external lookups. Source: huggingface.co