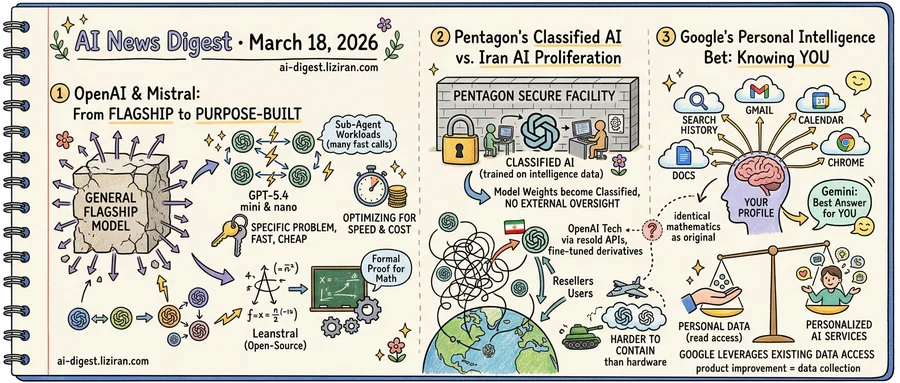

01 OpenAI and Mistral Shift from Flagship Models to Purpose-Built Tools

OpenAI's newest models aren't built for humans. GPT-5.4 mini and nano, announced this week, target sub-agent workloads: tasks where one AI system calls another at high frequency. The models optimize for speed and cost over raw capability. OpenAI describes them as designed for "coding, tool use, multimodal reasoning, and high-volume API" calls. The intended customer isn't a person typing prompts. It's another model.

Days later, Mistral released Leanstral, an open-source agent for formal proof engineering in Lean 4, the language mathematicians use to write machine-verified proofs. Not a general coding assistant, but a single-domain tool for a specialized academic workflow. The project drew 735 points and 179 comments on Hacker News.
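For readers who have not seen Lean 4, a machine-verified proof looks roughly like the sketch below. It is a minimal illustration and not output from Leanstral; the theorem name `add_comm_example` is hypothetical, while `Nat.add_comm` is a lemma from Lean's core library.

```lean
-- A statement and its proof; Lean's kernel checks that the proof term is valid.
theorem add_comm_example (a b : Nat) : a + b = b + a := by
  exact Nat.add_comm a b

-- A concrete fact that holds by computation alone.
example : 2 + 2 = 4 := rfl
```

If either proof were wrong, Lean would reject the file. Proof engineering agents work against that kind of mechanical check.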

These two products share no technical DNA. OpenAI's models are proprietary, built for API infrastructure at scale. Leanstral is open-source, aimed at a niche mathematical discipline. Yet both reflect the same strategic bet: the next unit of value comes from purpose-built tools that solve narrow problems faster and cheaper than any general model can.

For two years, lab competition followed a single axis. Train the largest model, top the leaderboard, claim the crown. That playbook rewarded scale above all else. As flagship models from major labs have converged in benchmark performance, the constraint has moved downstream. The relevant question is no longer "which model is smartest" but "which one fits this job."

OpenAI's play targets a real cost problem. In sub-agent architectures, a single user query can trigger dozens of model calls behind the scenes. Token price per call becomes the decisive variable. A model that costs less while maintaining tool-use accuracy wins the contract, no matter its score on graduate-level reasoning.
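The arithmetic behind that cost pressure can be sketched in a few lines. Every number below is a hypothetical placeholder chosen for illustration, not published pricing for any model, and the function name `cost_per_query` is an assumption of this sketch.

```python
def cost_per_query(calls, tokens_per_call, price_per_million_tokens):
    """Dollar cost of one user query that fans out into `calls` model calls."""
    total_tokens = calls * tokens_per_call
    return total_tokens * price_per_million_tokens / 1_000_000


# Hypothetical workload: one user query triggers 40 sub-agent calls
# of 2,000 tokens each.
CALLS = 40
TOKENS_PER_CALL = 2_000

# Hypothetical prices in dollars per million tokens.
large = cost_per_query(CALLS, TOKENS_PER_CALL, 10.0)
small = cost_per_query(CALLS, TOKENS_PER_CALL, 0.5)

print(f"large model: ${large:.2f} per query")
print(f"small model: ${small:.2f} per query")
print(f"ratio: {large / small:.0f}x")
```

Because the call count multiplies every token, a price gap between two models carries through unchanged to the cost of each user query.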

Mistral's decision to open-source Leanstral reflects a different competitive logic. In the flagship era, releasing a top model's weights meant surrendering advantage. For specialized tools, open-sourcing accelerates ecosystem adoption. A formal proof agent is valuable only if proof engineers actually use it. Free distribution is the faster path to becoming the default.

Neither lab framed its release this week as a flagship moment. Both positioned their releases as infrastructure.

02 Pentagon Plans Classified AI Training as Commercial Models Drift Toward Iran

The U.S. Department of Defense is building secure facilities where AI companies will train models on classified intelligence. The goal: military-specific AI variants that never leave government walls, never face external audits, never get tested by independent red teams.

The same week, MIT Technology Review traced multiple pathways OpenAI's commercial technology could take into Iran.

The Pentagon's plan goes further than current military AI procurement. Defense officials confirmed discussions to let companies bring their training pipelines inside classified environments, feeding models data that carries the highest security markings. The resulting variants would be purpose-built for military applications. Training on classified data means the weights themselves become classified, creating AI systems that exist entirely outside civilian oversight.

Current military AI use involves running commercial models in secure settings to analyze existing intelligence. Training on that intelligence is a different operation. Classified information stops passing through the model and starts reshaping its parameters permanently. Every inference afterward carries traces of secrets encoded in the weights.

The control logic is straightforward. Lock down the data, lock down the facility, lock down the model. But MIT Technology Review's reporting on OpenAI traced a messier reality outside the vault. API access gets resold across borders. Fine-tuned model derivatives circulate beyond any single company's control. The architectural knowledge that commercial deployment makes public cannot be recalled. Weights trained in Virginia and weights accessed in Tehran run on identical mathematics.

Classification was designed for discrete objects: documents, satellite photos, weapons blueprints. Whether it can contain statistical patterns distributed across billions of parameters is a question the Pentagon has not answered publicly. The Defense Department's own procurement history illustrates the challenge. Controlled military hardware has ended up in adversary hands for decades despite strict export regimes. AI model weights are easier to copy than a jet engine and harder to trace than a missile component.

03 Google Bets Its AI Edge on Knowing You, Not Outsmarting Rivals

Google on Tuesday opened its "Personal Intelligence" feature to all US users for free, connecting Gemini to Search history, Gmail, and Chrome browsing data. The feature had been restricted to paid AI Pro and AI Ultra subscribers. Now anyone with a Google account can opt in.

The move reveals where Google has decided to compete. In raw model capability, the company has spent two years chasing OpenAI and Anthropic. Gemini's benchmarks have improved, but the gap has never been comfortable. Personal Intelligence reframes the contest: instead of producing the best answer to any question, Google wants to produce the best answer for you.

That distinction runs on what Google already holds. No other AI company simultaneously controls a search engine, an email service, a calendar, a document suite, and the world's most-used browser. When a user asks Gemini to help plan a trip, the model can pull from flights they've searched, confirmation emails in their inbox, and documents they've shared with colleagues. OpenAI and Anthropic would need partnership deals to access even a fraction of that context.

The integration spans three surfaces: AI Mode in Search, the Gemini app, and Gemini in Chrome. Google says users can control which services are linked and that Gemini will reference connected data only when relevant.

But the exchange has a specific shape. To get personalized responses, users grant Gemini read access across their digital life. Google already monetizes user data through advertising. Adding an AI layer that reads emails and browsing history creates a second extraction channel. Product improvement and data collection become the same act. Users who opt in aren't just sharing preferences. They're feeding a system whose value grows the more it knows about them.

Google has tried personalization before: Google Now, the Assistant, Discover. Each promised contextual intelligence. None lasted. The difference now is that Gemini sits inside products people use daily. It doesn't require a new habit, only permission.

Microsoft Merges Consumer and Commercial Copilot Teams Under New Executive

Microsoft reorganized its Copilot division, unifying previously separate consumer and commercial engineering teams under one leader. The company has run parallel Copilot efforts for years; the consolidation targets a more consistent product across business and personal users. Source: theverge.com

Americans Send Nearly 3 Million Daily ChatGPT Messages Asking About Pay

OpenAI published research showing U.S. users send roughly 3 million compensation-related queries to ChatGPT each day. The data covers questions about salaries, earnings, and pay benchmarks across industries. Source: openai.com

Google Funds New AI Tools for Open Source Security

Google announced additional investment in AI-powered code analysis to find vulnerabilities in open source software. The effort includes new scanning tools and security-focused development practices aimed at catching flaws before release. Source: blog.google

OpenSeeker Publishes First Fully Open Training Data for LLM Search Agents

Researchers released OpenSeeker, an open-source dataset for training LLM-based search agents. High-performance search agent development has been restricted to large companies with proprietary data; OpenSeeker provides the full pipeline. Source: huggingface.co

EnterpriseOps-Gym Tests AI Agents on Realistic IT Workflows

A new benchmark evaluates LLM agents on long-horizon planning tasks involving persistent state changes and access controls. Existing benchmarks miss the complexity of enterprise environments where agents must respect strict protocols across multi-step operations. Source: huggingface.co

Mixture-of-Depths Attention Lets Transformer Heads Reach Back to Earlier Layers

MoDA, a new attention mechanism, allows each head to attend to key-value pairs from both the current layer and preceding layers. The design addresses signal degradation in deep models, where useful features formed in shallow layers get diluted by repeated residual updates. Source: huggingface.co
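The general idea of attending across depth can be illustrated with a toy, single-head sketch in plain Python. This is not the MoDA authors' implementation: how the paper masks, gates, or combines cross-layer keys is not described here, so the sketch simply assumes that keys and values from earlier layers are pooled with the current layer's before one softmax. The function names `attend` and `depth_attend` are hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query, keys, values):
    """Scaled dot-product attention for one query over key/value vectors."""
    scale = 1.0 / math.sqrt(len(query))
    scores = [scale * sum(q * k for q, k in zip(query, key)) for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    return [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]


def depth_attend(query, kv_by_layer):
    """Attend over key/value pairs pooled from several layers.

    kv_by_layer is a list of (keys, values) tuples, earliest layer first;
    the last entry is the current layer. Pooling everything into one
    softmax is an assumption of this sketch.
    """
    keys = [k for layer_keys, _ in kv_by_layer for k in layer_keys]
    values = [v for _, layer_values in kv_by_layer for v in layer_values]
    return attend(query, keys, values)


# Toy data: a feature formed in layer 0 that the current layer no longer holds.
layer0 = ([[1.0, 0.0]], [[1.0, 0.0]])
layer1 = ([[0.0, 1.0]], [[0.0, 1.0]])
query = [1.0, 0.0]

current_only = attend(query, *layer1)
pooled = depth_attend(query, [layer0, layer1])
print(current_only, pooled)
```

In the toy data, the query matches the key stored at layer 0, so the pooled result leans toward that layer's value, which attention restricted to the current layer cannot reach.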

Seoul World Model Generates Street-Level Video Grounded in a Real City

Researchers built a city-scale video generation model anchored to actual Seoul street-view images. The system uses retrieval-augmented conditioning to produce video sequences tied to real geographic locations rather than imagined environments. Source: huggingface.co

VET-Bench Reveals Top Vision-Language Models Score Near Chance on Object Tracking

A synthetic benchmark tests VLMs on tracking visually identical objects using only spatiotemporal continuity. Every state-of-the-art model tested performed at or near random, exposing a basic gap in visual tracking that existing benchmarks obscure with visual shortcuts. Source: huggingface.co

RLCF Trains Models to Judge Research Ideas Using Large-Scale Community Signals

A new training method called Reinforcement Learning from Community Feedback teaches models to assess and propose high-impact scientific ideas. The approach targets scientific taste, the ability to identify promising research directions, rather than execution capability. Source: huggingface.co

Distillation Method Achieves Lossless Transfer from Transformers to Hybrid xLSTM

Researchers introduced a distillation approach that transfers knowledge from quadratic-attention LLMs to sub-quadratic hybrid xLSTM architectures without performance loss. The method defines lossless distillation through tolerance-corrected win-and-tie rates between student and teacher across task sets. Source: huggingface.co
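One plausible reading of a tolerance-corrected win-and-tie rate is sketched below. The exact definition in the paper is not given here, so the rule used (the student counts as winning or tying a task when its score is within a tolerance of the teacher's, or higher) is an assumption, as are the function name `win_tie_rate` and all scores.

```python
def win_tie_rate(student_scores, teacher_scores, tolerance=0.01):
    """Fraction of tasks where the student matches or beats the teacher.

    Assumed rule: a task counts when student >= teacher - tolerance.
    """
    if not student_scores or len(student_scores) != len(teacher_scores):
        raise ValueError("score lists must be non-empty and of equal length")
    hits = sum(
        1 for s, t in zip(student_scores, teacher_scores) if s >= t - tolerance
    )
    return hits / len(student_scores)


# Hypothetical per-task scores, for illustration only.
teacher = [0.80, 0.65, 0.90, 0.72]
student = [0.81, 0.645, 0.85, 0.72]

rate = win_tie_rate(student, teacher, tolerance=0.01)
strict_rate = win_tie_rate(student, teacher, tolerance=0.0)
print(rate, strict_rate)
```

The tolerance keeps small score differences, which may be evaluation noise, from counting as losses. Under this reading, a "lossless" claim would require the rate to be high across every task set.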

Cheers Separates Patch Detail from Semantics for Unified Visual Understanding and Generation

A new multimodal model decouples patch-level visual detail from semantic representations within a single architecture. The split stabilizes comprehension while improving generation fidelity, addressing a core tension in models that attempt both tasks jointly. Source: huggingface.co