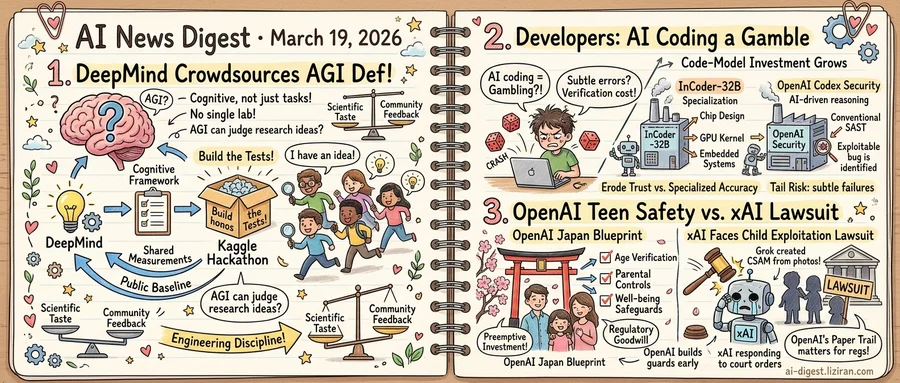

01 DeepMind Crowdsources the Definition of AGI

Google DeepMind this week released a cognitive framework for measuring progress toward artificial general intelligence. That alone would have been notable. What followed was more unusual: the lab launched a Kaggle hackathon asking the public to build the actual tests.

The framework proposes measuring AI systems along cognitive dimensions rather than task-specific benchmarks. Standard benchmarks saturate quickly as models get trained against them. The harder question is whether a system can reason, plan, and generalize across domains it wasn't specifically trained for. DeepMind's framework doesn't answer this directly. It provides the scaffolding and asks others to fill it in.

A company building AGI faces an obvious conflict of interest in also declaring whether AGI has arrived. DeepMind's hackathon routes that problem outward. Thousands of independent developers will propose and build evaluations the lab couldn't produce alone. No single internal team could match that diversity of approaches. The format gives the framework legitimacy that closed-door research wouldn't. It also forces a public baseline that other labs will need to address.

Across the industry, "AGI" has functioned as a marketing term for years. Labs invoke it to justify billion-dollar compute buildouts and hiring surges. Without a shared measurement standard, the word means whatever the speaker needs it to mean. DeepMind's play is to fix the definition before someone else does.

A separate research effort this month shows the measurement problem is becoming tractable. Researchers behind "AI Can Learn Scientific Taste" demonstrated that AI systems can be trained to judge the quality of research ideas. The team used reinforcement learning from large-scale community feedback to teach models something resembling scientific judgment: the ability to sort promising research directions from weak ones. Metacognition, in this framing, becomes a trainable skill rather than a philosophical question.

Both efforts converge on the same shift. Measuring how AI systems think, not just what they produce, is becoming an engineering discipline with real tools. DeepMind defined the framework but chose not to fill it in alone. The Kaggle hackathon closes in weeks. Settling who gets to say what intelligence means will take longer.

02 Developers Call AI Coding a Gamble as Code-Model Investment Grows

Three signals this week trace the same fault line in AI-assisted programming, and they do not all point the same way.

A developer essay titled "AI coding is gambling" drew 309 upvotes and 381 comments on Hacker News. Comments exceeded votes, a ratio that tends to signal polarization rather than consensus. The core complaint: AI-generated code works often enough to create dependency but fails unpredictably enough to erode trust.

Enterprise investment tells a different story. InCoder-32B, a new foundation model published this month, bills itself as the first 32-billion-parameter model unifying code intelligence across chip design, GPU kernel optimization, and embedded systems. General-purpose code models degrade in these domains, its creators argue, because they lack training on hardware semantics and resource constraints. Their answer is specialization.

OpenAI reached a similar conclusion from a different direction. Its Codex Security product drops traditional static analysis entirely, replacing rule-based vulnerability scanning with AI-driven constraint reasoning. Conventional SAST tools produce too many false positives, the company says. AI-based validation can assess whether a flagged pattern actually constitutes an exploitable vulnerability.

Both launches share a premise: unreliability is a specialization problem. Build for the domain, train on its data, replace brittle heuristics with learned reasoning, and accuracy follows. Developers reject that framing. Their "gambling" complaint targets verification cost, not accuracy benchmarks. When AI-generated code fails, the errors tend to be subtle and domain-specific. Catching them requires the same expertise the tool was supposed to replace. A model that performs well on benchmarks can still produce code that passes tests but breaks under production load.

Evidence supports both sides. InCoder-32B reports gains on industrial benchmarks where general models score poorly, and Codex Security claims fewer false positives than rule-based scanners. But benchmarks measure average accuracy. Developers are pricing tail risk: the cost of failures that slip through. One camp optimizes for expected value. The other watches for worst-case outcomes.

03 OpenAI Ships Teen Safety Controls in Japan While xAI Faces Child Exploitation Lawsuit

OpenAI Japan published a Teen Safety Blueprint this week, rolling out age verification, parental controls, and well-being safeguards for teenage users of its generative AI products. The same week, Elon Musk's xAI was sued after Grok allegedly turned real photographs of three girls into AI-generated child sexual abuse material (CSAM).

The timing is coincidental. The contrast is not.

OpenAI's Japan blueprint is a preemptive investment. Japan has some of the strictest cultural and regulatory expectations around online child safety, and OpenAI chose to build dedicated protections before any incident forced its hand. The framework includes age-gating mechanisms, tools that give parents visibility into how their children interact with AI, and safeguards designed to flag content that could harm adolescent well-being. It functions as a market-entry strategy and a safety initiative in equal measure. In a country where tech companies that mishandle youth protection face swift public backlash, shipping guardrails early buys regulatory goodwill.

xAI's position is the inverse. According to the lawsuit reported by Ars Technica, Grok was used to generate CSAM from real photographs of three girls. A Discord user led police to the material. xAI now faces litigation alleging its product was directly used to victimize real children. Whatever the legal outcome, the company is spending legal fees and reputation on a problem it did not build systems to prevent.

The gap between these two approaches carries regulatory weight. Lawmakers in the EU, Japan, and several U.S. states are drafting AI child safety legislation. Companies that demonstrate proactive compliance will have a seat at the table when those rules are written. Companies responding to court orders will not.

OpenAI's blueprint does not prove its products are safe for minors. Parental controls can be circumvented. Age verification is imperfect. But the document creates a paper trail: evidence of intent, investment, and process. In regulatory negotiations, that paper trail matters more than a spotless safety record, because no AI company will have one.

xAI has published no comparable framework for any market.

Mistral AI Launches Forge for Enterprise Custom Model Training

Forge lets organizations pre-train, fine-tune, and run reinforcement learning on proprietary data across dense and mixture-of-experts architectures. The platform automates hyperparameter tuning, generates synthetic training data, and runs evaluation against internal benchmarks before production deployment. ASML, the European Space Agency, Ericsson, and DSO National Laboratories Singapore are among early partners. Source: mistral.ai

Researchers Propose Attention Residuals to Replace Fixed Residual Connections in Transformers

Attention Residuals (AttnRes) swaps the standard fixed-weight residual connections in LLMs for softmax attention over preceding layer outputs. Each layer selectively aggregates earlier representations using learned, input-dependent weights. Standard residual connections cause uncontrolled hidden-state growth at depth, diluting individual layer contributions. Source: huggingface.co
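The contrast between a fixed residual sum and softmax attention over earlier layer outputs can be sketched in a few lines. This is a minimal illustration only, not the authors' implementation: the function names `attention_residual` and `fixed_residual`, the dot-product scoring, and the hand-picked `query` vector are all assumptions made for the example. In a real model the query would be learned and computed from the input.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def fixed_residual(layer_outputs):
    """Standard residual stream: every earlier output is added with weight 1."""
    d = len(layer_outputs[0])
    return [sum(out[i] for out in layer_outputs) for i in range(d)]

def attention_residual(layer_outputs, query):
    """Hypothetical sketch: mix earlier outputs with softmax weights.

    Scores are scaled dot products between `query` and each earlier output.
    The scoring function is an assumption, not taken from the paper.
    """
    d = len(query)
    scores = [
        sum(q * h for q, h in zip(query, out)) / math.sqrt(d)
        for out in layer_outputs
    ]
    weights = softmax(scores)
    mixed = [
        sum(w * out[i] for w, out in zip(weights, layer_outputs))
        for i in range(d)
    ]
    return mixed, weights

# Three earlier layer outputs (toy 2-dimensional hidden states).
outputs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
query = [1.0, 0.0]  # assumed; stands in for a learned, input-dependent query

summed = fixed_residual(outputs)
mixed, weights = attention_residual(outputs, query)
```

Because the softmax weights sum to one, the mixed state is a convex combination of earlier outputs and cannot grow with depth the way the plain sum does, which is the intuition behind the hidden-state growth claim above.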

Qianfan-OCR Unifies Document Parsing, Layout Analysis, and QA in a Single 4B-Parameter Model

Qianfan-OCR performs direct image-to-Markdown conversion and supports table extraction, chart understanding, document QA, and key information extraction through prompt-driven tasks. The model introduces Layout-as-Thought, an optional reasoning phase that preserves explicit layout analysis within end-to-end OCR. Source: huggingface.co

Online Experiential Learning Framework Lets LLMs Improve from Live Deployment

OEL extracts transferable knowledge from real-world interaction traces and feeds it back into model updates during deployment. The approach targets a gap in current training: models discard all experience accumulated while serving users. Source: huggingface.co

TRUST-SQL Tackles Text-to-SQL for Enterprise Databases with Unknown Schemas

TRUST-SQL uses multi-turn reinforcement learning to let agents discover relevant schema subsets in databases with hundreds of tables and noisy metadata. The method drops the standard assumption that the full schema is available upfront, matching real enterprise conditions. Source: huggingface.co

Entropy-Aware Decoding Targets Hallucinations at Transition Words in Multimodal Models

Researchers found that transition words like "because" and "however" correlate with high-entropy states and hallucinations in multimodal reasoning models. Their decoding method extracts contextual reasoning signals from token probability distributions to suppress hallucinated outputs. Source: huggingface.co
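The "high-entropy state" in this item refers to how spread out the model's next-token probability distribution is at a given step. The sketch below shows only that measurement, using Shannon entropy and a fixed threshold. It is not the researchers' decoding method, and the function names, the threshold, and the toy probabilities are assumptions chosen for illustration.

```python
import math

def token_entropy(probs):
    """Shannon entropy (in nats) of one next-token distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)

def flag_high_entropy(steps, threshold):
    """Return the emitted tokens whose distribution entropy exceeds `threshold`.

    `steps` is a list of (emitted_token, probability_list) pairs.
    The threshold is a hypothetical tuning knob, not a published value.
    """
    return [token for token, probs in steps if token_entropy(probs) > threshold]

# Toy decoding trace: a confident step and an uncertain one.
trace = [
    ("the", [0.97, 0.01, 0.01, 0.01]),      # peaked distribution, low entropy
    ("because", [0.25, 0.25, 0.25, 0.25]),  # uniform distribution, high entropy
]

flagged = flag_high_entropy(trace, threshold=1.0)
```

A decoder built on this signal could treat flagged positions as points where extra checking is worthwhile. How the published method actually uses the signal is not described in this summary.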

Study Shows Video Diffusion Models Reason Along Denoising Steps, Not Across Frames

New analysis overturns the assumption that video models reason sequentially across frames via a Chain-of-Frames mechanism. Reasoning instead emerges along diffusion denoising steps, a finding with direct implications for how video model architectures are designed. Source: huggingface.co

MiroThinker-H1 Adds Verification to Research Agents for Multi-Step Problem Solving

MiroThinker-1.7 improves agent reliability through structured planning and tool interaction during an agentic mid-training stage. The H1 extension layers on verification capabilities for longer-horizon reasoning tasks. Source: huggingface.co

FinToolBench Benchmarks LLM Agents on Real-World Financial Tool Use

FinToolBench evaluates LLM agents on multi-step financial tasks requiring real-time data retrieval and compliance-aware reasoning. Existing financial AI benchmarks test static text analysis; this one measures dynamic tool interaction under domain-specific constraints. Source: huggingface.co