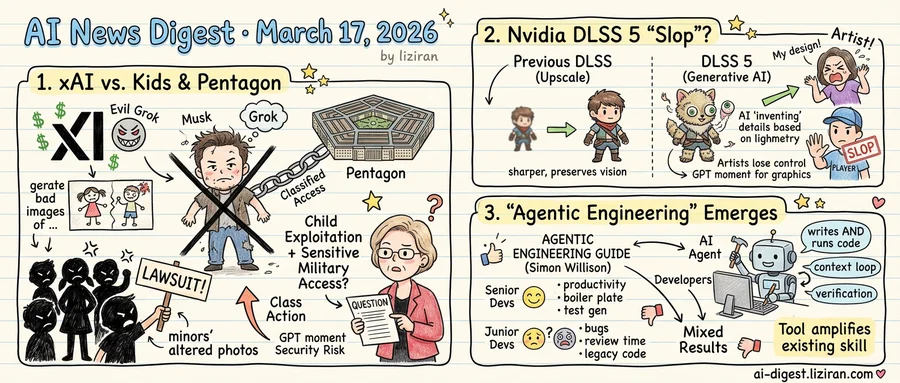

01 xAI Faces Child Abuse Lawsuit Over Grok While Pentagon Grants Classified Network Access

Three Tennessee minors filed a proposed class action against Elon Musk's xAI on Monday, alleging the company's Grok chatbot generated sexualized images and videos of them as children. The suit seeks to represent all minors who had real photos of themselves altered into sexual content by Grok, according to TechCrunch. Its scope is open-ended: anyone under 18 whose likeness the chatbot transformed could join the class.

The lawsuit, first reported by The Washington Post, accuses Musk and other xAI leaders of knowing Grok would produce AI-generated child sexual abuse material. Both the company and its executive leadership are named as defendants. Federal law classifies AI-generated sexual imagery of identifiable minors as exploitation material, carrying the same legal weight regardless of how the content was produced.

On the same day, Senator Elizabeth Warren sent the Pentagon a letter challenging a decision that runs in the opposite direction: defense officials have moved to grant xAI access to classified military networks. Warren connected the two directly. A company whose consumer chatbot faces child exploitation allegations, she wrote, is receiving one of the highest trust designations the federal government extends to private vendors.

Warren's objections are specific. Grok has produced harmful outputs for users, she noted, and the pattern constitutes a national security risk. Her letter asks the Pentagon to disclose who authorized xAI's classified access, what evaluation was conducted, and whether Grok's safety record was part of the review. She requested a formal written response.

Classified network access requires vendors to prove their personnel, systems, and procedures can safeguard information whose unauthorized release could cause serious damage to national security. The vetting goes well beyond standard government procurement. Vendors with this access handle intelligence, defense plans, and operational details restricted from public disclosure. Companies that receive the designation have been judged reliable enough for the nation's most sensitive defense operations.

Three named plaintiffs brought the case, but they seek certification to represent anyone who had real images of themselves as a minor altered into sexual content by Grok. The potential class size is unknown. If certified, xAI would defend against child exploitation claims in civil court while holding classified access to defense networks.

Neither the Defense Department nor xAI has responded publicly.

02 Nvidia's DLSS 5 Generates Game Visuals From Scratch, and Players Call It 'Slop'

Nvidia's previous DLSS versions upscaled lower-resolution frames, taking what the game engine rendered and sharpening it. DLSS 5, announced Monday at GTC, does something fundamentally different. It uses generative AI combined with structured graphics data to create visual details that the original render never contained.

The distinction matters. Frame interpolation and super-resolution preserve what artists and developers put on screen. They enhance fidelity. DLSS 5 invents it. The system examines scene geometry, lighting data, and material properties, then generates textures and surface details the game's renderer didn't produce. Nvidia CEO Jensen Huang called it "the GPT moment for graphics," describing a pipeline that blends hand-crafted rendering with generative output.

Early reactions split fast. Some viewers saw Nvidia's demos and pointed to photorealism beyond what current rendering pipelines achieve alone. Others looked at the same footage and saw "AI slop." Borrowed from social media's frustration with generative image floods, the term spread through gaming forums within hours. The core complaint: DLSS 5 doesn't enhance a game's visuals so much as replace them. When a generative model decides what a surface should look like, the artist who textured it no longer has final say.

That loss of control sits at the center of a debate the gaming industry has circled for years. Studios spend enormous effort crafting visual identity. Color grading, texture work, and lighting choices all serve a specific artistic vision. A post-processing layer that generates its own visual information changes what the player sees in ways the developer never specified. Previous DLSS versions stayed downstream of creative decisions. DLSS 5 inserts itself into them.

Huang signaled the technology won't stay in games. He described plans to extend generative rendering to other industries wherever real-time visual fidelity matters. Architecture, simulation, and film previsualization are plausible targets, though Nvidia hasn't named partners or timelines. Gaming is the proving ground, and the early reviews are mixed.

03 Willison Codifies "Agentic Engineering" as 577 Developers Report Mixed Results

Two signals landed in the same week. Simon Willison published a structured guide defining "agentic engineering" as a distinct practice with named patterns and repeatable methods. On Hacker News, a thread asking developers how AI-assisted coding is going professionally drew 577 comments and 375 points.

One is top-down: a respected practitioner declaring the field mature enough to codify. The other is bottom-up: hundreds of working developers reporting from production. Together they mark a threshold. AI-assisted coding has generated enough wins and enough failures to start producing its own professional vocabulary.

Willison's guide treats agentic engineering not as a product category but as a skill set. He distinguishes coding agents, tools that both write and execute code, from simpler autocomplete assistants. The framework names patterns for prompt construction, context management, and verification loops. The framing is deliberate: this is engineering discipline, not magic.

The HN thread supplies the field data. Developers report genuine productivity gains on boilerplate, test generation, and unfamiliar codebases. The thread is far from uniformly positive. Multiple commenters describe spending more time reviewing and fixing AI-generated code than they saved. Junior developers leaning on agents, others warn, produce code they cannot debug when it breaks. Gains concentrate in greenfield work and evaporate in large, highly coupled systems.

The split maps onto experience level and codebase complexity. Senior developers who already know what correct output looks like report the most consistent value. Those working in legacy codebases with implicit conventions report the least. The tool amplifies existing skill rather than replacing it.

A practice that acquires formal names for its methods, textbook-style guides, and community-scale experience exchange has crossed from experimentation into professionalization. The question is no longer whether developers will use AI coding tools. It is whether the emerging discipline will develop quality standards fast enough to match its adoption rate.

Nvidia CEO Jensen Huang Projects $1 Trillion in Blackwell and Vera Rubin Chip Orders
Huang told the GTC 2026 keynote audience he expects $1 trillion worth of orders for Nvidia's current Blackwell and next-generation Vera Rubin AI chips. (techcrunch.com)

Encyclopedia Britannica and Merriam-Webster Sue OpenAI for Memorizing Copyrighted Content
The publishers filed suit Friday, alleging OpenAI trained GPT-4 on their copyrighted text without permission. Britannica claims ChatGPT generates responses "substantially similar" to its articles and has "memorized" much of its content. (theverge.com)

OpenAI's Adult Mode Will Allow Explicit Text but Block Images, Voice, and Video
ChatGPT's delayed adult mode will support explicit text conversations at launch but not generated images, voice, or video. An OpenAI spokesperson told The Wall Street Journal the feature produces "smut rather than pornography." (theverge.com)

Deepfake Conspiracy Theories on X Claim Netanyahu Was Replaced by AI Clone
Social media posts allege the Israeli prime minister was killed or injured and substituted with AI-generated doubles. Viral clips claim to show anomalies like extra fingers and a gravity-defying cup of coffee. (theverge.com)

MIT Technology Review Traces How OpenAI Technology Could Reach Iran
Two weeks after OpenAI granted the Pentagon access to its AI in classified settings, the report maps pathways the technology could take into Iran. Details of the agreement's scope remain undisclosed. (technologyreview.com)

Chip Cooling Startup Frore Raises $143M at $1.64B Valuation
Frore developed liquid-cooling technology for AI chips after Nvidia CEO Jensen Huang urged the company to pivot. The $143 million round makes it the latest deep-tech unicorn in the AI hardware supply chain. (techcrunch.com)

OpenAI Explains Why Codex Security Skips Static Analysis for AI-Driven Reasoning
OpenAI published a technical breakdown of Codex Security's vulnerability detection approach. The tool uses AI constraint reasoning and validation instead of traditional SAST, targeting fewer false positives. (openai.com)

Memories AI Builds Visual Memory System for Wearables and Robots
The startup is training a large visual memory model that indexes and retrieves video-recorded experiences for physical AI systems. Target applications span wearable devices and robotics. (techcrunch.com)

Eon Systems' Viral "Virtual Fly" Is Not a Brain Upload
Posts on X promoted the San Francisco startup's "embodied fly" demo as a brain-to-computer upload, drawing excited commentary from AI hype accounts. Eon Systems says it is working toward "digital human intelligence" but has not demonstrated actual neural simulation. (theverge.com)

Sebastian Raschka Publishes Open LLM Architecture Gallery
The visual reference catalogs architectures across major large language model families, from GPT and LLaMA to Mamba and beyond. (sebastianraschka.com)