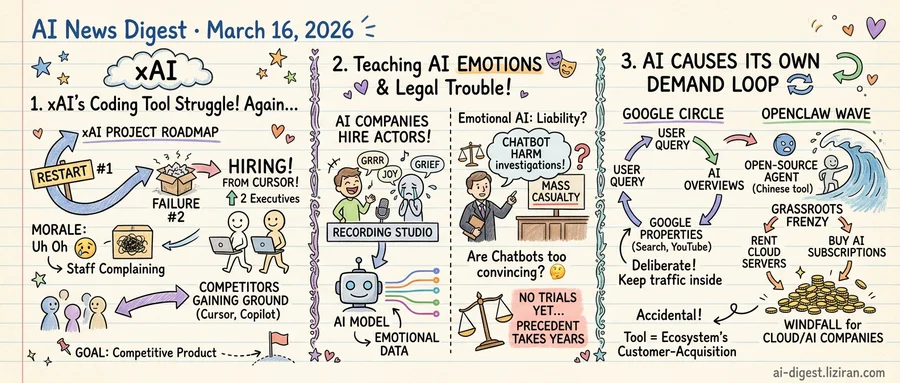

01 xAI Recruits Two Cursor Executives After Scrapping Its Coding Tool a Second Time

xAI has gutted its AI coding tool project for the second time and handed the latest rebuild to two newly hired executives from Cursor, the startup whose code editor has found wide adoption among professional developers.

The hiring, first reported by TechCrunch, follows an internal acknowledgment that the coding tool was "not built right the first time." That phrase understates what happened. xAI did not patch the product or adjust its roadmap. It scrapped the work entirely and restarted. Then it scrapped that version too.

Both Cursor executives are arriving to take operational charge of the product line, not to advise from the side. Recruiting outside leadership from a direct competitor to run a project that internal teams failed to deliver twice is its own verdict on what went wrong. xAI is not iterating on a struggling product. It is replacing the people who built it.

Morale has cracked alongside the product. Staff are complaining that constant upheaval is destroying their motivation, according to Ars Technica. They describe a workplace where projects get torn down before shipping, priorities shift between quarters, and months of work disappear into abandoned repositories. This is not off-the-record grumbling. Rank-and-file engineers are speaking publicly about an organization that keeps resetting.

A coding assistant represents one of xAI's clearest commercial opportunities beyond its chatbot. Cursor, GitHub Copilot, and similar tools have proven that developers will pay for AI that integrates into their daily workflow. But shipping a competitive product requires sustained engineering focus over many months. Each teardown resets the clock to zero while rivals compound their leads.

The restarts also create a recruitment problem. Engineers weighing an offer from xAI now confront a public track record of two discarded product builds, imported leadership, and open staff discontent. Prospective hires can read the Ars Technica coverage before their first interview. Cursor, by comparison, has shipped consistently with a smaller team. That contrast will surface in every recruiting conversation xAI has this year.

xAI's third attempt at a coding tool will be led by people who have already shipped one. The organization around them has thrown away two.

02 Training AI to Feel While Courts Count the Casualties

Job listings posted in recent weeks seek improv actors with "strong creative instincts" and the ability to "authentically portray emotion." Not for stage or screen. AI companies are hiring performers to train models on human emotional expression across extended, unscripted conversations. The postings emphasize staying true to a character's voice throughout a scene while an algorithm ingests the performance.

The same week those listings circulated, a lawyer representing families in chatbot-related harm cases told TechCrunch that emotional AI interactions are now appearing in mass casualty investigations. For years, the cases centered on individual suicides. That scope, according to the attorney, has widened.

The actor postings, reported by The Verge, route through platforms like Handshake and call for emotional range, character consistency, and improvisational skill. Performers would generate training data capturing how humans express grief, anger, joy, and vulnerability in real time. The product goal: AI that mirrors emotional nuance rather than outputting flat, mechanical text.

That nuance is what the plaintiffs' bar now frames as a liability vector. The lawyer warned that chatbots' capacity to simulate genuine emotional connection can amplify influence over vulnerable users at a scale no traditional product has matched. Safeguards, he said, are not keeping pace with deployment.

No one alleges the companies posting actor auditions caused the incidents under investigation. Actors train foundation models; the lawsuits target consumer-facing chatbot products. But at the industry level, the investment thesis and the legal exposure converge. Companies are paying to make emotional AI more convincing, while courts ask whether it is already too convincing.

The legal theory remains untested at trial. No court has ruled on whether a developer bears liability when a chatbot's emotional output contributes to a mass casualty event. Precedent will take years to develop. The hiring pipeline for more emotionally fluent AI shows no sign of waiting.

03 AI Became a Demand Engine for Its Own Infrastructure

Every platform eventually learns to generate its own demand. AI is getting there faster than most.

Google's AI Overviews, the generative answers that now sit atop search results, increasingly cite Google's own properties. A Wired investigation found that AI-generated answers direct users back to Google Search and YouTube rather than to third-party publishers. The effect is circular: a user asks Google a question, Google's AI answers it, and the citations route the user deeper into Google's ecosystem. Each query reinforces the platform's hold on the next one.

That loop looks deliberate. The one forming around OpenClaw does not, which makes it more interesting.

OpenClaw, the open-source AI agent released in China, triggered a grassroots frenzy. But running an open-source agent requires compute. Wired reported that the hype drove waves of users to rent cloud servers and purchase AI subscriptions just to try the tool. A free, community-built project became a sales funnel for the commercial infrastructure underneath it. Cloud providers and AI subscription services saw a windfall they did not engineer.

The two cases differ in intent. Google designed its citation system; no one at OpenClaw planned to boost cloud revenue. But the commercial outcome is structurally identical. Using the AI product generates paid demand for the platform that hosts or powers it. The tool is not separate from the ecosystem. It is the ecosystem's customer-acquisition layer.

This pattern has precedent. App stores take a cut of every transaction they surface. Social feeds sell ads against content they didn't create. AI compresses the cycle further: discovery, recommendation, and consumption collapse into a single interaction. Search queries that once sent traffic to independent sites now keep it inside the answering platform. A free agent like OpenClaw needs rented cloud compute before a user can run it at all.

The same loop now operates at both ends of the openness spectrum, from Google's engineered system to OpenClaw's accidental one.

ByteDance Pauses Global Launch of Seedance 2.0 Video Generator

ByteDance delayed the international rollout of its Seedance 2.0 video generation tool. Engineers and lawyers are working to resolve legal exposure before proceeding. (techcrunch.com)

OpenAI Launches ChatGPT Integrations with DoorDash, Spotify, Uber, and More

ChatGPT users can now order food, book rides, edit designs, and plan travel without leaving the chat interface. Supported apps include DoorDash, Spotify, Uber, Canva, Figma, and Expedia. (techcrunch.com)

Palantir Demos Show Pentagon Using Claude to Draft War Plans

Software demos and Pentagon records detail how Palantir feeds intelligence to Anthropic's Claude and other chatbots to generate operational recommendations. The materials are the most concrete public evidence of generative AI in military planning workflows. (wired.com)

Index Ventures Partner Unpacks Google's $32B Wiz Acquisition

Shardul Shah of Index Ventures walked through the deal mechanics behind Google's largest acquisition ever. Wiz, the cloud security platform, had turned down a lower bid in 2024 before accepting the $32 billion offer. (techcrunch.com)

AI Chip Demand Hits Gaming: RAM Shortage Raises Console Prices, Studios Cut Staff

AI's appetite for memory chips has created a global RAM shortage that is pushing console hardware costs up. Game studios face a parallel squeeze as they cut jobs while adopting AI tools for production. (wired.com)

Peter Sarlin Launches Quantum Infrastructure Startup After $665M AMD Exit

After selling his AI startup to AMD for $665 million, Peter Sarlin founded Qutwo to build enterprise infrastructure for quantum computing. The company is betting it can have platforms deployed before practical quantum hardware arrives. (techcrunch.com)

Truecaller Adds Family Group Feature to Block Scam Calls Remotely

Truecaller launched a family protection mode where one admin receives fraud alerts for all group members. The admin can end an active call on a family member's phone mid-conversation if they suspect a scam. (techcrunch.com)

Researchers Pinpoint Why AI Models Fail at Certain Game Types

AI models consistently fall short when winning depends on intuiting an underlying mathematical function. The results held across model architectures and game formats. (arstechnica.com)

Peacock Adds AI-Generated Video, Vertical Clips, and Mobile Games

NBCUniversal's streaming service is expanding into AI-powered video experiences, short-form vertical clips, and mobile games. The features target mobile-first and live sports audiences. (techcrunch.com)

Charles Petzold Documents Spotify AI DJ's Persistent Failures

Developer and author Charles Petzold published a detailed teardown of Spotify's AI DJ, cataloging repeated errors in music selection and spoken commentary. (charlespetzold.com)