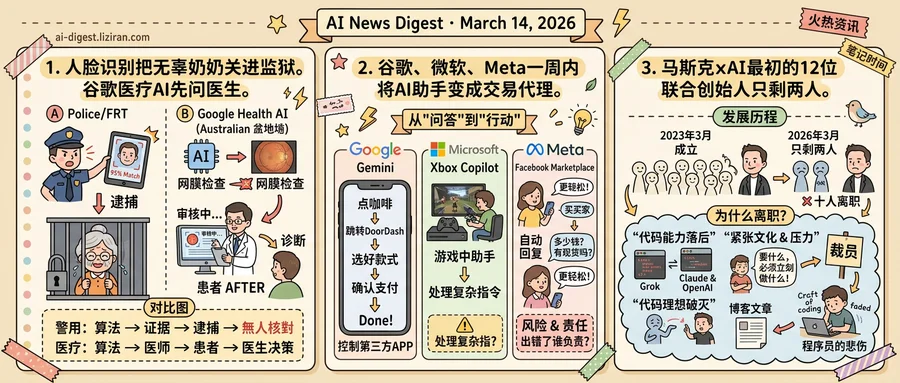

01 Facial Recognition Jailed an Innocent Grandmother. Google's Health AI Asks a Doctor First.

A grandmother in North Dakota spent months behind bars after a facial recognition system matched her face to a fraud suspect. Law enforcement arrested her on the strength of that match, according to the Grand Forks Herald. No analyst independently verified the result. Officers used no second method to confirm the identification. She was innocent.

This is how facial recognition typically enters the criminal justice system. Vendors sell to police departments that set their own standards for corroboration, if they set any at all. The software produces a candidate. Officers convert that output into probable cause. A person goes to jail on the confidence score of an algorithm that has never been cross-examined.

Google is deploying the same category of technology in remote Australian communities. Its health initiative uses AI to analyze retinal images, screening for cardiovascular disease in populations that rarely access a cardiologist. The system flags patients who may be at risk. Then it stops. A clinician reviews every flagged result before a patient hears anything about their diagnosis.

Both systems perform the same core operation: an algorithm examines a human body and produces a classification. In North Dakota, that classification put an innocent woman in a cell for months. Rural Australia's version puts a file on a doctor's desk.

Google's program is not controversy-free. Health AI in underserved communities raises questions about data collection and corporate influence over public health. But the deployment architecture includes a checkpoint that policing lacks: a trained professional evaluates the algorithm's output before it reaches the person it affects. The AI screens; the doctor decides. That sequence exists because medical ethics protocols and clinical validation requirements demanded it before AI entered the field.

No equivalent framework governs facial recognition in law enforcement. The United States has no federal statute requiring human verification of an algorithmic match before an arrest. Departments write their own policies. Many have none.

AI identification is expanding beyond policing and medicine into border screening, benefits eligibility, and housing. Each new domain will inherit the same structural question the North Dakota case made visible: must a human verify an algorithm's output before it affects a person? Medicine answered yes through regulation decades ago. Law enforcement has left the question open. The systems now adopting this technology are deploying before governance catches up.

02 Google, Microsoft, and Meta Turn AI Assistants Into Transaction Agents in One Week

Tell your phone to order a coffee. Gemini opens DoorDash, picks your usual, and checks out in a virtual window you never touch. That scenario went live this week on Samsung Galaxy S26 and Google Pixel devices. Gemini wasn't the only AI assistant that learned to spend money on a user's behalf.

Within days of each other, three of tech's largest platforms shipped or announced AI features that cross the same threshold: from answering questions to executing real-world actions. Gemini now automates food delivery and rideshare orders across Android apps. Microsoft's Gaming Copilot will arrive on current-generation Xbox consoles this year, an Xbox product manager revealed at GDC. On Facebook Marketplace, sellers can toggle on AI auto-replies that field buyer inquiries without human input.

The entry points differ. Gemini operates as a cross-app automation layer on the phone's OS, controlling third-party apps through a sandboxed interface. Xbox Copilot embeds inside a gaming ecosystem where Microsoft controls the full stack. Meta's version is narrower: an auto-responder bolted onto a peer-to-peer marketplace. But each converts a chatbot into an agent that acts in the real world, placing orders, managing conversations, and navigating services without waiting for human approval at every step.

Whichever company's AI becomes the default action layer captures the consumer interface. Google wants Gemini to replace the app drawer. For Microsoft, Copilot should be the first screen Xbox players see. Meta is targeting the friction that drives sellers off Marketplace. Each positions its assistant as the persistent intermediary between user and transaction.

The unresolved question is liability. When Gemini orders the wrong meal or a Marketplace auto-reply commits a seller to an unintended price, the cost lands somewhere. Google limits automation to a few app categories for now. Meta lets sellers switch auto-replies off. These are guardrails, not answers. As agents gain scope, the distance between "assisted" and "authorized" will determine who pays when they get it wrong.

03 Musk's xAI Retains Two of Twelve Original Co-Founders

Guodong Zhang led xAI's Imagine team until last week. Then Musk blamed him for problems with the company's coding product and stripped his responsibilities, and Zhang told colleagues he was done. Zihang Dai, another co-founder, left days earlier. In late February, Toby Pohlen, a former DeepMind researcher, walked away just 16 days after Musk assigned him to lead "Macrohard," xAI's coding initiative announced in August 2025.

Three co-founders gone in weeks. The three-year-old company now retains only two of the twelve people who helped Musk launch it in March 2023.

The trigger is xAI's coding division. Musk has acknowledged that "Grok is currently behind in coding" relative to Anthropic's Claude Code and OpenAI's Codex, with training data reportedly insufficient to close the gap. His response: bring in auditors from SpaceX and Tesla to identify underperforming staff, order fresh layoffs, and declare he was "looking to rebuild xAI from the foundations up." This week, xAI hired two senior engineers from Cursor to patch the hole.

Staff describe a culture where Musk can "call us and demand anything at any time," according to one account from an xAI interview. A former Tesla employee described the rhythm: when Musk wanted something, you dropped everything to deliver it, then got pressed to finish what you'd already been working on. Remaining employees report burnout and falling morale.

The same week Zhang and Dai departed, a developer named Les Orchard published a blog post called "Grief and the AI Split." Orchard has been coding for forty years. The post wasn't about any one company. It documented a fracture running through developer communities as AI tools force people to confront why they write code at all. Some developers mourn the loss of craft itself. Others, like Orchard, grieve the ecosystem changes around them. Both groups, he argues, are processing real loss.

The boardroom exits and the blog post describe different scales of the same pressure. One is measured in headcount and IPO risk. The other shows up only when someone stops to write it down.

Anthropic's Claude Now Generates Charts and Diagrams Inline

Claude can produce custom visualizations (charts, diagrams, and other graphics) directly within a conversation. The AI decides when a visual is useful based on context and inserts it inline rather than in a side panel. (theverge.com)

Netflix Commissions Custom AI Models for Film Production

Studios are moving past off-the-shelf generators toward bespoke AI models trained on specific visual styles. Netflix's "Interpositive" project, involving Ben Affleck, uses a purpose-built model rather than general tools like Sora or Runway. The approach treats AI as a per-project tool, not a replacement for production pipelines. (theverge.com)

"Can I Run AI Locally?" Tool Checks Hardware Against Model Requirements

A new web tool lets users input their hardware specs and see which open-weight AI models their machine can run. The site covers popular models across parameter sizes and quantization levels. (canirun.ai)

Researchers Propose Video-Based Reward Modeling for Computer-Use Agents

A new paper introduces reward modeling from execution video, using keyframe sequences from agent trajectories to evaluate task completion. The method is agent-agnostic, judging results from screen recordings rather than internal reasoning traces. It addresses the scaling bottleneck in evaluating whether computer-use agents actually follow instructions. (huggingface.co)

IndexCache Cuts Sparse Attention Overhead by Reusing Cross-Layer Indices

Researchers target the indexer bottleneck in sparse attention systems like DeepSeek Sparse Attention, where the token-selection step still runs at O(L²). IndexCache reuses index computations across transformer layers to reduce this cost. The optimization matters most for long-context agentic workloads where attention efficiency drives serving cost. (huggingface.co)
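The general idea of reusing token-selection indices across layers can be illustrated with a toy sketch. This is not the paper's implementation: the fixed refresh schedule (`refresh_every`), the function names, and the plain-list score matrices are all assumptions made for illustration. Here the expensive step is `select_top_k`, which picks the top-k keys for every query, and the cache skips that step on most layers.

```python
from typing import Callable, List, Tuple

Scores = List[List[float]]   # scores[q][j]: relevance of key j to query q
Indices = List[List[int]]    # indices[q]: keys selected for query q


def select_top_k(scores: Scores, k: int) -> Indices:
    """Indexer step: for each query row, keep the k highest-scoring keys."""
    return [
        sorted(range(len(row)), key=lambda j: row[j], reverse=True)[:k]
        for row in scores
    ]


def run_layers(num_layers: int, refresh_every: int,
               score_fn: Callable[[int], Scores], k: int) -> Tuple[List[Indices], int]:
    """Run the indexer only every `refresh_every` layers and reuse its
    output in between. Returns per-layer indices and the indexer call count."""
    cached: Indices = []
    indexer_calls = 0
    per_layer: List[Indices] = []
    for layer in range(num_layers):
        if layer % refresh_every == 0:
            cached = select_top_k(score_fn(layer), k)  # expensive path
            indexer_calls += 1
        per_layer.append(cached)  # cheap path: reuse the cached selection
    return per_layer, indexer_calls


if __name__ == "__main__":
    toy_scores = [[0.1, 0.9, 0.5], [0.8, 0.2, 0.3]]
    _, calls = run_layers(num_layers=8, refresh_every=4,
                          score_fn=lambda layer: toy_scores, k=2)
    print(f"indexer ran {calls} times for 8 layers")
```

Whether reuse is safe depends on how similar the selected tokens are from one layer to the next, which is the empirical question a method like this has to answer.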

Spatial-TTT Brings Streaming Spatial Understanding to Vision Models via Test-Time Training

A new method maintains and updates spatial representations from unbounded video streams using test-time training. Spatial-TTT selects and retains spatial evidence over time rather than relying on longer context windows. The approach targets real-world applications where an agent must continuously process visual input. (huggingface.co)

MADQA Benchmark Tests Whether Document Agents Reason or Just Search Randomly

Researchers built a 2,250-question benchmark grounded in 800 heterogeneous PDFs to measure whether multimodal agents use genuine strategy when navigating document collections. The benchmark applies Classical Test Theory to maximize discrimination across agent skill levels. Early results suggest many agents rely on trial-and-error rather than structured reasoning. (huggingface.co)
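For readers unfamiliar with "discrimination" in Classical Test Theory, one standard statistic is the upper-lower discrimination index: the share of top-scoring test takers who answer an item correctly minus the share of bottom-scoring ones. The sketch below shows that statistic only. Whether the benchmark uses this exact measure is not stated in the item, and the function name and toy data are hypothetical.

```python
from typing import List


def discrimination_index(item_correct: List[int], total_scores: List[float],
                         fraction: float = 0.27) -> float:
    """Upper-lower discrimination index D = p_upper - p_lower for one item.

    item_correct[i] is 1 if agent i answered this item correctly, else 0.
    total_scores[i] is agent i's overall benchmark score.
    `fraction` is the share of agents in each extreme group (27% is the
    conventional choice).
    """
    n = len(total_scores)
    group = max(1, round(n * fraction))
    order = sorted(range(n), key=lambda i: total_scores[i])  # weakest first
    lower, upper = order[:group], order[-group:]
    p_upper = sum(item_correct[i] for i in upper) / group
    p_lower = sum(item_correct[i] for i in lower) / group
    return p_upper - p_lower


if __name__ == "__main__":
    totals = list(range(10))                       # ten agents, scores 0..9
    sharp_item = [0, 0, 0, 0, 0, 1, 1, 1, 1, 1]    # only strong agents succeed
    easy_item = [1] * 10                           # everyone succeeds
    print(discrimination_index(sharp_item, totals))
    print(discrimination_index(easy_item, totals))
```

An item near 1.0 separates strong agents from weak ones, while an item near 0.0 says little about skill, so a benchmark built for discrimination would favor the former.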

GOLF Framework Uses Natural Language Feedback to Guide RL Exploration

A new reinforcement learning framework aggregates group-level language feedback, not just scalar rewards, to steer exploration toward actionable improvements. Current RL methods discard the rich information in natural language signals from environment interactions. GOLF converts that feedback into targeted exploration strategies. (huggingface.co)