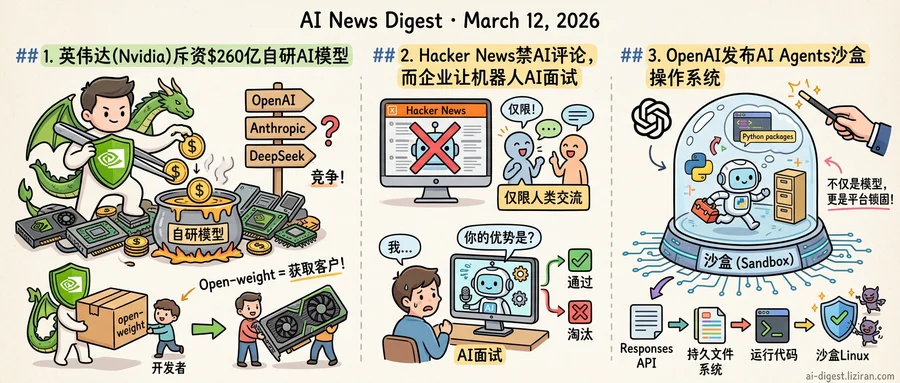

01 Nvidia Commits $26 Billion to Build Its Own AI Models

SEC filings reviewed by Wired show Nvidia plans to spend $26 billion developing open-weight AI models. That is roughly a fifth of the company's $130 billion in annual revenue, redirected from hardware profits into direct competition with OpenAI, Anthropic, and DeepSeek. Days after announcing it would stop investing in outside AI companies, Nvidia made clear why: it intends to build the models itself.

The spending plan is one of two moves ahead of Nvidia's annual GTC conference. Ars Technica reports Nvidia is also developing NemoClaw, an open-source AI agent platform designed to rival OpenClaw. The company is courting enterprise partners to seed the ecosystem before launch. Together, the two initiatives extend Nvidia's reach from chips into both the model layer and the application layer.

Neither market is empty. In China, OpenClaw has already sparked a startup wave. MIT Technology Review profiled Feng Qingyang, a 27-year-old Beijing engineer who built a company on the framework in weeks. The demand existed before Nvidia arrived. Now the largest hardware company in AI wants to capture it.

The trajectory points one way. Nvidia owned the base of the AI stack: chips. It expanded into developer tooling with CUDA and networking with InfiniBand. Now it is building models and agent software at the same time. The supplier is positioning itself as the competitor.

For pure-model companies, the arithmetic shifts. OpenAI, Anthropic, and Mistral buy Nvidia GPUs to train their systems. If Nvidia ships open-weight alternatives optimized for its own silicon, customers face a bundled offering no standalone provider can match on price. Open-weight is the distribution mechanism: every developer who adopts an Nvidia model becomes a GPU buyer by default. Releasing weights for free is not generosity. It is a customer-acquisition channel for GPUs that cost tens of thousands of dollars each.

Nvidia can sustain this longer than any model-only competitor. Its hardware revenue dwarfs the combined revenue of every foundation-model company. Model development becomes a loss leader that feeds chip sales. The startups on the other side of this equation carry no such hedge.

The flywheel is straightforward. Open models drive GPU demand, and GPU profits fund more open models. For companies that sell only models, the margin pressure now comes from their own supplier.

02 Hacker News Bans AI Comments While Companies Let Bots Run Job Interviews

Hacker News updated its site guidelines last week with a blunt addition: "Don't post generated/AI-edited comments. HN is for conversation between humans." The post drew 2,278 upvotes and 851 comments, a rare show of consensus for a community known for contentious debate.

That same week, The Verge reported on a shift moving in the opposite direction. AI-powered avatars now conduct live video job interviews for major employers. They ask behavioral questions, evaluate tone and content, and decide which candidates advance. No human watches. The applicant sits alone with a synthetic face that holds their career prospects.

Many of the people cheering the HN ban build AI for a living, shipping code with Copilot and drafting documents with Claude. What they reject isn't the technology itself. It's AI masquerading as a human participant in conversation. Moderator dang had enforced this norm informally for years. Codifying it drew near-universal support. Top comments argued that AI-generated replies erode the signal that makes HN worth visiting: genuine human judgment from real people.

Job applicants don't get a comparable vote. When a company adopts an AI interviewer, candidates face a binary: perform for the bot or exit the pipeline. The Verge's reporter went through the process firsthand, describing an AI avatar that maintained eye contact, nodded along, and never left its script. Employers point to the math. A single job opening can draw thousands of applications. Automated screening isn't cruelty; it's triage.

HN's users chose where AI belongs in their community and drew a hard line. Job seekers have that line drawn for them by hiring departments they'll never meet. The engineer who upvoted "HN is for humans" on Tuesday may work at a company deploying an AI recruiter by Wednesday. Both decisions respond to real operational pressure. Only one group had a say in the outcome.

03 OpenAI Ships a Sandboxed OS for AI Agents

A developer building an AI agent that installs Python packages, writes scripts, and reads output files can now do all of that inside OpenAI's infrastructure. The company shipped a container-based execution environment for its Responses API, turning what was a text-generation endpoint into something closer to a hosted operating system.

The setup gives each agent session a sandboxed Linux container with shell access, a persistent file system, and the ability to run arbitrary code. An agent can spin up, install dependencies, execute a multi-step workflow, and write results to disk — all without the developer provisioning a single server. OpenAI manages the compute, the isolation, and the state.
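To make the statefulness concrete, here is a minimal local stand-in in Python. It assumes nothing about OpenAI's actual API: the `LocalSession` class is hypothetical and models only one property, a working directory that persists across steps. It provides no isolation at all, which is the part a real container adds.

```python
import subprocess
import sys
import tempfile
from pathlib import Path


class LocalSession:
    """Hypothetical stand-in for a hosted agent session.

    Models only a working directory that persists across steps.
    Provides NO isolation: commands run with the caller's privileges
    on the local machine.
    """

    def __init__(self) -> None:
        self._tmp = tempfile.TemporaryDirectory(prefix="agent-session-")
        self.workdir = Path(self._tmp.name)

    def run(self, argv: list[str], timeout: float = 30.0) -> subprocess.CompletedProcess:
        # Every step shares self.workdir, so files written by one step
        # are visible to the next.
        return subprocess.run(
            argv, cwd=self.workdir, capture_output=True, text=True, timeout=timeout
        )

    def close(self) -> None:
        # Delete the session's directory and everything in it.
        self._tmp.cleanup()


# A two-step "workflow": write a data file, then compute on it.
session = LocalSession()
try:
    session.run([sys.executable, "-c", "open('data.txt', 'w').write('1 2 3')"])
    result = session.run(
        [sys.executable, "-c", "print(sum(map(int, open('data.txt').read().split())))"]
    )
    print(result.stdout.strip())  # 6
finally:
    session.close()
```

The second step only works because the first step's file is still on disk; that persistence, plus isolation and compute, is what the hosted version takes off the developer's hands.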

That's a different business than selling API calls per token.

The same week, OpenAI published its framework for defending ChatGPT against prompt injection — the class of attacks where malicious instructions hiding in external data hijack an agent's behavior. The paper describes a layered system. It identifies high-risk tool calls, constrains actions that touch sensitive data, and applies separate rules based on whether instructions come from the user or from external content.
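The three layers can be read as a decision procedure applied to each proposed tool call. The Python sketch below is a hypothetical illustration, not OpenAI's implementation: the tool names, the two risk sets, and the allow/confirm/block verdicts are assumptions made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Source(Enum):
    USER = "user"          # typed by the person operating the agent
    EXTERNAL = "external"  # found in web pages, files, or tool output


# Hypothetical tool categories, chosen for illustration only.
HIGH_RISK_TOOLS = {"shell", "transfer_funds"}
SENSITIVE_TOOLS = {"read_credentials", "send_email"}


@dataclass(frozen=True)
class ToolCall:
    tool: str
    origin: Source  # where the instruction behind this call came from


def decide(call: ToolCall) -> str:
    """Return 'allow', 'confirm', or 'block' for a proposed tool call."""
    high_risk = call.tool in HIGH_RISK_TOOLS  # layer 1: flag high-risk calls
    sensitive = call.tool in SENSITIVE_TOOLS  # layer 2: flag sensitive-data access
    if call.origin is Source.EXTERNAL:        # layer 3: provenance-specific rules
        # Instructions embedded in external content may not trigger risky actions.
        return "block" if (high_risk or sensitive) else "allow"
    if sensitive:
        # Even user-originated requests need explicit confirmation here.
        return "confirm"
    return "allow"


print(decide(ToolCall("send_email", Source.EXTERNAL)))  # block
print(decide(ToolCall("send_email", Source.USER)))      # confirm
print(decide(ToolCall("shell", Source.USER)))           # allow
```

The key design choice is that the same tool call gets a different verdict depending on who asked for it, which is what stops a poisoned web page from borrowing the user's authority.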

The timing is unlikely to be a coincidence. A sandbox that lets agents run shell commands is an invitation for prompt injection at scale. Without guardrails distinguishing trusted instructions from poisoned inputs, opening that environment to external developers would be reckless. OpenAI needed both pieces before either was safe to ship.

For developers, the pitch is concrete. Today, running a code-executing agent means stitching together container orchestration, sandboxing, file storage, and security layers. OpenAI is offering that entire stack as a managed service. The Responses API becomes not just a model call but a session: stateful, persistent, and capable of real computation.

The strategic shift matters more than any single feature. OpenAI spent 2024 competing on model benchmarks. This move competes on platform lock-in. Once an agent's workflows, files, and execution state live inside OpenAI's containers, switching to Anthropic or Google means rebuilding the runtime, not just swapping an API key.

Anthropic Launches the Anthropic Institute

Anthropic announced a new organization called the Anthropic Institute. The company has not yet disclosed the institute's full mandate, funding, or leadership details. (anthropic.com)

Rakuten Cuts Mean Time to Resolution by 50% with OpenAI's Codex

Rakuten deployed OpenAI's Codex coding agent across its software engineering workflow. The company reports halved bug-fix times, automated CI/CD review processes, and full-stack builds delivered in weeks instead of months. (openai.com)

Wayfair Automates Product Catalog and Support Triage with OpenAI Models

Wayfair integrated OpenAI models to classify support tickets and enrich millions of product attributes automatically. The system handles customer-facing triage and backend catalog accuracy at scale. (openai.com)

Google Deploys AI to Screen for Heart Disease in Remote Australia

Google launched an AI program targeting cardiovascular health in remote Australian communities. The initiative focuses on populations with limited access to specialist cardiac care. (blog.google)

Study Finds Reasoning Mode Unlocks Factual Knowledge LLMs Otherwise Cannot Recall

Chain-of-thought reasoning helps LLMs answer simple factual questions they get wrong without reasoning enabled. The effect applies to single-hop queries requiring no multi-step logic, suggesting reasoning activates latent parametric knowledge. (huggingface.co)

MM-Zero Trains Vision-Language Models from Scratch Without Any Seed Data

Researchers introduced MM-Zero, a multi-model system that bootstraps vision-language model training without seed images or paired data. The approach removes the data dependency that typically gates VLM self-improvement. (huggingface.co)

New Benchmark Exposes Consistency Failures in Long-Form LLM Story Generation

Researchers released ConStory, a benchmark measuring how often LLMs contradict their own facts, character traits, and world rules across long narratives. Prior benchmarks focused on plot quality and fluency and left internal consistency largely untested. (huggingface.co)
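The kind of failure ConStory targets can be illustrated with a toy checker. This is not the benchmark's method: it assumes facts have already been extracted from the story as (chapter, entity, attribute, value) tuples, a step the sketch does not attempt, and it flags any attribute whose value differs from its first mention.

```python
from typing import Iterable, NamedTuple


class Fact(NamedTuple):
    chapter: int
    entity: str
    attribute: str
    value: str


def find_contradictions(facts: Iterable[Fact]) -> list[tuple[Fact, Fact]]:
    """Pair each fact with the earlier fact it contradicts, comparing
    against the first recorded value of the same (entity, attribute)."""
    first_seen: dict[tuple[str, str], Fact] = {}
    conflicts: list[tuple[Fact, Fact]] = []
    for fact in facts:
        key = (fact.entity, fact.attribute)
        if key not in first_seen:
            first_seen[key] = fact
        elif first_seen[key].value != fact.value:
            conflicts.append((first_seen[key], fact))
    return conflicts


story = [
    Fact(1, "Mara", "eye_color", "green"),
    Fact(2, "Mara", "hometown", "Vell"),
    Fact(5, "Mara", "eye_color", "brown"),
    Fact(7, "Mara", "hometown", "Vell"),
]
for original, later in find_contradictions(story):
    print(f"{later.entity}.{later.attribute}: "
          f"ch{original.chapter}={original.value!r}, ch{later.chapter}={later.value!r}")
```

A usable checker would also need to allow for changes the plot legitimately introduces, such as a character moving to a new town; this sketch treats every change as a contradiction.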

CoCo Replaces Natural-Language Planning with Code for Text-to-Image Generation

A new framework called CoCo uses executable code as chain-of-thought reasoning during image generation. Code-based planning handles spatial layouts, structured elements, and dense text more precisely than abstract language prompts. (huggingface.co)
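Why code helps with layout: a phrase like "four signs in a two-by-two grid" leaves every coordinate implicit, while a program pins each one down. The sketch below illustrates only that idea; CoCo's actual planning format is not described here, and the `Box` type, canvas size, and margin are assumptions.

```python
from dataclasses import dataclass


@dataclass
class Box:
    label: str
    x: int  # left edge, pixels
    y: int  # top edge, pixels
    w: int  # width, pixels
    h: int  # height, pixels


def grid_layout(labels: list[str], cols: int, canvas_w: int,
                canvas_h: int, margin: int) -> list[Box]:
    """Place labels row by row in an evenly spaced grid with equal margins."""
    rows = -(-len(labels) // cols)  # ceiling division
    cell_w = (canvas_w - margin * (cols + 1)) // cols
    cell_h = (canvas_h - margin * (rows + 1)) // rows
    boxes = []
    for i, label in enumerate(labels):
        r, c = divmod(i, cols)
        boxes.append(Box(label,
                         margin + c * (cell_w + margin),
                         margin + r * (cell_h + margin),
                         cell_w, cell_h))
    return boxes


plan = grid_layout(["SALE", "50% OFF", "TODAY", "ONLY"],
                   cols=2, canvas_w=1024, canvas_h=1024, margin=32)
for box in plan:
    print(box)
```

Each text element gets an exact region, so the generator no longer has to infer spacing or placement from prose.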

LoGeR Scales Dense 3D Reconstruction to Minutes-Long Video Without Post-Optimization

Researchers presented LoGeR, an architecture that reconstructs 3D scenes from long video sequences by processing chunks with hybrid memory. The design bypasses quadratic attention costs that block existing models from handling extended footage. (huggingface.co)
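A back-of-the-envelope count shows why chunking sidesteps the quadratic term. Assume a video of $T$ frames with $n$ tokens each, split into chunks of $k$ frames (with $k$ dividing $T$), where each chunk attends only to its own tokens plus a fixed-size memory of $m$ tokens. This is a generic chunked-attention estimate, not LoGeR's published cost analysis.

$$
\underbrace{(Tn)^2 = T^2 n^2}_{\text{full attention}}
\qquad\text{vs.}\qquad
\underbrace{\frac{T}{k}\cdot kn\,(kn + m) = Tn\,(kn + m)}_{\text{chunks of } k \text{ frames plus } m \text{ memory tokens}}
$$

For fixed $k$, $n$, and $m$, the second expression grows linearly in $T$, so doubling the video length roughly doubles the attention cost instead of quadrupling it.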