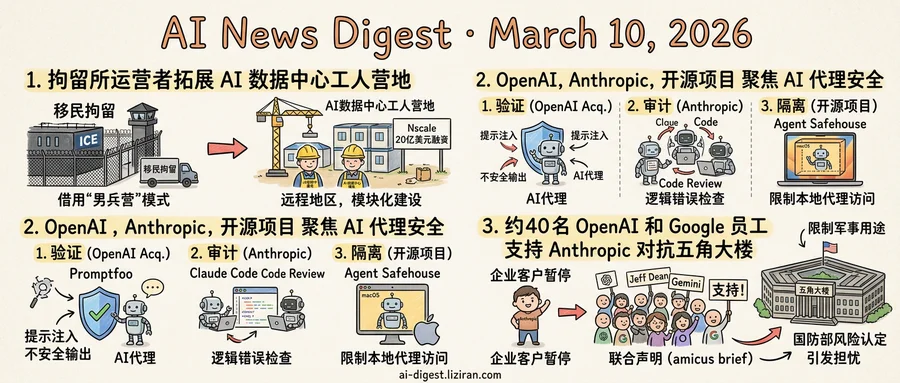

01 ICE Detention Facility Operator Expands Into AI Data Center Worker Camps

A company that operates immigration detention facilities for ICE has found its next growth market: housing the thousands of construction workers building America's AI data centers.

The model is borrowed from the oil patch. Remote drilling sites in North Dakota and West Texas long relied on "man camps": prefabricated dormitory complexes near wellheads, where roughnecks slept between shifts. The camps offered beds, cafeterias, and little else. Workers arrived, built infrastructure, and moved on. As data center construction accelerates across the country, the same housing format is following the demand.

Data centers cost billions and take years to build. They often sit in rural areas chosen for cheap land and power access. Crews number in the thousands and need somewhere to sleep. The detention facility operator recognized the overlap: remote locations, large transient populations, institutional-scale housing needs. Its existing business model translates with minimal retooling.

The camps themselves are modular and temporary. Rows of identical units house workers cycling through months-long construction stints. A company built to hold large populations in controlled environments needs little adaptation to shift from detainees to electricians.

This pivot is happening while Silicon Valley's elite pile into the other end of the same supply chain. Nscale, a British AI infrastructure startup, closed a $2 billion round last week at a $14.6 billion valuation. Its board now includes Sheryl Sandberg and Nick Clegg. Less than a year ago, Clegg served as Meta's president of global affairs. Nvidia is among Nscale's backers.

The distance between these two stories is shorter than it looks. Nscale sells compute to AI companies. That compute runs in data centers, which get built by construction workers who sleep in camps operated by a company whose other clients include U.S. Immigration and Customs Enforcement. Sandberg and Clegg will help court sovereign AI deals across Europe. Nobody at their level of the stack spends much time thinking about where the welders sleep.

But the detention operator does. It sees a building boom measured in hundreds of billions of dollars and has spent years learning to house people at scale in places nobody else wants to build. The expertise behind ICE facilities is now its pitch to data center developers.

02 OpenAI, Anthropic, and Open Source Converge on Agent Security

Within days of each other, three separate teams placed bets on the same problem. OpenAI agreed to acquire Promptfoo, a platform that tests AI systems for vulnerabilities during development. Inside Claude Code, Anthropic shipped Code Review, a multi-agent feature that audits AI-generated code for logic errors. On the open-source side, Agent Safehouse drew 781 points and 174 comments on Hacker News for its macOS-native sandbox that isolates local AI agents.

Each targets a different failure mode. Promptfoo catches prompt injection and unsafe outputs before deployment. At the code level, Anthropic's review feature flags logic errors in what agents write. Agent Safehouse constrains what agents can access at runtime. Together they map the full agent lifecycle: build, review, execute.

AI agents now run code, call external APIs, and manage cloud infrastructure on behalf of users. A supply chain attack on a popular coding agent earlier this year showed these risks are practical, not hypothetical. When an agent can delete production files or leak API keys through a malicious extension, model accuracy alone is not a safety argument.

OpenAI's acquisition is the most direct response. Bringing Promptfoo in-house means owning the verification layer rather than leaving it to third parties. For a company selling enterprise agent products, that removes a key procurement objection: proving autonomous systems are safe before deployment.

Anthropic built review tooling into its own developer environment instead. Code Review uses multiple agents to cross-check each other's output, treating AI-generated code as untrusted by default. The approach mirrors how enterprises already handle third-party dependencies.

Agent Safehouse fills the runtime gap, providing filesystem and network isolation for local agents. Its Hacker News reception suggests individual developers want the same containment that enterprise security teams would otherwise build internally.
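To make the idea of filesystem containment concrete, here is a minimal sketch in Python. It is an assumption-laden illustration, not how Agent Safehouse works: the function name `is_path_allowed` and the allowlist approach are hypothetical, and an application-level check like this is far weaker than an OS-level sandbox. It only shows the basic principle of resolving a path before deciding whether an agent may touch it.

```python
from pathlib import Path
from typing import Sequence


def is_path_allowed(candidate: str, allowed_roots: Sequence[str]) -> bool:
    """Return True only if `candidate` resolves to a location inside an allowed root.

    Hypothetical helper for illustration. Resolving first collapses `..`
    segments and follows symlinks, so a path cannot escape the root by
    traversal tricks.
    """
    resolved = Path(candidate).resolve()
    for root in allowed_roots:
        try:
            # relative_to raises ValueError when `resolved` is outside `root`.
            resolved.relative_to(Path(root).resolve())
            return True
        except ValueError:
            continue
    return False


if __name__ == "__main__":
    # Hypothetical working directory granted to a local agent.
    roots = ["/tmp/agent-work"]
    for path in ("/tmp/agent-work/notes.txt", "/tmp/agent-work/../secrets.txt"):
        print(path, "allowed" if is_path_allowed(path, roots) else "denied")
```

The design choice worth noting is deny by default: anything that does not resolve inside an explicitly granted directory is refused, rather than trying to enumerate dangerous locations.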

Web applications went through a similar transition. Mainstream adoption in the early 2000s created demand for firewalls and static analysis tools within a few years. Agent verification tooling is on that trajectory now.

03 Nearly 40 OpenAI and Google Employees Break Ranks to Back Anthropic Against the Pentagon

Nearly 40 employees from OpenAI and Google filed an amicus brief Monday supporting Anthropic's lawsuit against the Department of Defense. Among the signatories: Jeff Dean, Google's chief scientist and head of its Gemini AI effort.

The filing came hours after Anthropic sued the DoD in a California district court over its supply chain risk designation. But the amicus brief was the more unusual move. Tech employees almost never publicly endorse a direct competitor. These signatories did exactly that, detailing concerns about the Trump administration's approach to military AI.

Their employers have taken no such position. Google holds substantial defense contracts and has rebuilt its military AI business since the 2018 Project Maven controversy. Last year, OpenAI removed its ban on military applications and moved steadily toward government work. Neither company joined the brief or issued statements of support.

Dean signed in what appears to be a personal capacity. But his title — chief scientist at Google — travels with his name. Whether Google views his signature as an individual act or an institutional signal, enterprise clients and Pentagon officials will read it as the latter.

Anthropic told Wired that enterprise customers have paused contract negotiations since the designation. Executives warned the fallout could cause a major revenue hit, potentially reaching billions of dollars. The label does not need to survive a legal challenge to do damage. It just needs to make procurement officers hesitate.

The confrontation runs on two levels. In court, Anthropic argues the government punished it for restricting military use of its AI. Inside Google and OpenAI, employees are saying publicly what their companies won't. The administration's approach to AI companies that set military guardrails worries them enough to back a rival's lawsuit.

The brief binds neither Google nor OpenAI to anything. But it puts their leadership in a harder position. Staying silent reads differently once your own staff have spoken up.

X Adds Toggle to Block Grok From Editing Your Photos

X rolled out an iOS setting that lets users prevent other people from manipulating their uploaded images with Grok. The toggle appears in image upload settings but offers no retroactive protection for photos already on the platform. theverge.com

Qualcomm and Neura Robotics Partner on Next-Gen Robot Hardware

Neura Robotics will build new robots on Qualcomm's IQ10 processors, first announced at CES. The partnership pairs Neura's humanoid robot designs with Qualcomm's edge AI silicon. techcrunch.com

Apple Pushes Smart Home Display to Fall Launch With iOS 27

Apple's long-rumored "HomePod with a screen" slipped again: first from 2025 to spring 2026, and now to fall 2026. Leaker Kosutami reported the delay first; Bloomberg's Mark Gurman confirmed it. theverge.com

AI-Powered Intelligence Dashboards Turn the Iran Conflict Into a Spectator Event

Real-time AI dashboards tracking the Iran conflict have attracted large online audiences, with users treating military intelligence feeds as live entertainment. The trend blurs the line between open-source intelligence and voyeurism. technologyreview.com

Blog Post Argues AI Reimplementation Erodes Copyleft Even When Legal

A new essay examines how AI-assisted code reimplementation can replicate copyleft software's functionality without triggering license obligations. The author distinguishes legality from legitimacy, arguing the practice undermines open-source norms that copyright law alone cannot protect. writings.hongminhee.org

Wired Asks Whether AI Will Disrupt Venture Capital Itself

VCs fund AI companies premised on disrupting every industry, but Wired examines whether AI deal-sourcing, due diligence, and portfolio management tools could shrink the role of VCs themselves. wired.com

Penguin-VL Challenges Assumption That Vision Models Need Massive Pretraining

Researchers propose Penguin-VL, a compact vision-language model (2B–8B parameters) that drops the standard CLIP/SigLIP vision encoder. The paper shows competitive performance without contrastive pretraining, targeting deployment on phones and edge devices. huggingface.co

BandPO Fixes PPO's Suppression of Low-Probability Actions in LLM Training

A new paper identifies a flaw in PPO's fixed clipping bounds: they disproportionately suppress high-advantage but low-probability actions, causing rapid entropy collapse. BandPO introduces probability-aware bounds that scale constraints based on action likelihood. huggingface.co

Essay Revives Knuth's Literate Programming for the AI Agent Era

A blog post argues that literate programming, the practice of writing code as a human-readable narrative, fits naturally with AI agents that read and generate code. The approach prioritizes intent clarity over syntax, which may help agents produce more coherent outputs. silly.business
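A footnote on the BandPO item above: the complaint about fixed clipping bounds can be seen with simple arithmetic. The sketch below uses the standard PPO ratio clip with an assumed epsilon of 0.2. It does not reproduce BandPO's probability-aware bounds, which the summary does not specify, and the helper names are hypothetical.

```python
# Illustrative arithmetic only: standard PPO ratio clipping with a fixed epsilon.
# BandPO's own bounds are NOT implemented here.

EPSILON = 0.2  # assumed clip range for this example


def max_prob_after_clip(old_prob: float, epsilon: float = EPSILON) -> float:
    """Highest new probability a positive-advantage action can reach before
    the ratio new/old exceeds 1 + epsilon and the clipped objective stops
    rewarding further increases."""
    return min(1.0, old_prob * (1.0 + epsilon))


def headroom(old_prob: float, epsilon: float = EPSILON) -> float:
    """Absolute probability mass the action can gain in one update."""
    return max_prob_after_clip(old_prob, epsilon) - old_prob


for p in (0.5, 0.1, 0.01, 0.001):
    print(f"old={p:<6} max new={max_prob_after_clip(p):.4f} headroom={headroom(p):.4f}")
```

Because the bound is on the ratio, the absolute headroom shrinks in proportion to the old probability: an action at 0.5 can gain up to 0.1, while an action at 0.01 can gain only 0.002, however large its advantage.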