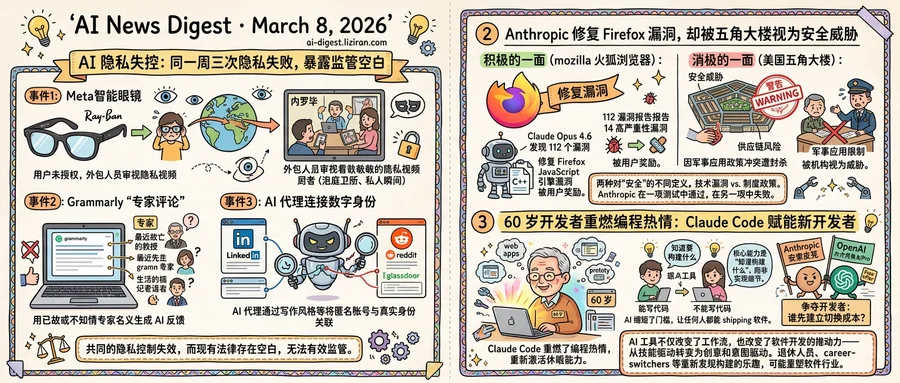

01 Three AI Privacy Failures in One Week Reveal the Same Regulatory Void

A Swedish journalism team wanted to know what happens to footage captured by Meta's Ray-Ban smart glasses. They found out: some of it ends up on screens in Nairobi. An investigation by Svenska Dagbladet and Göteborgs-Posten found that Meta contractors in Kenya have reviewed videos showing bathroom visits, sex, and other intimate moments. Users who activate the glasses' AI assistant send queries through a processing pipeline. That pipeline includes human review by outsourced workers in East Africa, according to the report.

The same week, the identity layer cracked open.

Grammarly launched an "expert review" feature offering writing advice "inspired by" named subject matter experts. Wired reported that the roster includes recently deceased professors. The Verge's own testing found living journalists listed as AI advisors without their knowledge. Real names and implied expertise were attached to AI-generated feedback. No one on the list had authorized the use, and Grammarly's interface gave users no indication the "experts" hadn't personally participated.

Then a recently published study showed that AI agents can link pseudonymous online accounts to real identities by cross-referencing writing style, posting patterns, and public data. Maintaining a gap between a professional LinkedIn profile and an anonymous Reddit account or Glassdoor review requires more operational security than most users practice. AI narrows that gap fast.
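To make the writing-style signal concrete, here is a minimal sketch of one classic stylometric technique: comparing character trigram frequencies with cosine similarity. This is not the study's method, which the summary above does not describe. The function names (`char_ngrams`, `cosine_similarity`, `best_match`) and the sample texts are hypothetical, and a real attack would presumably combine many more signals, such as posting times and public records.

```python
from collections import Counter
from math import sqrt


def char_ngrams(text: str, n: int = 3) -> Counter:
    """Count overlapping character n-grams in lowercased, whitespace-normalized text."""
    normalized = " ".join(text.lower().split())
    return Counter(normalized[i:i + n] for i in range(len(normalized) - n + 1))


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two n-gram count profiles (0.0 if either is empty)."""
    dot = sum(count * b[gram] for gram, count in a.items())
    norm_a = sqrt(sum(c * c for c in a.values()))
    norm_b = sqrt(sum(c * c for c in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def best_match(anonymous_post: str, known_authors: dict[str, str]) -> tuple[str, float]:
    """Return the known author whose writing sample is most similar to the post."""
    target = char_ngrams(anonymous_post)
    scores = {
        name: cosine_similarity(target, char_ngrams(sample))
        for name, sample in known_authors.items()
    }
    name = max(scores, key=scores.get)
    return name, scores[name]


if __name__ == "__main__":
    # Hypothetical samples; real profiles would be built from far more text.
    samples = {
        "profile_a": "Honestly, the rollout was a mess, and nobody owned it.",
        "profile_b": "Per my last update: metrics remain stable quarter over quarter.",
    }
    print(best_match("Honestly, the whole thing was a mess.", samples))
```

Even this toy version shows why the gap is narrow: habits of punctuation, spelling, and phrasing leave a statistical fingerprint that survives a change of username.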

Three unrelated incidents. One structural pattern: the mechanisms people rely on to control their digital visibility are failing at every layer simultaneously. Meta users didn't consent to intimate footage reaching reviewers on another continent. Grammarly's named experts had no idea the feature existed. Anonymous posters face a threat few have even considered.

Each case sits in a different gap in existing law. Data protection rules govern storage and processing but poorly cover AI systems routing personal footage across borders for contractor review. Identity law has nothing to say about AI-generated content "inspired by" a real person's credentials. No regulation requires platforms to defend users against algorithmic de-anonymization.

The companies involved aren't coordinating. They don't need to be. Each product optimizes for a narrow function: better AI training, better writing tools, better search. Privacy loss is the compound side effect, and the regulatory architecture has no answer for it.

02 Anthropic Patched Firefox for Hundreds of Millions of Users. The Pentagon Calls It a Security Threat

Anthropic's red team spent two weeks in February scanning nearly 6,000 C++ files in Firefox's codebase. Claude Opus 4.6 found its first vulnerability in twenty minutes. By the end, the team had submitted 112 unique reports to Mozilla. Fourteen were classified high-severity. Most were patched in Firefox 148.0, shipped to hundreds of millions of users.

That same stretch, the Defense Department formally labeled Anthropic a "supply-chain risk," escalating a months-long standoff over the company's acceptable use policies.

One verdict credits the company with finding memory flaws that could let attackers overwrite data with malicious content. The other treats the company itself as the threat.

The Firefox work was specific and verifiable. Anthropic's model autonomously identified use-after-free vulnerabilities in Firefox's JavaScript engine, then expanded to other browser components. Human researchers validated each finding in independent virtual machines. Mozilla required minimal test cases, detailed proofs-of-concept, and candidate patches before accepting submissions. The discovery rate exceeded that of any single month in 2025, and the high-severity finds amounted to roughly one-fifth of all high-severity Firefox vulnerabilities remediated that year.

The Pentagon's label is a different kind of judgment. It followed weeks of failed negotiations and public ultimatums over Anthropic's restrictions on military applications of its models. The designation, first reported by The Wall Street Journal, could trigger procurement restrictions across the defense supply chain and may end up in court.

What separates the two verdicts is the definition of "security" itself. Mozilla's version is technical: find the bug, write the patch, ship the fix. The Pentagon's is institutional: does this vendor's policies align with our operational requirements? Anthropic passed one test by producing concrete results. It failed the other without any claim that its technology is defective.

The model that found those fourteen high-severity bugs was also set to develop working exploits from them, at a cost of $4,000 in API credits. It succeeded twice. Anthropic noted the system was "much better at finding these bugs than it is at exploiting them." The Pentagon, apparently, isn't evaluating the bugs.

03 A 60-Year-Old Developer Picked Up Claude Code and Couldn't Stop Building

A 60-year-old Hacker News user posted last week that Claude Code had re-ignited their passion for programming. The thread drew nearly a thousand upvotes and over 800 comments, most from people recognizing their own experience in the story.

The responses painted a pattern. Retirees shipping side projects. Career-switchers building prototypes without bootcamps. Managers who'd stopped writing code years ago, now starting again. AI tools hadn't replaced their abilities. They had reactivated dormant ones.

That dynamic extends beyond hobbyists. Developer Yasin Taha argued in a recent blog post that "we might all be AI engineers now." The line between people who can code and people who can't is losing meaning, he wrote. AI handles implementation well enough for anyone with clear intent to ship working software. Product managers can produce prototypes; data analysts can write deployment scripts. The skill that matters is knowing what to build, not how to express it in a particular language.

OpenAI and Anthropic are racing to capture this expanding base. Anthropic offered six months of free Claude Max to open-source maintainers with 5,000-plus GitHub stars or one million-plus NPM downloads. OpenAI countered days later with a matching offer: six months of ChatGPT Pro, including Codex access, for core maintainers. Both subscriptions normally retail for $200/month. The pitch from each: embed our tools in developers' workflows before the other side does.

The targeting is deliberate. Open-source maintainers shape toolchains that thousands of downstream developers adopt. Win a maintainer's daily habits, win their ecosystem. Neither company disclosed application numbers, but the identical structure and pricing suggest each is benchmarking directly against the other. The real product isn't the free subscription. It's the switching cost that builds over six months of daily use.

Back on Hacker News, the 60-year-old poster wasn't tracking platform wars. They were building. That impulse, multiplied across people who'd written off coding as someone else's job, may reshape who writes software more than any model benchmark.

Netflix Acquires Ben Affleck's AI Production Startup InterPositive
Netflix bought InterPositive, Ben Affleck's AI company that builds tools for film and television production. All 16 of InterPositive's engineers will join Netflix. (theverge.com)

Apple Music Launches Voluntary AI Transparency Tags for Songs and Artwork
Apple now asks artists and record labels on Apple Music to tag content made with AI. The "Transparency Tags" metadata system covers four categories: track, composition, artwork, and music videos. Tagging is voluntary. (theverge.com)

Google Open-Sources SpeciesNet Model for Wildlife Conservation
Google released SpeciesNet, an open-source AI model that helps conservation researchers identify animal species from camera trap images worldwide. (blog.google)

Researchers Propose Interactive Benchmarks to Replace Saturated Static Tests
A new paper argues standard AI benchmarks fail due to saturation, subjectivity, and poor generalization. The proposed framework evaluates models on their ability to actively acquire information under budget constraints across two settings: interactive proofs and interactive retrieval. (huggingface.co)

DreamWorld Framework Jointly Models Physics and Geometry for Video Generation
A new paper presents DreamWorld, a video generation system that unifies multiple forms of world knowledge (physics, geometry, semantics) into a single model. Prior approaches incorporated only one type of world knowledge or relied on rigid alignment strategies. (huggingface.co)

DARE Retrieval Model Helps LLM Agents Pick the Right R Statistical Tools
Researchers released DARE, a retrieval model that matches LLM agents with appropriate R statistical functions by incorporating data distribution information. Standard retrieval methods ignore distribution characteristics, leading to poor tool selection for statistical workflows. (huggingface.co)

Google Explains Query Fan-Out Method Behind AI-Powered Visual Search
Google published a technical breakdown of how AI Mode in Search processes visual queries. The system splits image-based searches into multiple sub-queries to assemble answers from different sources. (blog.google)

OpenClaw Holds First Open-Source AI Conference in Manhattan
OpenClaw, an open-source AI community, hosted ClawCon in New York City. The multi-floor event drew developers and sponsors under lobster-themed branding. (theverge.com)