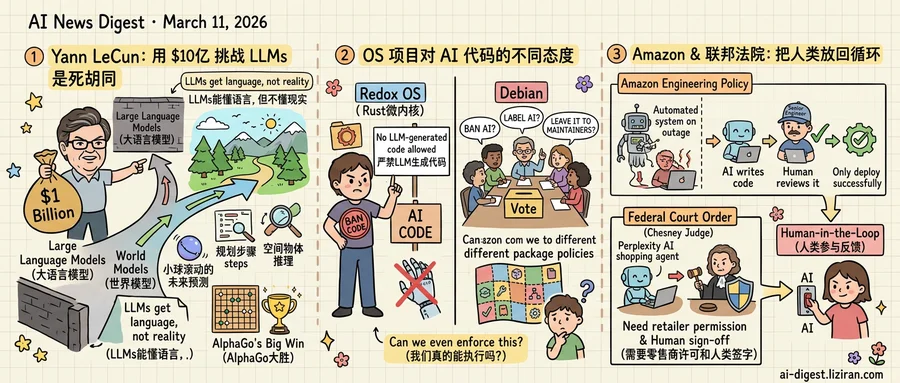

01 Yann LeCun Raised $1 Billion to Prove Large Language Models Are a Dead End

For years, Yann LeCun told anyone who would listen that large language models were headed nowhere interesting. Token prediction could produce fluent text, he argued, but would never yield machines that understood cause and effect, physics, or spatial reasoning. Coming from Meta's chief AI scientist and a Turing Award laureate, the argument was hard to dismiss. Easy to ignore, though: LLMs kept getting better, and LeCun had no alternative to show.

Now he does. LeCun raised $1 billion for a new venture building what he calls "world models": AI systems trained not on text but on representations of physical reality, according to Wired. The company aims to produce models that can predict what happens next in a scene, plan multi-step actions, and reason about objects in space. By dollar amount, it represents the largest direct challenge to the premise that scaling language models leads to general intelligence.

The bet is structurally familiar. Ten years ago this month, DeepMind's AlphaGo defeated Lee Sedol in a match most AI researchers expected the machine to lose. DeepMind had spent years on reinforcement learning when the field's momentum pointed elsewhere. The victory redirected billions in research funding and reframed what AI could do. Google's retrospective, published for the anniversary, traces a line from that Go match to AlphaFold's protein structure predictions and beyond.

LeCun's supporters see the same arc forming. But the analogy has a limit. Go offered a closed board, fixed rules, and a binary win condition. "Understanding the physical world" has none. LeCun's published research describes a Joint Embedding Predictive Architecture (JEPA) that learns abstract representations rather than pixel-level predictions. Promising results exist on video understanding benchmarks. No one has shown the approach working at the scale or generality that LLMs have already demonstrated in language.

What LeCun does have is a thesis that identifies a real gap. LLMs still hallucinate, still fail at basic spatial reasoning, still cannot reliably plan physical actions. Robotics labs, autonomous vehicle companies, and industrial automation firms all need AI that models physics, not prose. A billion dollars buys enough runway to find out whether world models fill that gap, or whether "world models" remains a label for a problem no one yet knows how to solve.

02 Redox OS Bans AI-Generated Code While Debian Can't Even Vote on It

Redox OS updated its contributor guidelines this week with a flat prohibition: no code produced by large language models will be accepted. Contributors must now sign a certificate of origin affirming that every line they submit is human-written. The Rust-based microkernel project, maintained by a small core team, made the call unilaterally.

That same week, Debian's developer community tried to address the same question. It failed. A proposed general resolution on AI-generated contributions stalled before reaching a vote, according to a report on LWN.net. The project couldn't agree on whether to ban AI code, label it, or leave the decision to individual package maintainers. Debian decides such questions by general resolution, a formal ballot open to roughly a thousand voting developers, and some outcomes require a supermajority. On a question this polarizing, that structure produced paralysis.

The split isn't about whether AI-generated code is good or bad. It's about who gets to decide. Redox OS operates under a benevolent-dictator model: its lead maintainer sets policy, contributors comply or leave. Debian runs on constitutional democracy, with formal voting procedures, amendment cycles, and a secretary who interprets procedural rules. One structure can move in a day. The other can spend months deciding whether to start deciding.

Both positions face the same enforcement problem. Hong Min-hee, writing on the erosion of copyleft, argued that AI models can now produce functional reimplementations of open-source code carrying no detectable trace of the original. If an LLM absorbs a GPL-licensed library and outputs equivalent logic in clean-room style, no certificate of origin will catch it. The contributor may not even know. Redox's ban assumes AI involvement is visible and declarable. That assumption gets weaker with every model generation.

Debian's indecision carries its own cost. Without a project-wide policy, individual maintainers set their own rules. Some Debian packages already accept AI-assisted contributions; others reject them. The result is a patchwork where the same distribution enforces different standards depending on which human reviews the merge request.

Neither approach solves the underlying problem. A ban that can't be verified and a debate that can't be concluded both leave the door open to exactly the outcome each side fears.

03 Amazon and a Federal Court Both Put Humans Back in the AI Loop

Amazon now requires senior engineers to approve AI-assisted code changes before deployment. The policy, reported by Ars Technica, followed service outages that the company traced to code generated or modified by AI tools. Automated systems had pushed changes into production without adequate human review, causing downtime. The fix was structural: a senior engineer must sign off before any AI-assisted modification goes live.

Days later, US District Judge Maxine Chesney ordered Perplexity to stop its AI shopping agents from placing orders on Amazon. The ruling stated that Amazon provided "strong evidence" that Perplexity's Comet browser accessed user accounts "without authorization" from the retailer. Perplexity's agents could browse and recommend products. They could not, the court ruled, complete a purchase autonomously.

One response is corporate policy triggered by system downtime. The other is a federal court order triggered by unauthorized account access. But the structural logic is identical: AI gained the ability to act on its own, and institutions responded by reinstalling a human gate. That convergence, across an engineering mandate and a judicial order in the same week, is not a coincidence.

Companies spent 2024 and 2025 racing to give AI agents more autonomy over code, web browsing, and financial transactions. The operating assumption was that automation scales by removing human bottlenecks. Both corrections this week reverse that assumption. When autonomous AI breaks something or oversteps its authority, the first institutional reflex is to insert a person back into the chain.

Amazon's case reveals the mechanism clearly. The outages did not stem from AI writing bad code in isolation. They resulted from AI-modified code reaching production through deployment pipelines that lacked human review gates. The sign-off policy targets the pipeline, not the model. Judge Chesney's order applies the same principle to commerce: Perplexity's agents were not banned from helping users shop, only from completing transactions without the retailer's permission.
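A pipeline-level review gate of the kind described here can be sketched in a few lines. This is a minimal illustration, not Amazon's actual tooling: the `Change` record, the `SENIOR_ENGINEERS` roster, and the `can_deploy` function are all hypothetical names, and the rule encoded is only the one reported above (an AI-assisted change needs a senior engineer's sign-off before it goes live).

```python
from dataclasses import dataclass, field


@dataclass
class Change:
    """A proposed production change (hypothetical model, not a real schema)."""
    change_id: str
    ai_assisted: bool
    approvals: set[str] = field(default_factory=set)


# Hypothetical roster of engineers allowed to sign off on AI-assisted changes.
SENIOR_ENGINEERS = frozenset({"alice", "bob"})


def can_deploy(change: Change, senior_engineers=SENIOR_ENGINEERS) -> bool:
    """Return True if the change may go live under the sign-off rule."""
    if not change.ai_assisted:
        # This sketch gates only AI-assisted changes; other review rules are out of scope.
        return True
    # At least one approver must be on the senior roster.
    return bool(change.approvals & senior_engineers)
```

The check sits in the deployment path and never inspects the code itself, which mirrors the point that the policy targets the pipeline rather than the model.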

Neither response questions whether AI should assist with the task. Both draw the line at AI completing it alone. The distinction between assistance and autonomy is where institutional guardrails are now being built.

OpenAI Drops Oracle from Stargate Data Center Expansion

OpenAI is walking away from expanding its Stargate data center project with Oracle. The split raises questions about Oracle's debt-fueled buildout strategy as AI infrastructure demands shift. (cnbc.com)

Iran Conflict Threatens Data Center Electricity Costs

Rising oil and gas prices from the spiraling Iran conflict are pushing up electricity costs for data centers. The Atlantic Council's Reed Blakemore warns the energy price impact could tighten margins across AI infrastructure operators. (theverge.com)

OpenAI Publishes IH-Challenge to Harden Models Against Prompt Injection

OpenAI released IH-Challenge, a training method that teaches models to prioritize trusted instructions over injected ones. The approach improves instruction hierarchy compliance, safety steerability, and resistance to prompt injection attacks across frontier models. (openai.com)

Google Claims State-of-the-Art Performance for Gemini in Sheets

Google launched new beta features putting Gemini directly into Google Sheets for creating, organizing, and editing spreadsheets via natural language. Users can describe tasks from basic formatting to multi-step data analysis. (blog.google)

Niantic's Pokémon Go Map Data Now Guides Delivery Robots

Niantic is licensing the spatial mapping data collected by millions of Pokémon Go players to give delivery robots precise, street-level navigation. The dataset offers inch-perfect 3D views of sidewalks, curbs, and obstacles that standard maps lack. (technologyreview.com)

Ford Launches AI-Powered Fleet Management Service

Ford announced Ford Pro AI, a service that analyzes commercial vehicle data (speed, seat belt use, engine health) and converts it into action items for fleet managers. The system layers generative AI on top of Ford's existing telematics platform. (theverge.com)

ChatGPT Adds Interactive Visual Explanations for Math and Science

OpenAI shipped a feature letting ChatGPT render interactive diagrams for math and science topics. Students can manipulate formulas and variables in real time rather than reading static text explanations. (openai.com)

Analysis Debunks $5,000-Per-User Cost Claim for Claude Code

A detailed breakdown by Martin Alderson challenges widely circulated estimates that Anthropic spends $5,000 per Claude Code user. The analysis reexamines the assumptions behind the viral figure. (martinalderson.com)

Anthropic Opens Sydney Office, Its Fourth in Asia-Pacific

Anthropic is establishing a Sydney office, expanding its Asia-Pacific presence to four locations. The move follows the company's recent expansions across the region. (anthropic.com)

HuggingFace Paper Explores How Far Unsupervised RLVR Can Scale LLM Training

A new study provides a taxonomy and experimental analysis of unsupervised reinforcement learning with verifiable rewards (URLVR), which derives training signals without ground-truth labels. The method uses model-intrinsic signals to push past the supervision bottleneck. (huggingface.co)