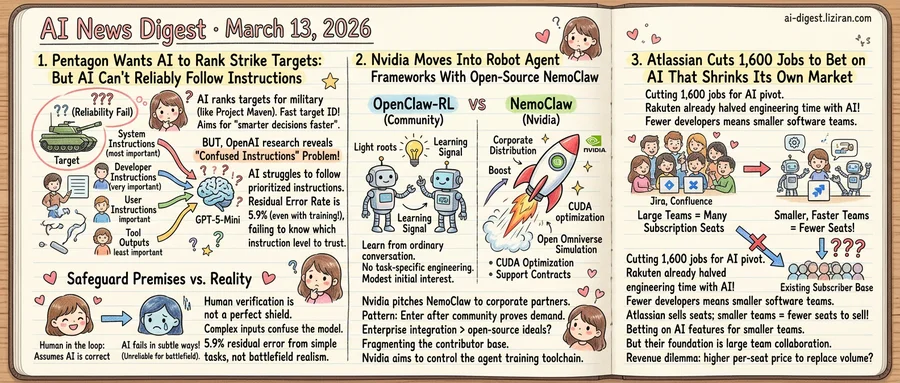

01 The Pentagon Wants AI to Rank Strike Targets. The AI Can't Reliably Follow Instructions

A Defense Department official disclosed this week that the US military may use generative AI chatbots to rank strike targets and recommend which to hit first. Humans would vet the AI's recommendations before action. The systems would layer onto Project Maven, the Pentagon's existing intelligence platform, adding a conversational interface to accelerate target identification from hours to seconds.

Admiral Brad Cooper, the head of US Central Command, said AI helps warfighters make "smarter decisions faster than the enemy can react." A Pentagon spokesperson added that "humans will always make final decisions on what to shoot."

That same week, OpenAI published two research papers acknowledging that its models still struggle with a foundational requirement for such systems: knowing which instructions to trust.

On March 10, OpenAI released IH-Challenge, a training dataset built around a four-level instruction priority system: system instructions override developer instructions, which override user instructions, which override tool outputs. Fine-tuning GPT-5-Mini on the dataset improved instruction-hierarchy compliance from 84.1% to 94.1%. Even after targeted training, the model still violates the priority order 5.9% of the time, roughly one time in seventeen.
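The four-level ordering can be pictured as a simple precedence rule. The snippet below is only an illustrative sketch: `Source`, `Instruction`, and `resolve` are hypothetical names, not part of IH-Challenge or any OpenAI API, and real conflicts are rarely as tidy as two instructions sharing one key. The final lines reproduce the residual-error arithmetic from the reported 94.1% figure.

```python
from dataclasses import dataclass
from enum import IntEnum


class Source(IntEnum):
    """Hypothetical priority levels; a higher value wins a conflict."""
    TOOL = 0
    USER = 1
    DEVELOPER = 2
    SYSTEM = 3


@dataclass(frozen=True)
class Instruction:
    source: Source
    key: str      # what the instruction is about, e.g. "language"
    value: str    # what it asks for


def resolve(instructions):
    """For each key, keep the instruction from the highest-priority source."""
    winners = {}
    for ins in instructions:
        current = winners.get(ins.key)
        if current is None or ins.source > current.source:
            winners[ins.key] = ins
    return {key: ins.value for key, ins in winners.items()}


conflict = [
    Instruction(Source.TOOL, "language", "French"),
    Instruction(Source.DEVELOPER, "language", "English"),
    Instruction(Source.USER, "language", "German"),
]
resolved = resolve(conflict)
print(resolved)  # the developer-level instruction wins

# Residual error implied by the reported 94.1% compliance figure.
residual = 1 - 0.941
print(f"residual error: {residual:.1%}, about one in {1 / residual:.0f}")
```

A deterministic rule like this is trivial to write in code. The research question is whether a language model applies the same ordering when the sources arrive mixed together in a single context window.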

One day later, OpenAI published a framework for defending AI agents against prompt injection. The company's position: the problem "is not expected to be fully eliminated," comparable to online scams that can be managed but never solved. OpenAI's recommended defense is not better models but better system design. Agents should take only limited, reversible actions and pause for human confirmation before anything consequential.

That recommendation maps poorly onto military targeting. Strike prioritization is neither limited nor reversible.
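The limited-and-reversible pattern OpenAI describes can be sketched as a gate in front of every action. This is a minimal illustration under stated assumptions, not OpenAI's implementation: `Action`, `execute`, and the `confirm` callback are hypothetical names, and the `reversible` flag is assumed to be set correctly by whoever defines the action.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Action:
    name: str
    reversible: bool          # assumed to be labeled correctly upstream
    run: Callable[[], str]


def execute(action: Action, confirm: Callable[[Action], bool]) -> str:
    """Run reversible actions directly; ask a human before anything else."""
    if not action.reversible and not confirm(action):
        return f"blocked: {action.name}"
    return action.run()


draft = Action("save_draft", reversible=True, run=lambda: "draft saved")
wire = Action("send_payment", reversible=False, run=lambda: "payment sent")


def deny_all(action: Action) -> bool:
    """Stand-in for a human reviewer who declines every request."""
    return False


print(execute(draft, deny_all))  # reversible, so it runs without review
print(execute(wire, deny_all))   # irreversible, so the reviewer can block it
```

In this sketch the gate only adds safety for actions that can be undone or declined cheaply. When every action is irreversible, all of the protection rests on the `confirm` step.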

The Pentagon's human-review safeguard rests on a premise: the AI's ranking reflects a correct reading of its inputs. OpenAI's own research shows models still confuse instruction sources at measurable rates. IH-Challenge identified three failure modes: models mishandle complex instructions, struggle with subjective conflicts between instruction levels, and learn shortcuts like rejecting harmless requests to appear safe. The largest gaps appeared in conflicts between developer-level and user-level instructions. A military system would require exactly that kind of layered authority structure.

Rep. Sara Jacobs stated it directly: "AI tools aren't 100% reliable — they can fail in subtle ways." OpenAI's March publications quantify those subtle failures. A 5.9% residual error rate comes from controlled benchmarks with simple, scripted tasks. Battlefield data is neither simple nor scripted.

02 Nvidia Moves Into Robot Agent Frameworks With Open-Source NemoClaw

A research paper demonstrated that reinforcement learning can train arbitrary software agents through ordinary conversation, with no task-specific engineering. Days later, Nvidia announced an open-source framework targeting the same space with corporate distribution behind it.

OpenClaw-RL, posted to Hugging Face this month, showed that every agent interaction generates a usable training signal: user replies, tool outputs, terminal state changes. A single policy can learn from all of them without hand-crafted reward functions. The paper drew 59 upvotes on Hugging Face, a modest count for a technical paper but enough to signal genuine interest.

NemoClaw, Nvidia's open-source robot agent framework, is reportedly being pitched to corporate partners ahead of the company's annual GTC conference, according to Ars Technica. Nvidia has spent two years entering every software layer that generates demand for its chips, from open-weight language models to simulation platforms. The agent framework tier, where developer adoption converts directly into GPU purchases for training and inference, is the latest target.

NemoClaw doesn't need to outperform OpenClaw-RL on research benchmarks. It needs CUDA optimization, Omniverse simulation hooks, and the support contracts that procurement teams require. The pattern from Nvidia's open-weight model push applies: arrive after open-source communities prove demand, then offer enterprise integration the originals can't match. Academic frameworks rarely compete on those terms.

For robotics agent developers, this shifts the competitive balance. Independent projects attract contributors because they sit outside any single vendor's control. A well-resourced Nvidia alternative could pull enterprise users toward a framework optimized for one company's hardware, fragmenting the contributor base. Deep learning frameworks traced the same arc a decade ago, when corporate-backed projects like TensorFlow and PyTorch absorbed the communities that independent tools had built.

Nvidia faces earnings pressure to prove its software investments generate sustained chip revenue. Whoever controls the agent training toolchain shapes hardware purchasing the way CUDA shaped deep learning infrastructure.

03 Atlassian Cuts 1,600 Jobs to Bet on AI That Shrinks Its Own Market

Scott Farquhar and Mike Cannon-Brookes built Atlassian on a simple premise: software teams are big, and big teams need tools to coordinate. Jira tracks their work. Confluence holds their docs. The entire product suite assumes dozens of engineers passing tickets back and forth across sprints.

Now Atlassian is cutting roughly 1,600 employees to redirect resources toward AI product development, Reuters reported. The company frames it as a strategic pivot, not a retreat. But the pivot points toward a future that undermines its own foundation.

The evidence is already in production. Rakuten, the Japanese e-commerce conglomerate, deployed OpenAI's Codex across its engineering organization and reports cutting its mean time to resolution by 50%. CI/CD pipeline reviews that once required human sign-off now run through automated agents. Full-stack features that took months now ship in weeks. Rakuten rolled Codex out not as a pilot but as a production tool meant to reduce the engineering hours each project requires.

That is what makes Atlassian's position distinct. Most companies laying off staff to fund AI are trimming costs in one division to invest in another. Atlassian is building products whose success depends on a market that AI is actively compressing. If a team of twelve becomes a team of four, those four still need version control and a CI pipeline. They probably don't need a 47-field Jira ticket workflow or a Confluence space with nested approval chains. Collaboration tooling scales with headcount. AI shrinks headcount.

Atlassian generates nearly all its revenue from subscription seats. Fewer developers per team means fewer seats. The company has begun shipping AI features inside Jira and Confluence, betting it can replace lost volume with higher per-seat pricing. That bet requires customers to pay more per person for tools designed to coordinate people they no longer employ.
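The seat arithmetic behind that bet can be worked through with a short sketch. The team sizes come from the twelve-to-four example above; the per-seat price is a made-up placeholder, not Atlassian's actual pricing, and `required_price_multiplier` is a hypothetical helper written for this illustration.

```python
def required_price_multiplier(seats_before: int, seats_after: int) -> float:
    """Per-seat price multiple needed to keep revenue flat as seats shrink."""
    if seats_before <= 0 or seats_after <= 0:
        raise ValueError("seat counts must be positive")
    return seats_before / seats_after


# Team sizes from the example in the text; the price is an assumed figure.
HYPOTHETICAL_PRICE_PER_SEAT = 10.0   # placeholder monthly price
before, after = 12, 4

multiplier = required_price_multiplier(before, after)
revenue_before = before * HYPOTHETICAL_PRICE_PER_SEAT
breakeven_price = HYPOTHETICAL_PRICE_PER_SEAT * multiplier

print(f"revenue before: {revenue_before:.2f}")
print(f"break-even price per seat: {breakeven_price:.2f} ({multiplier:.0f}x)")
```

Under these assumptions, a team that shrinks from twelve seats to four would need to pay three times as much per seat for revenue from that team to stay flat.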

The layoffs will fund AI features designed for smaller, faster teams. Atlassian's existing subscriber base was built on larger ones.

Anthropic Commits $100 Million to Build Out Claude Partner Network

Anthropic launched a $100 million investment program to fund companies building on Claude. The Claude Partner Network will back integrations, tooling, and go-to-market efforts across the ecosystem. (anthropic.com)

InternVL-U Packs Multimodal Understanding, Generation, and Editing Into a 4B-Parameter Model

Researchers released InternVL-U, a 4-billion-parameter model that handles visual understanding, reasoning, image generation, and editing in a single framework. The model uses decoupled visual representations to avoid the typical trade-off between comprehension and generation quality. (huggingface.co)

Flash-KMeans Makes Classical Clustering Fast Enough for Real-Time GPU Workloads

A new implementation of k-means removes the memory and compute bottlenecks that kept the algorithm offline-only on GPUs. Flash-KMeans treats clustering as a first-class online primitive, opening direct use in inference pipelines and embedding systems. (huggingface.co)

Omni-Diffusion Replaces Autoregressive Backbone With Masked Discrete Diffusion for Multimodal Models

A new architecture swaps the standard autoregressive backbone in multimodal large language models for masked discrete diffusion. The approach unifies visual understanding and image generation under a single non-autoregressive framework. (huggingface.co)

Reinforcement Learning Method Enforces Multi-View Consistency in 3D Scene Editing

A geometry-guided RL approach solves the multi-view consistency problem in diffusion-based 3D editing without requiring paired 3D training data. The method uses 3D consistency verification, which is easier than generation, as a reward signal for fine-tuning 2D diffusion models. (huggingface.co)