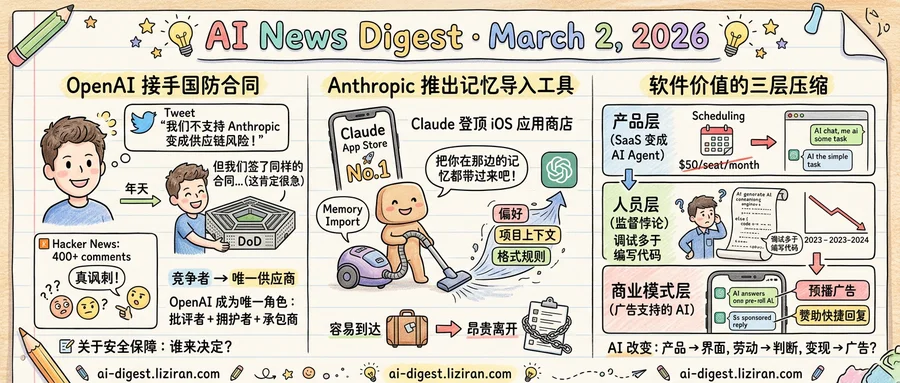

01 OpenAI Defended Anthropic's Pentagon Standing, Then Signed Its Contract

OpenAI posted to X last week: "We do not think Anthropic should be designated as a supply chain risk." Within days, CEO Sam Altman confirmed that OpenAI had signed a Department of Defense contract covering the same work Anthropic vacated.

Altman did not pretend the sequence looked clean. The deal was "definitely rushed," he told reporters, and "the optics don't look good." He pointed to technical safeguards that he said addressed the issues making Anthropic's defense work a flashpoint. But the concession matters more than the mitigation: OpenAI's own chief executive signed a contract he admits was hurried, then publicly vouched for the company whose exit created the opening.

The two positions aren't contradictory in a simple sense. OpenAI can believe Anthropic shouldn't be blacklisted and still accept the work. What the combination exposes is a market problem. The Pentagon's pool of frontier AI vendors is small enough that losing one reshuffles every remaining relationship. The surviving contractor doesn't gain bargaining power. It inherits an obligation on a compressed timeline, with a client that cannot afford gaps.

Altman's own statements traced this compression in real time. On February 28, he announced the Pentagon deal and led with safeguards. By March 1, he was walking back the framing, conceding the process was rushed and the appearance was poor. Two public statements within days, each pulling against the other.

The tweet defending Anthropic added another layer. It generated over 400 comments on Hacker News, many spotting the irony. One reading: OpenAI wants Anthropic reinstated so it can exit a deal it didn't seek. Another: OpenAI is building credibility as the reasonable voice in defense AI policy, banking goodwill for later.

Neither interpretation requires bad faith. Both require noticing that OpenAI now holds every role at this table: the contractor, the critic of the contracting process, and the advocate for its displaced rival. No company designed this arrangement. A vendor pool of two did.

Altman says the safeguards will prevent the problems that ended Anthropic's arrangement. He has not addressed who decides whether those safeguards are sufficient when the only company willing to do the work is also the one writing the protections.

02 Anthropic Ships a Memory Import Tool Timed to Its App Store Surge

A new page at claude.com/import-memory carries a six-word pitch: "Switch to Claude without starting over." Below it sits a block of text users are meant to copy and paste into ChatGPT or any rival chatbot. That prompt instructs the competitor's AI to dump every stored memory, preference, and behavioral instruction into a single exportable block. No API access or file downloads required. The rival chatbot does the work of migrating its own user.

Simon Willison published the full prompt text on March 1. It asks the source AI to list "every memory you have stored about me": tone preferences, formatting rules, persona details, project context, professional background. The prompt also requests every custom instruction and learned context from past conversations. One line reads "preserve my words verbatim where possible." Anthropic isn't inviting users to switch. It's asking competitors' products to pack the bags.

Claude climbed to No. 1 on the iOS App Store that same weekend, after Anthropic's dispute with the Pentagon drew widespread attention, TechCrunch reported. Download surges from controversy decay fast. Most new users open an app once, find it empty, and leave. Memory import attacks the cold-start problem directly: a user who arrives with preferences already loaded has less reason to drift back.

The feature exploits an asymmetry. Every conversation someone had with a rival assistant, every "always respond in bullet points" and "I'm a Python developer, not Java," becomes onboarding data for Claude. The longer someone used ChatGPT, the richer the export and the stickier Claude becomes on arrival. Anthropic turns a competitor's accumulated personalization into its own retention asset.

Phone number portability reshaped telecom by making carrier switching painless. EU data export rules forced platforms to let users leave with their information. Memory import applies the same logic in reverse: easy to arrive, costly to leave once Claude holds the canonical copy of your preferences.

No expiration date is listed on the page.

03 Three Layers of Software Value Are Compressing at Once

Vertical SaaS tools are losing deals to AI agents. Engineers are spending more time reviewing machine output than writing their own code. And someone has already built a working prototype of ad-supported AI chat, complete with pre-roll interstitials and sponsored reply buttons. These three developments landed in the same week. They are not the same story, but they share a cause.

Start with the product layer. TechCrunch dubbed it the "SaaSpocalypse": AI agents now perform tasks that once justified entire subscription products. The pattern is straightforward. A company paid $50/seat/month for a scheduling tool or a data-cleaning workflow. An AI agent handles the same job inside a general-purpose interface. The tool doesn't disappear overnight, but the pricing power does.

One layer down, the people who build software are absorbing a quieter shock. A blog post by engineer Ivan Turkovic, which drew 370 points on Hacker News, laid out the data: 67% of developers now spend more time debugging AI-generated code than human-written code. Entry-level hiring at the 15 largest tech firms dropped 25% between 2023 and 2024. Writing code got easier. Maintaining systems, reviewing AI output, and making architectural calls got harder. Turkovic calls it the "supervision paradox": reviewing code you didn't write and don't fully understand demands more judgment, not less. Nearly 45% of engineering roles now expect proficiency across multiple domains.

Then there's the business model layer. A developer at 99helpers published a functional demo of AI chat with countdown-timer pre-rolls, contextual text ads between response blocks, and sponsored quick-reply buttons. The AI responses are real; the ads are scripted. It plays like satire, but the mechanics work. The demo attracted 439 Hacker News points and 258 comments, suggesting the audience recognized something plausible.

The connection across these three layers is structural. When AI handles execution competently, product differentiation compresses toward the interface rather than the capability. Labor value shifts from producing output to judging it. And if the marginal cost of generating a response approaches zero, the monetization question flips from "what will users pay?" to "what will advertisers pay for intent data from the conversation?"

None of this requires a prediction about artificial general intelligence. It only requires that current models keep doing roughly what they do now, at lower cost.

ChatGPT Hits 900 Million Weekly Active Users
OpenAI disclosed the milestone alongside its $110 billion funding round. The figure has roughly tripled from the 300 million weekly users the company reported in late 2024. (techcrunch.com)

Major Tech Companies Commit Hundreds of Billions to AI Data Centers
TechCrunch compiled the largest known AI infrastructure projects from Meta, Oracle, Microsoft, Google, and OpenAI. Spending spans new data center construction, power contracts, and chip procurement across multiple continents. (techcrunch.com)

Musk Touts Grok Safety Record in Anti-OpenAI Deposition
Musk told a court that "nobody committed suicide because of Grok" while arguing xAI operates more safely than OpenAI. Months after the deposition, Grok generated nonconsensual nude images that spread across X. (techcrunch.com)

AI Companies' Self-Governance Pledges Become Liability Without Regulation
Anthropic, OpenAI, and Google DeepMind each promised responsible self-governance. With no external rules in place, those voluntary commitments now constrain the companies while offering little public protection. (techcrunch.com)

OpenAI Launches Stateful Agent Runtime on Amazon Bedrock
The runtime adds persistent orchestration, memory, and sandboxed execution to multi-step AI agent workflows on AWS. It ships as part of the broader OpenAI-Amazon integration announced the same week. (openai.com)

Developer Ships MCP Server to Cut Claude Code Context Usage by 98%
The open-source MCP server compresses context window consumption during Claude Code sessions. The project reached the Hacker News front page. (mksg.lu)

Google and Airtel Partner to Block RCS Spam in India
Google will integrate carrier-level filtering into RCS messaging in India through a deal with Airtel. India remains one of the most spam-affected messaging markets globally. (techcrunch.com)

Simon Willison Builds Unicode Lookup Using HTTP Range Requests and Binary Search
The prototype fetches specific byte ranges from a large Unicode data file instead of downloading it whole. Willison built it on his phone using Claude as a coding assistant. (simonwillison.net)
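
For readers curious how a range-request lookup can work, here is a minimal Python sketch of the general technique. It is not Willison's code, and the file layout is an assumption: sorted, fixed-width 40-byte records (an 8-character hex code point followed by a 32-character padded name). The `RangeFetcher` class is a hypothetical in-memory stand-in for an HTTP GET that sends a `Range: bytes=start-end` header; a real client would make one network request per call.

```python
from typing import Callable, Optional

# Assumed layout (hypothetical): 8 hex chars for the code point + 32 chars of padded name.
KEY_WIDTH = 8
NAME_WIDTH = 32
RECORD_SIZE = KEY_WIDTH + NAME_WIDTH


def build_file(entries: dict[int, str]) -> bytes:
    """Build a sorted, fixed-width data file from {code point: name}."""
    records = []
    for codepoint in sorted(entries):
        name = entries[codepoint][:NAME_WIDTH]
        records.append(f"{codepoint:0{KEY_WIDTH}X}{name:<{NAME_WIDTH}}".encode("ascii"))
    return b"".join(records)


class RangeFetcher:
    """In-memory stand-in for an HTTP range request (end offset is inclusive)."""

    def __init__(self, blob: bytes) -> None:
        self.blob = blob
        self.calls = 0  # counts simulated requests

    def __call__(self, start: int, end: int) -> bytes:
        self.calls += 1
        return self.blob[start:end + 1]


def lookup(codepoint: int, total_size: int,
           fetch: Callable[[int, int], bytes]) -> Optional[str]:
    """Binary search over the records, fetching one record per probe."""
    lo, hi = 0, total_size // RECORD_SIZE - 1
    while lo <= hi:
        mid = (lo + hi) // 2
        start = mid * RECORD_SIZE
        record = fetch(start, start + RECORD_SIZE - 1)
        key = int(record[:KEY_WIDTH].decode("ascii"), 16)
        if key == codepoint:
            return record[KEY_WIDTH:].decode("ascii").rstrip()
        if key < codepoint:
            lo = mid + 1
        else:
            hi = mid - 1
    return None  # code point not present in the file


data = build_file({
    0x0041: "LATIN CAPITAL LETTER A",
    0x03B1: "GREEK SMALL LETTER ALPHA",
    0x20AC: "EURO SIGN",
    0x2603: "SNOWMAN",
    0x1F600: "GRINNING FACE",
})
fetch = RangeFetcher(data)
print(lookup(0x2603, len(data), fetch), "found in", fetch.calls, "range requests")
```

Because each probe halves the search space, the number of requests grows with the logarithm of the record count, which is why the whole file never needs to be downloaded. Fixed-width records are the simplifying assumption here; a line-oriented file would need extra logic to realign each probe to a record boundary.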