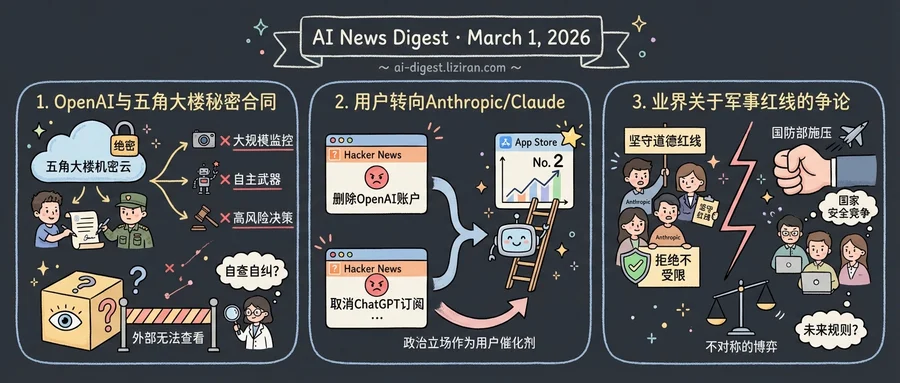

01 OpenAI Publishes Its Pentagon Contract Terms. No One Outside the Classified Network Can Check Them

Sam Altman announced late on a Friday night that OpenAI had signed a deal to deploy its models inside the Department of War's classified cloud network. He posted the news on X and linked to a company blog post listing the agreement's safety provisions. The timing was not subtle: hours earlier, Defense Secretary Pete Hegseth had designated rival Anthropic a "supply chain risk to national security."

OpenAI says the contract contains three red lines. Its technology cannot be used for mass domestic surveillance, cannot direct autonomous weapons systems, and cannot power high-stakes automated decisions like social credit systems. The company says it retains "full discretion" over its safety stack, will confine models to cloud environments rather than edge devices, and will station cleared engineers and safety researchers alongside DoW personnel. Its deployment architecture, OpenAI claims, will let the company "independently verify that the red lines are not crossed, including running and updating classifiers."

That verification happens inside a classified network. By definition, classified environments restrict who can see what occurs within them. OpenAI is promising to police itself in a space where no external researcher, journalist, or congressional staffer can observe the policing. The company describes a "multi-layered" enforcement approach: contractual protections, technical safeguards, and on-site personnel. Each layer depends on OpenAI's own judgment about what constitutes a violation.

Days before the deal, more than 60 OpenAI employees and 300 Google employees had signed an open letter urging their employers to back Anthropic's stance against unrestricted military AI use. "They're trying to divide each company with fear that the other will give in," the letter stated. Altman responded by telling employees that OpenAI shares "the same red lines" as Anthropic. The Pentagon, which had pressured Anthropic to allow its models to be used for "all lawful purposes," apparently accepted those same restrictions from OpenAI without public dispute.

OpenAI also claims its agreement "has more guardrails than any previous agreement for classified AI deployments, including Anthropic's." That claim is unverifiable for the same reason the safeguards are: the terms operate under classification. The public gets a blog post. The classified network gets the models.

Altman framed the deal as proof that safety and national security work can coexist. The Pentagon framed it by blacklisting the company that refused to bend. Both statements can be true at once. Neither answers the question OpenAI's own employees raised.

02 "Delete Your OpenAI Account" Tops Hacker News as Claude Hits App Store No. 2

Two Hacker News posts hit the front page in the same week: "How to delete your OpenAI account" and "How do I cancel my ChatGPT subscription?" The first drew 1,799 points and 341 comments; the second, 1,010 points and 238 comments. Neither post contained original reporting or analysis. They were links to OpenAI's own help pages.

The timing is the tell. Both posts surged during the same week that Anthropic's public dispute with the Pentagon dominated tech news. In that same window, Claude, Anthropic's chatbot, climbed to No. 2 in the iOS App Store, according to TechCrunch. It trailed only ChatGPT among AI assistants.

Each signal alone is easy to dismiss. App Store rankings fluctuate. Hacker News crowds skew libertarian and contrarian. Help-page links go viral for all sorts of reasons. But three indicators pointing in the same direction in the same week are harder to explain away. Users appear to be rewarding Anthropic for the same stance that put it at odds with the Defense Department.

This would mark something new in the AI industry: political positioning as a consumer acquisition channel. Tech companies have long made vague gestures toward ethics in marketing copy and corporate blog posts. Rarely has a specific policy dispute with the federal government coincided with measurable user migration toward the company that said no.

The pattern has its limits. Hacker News users are not representative of the broader consumer market. App Store rank captures download velocity, not retention or revenue. Anthropic has not disclosed whether the surge translated into paid subscriptions. Claude's steady product improvements over recent months provide an alternative explanation that has nothing to do with Pentagon politics.

Still, the convergence is hard to dismiss. OpenAI's own support pages became protest vehicles. Anthropic gained App Store ground without launching a new product or cutting prices. The catalyst, by timing at least, was a government contract dispute.

03 The AI Industry Confronts Its First Collective Fight Over Military Red Lines

When the Pentagon told Anthropic to accept "any lawful use" of its AI models or risk a supply chain designation, the pressure didn't stay inside one company's boardroom. It radiated across the entire AI industry, forcing engineers, researchers, and executives at rival labs to confront a question they had mostly avoided: where should the line be?

The Verge's reporting captures the moment that question became unavoidable. Tech workers across multiple AI companies began debating, publicly and privately, whether firms can or should refuse military contracts permitting mass surveillance of Americans or fully autonomous lethal weapons. The phrase "unsupervised killer robots" appeared not in a sci-fi pitch but in statements from employees watching the standoff unfold in real time.

Positions split along familiar fault lines. Some workers argued that refusing Pentagon terms is both a moral and practical obligation: once a model deploys without guardrails, the developer loses control over downstream use. Others countered that if American AI companies won't serve the military, Chinese competitors will. Both sides claimed national security as their justification.

What separates this from past tech-military fights is scale. When Google employees protested Project Maven in 2018, one company pulled out of one contract. Foundation models change the math. A single policy decision about usage restrictions ripples across thousands of downstream applications simultaneously.

The standoff also exposed a structural asymmetry. A "supply chain risk" designation from the Pentagon can cost hundreds of billions of dollars in lost contracts. AI companies have no equivalent countermeasure. The negotiating table tilts one direction.

Anthropic has held its position on restricting autonomous weapons and mass surveillance. Other labs have stayed quieter, watching whether resistance carries a survivable cost. The workers who spoke up did so knowing the answer will shape not just their companies but the default terms under which AI meets military power for years to come.

OpenAI Fires Employee for Trading on Prediction Markets with Inside Knowledge

OpenAI terminated a staffer who used confidential company information to place bets on platforms including Polymarket and Kalshi. The employee violated an internal policy banning the use of inside knowledge for personal gain. OpenAI did not name the individual. Polymarket hosts active wagers on OpenAI product launches and IPO timing. (wired.com)

Anthropic Gives Open-Source Maintainers Six Months of Free Claude Max

Anthropic launched a program offering six months of Claude Max 20x (valued at $1,200) to open-source maintainers and core contributors. Eligibility requires maintaining a public repo with 5,000+ GitHub stars or 1M+ monthly NPM downloads. The program caps enrollment at 10,000 developers. (claude.com)

Google Opens Nano Banana 2 Image Model to Developers

Google released Nano Banana 2, branded as Gemini 3.1 Flash Image, for image generation and editing via its developer tools. The model targets Pro-level output quality at Flash-tier cost and speed. (blog.google)

OpenAI Adds Parental Controls and Distress Detection to ChatGPT

OpenAI shipped parental controls, a trusted contacts feature, and improved detection of users in distress as part of its mental health safety work. The update also addresses ongoing litigation over ChatGPT interactions with minors. (openai.com)

Simon Willison Introduces "Cognitive Debt" Framework for Agent-Written Code

Simon Willison argues that code written by AI agents creates cognitive debt when developers lose track of how it works. He proposes interactive explanations (agent-generated walkthroughs of their own output) as a mitigation pattern. (simonwillison.net)

Google and Massachusetts Launch Free Statewide AI Training

Google partnered with the Massachusetts AI Hub to offer no-cost AI training to all state residents. The program uses Google's existing training curriculum. (blog.google)

Researchers Propose Hybrid Parallelism to Accelerate Diffusion Model Inference

A new framework combines conditional guidance scheduling with data-pipeline parallelism to speed up diffusion model inference across multiple GPUs. Current distributed methods suffer from visible generation artifacts. The hybrid approach reduces these while scaling more proportionally with added hardware. (huggingface.co)

AgentDropoutV2 Prunes Faulty Messages in Multi-Agent Systems at Test Time

AgentDropoutV2 acts as a firewall between agents in multi-agent systems, intercepting and either correcting or rejecting erroneous messages before they cascade. The framework works without retraining and operates purely at inference time. (huggingface.co)