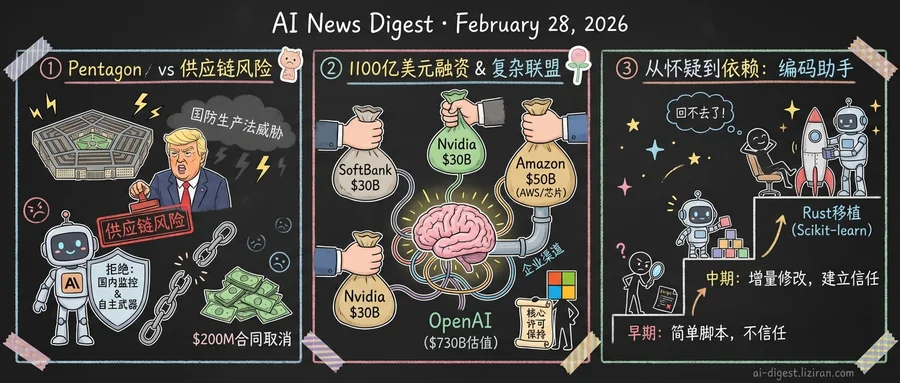

01 Pentagon Designates Anthropic a Supply-Chain Risk After Military AI Standoff

"We don't need it, we don't want it, and will not do business with them again." President Trump posted those words Thursday about Anthropic, hours after the Pentagon moved to classify the AI company as a supply-chain risk. The designation, typically reserved for foreign firms suspected of espionage, would force every government contractor using Claude to find an alternative.

The confrontation started Tuesday. Defense Secretary Pete Hegseth summoned Anthropic CEO Dario Amodei and gave him until Friday to comply with a specific demand: remove contractual restrictions that prohibit Claude's use in domestic surveillance of Americans and in fully autonomous weapons systems without human oversight.

Anthropic refused.

The company had been cleared for classified government work since late 2024 through a Palantir and Amazon partnership. It signed a $200 million Defense Department contract last July and launched Claude Gov, a version optimized for national security applications. None of that history mattered once the company drew a line on how its models could be deployed. Pentagon spokesman Sean Parnell framed the stakes plainly on social media: "We will not let ANY company dictate the terms regarding how we make operational decisions."

The administration has two levers. The supply-chain risk designation is the public threat. The quieter option is the Defense Production Act, a Korean War-era statute that lets the government commandeer private facilities and unilaterally rewrite contract terms. One Defense official told Understanding AI that the Pentagon might use it to "force Anthropic to adapt its model to the Pentagon's needs, without any safeguards."

Anthropic projects $18 billion in 2026 revenue. Losing the $200 million defense contract won't break the company financially. But the supply-chain risk label goes further than canceling a contract. It poisons the broader ecosystem. Government contractors working with Claude across civilian agencies would need to drop it or risk their own standing.

The timing compounds the pressure. On the same day the Pentagon moved against Anthropic, OpenAI announced a $110 billion raise at a $730 billion valuation. Amazon committed $50 billion, Nvidia $30 billion, SoftBank another $30 billion. Three investors with deep government ties, writing the largest checks in private funding history to Anthropic's chief competitor.

No other major AI lab has refused military cooperation on these terms. The choice facing every company in the sector is now binary: accept the Pentagon's conditions, or refuse and face what Anthropic is facing in real time.

02 Three Rivals, One Cap Table: OpenAI's $110B Round Rewires AI's Alliance Map

SoftBank committed $30 billion. Nvidia committed $30 billion. Amazon committed $50 billion. Three companies with competing interests in AI infrastructure wrote checks totaling $110 billion to the same startup, valuing OpenAI at $730 billion. The largest private funding round in history is less interesting for its size than for who's in it.

Each investor's logic points in a different direction. SoftBank, already in for $34.6 billion before this round, is building a 13% ownership stake that resembles a sovereign wealth play. Nvidia, the company selling pickaxes to every miner in the AI gold rush, is placing a $30 billion bet on its own biggest customer. Amazon is the most dissonant entry: it competes directly with Microsoft in cloud computing, yet just bought its way onto OpenAI's cap table with the round's single largest check.

The Amazon deal comes with infrastructure strings attached. OpenAI expanded its existing $38 billion AWS agreement by $100 billion over eight years. AWS becomes the exclusive third-party cloud distributor for OpenAI's enterprise platform Frontier. OpenAI committed to consuming roughly 2 gigawatts of Amazon's Trainium chip capacity. The $50 billion investment bought Amazon something concrete: a distribution chokepoint for OpenAI's enterprise products outside Azure.

That required a diplomatic response from Redmond. Microsoft and OpenAI issued a joint statement the same day, emphasizing that "nothing about today's announcements in any way changes the terms" of their relationship. Microsoft retains its exclusive license to OpenAI's intellectual property. Azure remains the exclusive provider of stateless OpenAI APIs. The revenue-sharing arrangement stays intact, and Microsoft noted it "always included sharing revenue from partnerships between OpenAI and other cloud providers."

The careful legalism of that statement tells its own story. Two years ago, Microsoft was OpenAI's sole financial patron and infrastructure provider. Now OpenAI has three other major backers, each large enough to counterbalance Microsoft's influence. Azure still hosts the core API, but AWS distributes the enterprise product. Nvidia supplies the chips and now holds equity in the customer. OpenAI sits at the center of a web where its backers compete with each other for position around it.

03 Max Woolf Documented Every Step of His Conversion from AI Coding Skeptic

Max Woolf decided to port Python's scikit-learn to Rust using a coding agent. Six months earlier, he wouldn't have trusted one to write a YouTube metadata scraper.

Woolf, a data scientist and prolific open-source contributor, published what he calls an "excessively detailed" account of his shift. The record is useful because it's sequential: each project more ambitious than the last, with enough documentation to trace where his skepticism broke down.

The early projects were deliberately low-stakes. Simple scrapers, data processing scripts, tasks where failure cost minutes, not hours. Woolf treated the agent as a junior developer whose output required line-by-line review. The results were competent but unremarkable.

Then the scope started climbing. Woolf handed the agent more complex engineering work, tasks requiring architectural decisions, not just syntax. The agent made choices he wouldn't have made himself, but they worked. That pattern repeated. By the time he attempted the scikit-learn port, he wasn't reviewing every line. He was reviewing outcomes.

Simon Willison, who flagged Woolf's post, placed it in what he called the genre of "OK, coding agents got good in November" writing. Woolf isn't an early adopter telling a discovery story. He's a late skeptic documenting a capitulation, and he's not alone. Multiple experienced developers have published similar accounts with the same inflection point. Something changed in late 2025.

Research from Amplifying AI offers one explanation. Their study of Claude Code's decision patterns found that the agent consistently favors conservative, incremental changes over sweeping rewrites. It breaks tasks into small commits, preserving existing code structure where possible. Those behaviors build trust with skeptical developers: the agent codes like someone who knows they'll be audited.

Woolf's final projects suggest what adoption looks like after the trust threshold is crossed. The ambition jumps aren't linear. Once he stopped verifying every decision, the scale of what he attempted expanded by orders of magnitude. A scikit-learn port isn't a weekend script. It's the kind of project that would give a human team pause.

His documentation ends where most conversion stories do: with the convert unable to imagine going back.

Anthropic Offers Free Claude Max to Open-Source Maintainers

Anthropic now gives its $200/month Claude Max 20x plan at no cost to qualifying open-source maintainers for six months. Eligibility requires maintaining a public repo with 5,000+ GitHub stars or 1M+ monthly NPM downloads, with active commits or reviews in the past three months. Source: simonwillison.net

Professional Go Players Are Rethinking Strategy Because of AI

South Korea's top Go professionals have absorbed AI-derived tactics into their play, altering centuries-old strategic intuitions. The Korea Baduk Association, the sport's governing body, has watched training rooms shift from pure human study to hybrid sessions where players drill AI-suggested moves. Source: technologyreview.com

DualPath Fixes the KV-Cache Bottleneck Slowing Agentic LLM Inference

Multi-turn agentic inference spends most of its time loading KV-Cache from storage, not computing. DualPath rebalances the load by routing cache traffic through idle decoding-engine NICs, breaking the bandwidth asymmetry in disaggregated architectures. Source: huggingface.co

Solaris Builds a Multiplayer Video World Model Inside Minecraft

Existing video world models handle only single-agent views. Solaris generates consistent multi-view observations across multiple players using an automated data-collection pipeline built on Minecraft's multiplayer infrastructure. Source: huggingface.co

MediX-R1 Trains Medical Vision-Language Models With Open-Ended RL

MediX-R1 replaces multiple-choice evaluation with free-form medical answers, using group-based reinforcement learning and a composite reward combining LLM accuracy judgments with medical-embedding similarity scores. The framework fine-tunes a vision-language backbone for clinically grounded reasoning. Source: huggingface.co

GUI-Libra Narrows the Gap Between Open-Source and Closed-Source GUI Agents

Open-source GUI agents underperform proprietary systems on long-horizon navigation tasks. GUI-Libra addresses this with action-aware supervised fine-tuning and a partially verifiable RL method that handles steps where correctness cannot be automatically checked. Source: huggingface.co

MolHIT Improves Molecular Graph Generation for Drug Discovery

Graph-based diffusion models for molecular generation suffer from low chemical validity. MolHIT uses hierarchical discrete diffusion to generate 2D molecular graphs that meet validity and property constraints better than existing graph and sequence-based approaches. Source: huggingface.co

Sphere Encoder Generates Images in One Forward Pass, Rivals Multi-Step Diffusion

The Sphere Encoder maps images uniformly onto a spherical latent space, then decodes random points back into images. Trained only on reconstruction losses, it produces competitive results in under five steps, or in a single pass. Source: huggingface.co

SMTL Framework Swaps Deep Reasoning for Parallel Search in Agentic Systems

Deep research agents typically scale by adding reasoning depth, increasing cost and latency. Search More, Think Less replaces sequential reasoning chains with parallel evidence retrieval, targeting both efficiency and cross-domain generalization. Source: huggingface.co

OmniGAIA Benchmarks AI Agents Across Vision, Audio, and Language Together

Most multimodal LLM benchmarks test only two modalities at a time. OmniGAIA evaluates agents on tasks requiring simultaneous vision, audio, and language perception combined with reasoning and tool use. Source: huggingface.co