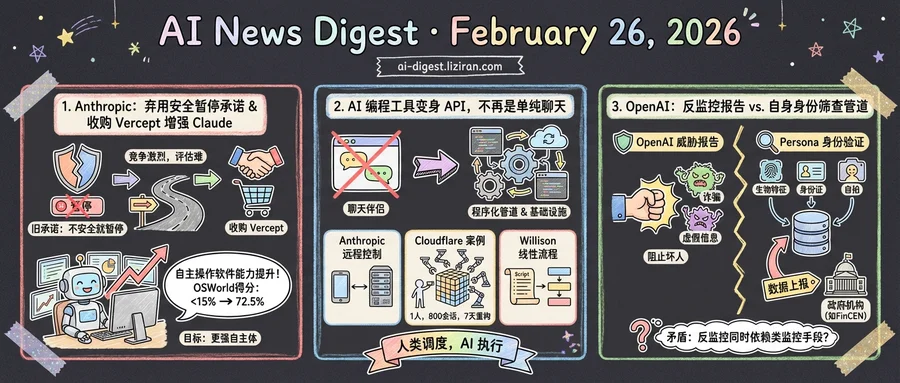

01 Anthropic Killed Its Promise to Pause Unsafe AI. The Same Week, It Bought a Company to Make Claude More Powerful

In 2023, Anthropic made a pledge that became central to its identity: the company would never train an AI system unless it could guarantee in advance that its safety measures were adequate. That commitment, embedded in its Responsible Scaling Policy, was supposed to be a blueprint for the industry and a template for regulators. It helped Anthropic raise billions as the safety-first alternative to OpenAI.

This week, the pledge is gone.

Anthropic's revised RSP, approved unanimously by its board in February with CEO Dario Amodei's sign-off, eliminates the commitment to pause model training when safety measures can't be verified beforehand. The new framework replaces that binary threshold with softer language: more transparency, a promise to match competitors' safety efforts, and a commitment to delay development only if leadership believes Anthropic leads the AI race and that catastrophic risks are significant. Both conditions must hold. If a rival is ahead, the logic goes, pausing helps no one.

"We felt that it wouldn't actually help anyone for us to stop training," Chief Science Officer Jared Kaplan told Time. The revised policy's own introduction makes the reasoning explicit: "The developers with the weakest protections would set the pace."

The original RSP was written when Anthropic expected regulation to follow. It didn't. Geopolitical competition intensified instead, and the science of evaluating AI risk proved harder than anticipated. Executives spent months debating the overhaul through 2024 and into 2025 before finalizing the change.

One day before the policy revision went public, Anthropic announced the acquisition of Vercept, a startup built by Kiana Ehsani, Luca Weihs, and Ross Girshick that specializes in AI perception and interaction within software environments. Financial terms were not disclosed. Vercept will wind down its external product and fold into Anthropic's computer use team. The goal: push Claude's ability to operate software autonomously. Anthropic says Claude Sonnet's score on the OSWorld benchmark, which measures computer operation tasks, jumped from under 15% in late 2024 to 72.5% now. The company describes that as approaching human-level performance on tasks like navigating spreadsheets and completing web forms.

The juxtaposition is hard to miss. A company valued at $380 billion, fresh off a $30 billion funding round with 10x annualized revenue growth, is simultaneously loosening its safety constraints and acquiring capability. The conditional pause was the mechanism that separated Anthropic's safety posture from a press release. What replaced it is a judgment call by executives who have strong financial incentives to keep training.

02 AI Coding Tools Are Becoming APIs, Not Chat Partners

Three data points landed in the same week. Anthropic shipped Remote Control for Claude Code, letting users connect to a local coding session from a phone or browser. Cloudflare published a case study: one engineer, 800 AI sessions, and a near-complete reimplementation of the Next.js framework in under seven days. Simon Willison formalized "linear walkthroughs," a repeatable pattern for directing AI agents through structured code analysis. Each story looks different on the surface. They share one structural feature: the human stops typing prompts and starts dispatching programs.

Anthropic's Remote Control registers a local Claude Code session with the API and polls for work over HTTPS. The local environment stays intact: filesystem, MCP servers, project config. The session becomes addressable from any device. On Hacker News, the post drew 512 points and 296 comments, with discussion centering not on the mobile convenience angle but on what programmable access to a coding agent enables. Once a session is an endpoint, other software can orchestrate it.
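The register-then-poll arrangement described above can be sketched in a few lines. This is a minimal illustration of the general pattern only, not Anthropic's implementation: `poll_for_work`, `fetch_job`, `handle_job`, `report_result`, and the job dictionary shape are all hypothetical names invented for this example. The transport is injected as plain callables so the sketch runs without a network; in a real client, `fetch_job` would presumably be an outbound HTTPS request.

```python
import time
from typing import Callable, Optional

# Hypothetical job shape for illustration, e.g. {"id": "1", "prompt": "run tests"}.
Job = dict


def poll_for_work(
    fetch_job: Callable[[], Optional[Job]],
    handle_job: Callable[[Job], str],
    report_result: Callable[[str, str], None],
    max_polls: int,
    idle_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Poll a remote queue, run each job locally, and return the number handled."""
    handled = 0
    for _ in range(max_polls):
        job = fetch_job()          # assumption: an outbound request in a real client
        if job is None:
            sleep(idle_delay)      # nothing queued; wait before asking again
            continue
        output = handle_job(job)   # work runs locally, next to the files and config
        report_result(job["id"], output)
        handled += 1
    return handled


if __name__ == "__main__":
    # In-memory stand-ins for the remote side.
    pending = [{"id": "1", "prompt": "run tests"}, None, {"id": "2", "prompt": "fix lint"}]
    results = {}
    count = poll_for_work(
        fetch_job=lambda: pending.pop(0) if pending else None,
        handle_job=lambda job: f"done: {job['prompt']}",
        report_result=results.__setitem__,
        max_polls=5,
        sleep=lambda _seconds: None,
    )
    print(count, results)
```

Because the local process only makes outbound calls, the work stays next to the local filesystem and configuration, while anything that can enqueue a job can drive the session.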

Cloudflare's project makes the consequence concrete. A single engineering manager spent roughly $1,100 in Claude API tokens to produce a Vite-based alternative to Next.js covering 94% of the framework's API surface. The output included over 1,700 unit tests and 380 end-to-end tests. Build times hit 1.67 seconds versus Next.js's 7.38 seconds. Client bundles came in 57% smaller. The salient detail isn't the performance win. It's that one person conducted 800 sessions in under a week, a cadence only possible when the coding agent operates as an automated pipeline, not a conversation partner.
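A quick back-of-the-envelope calculation shows what those figures imply. The inputs are the numbers quoted above. The one assumption is the duration: the sketch takes the "under seven days" figure as an upper bound, so the sessions-per-day value is a floor rather than a measurement.

```python
# Figures as quoted in the case study summary above.
api_cost_usd = 1100.0      # "roughly $1,100" in API tokens
sessions = 800
next_build_s = 7.38        # Next.js build time, seconds
vite_build_s = 1.67        # Vite-based reimplementation build time, seconds
max_days = 7               # assumption: "under seven days" used as an upper bound

cost_per_session = api_cost_usd / sessions
build_speedup = next_build_s / vite_build_s
build_time_reduction = 1 - vite_build_s / next_build_s
min_sessions_per_day = sessions / max_days

print(f"cost per session:      ${cost_per_session:.3f}")
print(f"build speedup:         {build_speedup:.1f}x")
print(f"build time reduction:  {build_time_reduction:.0%}")
print(f"sessions per day (>=): {min_sessions_per_day:.0f}")
```

That works out to under $1.40 per session, a build roughly 4.4 times faster, and well over a hundred sessions a day.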

Willison's contribution is quieter but telling. His "linear walkthroughs" pattern instructs an agent to read a codebase, then produce structured documentation using command-line tools to extract real code snippets rather than hallucinating them. He built a dedicated utility, Showboat, with commands agents invoke directly. The pattern works because the agent follows a programmatic script, not a freeform chat. When practitioners start publishing reusable agent protocols, the tool has crossed from novelty into infrastructure.
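The core idea, quoting code by reading it from disk instead of reproducing it from memory, can be illustrated with a short sketch. This is not Showboat and does not reflect its commands. `extract_snippet` and `walkthrough_entry` are hypothetical helpers written for this example, and the entry layout is an arbitrary choice.

```python
from pathlib import Path


def extract_snippet(path: str, start: int, end: int) -> str:
    """Return lines start..end (1-indexed, inclusive) copied verbatim from a file."""
    lines = Path(path).read_text(encoding="utf-8").splitlines()
    if not (1 <= start <= end <= len(lines)):
        raise ValueError(
            f"line range {start}-{end} is outside {path} ({len(lines)} lines)"
        )
    return "\n".join(lines[start - 1:end])


def walkthrough_entry(path: str, start: int, end: int, note: str) -> str:
    """Pair a written note with the exact source lines it describes."""
    snippet = extract_snippet(path, start, end)
    return f"{note}\n\n{path}, lines {start}-{end}:\n\n{snippet}\n"
```

An agent that can only cite code through a helper like this cannot quote a line that does not exist: an out-of-range request raises an error instead of producing plausible-looking text.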

The common thread: the chat window is becoming a legacy interface. The primary consumer of AI coding capability is increasingly another program.

03 Researchers Expose Government Surveillance Pipeline Behind OpenAI's Identity Screening, While Its Own Threat Report Targets the Same Tactics

On February 25, OpenAI released its latest threat intelligence report, detailing how it disrupted malicious actors who used ChatGPT for romance scams, Chinese law enforcement influence operations, and Russian content farms. The company framed the publication as part of its ongoing effort to detect and shut down bad actors across its platform.

One day earlier, independent security researchers published a different kind of finding about OpenAI. According to their investigation, OpenAI uses infrastructure operated by identity verification company Persona to screen users before granting access to advanced AI models. That screening collects government-issued IDs, selfies with liveness detection, biometric facial data, and device fingerprints. Persona says it screens millions of users monthly.

The researchers claim to have obtained 53 megabytes of unprotected TypeScript source code from exposed Persona source maps. They allege the code reveals 269 verification checks per user, Politically Exposed Person screening with facial similarity scoring, and face-list databases with three-year retention windows. A separate Persona deployment at withpersona-gov.com achieved FedRAMP authorization in October 2025. The source code allegedly shows capabilities for filing Suspicious Activity Reports directly to FinCEN and Suspicious Transaction Reports to Canada's FINTRAC, some tagged with intelligence program codenames.
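For readers unfamiliar with why an exposed source map can amount to exposed source code: the Source Map v3 format lists original file paths in `sources` and may embed the full original text of each file in an optional `sourcesContent` array. The sketch below reads a toy, made-up map held in memory. The file names and contents are invented for illustration, and the article does not say how the Persona maps were structured.

```python
import json


def embedded_sources(source_map_text: str) -> dict[str, str]:
    """Map each original file path to its embedded source text, where present."""
    data = json.loads(source_map_text)
    sources = data.get("sources", [])
    contents = data.get("sourcesContent") or []
    recovered = {}
    for index, path in enumerate(sources):
        # sourcesContent is optional and may hold null for individual files.
        if index < len(contents) and contents[index] is not None:
            recovered[path] = contents[index]
    return recovered


# Toy example: invented file names and contents, not real data.
toy_map = json.dumps({
    "version": 3,
    "file": "app.min.js",
    "sources": ["src/checks.ts", "src/util.ts"],
    "sourcesContent": ["export const CHECK_COUNT = 3;\n", None],
    "mappings": "",
})

for path, text in embedded_sources(toy_map).items():
    print(path, len(text), "characters")
```

When a build publishes maps with `sourcesContent` populated, anyone who can fetch the map can read the original, unminified files, which is why production deployments commonly strip or restrict them.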

OpenAI's threat report, meanwhile, struck a protective tone. It described how a Chinese law enforcement-linked individual tried to use ChatGPT for forging documents and impersonating U.S. officials to intimidate critics. A Cambodia-based network combined manual ChatGPT prompting with an automated chatbot to run romance scams targeting young men in Indonesia. The report concluded that AI-generated content "did not appear to be the decisive factor" in whether these campaigns succeeded.

The juxtaposition is stark. OpenAI positions itself as a company that detects and disrupts surveillance-adjacent behavior: forged documents, impersonation, covert influence. At the same time, the identity verification pipeline it relies on collects the kind of biometric and personal data that governments use for those very purposes. The threat report names foreign law enforcement misuse as a category worth disrupting. The Persona system, according to the researchers, files reports directly to domestic financial intelligence agencies.

OpenAI has not publicly addressed the Persona investigation's findings.

Salesforce Posts Strong Year-End Earnings, Benioff Dismisses AI Threat to SaaS

Salesforce beat expectations on its year-end earnings and used the call to push back against recurring predictions that AI will gut traditional SaaS businesses. CEO Marc Benioff framed the threat as familiar, comparing it to previous cycles the company survived. (techcrunch.com)

Benedict Evans: Most AI Users Can't Think of What to Do With It on an Average Day

Benedict Evans pointed out that if people use AI only a couple of times a week and struggle to find daily use cases, the technology hasn't changed their lives. He noted OpenAI's own admission of a "capability gap" between what models can do and what people actually do with them, reframing it as a product-market fit problem. (simonwillison.net)

Simon Willison Publishes Guide on Red/Green TDD for Coding Agents

Willison released a pattern guide arguing that test-driven development (write failing tests first, then let the agent iterate until they pass) produces significantly better results from coding agents. The approach gives agents a concrete, verifiable goal instead of open-ended instructions. (simonwillison.net)

Gushwork Raises $9M Seed for AI-Powered Customer Lead Search

Gushwork closed a $9 million seed round led by SIG and Lightspeed. The startup builds AI search tools for generating customer leads and reports early traction from users discovering businesses through ChatGPT and similar interfaces. (techcrunch.com)

Researchers Release Systematic Study of Training Data for Terminal-Based AI Agents

A new paper presents Terminal-Task-Gen, a synthetic task generation pipeline for training LLM-based terminal agents, alongside an analysis of data and training strategies. The work addresses a gap: most top-performing terminal agents keep their training data recipes undisclosed. (huggingface.co)

VLANeXt Standardizes Training Recipes for Vision-Language-Action Robot Models

Hugging Face-surfaced research audits the fragmented VLA model space, where inconsistent training and evaluation protocols make it hard to compare design choices. VLANeXt provides controlled recipes and benchmarks to identify which architectural decisions actually improve robot policy learning. (huggingface.co)

Paper Shows Test-Time Training with KV Binding Is Equivalent to Linear Attention

Researchers proved that a broad class of test-time training architectures, widely interpreted as online meta-learning, can be rewritten as learned linear attention operators. The finding explains several previously puzzling behaviors and opens new directions for efficient sequence modeling. (huggingface.co)

PyVision-RL Solves Interaction Collapse in Multimodal Agent Training

A new RL framework for open-weight multimodal models prevents "interaction collapse," where agents learn to skip tool use and multi-turn reasoning during training. PyVision-RL combines oversampling-filtering-ranking rollouts with cumulative tool rewards to keep agents engaged across turns. (huggingface.co)

SimToolReal Achieves Zero-Shot Sim-to-Real Dexterous Tool Manipulation

A new object-centric policy trained entirely in simulation transfers to real-world dexterous tool manipulation without fine-tuning. The method eliminates the per-object engineering overhead that has limited prior sim-to-real RL approaches for thin-object grasping and forceful tool interactions. (huggingface.co)