01 To Direct Google's New Voice Model, You Write It a Character Bio

Simon Willison opened Google's prompting guide for Gemini 3.1 Flash TTS and found something he didn't expect. The example prompt wasn't a list of parameters. It was a screenplay.

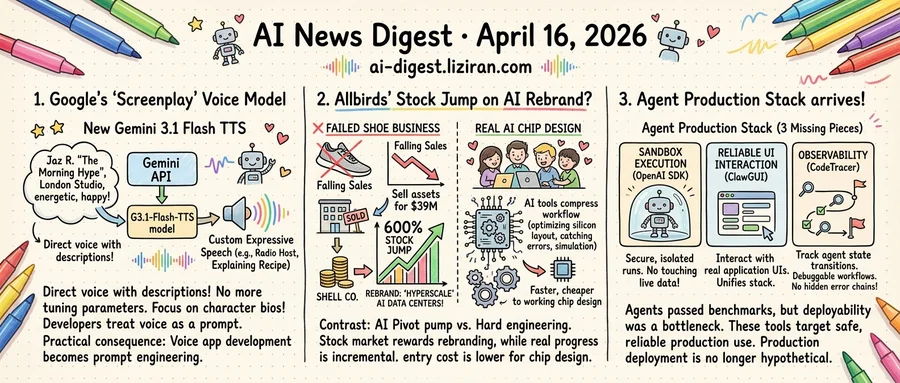

"AUDIO PROFILE: Jaz R. 'The Morning Hype.' THE SCENE: The London Studio." The sample prompt read like casting notes for a voice actor, complete with scene setting, personality traits, and emotional beats. That's the interface now. Not sliders. Not dropdowns. Prose.

Google released the model on April 15, available through the standard Gemini API under the model ID gemini-3.1-flash-tts-preview. It accepts text input and outputs audio files. What distinguishes it from prior TTS services is the control mechanism: developers describe the voice they want in natural language instead of setting numerical values for pitch, speed, and emotion.

Building expressive voice features has traditionally required specialized audio knowledge. Developers tuned sliders, selected from preset emotion labels, and adjusted prosody curves to get a voice that didn't sound robotic. Each change required re-rendering and manual listening. The skill floor was high, and the iteration cycle was slow.

Willison tested the model through the same API endpoint used for standard Gemini text generation and published his findings the same day. He noted the architecture treats voice direction as a prompting problem, not a signal-processing one. The model ID slots into existing Gemini client libraries. A developer who can write a good character description can produce expressive speech without touching an audio parameter.

Google says the model already powers voice output across its own products, framing this as making TTS "more expressive." The practical consequence goes further than expression quality. Voice application development now looks like prompt engineering, not audio engineering. Product managers who write well can prototype voice interactions directly, without routing through a specialized audio team.

The prompting guide shows the range of control on offer. Developers can specify accent, pacing, emotional register, and situational context in plain sentences. One example directs the model to sound like a late-night radio host winding down a segment. Another asks for the cadence of someone explaining a recipe to a friend. These aren't preset categories with fixed boundaries. They're open-ended descriptions the model interprets at inference time.
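The direction is plain prose, so assembling it is ordinary string work. The sketch below builds a prompt from the kinds of fields the guide describes: accent, pacing, emotional register, and situational context. The `VoiceDirection` class and `build_tts_prompt` function are hypothetical helpers. Only the AUDIO PROFILE and THE SCENE labels come from the guide's sample. The DIRECTOR'S NOTES and TRANSCRIPT labels are assumptions added for illustration.

```python
from dataclasses import dataclass


@dataclass
class VoiceDirection:
    """Hypothetical container for the plain-language directions a TTS prompt might carry."""
    profile: str   # who is speaking, e.g. a named on-air persona
    scene: str     # where and when the line is delivered
    accent: str
    pacing: str
    register: str  # emotional register, e.g. "warm and a little tired"
    context: str   # situational context for the delivery


def build_tts_prompt(direction: VoiceDirection, transcript: str) -> str:
    """Render the direction as labeled prose sections, followed by the text to speak.

    AUDIO PROFILE and THE SCENE mirror labels quoted from Google's sample prompt;
    DIRECTOR'S NOTES and TRANSCRIPT are assumed labels for illustration only.
    """
    notes = "\n".join(
        [
            f"Accent: {direction.accent}",
            f"Pacing: {direction.pacing}",
            f"Emotional register: {direction.register}",
            f"Context: {direction.context}",
        ]
    )
    sections = [
        f"AUDIO PROFILE: {direction.profile}",
        f"THE SCENE: {direction.scene}",
        f"DIRECTOR'S NOTES:\n{notes}",
        f"TRANSCRIPT:\n{transcript}",
    ]
    # Blank lines between sections keep each block of direction visually distinct.
    return "\n\n".join(sections)


if __name__ == "__main__":
    host = VoiceDirection(
        profile="a late-night radio host",
        scene="a dim studio just after midnight",
        accent="soft Northern English",
        pacing="slow, with long pauses between sentences",
        register="warm and a little tired",
        context="winding down the final segment of the show",
    )
    print(build_tts_prompt(host, "That's all from me tonight. Sleep well."))
```

Keeping each dimension in a named field makes it easy to change one of them between takes, such as pacing, while the rest of the character stays the same.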

For existing Gemini API users, adding voice output requires one additional call with a text prompt. No new SDK, no audio pipeline, no specialist hire.
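Here is a minimal Python sketch of that one call. It assumes the `google-genai` SDK and the request shape Google documents for its earlier Gemini TTS preview models: an `AUDIO` response modality plus a prebuilt voice such as `Kore`. Whether `gemini-3.1-flash-tts-preview` accepts exactly these fields is an assumption. The output format is also assumed. Earlier models return raw 16-bit, 24 kHz mono PCM, so the hypothetical `pcm_to_wav` helper adds a WAV header to it.

```python
import io
import wave

# Assumption: output is raw 16-bit little-endian mono PCM at 24 kHz,
# as documented for earlier Gemini TTS preview models.
SAMPLE_RATE_HZ = 24_000
SAMPLE_WIDTH_BYTES = 2
CHANNELS = 1


def pcm_to_wav(pcm: bytes) -> bytes:
    """Wrap raw PCM samples in a WAV container so standard players can open them."""
    buffer = io.BytesIO()
    with wave.open(buffer, "wb") as wav:
        wav.setnchannels(CHANNELS)
        wav.setsampwidth(SAMPLE_WIDTH_BYTES)
        wav.setframerate(SAMPLE_RATE_HZ)
        wav.writeframes(pcm)
    return buffer.getvalue()


def synthesize(prompt: str, voice_name: str = "Kore") -> bytes:
    """Send one prose-directed prompt to the TTS model and return WAV bytes.

    Requires `pip install google-genai` and GEMINI_API_KEY in the environment.
    That this request shape carries over unchanged to the 3.1 preview is an assumption.
    """
    from google import genai  # imported lazily so pcm_to_wav works without the SDK
    from google.genai import types

    client = genai.Client()
    response = client.models.generate_content(
        model="gemini-3.1-flash-tts-preview",
        contents=prompt,
        config=types.GenerateContentConfig(
            response_modalities=["AUDIO"],
            speech_config=types.SpeechConfig(
                voice_config=types.VoiceConfig(
                    prebuilt_voice_config=types.PrebuiltVoiceConfig(voice_name=voice_name)
                )
            ),
        ),
    )
    pcm = response.candidates[0].content.parts[0].inline_data.data
    return pcm_to_wav(pcm)
```

With an API key set, `pathlib.Path("host.wav").write_bytes(synthesize(prompt))` should save a playable file.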

02 Allbirds' Stock Jumped 600% on an AI Pivot. The Company Has No AI Product.

Allbirds once sold wool sneakers. Now it sells three letters.

The company hit a $4 billion valuation at its 2021 IPO. It never turned a profit. Sales fell nearly 50 percent between 2022 and 2025. Allbirds sold its name and assets to American Exchange for $39 million, less than 1 percent of its peak valuation. The remaining corporate shell then announced it would pivot to AI infrastructure under the new name "Hyperscale," claiming it would build and operate data centers. Its stock surged 600 percent in a single trading session. The company disclosed no technical team, no product roadmap, and no revenue model for the new venture.
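You can check the two ratios in that paragraph directly. The first compares the sale price with the peak valuation. The second converts the single-session move into a price multiple, assuming the 600 percent figure is a gain over the prior close:

```latex
\frac{\$39\ \text{million}}{\$4{,}000\ \text{million}} = 0.00975 \approx 0.98\% < 1\%

P_{\text{after}} = P_{\text{before}}\,(1 + 6.00) = 7\,P_{\text{before}}
```

A 600 percent gain therefore means the shares closed the session at about seven times their prior price.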

The companies actually building with AI look nothing like this. As Wired reported, a handful of startups are applying machine learning to chip design. The field has historically required teams of hundreds and budgets in the tens of millions. Only a few firms have had the engineering depth to handle it. AI tools are now compressing parts of that workflow: optimizing silicon layouts, simulating performance across architectures, catching design errors that once took engineers weeks to identify. Some of these startups say they can reach a working chip design with a fraction of the traditional headcount.

The progress is real but incremental. These companies still need fabrication partners, capital, and cooperation from the physics of silicon. Nobody is shipping a novel processor from a garage. What AI changes is the entry cost. More teams can now attempt designs previously out of reach, potentially opening custom silicon to industries that could never justify the expense.

One company burned through investor capital for four years, failed at its core business, and was rewarded for rebranding. Another group is grinding through hard engineering, with results that won't appear in stock tickers for years. Allbirds' shell entity gained more in market cap during one trading session than some chip design startups have raised across their entire funding histories.

03 Agents Passed the Benchmarks. The Missing Production Stack Arrived This Week.

Agents can browse the web, write code, and fill out forms. What they cannot do, reliably, is run in production without breaking things no one can see.

The bottleneck shifted months ago. The question is no longer whether an agent can complete a task, but whether anyone would let one run unsupervised on real infrastructure. Safe production deployment requires three things most frameworks still lack: sandboxed execution, so a rogue tool call can't touch live data; reliable interaction with real application UIs; and traceability, so engineers can reconstruct what an agent did when something breaks.

This week, three unrelated teams shipped tools that, between them, target all three.

OpenAI's updated Agents SDK now includes native sandbox execution and a model-native harness for long-running agent processes across files and tools. The sandbox is not a plugin or a third-party wrapper. It ships inside the SDK itself. When a platform vendor builds isolation into the framework layer, the signal is clear. Customers are already pushing agents into production, and security can no longer be an afterthought.
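The item does not describe the SDK's sandbox interface, so the following is not that API. It is a generic Python sketch of the isolation idea. Each tool call runs in a separate interpreter process inside a throwaway directory, with a hard timeout. The environment is stripped down so credentials held by the parent process are not inherited. The `run_tool_isolated` function and `ToolResult` type are hypothetical. Process separation like this is much weaker than a real sandbox, which would also restrict filesystem, network, and system-call access.

```python
import os
import subprocess
import sys
import tempfile
from dataclasses import dataclass


@dataclass
class ToolResult:
    returncode: int
    stdout: str
    stderr: str
    timed_out: bool


def run_tool_isolated(code: str, timeout_s: float = 5.0) -> ToolResult:
    """Run untrusted tool code in a separate Python process inside a scratch directory.

    Process-level isolation only: the child is killed after `timeout_s` and cannot
    see the parent's secrets, but it can still reach the network and any file the
    OS user can access.
    """
    # Pass only what the interpreter needs to start; API keys and tokens stay behind.
    env = {k: v for k, v in os.environ.items() if k in ("PATH", "SYSTEMROOT")}
    with tempfile.TemporaryDirectory() as scratch:
        try:
            proc = subprocess.run(
                [sys.executable, "-I", "-c", code],  # -I: isolated mode, ignores PYTHON* vars and user site-packages
                cwd=scratch,  # relative paths resolve inside the throwaway directory
                capture_output=True,
                text=True,
                timeout=timeout_s,
                env=env,
            )
        except subprocess.TimeoutExpired:
            # subprocess.run has already killed the child before re-raising.
            return ToolResult(-1, "", "timed out", True)
    return ToolResult(proc.returncode, proc.stdout, proc.stderr, False)
```

Returning a structured result instead of raising lets the agent loop decide whether a failed or timed-out tool call should be retried, reported, or abandoned.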

ClawGUI, published this week on arXiv, addresses a different gap. GUI agents interact with applications through visual interfaces rather than APIs, reaching software that CLI-based agents cannot touch. Progress has been bottlenecked less by model capability than by fragmented infrastructure: training pipelines are unstable, evaluation protocols drift between groups, and deployment paths barely exist. The framework unifies all three into one stack.

Observability is the remaining piece. When agents orchestrate parallel tool calls and multi-stage workflows, a single early misstep can cascade into hidden error chains. The agent gets trapped in unproductive loops, and no one can tell where it went wrong or why. CodeTracer makes agent state transitions trackable across execution steps, turning opaque runs into something an engineer can debug.
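The item does not describe CodeTracer's interface, so the sketch below is hypothetical and is not its API. It shows the underlying idea in Python. Every tool call is recorded as a numbered state transition. The earliest failing step is surfaced, since that is where a cascade most likely began. Identical calls repeated past a threshold are flagged as a crude signal of an unproductive loop.

```python
import json
from collections import Counter
from dataclasses import dataclass, field
from typing import Any, Dict, List, Optional, Tuple


@dataclass
class Step:
    index: int
    tool: str
    args: Dict[str, Any]
    output: Optional[str] = None
    error: Optional[str] = None


@dataclass
class AgentTrace:
    """Hypothetical append-only log of agent state transitions (not CodeTracer's API)."""
    steps: List[Step] = field(default_factory=list)

    def record(self, tool: str, args: Dict[str, Any],
               output: Optional[str] = None, error: Optional[str] = None) -> Step:
        step = Step(len(self.steps), tool, args, output, error)
        self.steps.append(step)
        return step

    def first_error(self) -> Optional[Step]:
        """Earliest failing step: the usual place to start when errors cascade."""
        return next((s for s in self.steps if s.error is not None), None)

    def repeated_calls(self, threshold: int = 3) -> List[Tuple[str, str]]:
        """(tool, args) pairs issued at least `threshold` times, a crude loop signal."""
        # Serialize args with sorted keys so equal dicts produce identical keys.
        counts = Counter((s.tool, json.dumps(s.args, sort_keys=True)) for s in self.steps)
        return [key for key, n in counts.items() if n >= threshold]
```

For example, an agent that reads a file, fails a test run, and then issues the same search three times would report step 1 as its first error and the search as a repeated call.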

These three projects emerged from different teams solving different problems. All three target the same layer: not agent capability, but agent deployability. OpenAI building sandboxes into its SDK is the strongest signal that production deployment is no longer hypothetical.

Anthropic's Valuation Makes Some OpenAI Backers Reconsider
At least one investor backing both companies told the Financial Times that justifying OpenAI's latest round requires assuming an IPO valuation above $1.2 trillion. By comparison, Anthropic's $380 billion valuation looks like a bargain, prompting some LPs to shift allocation between the two. techcrunch.com

Adobe Adds Conversational Editing Across Creative Cloud via Firefly AI Assistant
Adobe shipped a Firefly AI Assistant that lets users describe edits in plain language instead of switching between individual Creative Cloud apps. The assistant interprets text prompts and applies changes across images, video, and design files from a single conversational interface. theverge.com

Google Launches Standalone Gemini App for Mac
Google released a native Mac app for Gemini with an Option+Space shortcut that opens a floating chat window. Users can ask questions and share their current screen without leaving whatever app they're working in. theverge.com

Apple Threatened to Remove Grok From the App Store Over Sexual Deepfakes
Apple privately warned xAI in January that Grok would be pulled from the App Store for failing to block nonconsensual sexual deepfakes on X, according to NBC News. The warning did not result in removal. theverge.com

LinkedIn Says Hiring Is Down 20% Since 2022 but Blames Interest Rates, Not AI
LinkedIn's internal data attributes the post-2022 hiring slowdown to macroeconomic conditions rather than AI-driven automation. The company stopped short of ruling out future AI impact on job volumes. techcrunch.com

Boston Dynamics' Spot Now Reads Industrial Gauges Using Gemini
Boston Dynamics integrated Google Gemini into its Spot robot to read analog gauges and thermometers during facility inspections. The pairing replaces manual gauge-reading rounds in industrial environments. arstechnica.com

Hightouch Hits $100M ARR After Launching AI Agent Platform for Marketers
The data activation startup grew ARR by $70 million in 20 months, driven by an AI agent platform that automates marketing workflows. Hightouch's users span enterprise marketing and data teams. techcrunch.com

Gitar Exits Stealth With $9M to Use AI Agents for Code Security Review
Gitar built an agent-based system that audits code for security flaws, including code originally written by AI. The startup raised $9 million in its debut round. techcrunch.com

AI Learning App Gizmo Raises $22M Series A With 13 Million Users
Gizmo, an AI-powered study platform, closed a $22 million Series A. The app has reached 13 million users by generating personalized flashcards, quizzes, and study plans from uploaded course materials. techcrunch.com

Thiel-Backed Startup Objection Lets Users Pay to Challenge News Stories Using AI
Objection built a platform where users can file AI-evaluated complaints against published journalism. Critics argue the system could discourage source cooperation and whistleblowing by adding financial risk to reporting. techcrunch.com