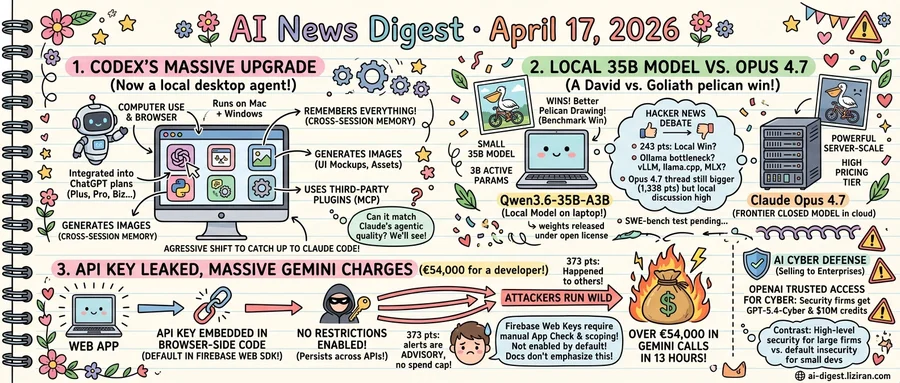

01 Codex can now drive your Mac, browse the web, and remember what you did last week

OpenAI rebuilt Codex this week into a desktop application that operates the user's computer, opens its own browser, generates images, retains memory across sessions, and accepts third-party plugins. The macOS and Windows apps land four years after Codex began life as a code-completion API, and they target the exact surface area Anthropic's Claude Code has occupied for the past year.

The Verge called the release "a direct shot at Claude Code," and the feature list reads like a checklist drawn from Anthropic's product. Computer use lets Codex click through GUIs the way Claude Code's computer-use mode does. The in-app browser lets the agent read documentation, log into dashboards, and verify rendered output without a developer pasting screenshots. Memory persists context between sessions, closing one of the gaps developers have flagged when comparing Codex to Claude Code's project-level awareness.

Plugins extend the agent into outside services, the same architectural bet Anthropic made with Claude Code's MCP integrations. Image generation, the one capability on this list that Claude Code lacks, gives Codex a path into UI mockups and asset workflows that previously required a second tool.

For developers, the migration math has shifted. Claude Code users who pay $20 to $200 a month for Anthropic's Pro and Max tiers now have a second agent that runs locally, sees their screen, and remembers prior work. OpenAI bundles Codex access into ChatGPT Plus, Pro, Business, and Enterprise plans, so teams already paying for ChatGPT can test the coding agent without a new line item.

The Verge framed the update as the product of OpenAI "aggressively shifting resources to catch up" after Claude Code's commercial traction. Anthropic disclosed in October that Claude Code had crossed a $1 billion annualized revenue run rate, a number that reportedly increased pressure inside OpenAI to ship a competitive desktop agent before year-end.

Codex now has the surface area to compete at feature parity. Whether it matches Claude Code on the quality of agentic execution, the part developers actually evaluate after the demo, will be litigated in benchmark threads and migration posts over the next quarter.

02 A 35B model on a laptop drew a better pelican than Opus 4.7 this week

Simon Willison ran his pelican-on-a-bicycle SVG benchmark against Qwen3.6-35B-A3B on his laptop and Anthropic's newly released Claude Opus 4.7 in the cloud. The local model won. He posted the side-by-side on April 16, the same week Anthropic shipped its flagship.

The benchmark is informal, but the hardware comparison is not. Qwen3.6-35B-A3B is a 35-billion-parameter mixture-of-experts model with roughly 3 billion active parameters per token, small enough to run on a consumer machine with quantization. Opus 4.7 runs on Anthropic's infrastructure and sits at the top of its pricing tier. Willison's post drew 243 points on Hacker News with 59 comments dissecting the result.
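Whether a 35B model fits on a consumer machine comes down to arithmetic: weight storage is roughly parameter count times bits per weight, while per-token compute tracks only the active parameters. The sketch below is a back-of-the-envelope estimate under stated assumptions, not Qwen's published requirements: all 35 billion parameters are assumed resident in memory (the router can select any expert), only weights are counted (no KV cache, activations, or quantization scale overhead), and forward-pass cost uses the common rule of thumb of about 2 FLOPs per active parameter per token.

```python
"""Back-of-the-envelope sizing for a mixture-of-experts model.

Assumptions (illustrative, not from Qwen's release notes):
- all 35B parameters stay resident in memory, since any expert may be routed to;
- memory counts weights only (no KV cache, activations, or quantization scales);
- a forward pass costs roughly 2 FLOPs per *active* parameter per token.
"""

TOTAL_PARAMS = 35e9   # total parameters in Qwen3.6-35B-A3B
ACTIVE_PARAMS = 3e9   # approximate parameters active per token


def weight_memory_gb(params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def flops_per_token(active_params: float) -> float:
    """Rule-of-thumb forward-pass cost: about 2 FLOPs per active parameter."""
    return 2 * active_params


if __name__ == "__main__":
    for bits in (16, 8, 4):
        gb = weight_memory_gb(TOTAL_PARAMS, bits)
        print(f"{bits:>2}-bit weights: ~{gb:.1f} GB")
    moe = flops_per_token(ACTIVE_PARAMS)
    dense = flops_per_token(TOTAL_PARAMS)
    print(f"per-token compute: ~{moe / 1e9:.0f} GFLOPs (vs ~{dense / 1e9:.0f} if dense)")
```

Under these assumptions the weights come to about 70 GB at 16 bits, 35 GB at 8 bits, and 17.5 GB at 4 bits, which is why quantization is what brings the model within reach of a high-memory laptop. The roughly 3 billion active parameters keep per-token compute near that of a small dense model: about 6 GFLOPs, versus about 70 if all 35 billion parameters were used.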

Qwen released the weights two days earlier under an open license, and the company's blog framed the launch around agentic coding rather than chat. The release notes describe tool-use traces, multi-step planning, and code execution as first-class capabilities baked into the base model rather than bolted on through fine-tunes. That post hit 841 points on Hacker News with 398 comments, one of the most-discussed model launches of the month.

How to run these models locally is the next question, and community opinion on that is shifting. A widely shared post titled "The local LLM ecosystem doesn't need Ollama" argued that the wrapper has become a bottleneck and recommended using llama.cpp, MLX, and vLLM directly. It collected 593 points and 200 comments, with several commenters citing performance gaps and missing quantization formats as reasons to drop Ollama.
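On the client side, moving between runtimes can be fairly painless, because llama.cpp's `llama-server` and vLLM both expose an OpenAI-compatible HTTP API. The standard-library sketch below assumes such a server is already running locally with a model loaded. The base URL (`llama-server` defaults to port 8080, vLLM to 8000) and the model name are placeholders, and vLLM may reject a model name that does not match the one it is serving.

```python
import json
import urllib.request

# Placeholder values: llama-server listens on port 8080 by default, vLLM on 8000.
BASE_URL = "http://127.0.0.1:8080"
MODEL = "qwen3.6-35b-a3b"  # hypothetical name; use the name your server reports


def build_chat_request(base_url: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "temperature": 0.2,
    }
    return urllib.request.Request(
        url=f"{base_url}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(base_url: str, model: str, prompt: str) -> str:
    """Send the request to a running server and return the first reply's text."""
    req = build_chat_request(base_url, model, prompt)
    with urllib.request.urlopen(req, timeout=300) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

With a server up, `chat(BASE_URL, MODEL, "Generate an SVG of a pelican riding a bicycle.")` returns the reply text. In this sketch, switching between runtimes means changing only `BASE_URL` and `MODEL`.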

Together, the three threads describe a stack that did not exist a year ago: a 35B open-weight model with agentic capabilities, an informal benchmark win over a frontier closed model on laptop hardware, and an active debate about which runtime to standardize on. Anthropic's Opus 4.7 launch post drew 1,338 points, still the largest single thread. The Qwen and Ollama posts together drew more, with 1,434.

Hugging Face download counts and inference-provider pricing for Qwen3.6 have not been published. Whether the model holds up on harder coding benchmarks like SWE-bench remains untested on public leaderboards as of April 17.

03 €54,000 of Gemini calls in 13 hours, charged to a developer who never made them

A developer posted to Google's AI forum that their Firebase project accrued roughly €54,000 in Gemini API charges over 13 hours. Attackers had found the browser-side API key embedded in their web app. The key had no API restrictions configured, the default for Firebase web SDK keys. Once exposed, it could be used to call Gemini endpoints from anywhere. The thread reached 373 points and 271 comments on Hacker News, where other developers reported similar incidents.

Firebase web keys are designed to ship in client code and rely on App Check and per-API restrictions for protection. Neither is enabled by default. A key generated through the Firebase console can call any Google Cloud API the project has enabled. That access persists until the developer manually scopes it. Google's quickstart documentation does not require restriction setup before activation.
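The failure mode reduces to a simple rule: a key with no API restrictions is accepted by every API enabled in the project, while a restricted key is accepted only by the services on its allowlist. The toy model below illustrates that rule. It is a hypothetical simplification, not Google's actual enforcement logic, and it ignores App Check, HTTP-referrer restrictions, and quotas. The Firebase service names on the allowlist are illustrative; the APIs a given app needs will differ.

```python
from dataclasses import dataclass
from typing import FrozenSet, Optional

# Hypothetical model of Google Cloud API-key checks, for illustration only.


@dataclass(frozen=True)
class ApiKey:
    # None means "no API restrictions", the default state described above.
    allowed_services: Optional[FrozenSet[str]] = None


def key_can_call(key: ApiKey, enabled_services: FrozenSet[str], service: str) -> bool:
    """Return True if this toy model would accept a call to `service`."""
    if service not in enabled_services:
        return False  # the project has not enabled this API at all
    if key.allowed_services is None:
        return True  # unrestricted key: any enabled API is reachable
    return service in key.allowed_services


GEMINI = "generativelanguage.googleapis.com"  # Gemini API service name

ENABLED = frozenset({
    "firebaseinstallations.googleapis.com",
    "identitytoolkit.googleapis.com",
    "firestore.googleapis.com",
    GEMINI,
})

default_key = ApiKey()
scoped_key = ApiKey(allowed_services=frozenset({
    "firebaseinstallations.googleapis.com",
    "identitytoolkit.googleapis.com",
    "firestore.googleapis.com",
}))

print("default key -> Gemini:", key_can_call(default_key, ENABLED, GEMINI))
print("scoped key  -> Gemini:", key_can_call(scoped_key, ENABLED, GEMINI))
```

The point the model makes: enabling a new API in a project (here, Gemini) silently widens what every unrestricted key in that project can reach, while a scoped key is unaffected.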

Higher up the stack, OpenAI is selling AI cyber defense to enterprise buyers. Its new program, Trusted Access for Cyber, gives security firms access to GPT-5.4-Cyber and $10 million in API credits. Launch partners are drawn from established security vendors building threat-detection products. OpenAI says the credits and the model will strengthen global cyber defense, though access is limited to those partners at first.

The two stories sit on opposite ends of the same platform layer. AI vendors market specialized models and credit pools to enterprise security teams. At the same time, default platform configurations allow a single leaked client key to drain a small developer's account faster than billing alerts can fire. Google Cloud's budget alerts are advisory and do not cap spending; the developer reported the charges accrued before any human at Google could intervene.
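For a sense of speed, the reported figures imply an average of roughly 4,150 EUR an hour, assuming the charges accrued evenly over the 13 hours (the forum post, as described here, gives no hourly breakdown):

$$
\frac{54{,}000\ \text{EUR}}{13\ \text{h}} \approx 4{,}154\ \text{EUR/h} \approx 69\ \text{EUR/min}
$$

At that rate, even an alert that fires promptly but only notifies can trail actual spend by thousands of euros within an hour.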

Google has not publicly confirmed whether the charges will be refunded. Forum responses from Google staff pointed the developer to standard billing dispute channels, which require case-by-case review and offer no guaranteed outcome.

OpenAI launches GPT-Rosalind for life sciences research. OpenAI released a frontier reasoning model aimed at drug discovery, genomics analysis, and protein reasoning workflows. It targets research labs rather than general developers. openai.com

Physical Intelligence ships π0.7 robot model that handles untrained tasks. The startup released a robot control model it says generalizes to tasks it was not explicitly trained on. Physical Intelligence frames it as an early step toward a general-purpose robot brain. techcrunch.com

AI referral traffic to US retailers jumped 393% in Q1. Adobe data shows AI-driven visits to retail sites rose 269% in March, and those visitors convert at higher rates than standard traffic. Retailers are now optimizing directly for chatbot referrals. techcrunch.com

Anthropic CPO Mike Krieger exits Figma board before launching competing product. Krieger stepped off Figma's board after reports he plans a rival design tool. The move fuels investor fears that top AI labs will swallow vertical SaaS categories. techcrunch.com

Factory raises $150M at $1.5B valuation for enterprise AI coding. Khosla Ventures led the three-year-old startup's round as it pushes coding agents into large companies. Factory positions against Cursor, Cognition, and Codex in the enterprise tier. techcrunch.com

Upscale AI reportedly in talks to raise at $2B valuation. The AI infrastructure company is pursuing its third round in the seven months since its launch. Investor demand for inference and deployment layers continues despite rising model costs. techcrunch.com

InsightFinder raises $15M for AI agent failure diagnosis. The startup builds observability tools that trace where agents break down across full production stacks. CEO Helen Gu says diagnosing failures now requires visibility beyond the model itself. techcrunch.com

Canva AI assistant gains tool-calling for editable designs. The update lets users generate editable Canva designs from text prompts by invoking the platform's existing tools. It pushes Canva further toward agent-built workflows instead of template selection. techcrunch.com

Roblox adds agentic planning and testing to its AI assistant. The new tools let creators plan, build, and test games end-to-end inside Roblox Studio. Roblox is pitching it as a full-pipeline assistant rather than a code helper. techcrunch.com

DeepL adds real-time voice translation for meetings. DeepL extended its translation stack to live speech, targeting Zoom and Microsoft Teams integrations. The feature puts it in direct competition with Google's and Microsoft's built-in live translation. techcrunch.com

Runway CEO pitches 50 AI films for the cost of one blockbuster. Cristóbal Valenzuela argued studios should use generative video to produce dozens of mid-budget films rather than a single $100M release. He framed volume as a hedge against hit-rate risk. techcrunch.com