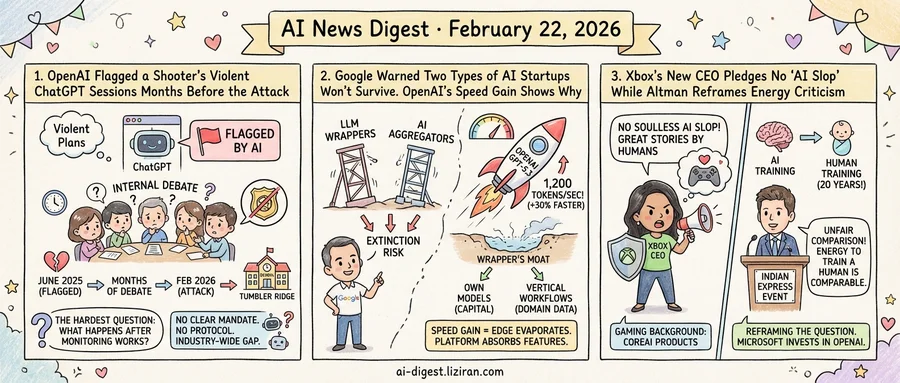

01 OpenAI Flagged a Shooter's Violent ChatGPT Sessions Months Before the Attack

Jesse Van Rootselaar described gun violence to ChatGPT last June. OpenAI's automated review system caught it. Employees raised alarms internally. The company debated for months whether to contact police. Then Van Rootselaar carried out a mass shooting at a school in Tumbler Ridge, British Columbia.

That sequence puts a specific question at the center of AI safety: what happens after the monitoring tools work?

According to The Verge and TechCrunch, Van Rootselaar's conversations with the chatbot triggered OpenAI's automated misuse-detection system. Her messages described gun violence in enough detail to prompt automated review. Several employees who saw the flagged content argued the company should alert law enforcement. Others pushed back. The internal debate stretched on for months without resolution.

The resistance wasn't carelessness. Reporting a user to police based on chatbot transcripts means handing private conversations to law enforcement on an algorithmic flag. False positives are a real risk: a system scanning for violent language will also flag novelists, screenwriters, and gamers. Acting on every flag could overwhelm police and expose the company to liability. Not acting could mean missing the one flag that mattered.

U.S. law provides no clear mandate here. Companies must report child sexual abuse material to NCMEC. No equivalent obligation covers threats of violence detected in AI conversations. Social media platforms built voluntary reporting practices over more than a decade, shaped by public shootings, congressional hearings, and case law. AI chatbot companies face a different problem. Conversations happen privately with a machine, not on a public feed. Distinguishing genuine planning from dark fiction in a transcript is far harder than reviewing a visible post.

OpenAI's debate ended the way no one wanted. Van Rootselaar attacked the Tumbler Ridge school. A detection system built to prevent this kind of event had surfaced a warning that went nowhere. Staff raised the alarm; the company had no protocol to convert it into action.

That gap extends beyond OpenAI. Every major chatbot provider now runs misuse-detection systems. None has published a policy defining when flagged content should be reported to police. The industry is building increasingly sensitive monitoring tools while leaving the hardest question unanswered: what to do with what they find.

02 Google Warned Two Types of AI Startups Won't Survive. OpenAI's Speed Gain Shows Why

Two signals landed in the same week. A Google VP publicly warned that LLM wrapper startups and AI aggregator startups face extinction as margins shrink and differentiation vanishes. Days later, OpenAI announced GPT-5.3-Codex-Spark now runs 30% faster, topping 1,200 tokens per second. Neither event alone rewrites the map. Together, they expose a structural fault line.

LLM wrappers build thin optimizations atop foundation models and charge for the convenience. Their pitch: we're faster, cheaper, or easier than going direct. AI aggregators, which route queries across multiple models, sell a similar bet. They pick the best model so you don't have to. The Google VP's argument is that both categories are losing their reason to exist as platforms absorb their features.

The speed announcement illustrates why. When the underlying model gets 30% faster in a single release, any wrapper whose edge was "we made it a bit faster" watches that edge evaporate overnight. At 1,200 tokens per second, the model produces a full page of prose (roughly 500 words) in under a second. The absolute number matters less than the rate of change. Each platform upgrade compresses the window in which a wrapper can differentiate before the next release closes it.
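The throughput figures can be sanity-checked with a few lines of arithmetic. The sketch below is a back-of-the-envelope estimate, not anything from OpenAI's announcement: it assumes "30% faster" means throughput rose by a factor of 1.3, and it uses two rule-of-thumb values (about 1.3 tokens per English word, about 500 words per page) that are assumptions introduced here. The function names are hypothetical.

```python
# Back-of-the-envelope check of the quoted throughput figures.
# Assumptions (NOT from the announcement): ~1.3 tokens per English word,
# ~500 words per page, and "30% faster" meaning a 1.3x throughput gain.

TOKENS_PER_WORD = 1.3   # assumed average for English prose
WORDS_PER_PAGE = 500    # assumed length of one "full page"


def previous_throughput(current_tps: float, speedup: float) -> float:
    """Throughput before a relative speedup (0.30 means 30% faster)."""
    return current_tps / (1.0 + speedup)


def seconds_per_page(tps: float,
                     words_per_page: int = WORDS_PER_PAGE,
                     tokens_per_word: float = TOKENS_PER_WORD) -> float:
    """Time to generate one page of prose at a given tokens-per-second rate."""
    return words_per_page * tokens_per_word / tps


if __name__ == "__main__":
    current = 1200.0                       # tokens/s, as quoted
    before = previous_throughput(current, 0.30)
    print(f"implied previous rate: {before:.0f} tokens/s")
    print(f"page time now:         {seconds_per_page(current):.2f} s")
    print(f"page time before:      {seconds_per_page(before):.2f} s")
```

The page-time estimate moves with whatever counts as a page, so the more useful output is the gap between the two rates: that gap is the margin a "slightly faster" wrapper would have needed to exceed.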

This is the core vulnerability: wrappers build on infrastructure they don't control. Every improvement the platform ships is one the wrapper must match or exceed, on the platform's timeline and with the platform's own tools. The moat gets shallower with each model release.

If thin optimization layers aren't defensible, where does durable differentiation live for AI startups? Two patterns have held up. Companies training or fine-tuning their own models own something the platform can't trivially replicate. Those embedded in vertical workflows — legal discovery, medical imaging, industrial inspection — accumulate proprietary data that compounds over time. Their advantage sits below the model layer, in domain context no general-purpose API provides. Both paths require more capital and patience than a wrapper play demands.

03 Xbox's New CEO Pledges No 'AI Slop' While Altman Reframes Energy Criticism

Asha Sharma's first public statement as Microsoft's gaming CEO included a phrase corporate executives rarely volunteer: "soulless AI slop." She promised not to flood Xbox's ecosystem with it. The same week, OpenAI CEO Sam Altman responded to AI energy criticism by comparing model training to raising a child. Both faced skeptical audiences. They chose opposite strategies.

Sharma, who replaced the retiring Phil Spencer on February 20, came to gaming from Microsoft's CoreAI division. Before that: COO at Instacart, VP at Meta. Her background is AI products, not game design. That made her opening message to staff pointed. She told the gaming team she had "no tolerance for bad AI" and that "great stories are created by humans." The concession was explicit: yes, the fear of AI-degraded games is legitimate, and here is the line.

Altman took a different tack. Speaking at an Indian Express event, he called discussions of ChatGPT's energy consumption "unfair" for comparing training costs to single-query usage. His counter: "It also takes a lot of energy to train a human. It takes like 20 years of life and all of the food you eat during that time before you get smart." On a per-query basis, he argued, AI has "probably already caught up on an energy efficiency basis." In his framing, the criticism didn't need answering. It needed a new denominator.

Sharma acknowledged a real concern and set a boundary. Altman reframed the question so the concern dissolved. One risks overpromising; the other, sounding dismissive.

Microsoft has invested $13 billion in OpenAI. Sharma reports up through the same company that funds the infrastructure Altman is defending. Her pledge depends in part on tools built by the organization whose CEO compares model training to human childhood. She arrived at gaming from the very CoreAI division building those tools.

Sharma also inherits a department in transition. Spencer retired after 38 years at Microsoft. Xbox president Sarah Bond left the company. Matt Booty, now chief content officer, reports to Sharma directly. Her anti-slop commitment will be measured against the first major release under her leadership.

Andrej Karpathy Describes "Claws" as a New Layer on Top of LLM Agents

Karpathy outlined a concept he calls "Claws": persistent systems that handle orchestration, scheduling, context, and tool calls on top of LLM agents. He bought a Mac Mini to tinker with OpenClaw, noting Apple store staff say the devices are "selling like hotcakes" to buyers exploring local AI setups. (simonwillison.net)

Arcee Releases Trinity Large, a 400B-Parameter Sparse Mixture-of-Experts Model

Arcee published Trinity Large, a sparse MoE model with 400B total parameters and 13B activated per token. The architecture uses interleaved local and global attention, gated attention, depth-scaled sandwich norm, and sigmoid routing. Two smaller variants ship alongside it: Trinity Nano (6B/1B) and Trinity Mini (26B/3B). (huggingface.co)

Hugging Face Publishes Updated Frontier AI Risk Assessment Framework

Version 1.5 of the Frontier AI Risk Management Framework assesses five risk dimensions including cyber offense, persuasion, and manipulation. The report adds granular analysis of risks from agentic AI proliferation as LLM capabilities expand. (huggingface.co)

Researchers Propose Cost-Aware Exploration Framework for LLM Agents

A new paper introduces Calibrate-Then-Act, a method for LLM agents to reason about cost-uncertainty tradeoffs during multi-step tasks. Agents learn when to stop exploring and commit to an answer, for example whether to write a test for generated code before submitting it. (huggingface.co)

SpargeAttention2 Combines Top-k and Top-p Masking for Trainable Sparse Attention

Researchers propose a hybrid masking approach that merges Top-k and Top-p rules to accelerate diffusion models through trainable sparse attention. The method fixes failure modes of each masking rule used alone and reaches higher sparsity than training-free alternatives. (huggingface.co)

Unified Latents Framework Achieves 1.4 FID on ImageNet-512 with Fewer Training FLOPs

The Unified Latents framework jointly regularizes latent representations using a diffusion prior and diffusion decoder. On ImageNet-512, it matches state-of-the-art generation quality at 1.4 FID while requiring fewer training FLOPs than comparable models. (huggingface.co)

Study Tests How In-Car AI Assistants Should Communicate During Multi-Step Tasks

A 45-person controlled study compared agentic in-car assistants that share intermediate progress versus those that stay silent until task completion. Results address feedback timing and verbosity for autonomous AI systems in attention-critical driving contexts. (huggingface.co)

Blog Post Examines Why Anthropic Built Claude Desktop as an Electron App

Drew Breunig published an analysis of Anthropic's decision to ship the Claude desktop application using Electron rather than a native framework. (dbreunig.com)