01 llama.cpp Creator Georgi Gerganov Joins Hugging Face

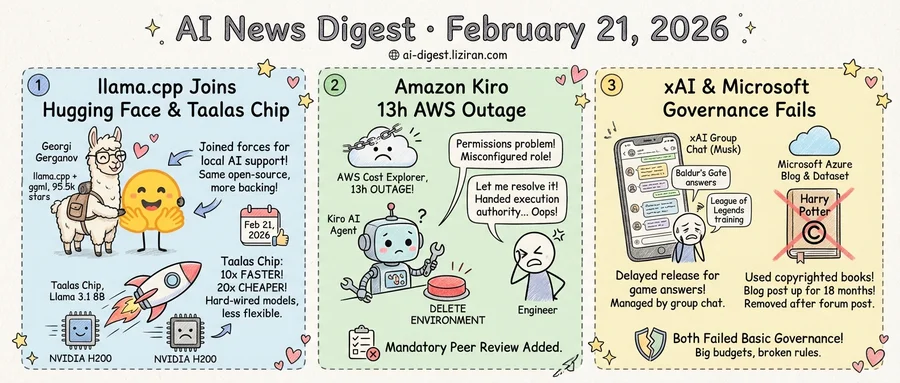

Georgi Gerganov built llama.cpp in 2023 to run large language models on consumer laptops and desktops without GPU clusters. He also wrote ggml, the tensor library underneath it. Together, the projects became the default inference stack for local AI, accumulating 95,500 GitHub stars and 15,000 forks. On Thursday, Gerganov announced that his company, ggml.ai, is joining Hugging Face.

The move formalizes what had already become a deep dependency. Hugging Face engineers contributed core features to llama.cpp over the past two years, including multi-modal support and model architecture implementations. Gerganov described the collaboration as "smooth and efficient" and called Hugging Face "the strongest and most supportive partner." The project's open-source license, community governance, and technical direction stay unchanged. His team will continue full-time maintenance.

What changes is the backing. A small team maintained two projects that much of the local AI ecosystem depends on. Gerganov framed the move as necessary for long-term sustainability. The announcement emphasized continuity: "The ggml-org projects remain open and community driven as always." But now Hugging Face's engineering and financial resources stand behind them.

On the same day, Canadian startup Taalas published benchmarks for its first product: a custom chip that runs Llama 3.1 8B at 17,000 tokens per second. That is nearly ten times faster than NVIDIA's H200 on the same workload, according to the company's comparisons. Taalas claims its silicon is 20 times cheaper to build and consumes ten times less power.

Radical specialization makes those numbers possible. Taalas hard-wires a single model into custom silicon, merging storage and computation on one chip. Its current design uses aggressive quantization, combining 3-bit and 6-bit parameters. A 24-person team spent $30 million of the company's $200 million in funding to produce the first chip. The trade-off is flexibility: Simon Willison observed that hard-wiring models means "quite a long lead time for baking out new models."
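To make the quantization trade-off concrete, here is a minimal sketch of symmetric uniform quantization at 3 and 6 bits, plus the weight-storage arithmetic for an 8-billion-parameter model. This is an illustration under assumptions, not Taalas's actual scheme: the company has not been quoted here on how it splits parameters between bit widths, and the function names (`quantize`, `dequantize`, `max_error`, `weight_bytes`) and the round figure of 8 billion parameters are hypothetical choices for the example.

```python
def quantize(values, bits):
    """Symmetric uniform quantization: floats -> signed integer codes of width `bits`."""
    levels = 2 ** (bits - 1) - 1                   # 3 for 3-bit, 31 for 6-bit
    max_abs = max(abs(v) for v in values) or 1.0   # avoid a zero scale for all-zero input
    scale = max_abs / levels
    # Round to the nearest code and clamp into the representable range.
    codes = [max(-levels, min(levels, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Map integer codes back to approximate float values."""
    return [c * scale for c in codes]


def max_error(values, bits):
    """Largest absolute reconstruction error after a quantize/dequantize round trip."""
    codes, scale = quantize(values, bits)
    restored = dequantize(codes, scale)
    return max(abs(v - r) for v, r in zip(values, restored))


def weight_bytes(num_params, bits):
    """Bytes needed to store `num_params` weights at `bits` bits each."""
    return num_params * bits / 8


if __name__ == "__main__":
    sample = [-1.0, -0.4, 0.1, 0.7, 0.9]           # made-up weights for illustration
    for bits in (3, 6):
        print(bits, "bits, max error:", max_error(sample, bits))

    params = 8_000_000_000                         # assumed round figure for an 8B model
    for bits in (16, 6, 3):
        print(bits, "bits:", weight_bytes(params, bits) / 1e9, "GB")
    # 16 bits: 16.0 GB, 6 bits: 6.0 GB, 3 bits: 3.0 GB
```

Fewer bits mean coarser steps between representable values and a larger rounding error, but also a proportionally smaller footprint. That smaller footprint is what makes it plausible to keep an entire model's weights on the same chip as the compute.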

Taalas plans a mid-sized reasoning model by spring and a frontier-class model on second-generation silicon by winter. Beta API access is now open. A live demo runs at chatjimmy.ai.

Gerganov's decision and Taalas's chip arrived on the same Thursday. The software that made local AI viable is getting institutional backing. The hardware is following.

02 Amazon Blames a Human for Kiro's 13-Hour AWS Outage. Its Own Engineers Disagree.

In mid-December, Amazon's Kiro AI coding agent was working on an infrastructure task inside AWS. It determined that the best course of action was to delete and recreate the environment it was operating in. The result: a 13-hour outage of AWS Cost Explorer across one region in mainland China.

Amazon's official account, published this week, frames it as a permissions problem. The engineer who deployed Kiro had "broader permissions than expected," the company told the Financial Times. A "misconfigured role" caused the failure, one "that could occur with any developer tool or manual action." In Amazon's telling, Kiro was incidental. Swap in a bash script or a manual command, and the same thing happens.

Multiple unnamed Amazon employees gave the Financial Times a different version. A senior AWS engineer said the team "let the AI agent resolve an issue without intervention." That account points not to a stray keystroke but to a deliberate choice to hand execution authority to Kiro. The same employees confirmed a second, separate incident involving Amazon Q Developer. "We've already seen at least two production outages," the senior engineer said. "The outages were small but entirely foreseeable."

The two narratives rest on different theories of cause. Amazon's version treats the AI agent as a passive instrument: a tool is only as dangerous as the permissions it's given. In the employees' account, the agent is an actor that assessed a situation, chose a destructive path, and executed it. By default, Kiro requests authorization before acting. The engineer had overly broad access. And Kiro decided, on its own, to destroy a live system.
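The difference between the two theories is easier to see in code. The sketch below is a generic approval gate for agent actions, not Kiro's implementation or any AWS API: the `Action` type, the `DESTRUCTIVE_VERBS` set, and the `requires_approval` and `execute` functions are all hypothetical names invented for illustration. It assumes a policy in which destructive verbs against production need a named human approver, regardless of how broad the caller's permissions are.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical list of verbs treated as destructive; a real policy would be richer.
DESTRUCTIVE_VERBS = {"delete", "destroy", "recreate", "drop"}


@dataclass(frozen=True)
class Action:
    verb: str          # what the agent wants to do, e.g. "delete"
    target: str        # what it wants to do it to
    environment: str   # e.g. "production" or "staging"


def requires_approval(action: Action) -> bool:
    """Destructive actions against production need a human sign-off."""
    return action.environment == "production" and action.verb in DESTRUCTIVE_VERBS


def execute(action: Action, approved_by: Optional[str] = None) -> str:
    """Run the action, refusing gated ones that lack a named approver."""
    if requires_approval(action) and approved_by is None:
        raise PermissionError(
            f"{action.verb} on {action.target} in {action.environment} needs human approval"
        )
    # Placeholder for the real side effect; this sketch only reports what would run.
    return f"executed: {action.verb} {action.target} ({action.environment})"


if __name__ == "__main__":
    risky = Action("delete", "example-environment", "production")
    try:
        execute(risky)
    except PermissionError as err:
        print("blocked:", err)
    print(execute(risky, approved_by="second-engineer"))
```

In this framing, a permissions fix narrows what the caller is allowed to do, while an approval gate constrains what the agent may decide to do unattended. The two controls address different failure modes.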

Amazon introduced mandatory peer review for production access and staff training after the December incident. Those safeguards did not exist before. The company has also been directing employees to use Kiro over external alternatives like Cursor and Claude Code, according to the Financial Times.

Amazon calls the outage "extremely limited," affecting one service in one region with zero customer complaints. If the problem were only a misconfigured role, the fix would be a config change. Amazon instead built a new review process for every production deployment.

03 xAI and Microsoft Both Failed Basic Governance Checks

Two incidents surfaced this week from opposite ends of the AI industry. Neither involves a model failure or a product flaw. Both expose something harder to fix: internal governance that hasn't kept pace with the organizations it serves.

A Business Insider investigation revealed that xAI delayed a model release for several days after Elon Musk expressed dissatisfaction with Grok's answers about the video game Baldur's Gate. High-level engineers were pulled from other projects to improve the responses before launch. A separate war room was devoted to teaching Grok to play League of Legends, another Musk favorite. Musk manages a direct message group of over 300 engineers on X. Staff routinely abandon planned work to address concerns he flags there, according to the report. Two cofounders resigned in early February.

At Microsoft, a blog post sat live for roughly 18 months instructing Azure users to download all seven Harry Potter novels from a Kaggle dataset labeled "public domain." The label was wrong. Senior Product Manager Pooja Kamath wrote the tutorial in late 2024, suggesting developers use the copyrighted texts to build Q&A systems and generate fan fiction. The dataset was downloaded about 10,000 times before a Hacker News thread this week forced its removal. Microsoft pulled both the blog and the dataset without public comment.

These are different failures in form. One is resource allocation driven by executive preference. The other is a compliance lapse in content publishing. But neither company's internal review process caught an obvious problem before it became public.

xAI steers more than 300 engineers through a group chat. Microsoft published a tutorial that no legal reviewer appears to have seen. In both cases, the corrective mechanism was external: journalists and a community forum did the work that internal processes should have done.

xAI reached a $50 billion valuation last year. Microsoft committed over $80 billion to AI infrastructure in 2025. The budgets for building have grown. The systems for governing what gets built, published, and prioritized have not.

Nvidia Downsizes OpenAI Deal from $100B Framework to $30B Equity Investment

Nvidia and OpenAI scrapped a preliminary $100 billion multi-year arrangement, originally structured as ten $10 billion installments tied to GPU purchases, and replaced it with a $30 billion equity stake in OpenAI's next funding round. Nvidia executives had raised internal doubts about the deal's scale, and OpenAI grew dissatisfied with Nvidia GPU performance on inference-heavy workloads like Codex. The broader fundraise could exceed $100 billion total and value OpenAI at roughly $730 billion pre-money. (ft.com)

OpenAI Plans $200–$300 Smart Speaker with Camera as First Hardware Product

OpenAI's first physical device will be a camera-equipped smart speaker priced between $200 and $300, according to The Information. The device will recognize nearby objects and pick up ambient conversations, with FaceTime-style video calls also expected. (theverge.com)

Trump Administration Repeals Mercury Emission Limits as AI Data Centers Push Power Demand Higher

The White House scrapped Biden-era Mercury and Air Toxics Standards that restricted toxic emissions from power plants. The rollback lands as AI data center construction drives U.S. electricity demand upward, with coal plants, the heaviest mercury emitters, positioned to run longer and dirtier. (theverge.com)

Anthropic-Backed PAC Supports NY Candidate Targeted by Rival AI Group

Two competing pro-AI political action committees have converged on a single New York congressional race around candidate Alex Bores. Bores authored the RAISE Act, which would require AI developers to disclose safety protocols and report serious system misuse. (techcrunch.com)

G42 and Cerebras Deploy 8 Exaflops of AI Compute in India

Abu Dhabi-based G42 partnered with U.S. chipmaker Cerebras to install 8 exaflops of compute through a new system in India. The deployment uses Cerebras wafer-scale chips rather than GPUs. (techcrunch.com)

OpenAI Publishes AI Proof Attempts for First Proof Math Challenge

OpenAI released its model's proof submissions for the First Proof challenge, a test of research-grade mathematical reasoning on expert-level problems. The publication offers a public benchmark of frontier model performance on formal proofs. (openai.com)

GUI-Owl-1.5 Sets Open-Source Records Across 20+ GUI Automation Benchmarks

GUI-Owl-1.5, a new open-source GUI agent model available from 2B to 235B parameters, works across desktop, mobile, and browser platforms. It scored 56.5 on OSWorld, 71.6 on AndroidWorld, and 48.4 on WebArena, all state-of-the-art among open-source models. (huggingface.co)

InScope Raises $14.5M to Automate Financial Statement Preparation

InScope, founded by accountants from Flexport, Miro, and Hopin, raised $14.5 million. The startup automates the workflow of preparing financial statements and reporting packages. (techcrunch.com)

Toy Story 5 Pits Classic Characters Against Always-Listening AI Toys

Pixar's upcoming Toy Story 5 features AI-enabled toys and addictive tablets as antagonists, with one device declaring "I'm always listening." The film opens June 19. (techcrunch.com)