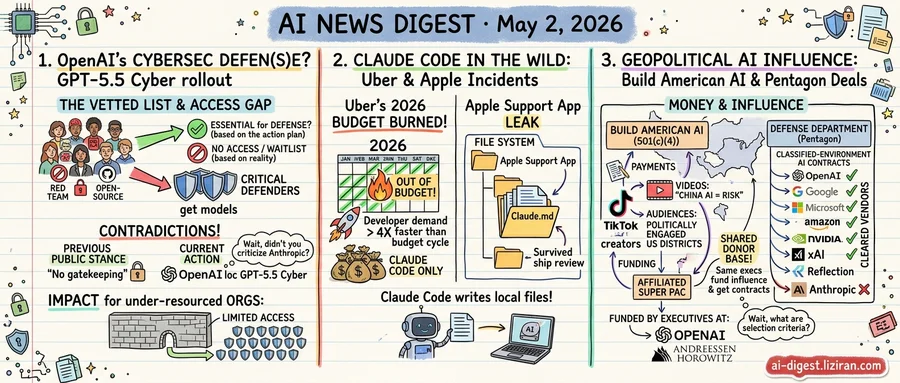

01 OpenAI promised to democratize AI cyber defense. GPT-5.5 Cyber ships to a vetted list.

OpenAI said GPT-5.5 Cyber, its new cybersecurity testing tool, will roll out "to critical cyber defenders" first. The release landed alongside a five-part action plan titled "Cybersecurity in the Intelligence Age," which calls for democratizing AI-powered cyber defense and protecting critical systems.

The two documents do not sit easily together. Weeks earlier, OpenAI executives publicly criticized Anthropic for restricting access to Mythos, the model Anthropic kept inside a small partner program after testing surfaced its offensive capabilities. OpenAI's framing at the time was that gatekeeping research-grade AI helps no one but the gatekeeper.

Cyber's launch flipped that argument. The tool will be available only to a screened set of defenders OpenAI has not publicly named, with broader rollout described as a later phase. The action plan does not specify what makes a defender "critical," what the screening criteria are, or when general availability begins.

Security researchers who have asked for access on social platforms report being directed to a waitlist with no timeline. Independent red teamers and open-source maintainers are not on the initial distribution list, according to TechCrunch. OpenAI's own plan names both as essential to a healthier defense ecosystem.

The mechanics behind the gate are familiar. Cybersecurity tools that automate vulnerability discovery cut both ways: the same capability that helps a defender patch a flaw helps an attacker find one. Anthropic invoked dual-use risk to justify Mythos restrictions. OpenAI's plan acknowledges the same risk while arguing that wider distribution still produces a net defensive advantage.

That is the position OpenAI is now testing against itself. Its action plan promises to expand access to AI-powered defense for under-resourced organizations. The product page does the opposite, limiting access until OpenAI decides who qualifies. MIT Technology Review's EmTech AI session this week argued that legacy security approaches assume defenders can buy or build the tools they need. AI-native tools controlled by a single vendor break that assumption.

OpenAI has not published the criteria it uses to decide who counts as a critical defender, nor whether nation-state agencies, large enterprises, or vetted research labs receive priority. Until it does, the gap between the two documents is the policy.

02 In the same week Uber burned its 2026 Claude budget, an Apple Support build leaked a Claude.md file

Two incidents within days of each other put hard numbers on a question most enterprise IT teams have been ducking: how deep is Claude Code already inside their engineering org?

Uber's full 2026 budget for AI tooling was reportedly spent by April, on Claude Code alone, according to a Briefs report that circulated last week. Neither the amount spent nor the size of the original budget was disclosed. The implication is that procurement projections set four months ago badly underestimated how heavily developers would actually use the tool.
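One way to put a rough number on that gap, as a back-of-envelope sketch rather than anything from the report: assume the plan spread an annual budget $B$ evenly across 12 months and that the money ran out after $m$ months. Since neither the budget nor the exact date was disclosed, $m \approx 4$ (January through April) is itself an approximation.

$$
\text{overrun factor} = \frac{B/m}{B/12} = \frac{12}{m} \approx \frac{12}{4} = 3
$$

The budget $B$ cancels, so the estimate does not depend on the undisclosed dollar figure: on these assumptions, actual spend ran at roughly three times the planned rate, and higher still if the budget was exhausted early in April.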

Days later, a shipped build of the Apple Support app was found to contain a Claude.md file, spotted by a researcher posting as @aaronp613 on X. Claude.md is the file Claude Code, Anthropic's CLI agent, reads from the working directory to load project context and instructions. Shipping the file inside the app was itself an accident. But its presence in the working directory in the first place implies a developer had been running Claude Code in that project tree as a matter of routine.

Neither company has commented publicly. Uber's finance side shows AI tooling demand outrunning the annual budget cycle. Apple's release pipeline shows Claude Code embedded deeply enough in a developer's day-to-day work that an artifact from it survived release review at one of the more security-conscious companies in tech.

Both incidents point at the same dynamic. Claude Code is being pulled in by individual developers downloading the CLI for their day job, not by procurement teams negotiating enterprise contracts. The Uber spend curve and the Apple build artifact are independent confirmations from inside two of the largest engineering organizations in the world.

The operational gap is filesystem-shaped. Most enterprise security tooling treats AI assistants as outbound network calls. Claude Code writes files locally, reads from project directories, and leaves artifacts that current build review processes were not designed to catch.
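To make the filesystem gap concrete, here is a minimal Python sketch of a pre-ship check that fails a build when an agent's project file ends up in the output directory. It rests on stated assumptions: the deny-list (`claude.md` plus a `.claude` directory) and the function names `find_agent_artifacts` and `check_build` are hypothetical, not part of any Apple, Uber, or Anthropic tooling, and a real pipeline would extend the list for whichever agents its developers actually run.

```python
from pathlib import Path

# Hypothetical deny-list of agent artifacts (an assumption; extend as needed).
# Names are compared in lower case, so "Claude.md" and "CLAUDE.md" both match.
SUSPECT_NAMES = {"claude.md", ".claude"}


def find_agent_artifacts(build_dir: str) -> list[Path]:
    """Return every file or directory under build_dir whose name is on the deny-list."""
    root = Path(build_dir)
    # rglob("*") walks the whole tree, including dot-prefixed entries.
    return sorted(p for p in root.rglob("*") if p.name.lower() in SUSPECT_NAMES)


def check_build(build_dir: str) -> int:
    """Print any hits and return a CI-style exit code: 1 if artifacts were found, else 0."""
    hits = find_agent_artifacts(build_dir)
    for path in hits:
        print(f"agent artifact in build output: {path}")
    return 1 if hits else 0
```

In CI this could run as a last step before signing, for example `sys.exit(check_build("build/"))` with a hypothetical output path. The name match is case-insensitive because macOS file systems are case-insensitive by default, so a file written as `CLAUDE.md` can surface under either spelling.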

Procurement budgets reset annually. Build pipelines ship every two weeks. Whichever moves first determines what gets caught the next time a developer leaves a config file in the wrong directory.

03 The nonprofit paying TikTok creators to warn about Chinese AI is backed by executives at OpenAI, which just won a classified Pentagon deal

A 501(c)(4) called Build American AI has spent recent months commissioning TikTok influencers, according to Wired. The videos cast Chinese AI as a national security risk and urge looser US regulation. Creators receive payment to deliver scripted messaging to politically engaged American audiences. Funding routes through a sister super PAC bankrolled by executives at OpenAI and Andreessen Horowitz.

Wired reported the nonprofit registered earlier this year and operates without public donor disclosure, since 501(c)(4) status shields contributor identities. Its messaging leans heavily on one theme: Chinese AI capabilities threaten the US, and domestic regulation will hand Beijing the lead. Influencers were instructed to frame the China comparison directly. Several disclosed the sponsorship only after audience pushback. The campaign targets political districts where AI legislation is moving rather than a broad consumer audience.

The structure splits the work cleanly. A 501(c)(4) runs the public influence operation; an affiliated super PAC capitalizes it; the donors are executives at the labs whose products would be regulated.

The same week Wired's report landed, the Defense Department announced classified-environment AI contracts with several US firms, according to The Verge. Cleared vendors include OpenAI, Google, Microsoft, Amazon, Nvidia, Elon Musk's xAI, and the startup Reflection. The agreements let those companies' models operate inside classified workflows. Anthropic, which had previously been cleared by the Pentagon for classified information work, was dropped from the new round. The Verge said the agency did not explain the reversal.

The two stories share a cast. Some of the executives whose money helped capitalize the super PAC behind Build American AI work at OpenAI, one of the labs the Pentagon just cleared. On TikTok, the messaging argues that Chinese AI must be countered with US capacity; in DoD procurement, that capacity goes to seven specific firms.

Build American AI has not commented on the overlap. Neither has the Defense Department, which has yet to disclose vendor selection criteria.

Microsoft ships Legal Agent inside Word for in-house counsel. Microsoft launched a Word-embedded AI agent built for legal teams that handles contract review, redlining, and negotiation history through fixed workflows rather than open-ended prompts. The product targets a segment where general-purpose chatbots have struggled with citation accuracy and clause-level edits. (theverge.com)

Meta acquires humanoid robotics startup Assured Robot Intelligence. Meta bought Assured Robot Intelligence to supply its AI models with the manipulation and embodiment data it lacks internally. The deal extends Meta's robotics work beyond FAIR research and into applied humanoid pipelines. (techcrunch.com)

Replit's Amjad Masad rules out a sale as Cursor courts SpaceX. At TechCrunch's StrictlyVC event, Replit CEO Amjad Masad said he prefers to stay independent, while Cursor is reportedly in talks to be acquired by SpaceX for $60 billion. Masad also detailed Replit's ongoing fight with Apple over App Store rules. (techcrunch.com)

Google DeepMind details AI co-clinician research program. DeepMind published work on an AI co-clinician aimed at supporting doctors during diagnosis and treatment planning. The post frames the system as a research direction with no deployment date. (deepmind.google)

Study finds emotion-tuned AI models hallucinate more. Researchers reported that models tuned to track user feelings produce more factual errors than baseline versions, trading accuracy for user satisfaction. The finding directly challenges the warm-persona tuning that consumer chatbots have moved toward this year. (arstechnica.com)

Minnesota becomes first US state to ban AI nudifying apps. Minnesota passed legislation imposing $500,000 fines on developers of apps that generate non-consensual nude images. The bill cleared days after researchers documented new cases of Grok-generated CSAM. (arstechnica.com)

Salesforce opens its AI roadmap to customer voting. Salesforce is letting enterprise customers prioritize features on its AI roadmap, betting that one buyer's pain point generalizes across its customer base. The shift moves planning from internal product managers to a public request queue. (techcrunch.com)

Meta reports 10 million weekly business AI conversations. Meta said its business AI now handles 10 million conversations a week across WhatsApp and Messenger, ahead of its next earnings report. The disclosure is the first hard volume number Meta has put on the product. (techcrunch.com)

Manus runs get-rich-quick AI ads a year after Meta's $2B buy. Manus, the AI startup Meta acquired in 2025 for $2 billion, is paying creators to pitch a scheme: use AI to build websites for local small businesses, then cold-call the owners to sell them the sites. The campaign is running across Meta's own ad inventory. (theverge.com)

US Christian phone network ships carrier-level porn block. A new US carrier marketed to Christians launches next week with network-side blocks on porn and gender-related content that adult account holders cannot disable. Security researchers say it is the first US plan to enforce content filtering at the infrastructure layer. (technologyreview.com)

Meta dismisses Kenyan Ray-Ban reviewers who flagged sexual footage. Meta cut contractors at a Kenyan vendor who reported reviewing Ray-Ban Meta footage of users having sex, telling the press the workers "didn't meet our standards." The firings reopen questions about how smart-glasses recordings are screened and what reviewers can say publicly. (arstechnica.com)