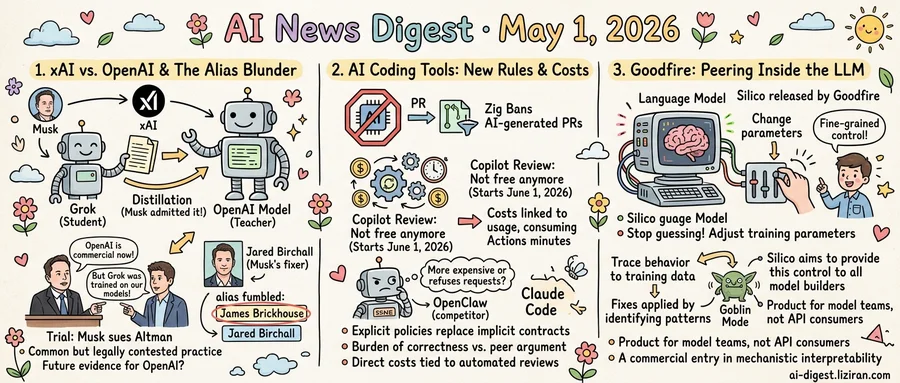

01 xAI trained Grok on OpenAI's models, Musk testified. Then his lawyer fumbled the next witness's identity.

Elon Musk testified Thursday in a California federal court that xAI used OpenAI's models to improve its own, The Verge reported. The practice is called distillation: outputs from a larger "teacher" model are used to train a smaller "student" model, transferring much of the teacher's capability. Musk authorized it at xAI, the company he founded to compete with OpenAI.
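
Distillation comes in several forms. The sketch below shows the classic logit-matching version, assuming the student can see the teacher's full output distribution. It is a textbook illustration, not a description of what xAI did. A lab working only from a rival's API would more likely fine-tune its student on teacher-generated text, because hosted APIs typically return generated tokens rather than full logits. The function names and the example logits are illustrative assumptions.

```python
import math


def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax; a higher temperature yields a softer distribution."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """Soft-target loss: KL(teacher || student) on softened distributions, scaled by T^2.

    This is the classic logit-matching form of distillation (Hinton et al., 2015),
    shown for illustration only; it is not a description of xAI's pipeline.
    """
    p = softmax(teacher_logits, temperature)  # teacher's soft targets
    q = softmax(student_logits, temperature)  # student's softened predictions
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


teacher = [4.0, 1.0, 0.5]
print(distillation_loss(teacher, [4.0, 1.0, 0.5]))  # student matches teacher: 0.0
print(distillation_loss(teacher, [0.5, 1.0, 4.0]))  # student disagrees: positive loss
```

During training, the student's weights would be updated by gradient descent to drive this loss down. The temperature exposes how the teacher ranks the wrong answers, not just which answer it picks, and that ranking is the "knowledge" being transferred.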

The admission cuts against his own case. Musk is suing Sam Altman for converting OpenAI from a nonprofit to a commercial entity, and his complaint frames OpenAI's closed models as a betrayal of open AI ideals. By Musk's own testimony, xAI trained on the output of those closed models.

Distillation is common across AI labs but legally contested. OpenAI's terms of service prohibit using output from its services to develop models that compete with OpenAI. The court has not heard whether xAI obtained those outputs through API access or by another route. Jurors did hear Musk concede the practice on the record.

The trial is Musk's civil suit against Altman, not OpenAI's case against xAI. OpenAI is not seeking damages over distillation in this proceeding. But Musk's testimony will sit in the public docket, giving OpenAI's legal team an admission to cite in any future action.

Then Musk's finance fixer Jared Birchall took the stand. Birchall manages the Musk family office and the financial structure at issue in the case. While the jury was out of the room, Musk's legal team referred to Birchall by an alias he uses in some business dealings: "James Brickhouse." The Verge reported that the slip may give Altman's lawyers a procedural opening.

The alias is not a minor detail. Musk's own lawyers raised it in front of the judge, putting it on the record. Opposing counsel can now probe whether Birchall conducts Musk-related business under multiple names and what records exist under each.

Earlier sessions had focused on email exchanges and corporate documents from OpenAI's earliest days, including correspondence from before the lab had a name. Thursday's testimony moved the case from founding-era intent to current operational practices. The trial resumes Friday with Birchall continuing on the stand.

02 Zig Bans AI-Generated PRs as Copilot and Claude Code Quietly Add New Restrictions

For most of the past year, developers using AI coding tools worked under an unwritten contract: contributions were welcome, reviews came bundled in the subscription, and the assistant didn't care what you typed in your commit messages. Three different actors replaced parts of that contract with explicit policy this week.

The Zig project published a contribution policy refusing pull requests generated with AI assistance, and Simon Willison summarized the rationale on his blog. Willison's post drew 633 points and 418 comments on Hacker News, among the strongest community responses to any AI coding policy this year. Zig's stated reasoning: AI-generated code shifts the burden of correctness onto reviewers without giving them a peer to argue with. The project would rather lose contributions than absorb that cost.

GitHub announced that Copilot code review will start consuming GitHub Actions minutes on June 1, 2026. The feature was previously bundled into Copilot subscriptions. After that date, every automated review run draws from the same metered minute pool that bills for CI builds, deployments, and test runs. Teams that turned on Copilot review across every PR now face a direct cost tied to how often the bot opens its mouth.
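
Because review runs and CI now draw on the same pool, the cost question is arithmetic: review minutes stack on top of existing CI usage, and only usage beyond the plan's included allowance is billed. The estimator below is a minimal sketch in which every number (PR volume, review runs per PR, minutes per run, included allowance, per-minute rate) is a hypothetical assumption, not a GitHub-published figure. It also assumes review minutes count against the allowance exactly like CI minutes.

```python
# Hypothetical estimator: every input below is an assumption for illustration,
# not a GitHub-published figure.


def review_minutes(prs_per_month: int, runs_per_pr: int, minutes_per_run: float) -> float:
    """Actions minutes consumed by automated code review in one month."""
    return prs_per_month * runs_per_pr * minutes_per_run


def billable_minutes(review: float, other_ci: float, included: float) -> float:
    """Minutes billed after review and CI draw down the same included allowance."""
    return max(0.0, review + other_ci - included)


# Hypothetical team: 400 PRs/month, 3 review runs per PR, 2 minutes per run,
# 2,500 minutes of existing CI, and a 3,000-minute monthly allowance.
reviews = review_minutes(400, 3, 2.0)
before = billable_minutes(0.0, 2500.0, 3000.0)     # CI alone fits in the allowance
after = billable_minutes(reviews, 2500.0, 3000.0)  # reviews push usage past it

rate = 0.01  # hypothetical USD per minute, not GitHub's actual price
print(f"review minutes: {reviews:.0f}")
print(f"billable before: {before:.0f}, after: {after:.0f}")
print(f"extra monthly cost: ${after * rate:.2f}")
```

In this made-up scenario, a team whose CI fit comfortably inside its allowance goes to 1,900 billable minutes once review runs join the pool. Review frequency, not just CI load, becomes the lever that sets the bill.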

A separate report alleged that Claude Code refuses requests or charges higher rates when a user's commit message mentions "OpenClaw," a competing coding tool. The report, a thread on X, drew 863 points on Hacker News. Anthropic has not publicly explained the behavior. If accurate, it marks the first widely reported case of an AI coding assistant changing its commercial behavior in response to a competitor's name appearing in a developer's repository.

The three changes share no common author and no common technical mechanism. What they share is direction. Behaviors that were previously implicit (open contribution, bundled review, neutral execution) are now written rules with stated costs and boundaries.

03 Goodfire turned "open the model and twist a parameter" into a product

The pitch from Goodfire's San Francisco office to model engineers is direct: stop guessing why your training run produced that output. Look at what fired inside the model. Change it.

Goodfire released Silico this week. The tool lets researchers and engineers peer inside a language model and adjust the parameters that govern its behavior during training, according to MIT Technology Review. The startup says Silico delivers finer-grained control over training than was once considered practical outside a frontier lab.

OpenAI just gave that workflow a public reference. The company published a postmortem on the origin of GPT-5's "goblin mode," outputs in which the model adopted an aggressive, vulgar voice that surfaced in user threads. OpenAI traced the behavior to specific patterns in training data and laid out the timeline and fixes that brought it under control.

Until now, that kind of root-cause work has lived inside a handful of frontier labs and academic interpretability papers. Engineers at smaller model shops who shipped a fine-tune that began misbehaving had two practical options: retrain on cleaner data, or write a system prompt and hope. Silico targets a third path. Read the weights, find the feature firing wrong, turn it down.
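
"Find the feature firing wrong, turn it down" maps onto a common interpretability technique, directional ablation or steering. A learned feature is treated as a direction in a layer's activation space, and the activation's component along that direction is shrunk. The sketch below is a minimal illustration under that assumption. It acts on a single activation vector at inference time rather than on weights during training, the function names are hypothetical, and it is not Silico's API, which the source does not describe.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def normalize(v):
    """Scale a direction to unit length so projections measure feature strength."""
    n = math.sqrt(dot(v, v))
    return [x / n for x in v]


def dampen_feature(activation, feature_direction, scale):
    """Rescale the component of `activation` along `feature_direction`.

    scale=1.0 leaves the feature unchanged, 0.0 ablates it entirely,
    and values in between turn it down. Other components are untouched.
    """
    d = normalize(feature_direction)
    strength = dot(activation, d)  # how strongly the feature is "firing"
    return [a - (1.0 - scale) * strength * di for a, di in zip(activation, d)]


# Toy 2-D activation where the hypothetical misbehaving feature is the first axis.
print(dampen_feature([3.0, 4.0], [1.0, 0.0], 0.0))  # [0.0, 4.0]
print(dampen_feature([3.0, 4.0], [1.0, 0.0], 0.5))  # [1.5, 4.0]
```

Real models have thousands of dimensions, and finding the right direction is the hard part; tools in this space typically learn feature directions from the model's own activations before any dial can be turned.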

Goodfire's buyers are model builders, not API consumers. The product is aimed at teams training foundation or domain-specific models, where unexplained behavioral drift after a checkpoint can mean weeks of guesswork. MIT Technology Review describes Silico as a commercial entry in mechanistic interpretability, the research subfield studying how individual circuits inside a network produce specific behaviors.

Goodfire's bet is that the kind of internal debugging behind OpenAI's goblin writeup has paying customers among teams building their own models.

The open question is whether the tool exposes the depth of internal access frontier labs use on their own systems. That answer will come from the first teams to ship a debug fix through it.

Anthropic targets $900B valuation in two-week fundraise. Anthropic asked investors to submit allocations within 48 hours for a round that could close at over $900 billion within two weeks, per sources. The round would push Anthropic's private valuation past most public AI competitors. (techcrunch.com)

Stripe opens Link wallet to autonomous AI agents. Stripe launched Link, a digital wallet that lets users connect cards, banks, and subscriptions, then authorize AI agents to spend through approval flows. The product gives agentic shopping a payments rail with explicit consent gates. (techcrunch.com)

Mistral ships Medium 3.5 with remote agents. Mistral released Medium 3.5 alongside "vibe remote agents," extending the model into longer-running task execution. The release positions Mistral against Anthropic and OpenAI on agent infrastructure rather than raw benchmark scores. (mistral.ai)

Google puts Gemini in millions of vehicles. Google began deploying Gemini as the in-vehicle assistant across millions of cars, replacing earlier voice systems. The integration moves Gemini into a default-on surface that drivers cannot easily swap out. (techcrunch.com)

SoftBank forms data-center robotics arm eyeing $100B IPO. SoftBank is spinning up a robotics company focused on building data centers and is already targeting a $100 billion IPO. The unit feeds back into Masayoshi Son's AI infrastructure thesis. (techcrunch.com)

OpenAI restricts GPT-5.5 Cyber to vetted defenders. OpenAI will initially release its cybersecurity testing tool GPT-5.5 Cyber only to "critical cyber defenders." The gating arrives after OpenAI publicly criticized Anthropic for limiting access to its Mythos model. (techcrunch.com)

Legora hits $5.6B as Harvey fight escalates. Legora reached a $5.6 billion valuation as it pushed deeper into Harvey's territory, with the two startups running dueling ad campaigns. Both have raised hundreds of millions and now compete directly for big-firm contracts. (techcrunch.com)

Apple says AI demand caught it short on Macs. Apple told investors that AI workloads drove unexpected demand for the Mac mini, Mac Studio, and the new Neo, and that supply will stay constrained through next quarter. The Mac line is now selling as local-inference hardware. (techcrunch.com)

X rebuilds ad platform around AI. X launched a rebuilt ads platform that uses AI for targeting and creative generation as it tries to grow revenue again. The relaunch arrives while advertisers continue to weigh brand-safety risk on the platform. (techcrunch.com)

Spotify adds "Verified" badge to flag human artists. Spotify launched a verification program with a green checkmark for profiles confirmed to have a real person behind the music. AI personas will not qualify for the badge at launch. (theverge.com)