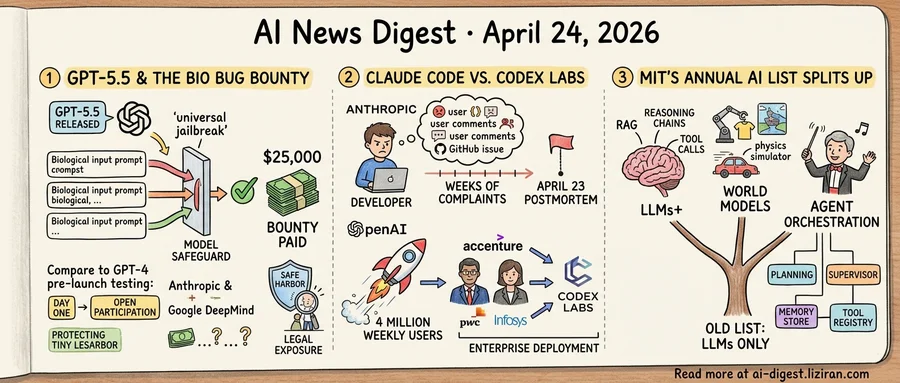

01 The day GPT-5.5 shipped, OpenAI put a $25,000 price on breaking its bio guardrails

OpenAI released GPT-5.5 this morning. In the same wave of posts, it announced the GPT-5.5 Bio Bug Bounty. The program pays up to $25,000 to any researcher who can produce a universal jailbreak against the model's biological-risk safeguards.

The bounty page defines a universal jailbreak as an input that reliably bypasses safeguards across multiple categories of biological uplift. Partial bypasses qualify for smaller rewards. Submissions must survive OpenAI's own replication before payout clears. Rewards scale with severity and breadth rather than following a flat rate.

The format mirrors OpenAI's cybersecurity bug bounty, which has run on HackerOne since 2023 and has paid out for conventional security vulnerabilities like API auth flaws. Extending those mechanics to model-level safety, and specifically to bio, is the new move. This is the first time any frontier lab has framed bio risk as a named-scope, publicly priced research challenge. OpenAI has not said whether the bio program will use HackerOne for intake or run through an internal triage team.

Previous OpenAI cycles drew the line elsewhere. The GPT-4 system card treated biological red-teaming as a pre-launch exercise, completed ahead of shipping and under private contract. GPT-5 added ongoing monitoring but kept bio testing largely in-house or routed through research partnerships. Paying bounties on day one, on the live production model, signals OpenAI treats its own coverage as incomplete.

OpenAI's prior biosecurity evaluations were run through contracted external partners and published only in summary form. The bug bounty uses the opposite structure: open participation, itemized payouts, per-finding disclosure.

The GPT-5.5 System Card, published alongside the model, foregrounds biological risk. Under OpenAI's Preparedness Framework, bio uplift is one of the tracked capability areas that gate release decisions.

The program also shifts legal exposure. Bounty participants operate under safe-harbor terms that shield good-faith testing from enforcement under OpenAI's usage policies. Without that cover, a researcher who extracted uplift content would be left to face export-control rules, and national laws implementing the Biological Weapons Convention, on their own.

Anthropic's responsible scaling policy and Google DeepMind's Frontier Safety Framework have not named comparable per-finding figures for bio risk. OpenAI is setting the number.

The program opens today. OpenAI says it will publish aggregate findings after the first submission wave closes.

02 The week Anthropic acknowledged Claude Code's regression, OpenAI said Codex hit 4 million weekly users

Anthropic's engineering team published a postmortem on April 23 responding to sustained user complaints that Claude Code had regressed. The post, titled "An update on recent Claude Code quality reports," drew 494 points and 368 comments on Hacker News within a day. That volume typically lands only when paying users feel the pain themselves.

Complaints had been building for weeks. Claude Code users reported degraded output quality across multiple request types on Anthropic's forum, on GitHub, and on Hacker News. The April 23 post is Anthropic's first engineering-level response to that volume of reports.

OpenAI picked the same week for a different announcement. Codex now has 4 million weekly active users, the company said. OpenAI also launched Codex Labs, with Accenture, PwC, and Infosys as deployment partners to roll the agent into large enterprises.

The two disclosures read like dispatches from different stages of the product cycle. Anthropic is formally acknowledging that complaints circulating on forums and GitHub for weeks were real. The postmortem format is itself a concession that Anthropic let the regression run longer than heavy users found acceptable.

OpenAI is going the other direction: widening the footprint before Codex has faced its own public reliability audit from a large paid user base. Codex Labs formalizes the enterprise path, and the three consultancies bring the implementation muscle to deploy the agent across large corporate engineering orgs. These firms sell into regulated industries where a silent model regression turns into SOW disputes and audit findings, not forum threads.

For developers picking between the two agents this quarter, the question is less about raw capability and more about which failure mode they can stomach. Anthropic has shown it will eventually publish the debrief, weeks after the fact. Whether Codex produces a similar document when 4 million weekly users feel a regression at once is still untested.

03 MIT's annual AI list splits into three tracks where LLMs used to sit alone

MIT Technology Review's 2026 roundup of what matters in AI breaks its top entries into three parallel tracks: LLMs+, world models, and agent orchestration. For three years running, large language models carried this list as the presumed trunk of everything else. This year they share the frame with two siblings, each pointed at a different thing the base model cannot do.

The LLMs+ track covers the scaffolding around the model: retrieval, memory, reasoning chains, tool invocation. It reads as an admission that the base model has plateaued on tasks that need context it does not have, exact arithmetic, or the ability to act. The "+" is doing the work.

World models sit in a different lane. MIT's coverage notes that text predictors compose novels and write code well but cannot fold laundry or cross a street. Building a system that represents physical causality (objects, forces, continuity) is a research agenda distinct from scaling next-token prediction. Robotics, autonomous-vehicle, and simulation teams work from a different substrate than chat labs do.

Agent orchestration is the third branch, and the one closest to shipping product. The problem it names is coordination. A single model, however capable, cannot simply be handed a multi-step workflow with external systems, handoffs, and failure modes and be expected to finish it. The response is infrastructure: planners, supervisors, memory stores, and tool registries, wrapped around model calls.
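For readers who want to see what that infrastructure amounts to, here is a minimal sketch in Python. It is an illustration under stated assumptions, not any vendor's design and not something taken from MIT's coverage: `Orchestrator`, `plan_steps`, and `make_flaky_search` are hypothetical names, the planner is a hard-coded stand-in for a model call, and the "supervisor" is reduced to a simple retry policy.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class Orchestrator:
    """Hypothetical wrapper: tool registry, memory store, and retry policy."""
    tools: Dict[str, Callable[[str], str]]            # tool registry
    memory: List[str] = field(default_factory=list)   # memory store (a plain log)
    max_retries: int = 1                              # supervisor policy

    def run(self, plan: List[Tuple[str, str]]) -> List[str]:
        """Run (tool_name, argument) steps in order, retrying failed steps."""
        for tool_name, arg in plan:
            tool = self.tools[tool_name]
            for attempt in range(self.max_retries + 1):
                try:
                    result = tool(arg)
                    break
                except Exception as exc:
                    # Record the failure so later steps (or a human) can see it.
                    self.memory.append(f"{tool_name} failed: {exc}")
                    if attempt == self.max_retries:
                        raise
            self.memory.append(f"{tool_name} -> {result}")
        return self.memory


def plan_steps(goal: str) -> List[Tuple[str, str]]:
    # Stand-in for a planner; a real system would ask a model for the steps.
    return [("search", goal), ("summarize", goal)]


def make_flaky_search() -> Callable[[str], str]:
    # Simulated external system that times out on its first call only.
    calls = {"n": 0}

    def search(query: str) -> str:
        calls["n"] += 1
        if calls["n"] == 1:
            raise TimeoutError("upstream timeout")
        return f"3 results for {query!r}"

    return search


orchestrator = Orchestrator(
    tools={
        "search": make_flaky_search(),
        "summarize": lambda text: f"summary of {text!r}",
    },
)
log = orchestrator.run(plan_steps("Decoupled DiLoCo"))
for line in log:
    print(line)
```

In this toy run the first search call times out, the retry succeeds, and the log keeps both events. Each production concern the track names (planning, supervision, memory, tool access) would replace one of these stubs with real infrastructure.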

The framing shift has a practical edge. A team building an AI product in 2026 can no longer assume the stack question resolves to "which model." Each of the three tracks comes with its own tool ecosystem, its own benchmarks, and its own talent pool. LLMs+ pulls teams toward vector stores and RAG vendors. World models require physics simulators and robotic datasets. For agent orchestration, the stack fills with workflow engines and eval harnesses for multi-step runs.

Picking a lane now commits a roadmap, and the lanes no longer converge on the same vendor list.

Meta cuts 10% of staff in May: Meta will lay off roughly 8,000 employees in May and close around 6,000 open roles, per a memo from chief people officer Janelle Gale. The cuts follow record capital spending on AI infrastructure and data centers. (theverge.com)

Microsoft puts Agent Mode in Word, Excel, and PowerPoint: Microsoft began rolling out Agent Mode across Office apps this week, extending Copilot from suggestions to multi-step task execution. Internally the team has been pitching the feature as "vibe working." (theverge.com)

Google rebuilds Workspace around Workspace Intelligence: Google added automated functions across Gmail, Docs, Sheets, and Drive powered by a new system called Workspace Intelligence. The update lets Workspace act inside documents and inboxes without explicit prompting. (techcrunch.com)

Anthropic adds Spotify, Uber, and TurboTax connectors to Claude: Anthropic shipped personal app connectors for Audible, Spotify, Uber, AllTrails, TripAdvisor, Instacart, and TurboTax. The release pushes Claude beyond work suites into consumer transactions and entertainment. (theverge.com)

Tesla triples capex to $25B for 2026: Tesla raised 2026 capital spending to $25 billion, three times its historical average, with the CFO confirming free cash flow will go negative through year-end. The company tied much of the increase to AI compute and Optimus production. (techcrunch.com)

MIT Tech Review maps China's open-weight playbook: Chinese AI labs are shipping downloadable open-weight models while US labs gate access behind APIs, per a new MIT Technology Review piece. Developers fine-tune and self-host the Chinese models without supplier negotiations or per-token billing. (technologyreview.com)

Token bills force Anthropic and OpenAI to tighten consumer limits: The Verge reported Anthropic restricted the viral OpenClaw agent earlier this month after inference load became unsustainable. Other labs are quietly capping heavy users as the gap between subscription revenue and compute cost widens. (theverge.com)

Delve tied to second customer breach in a week: TechCrunch confirmed Delve handled compliance certification for Context AI, the agent-training startup that disclosed a security incident last week. It is the second Delve client to suffer a major breach in seven days. (techcrunch.com)

Sierra acquires YC-backed Fragment: Bret Taylor's customer-service agent startup Sierra bought Paris-based, YC-backed Fragment. Sierra did not disclose terms or a product roadmap for the acquired team. (techcrunch.com)

X replaces Communities with Grok-curated feeds: X rolled out AI-powered custom timelines built on Grok, retiring the Communities product. The feeds ship with new ad slots embedded in the recommendation flow. (techcrunch.com)

DeepMind publishes Decoupled DiLoCo for distributed training: DeepMind released Decoupled DiLoCo, a method for resilient model training across geographically split, heterogeneous compute. The technique targets fault tolerance when nodes drop mid-run, a recurring issue in cross-datacenter training. (deepmind.google)