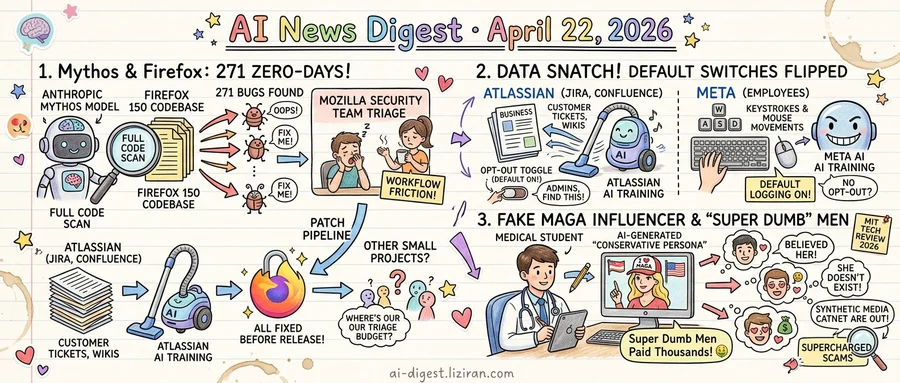

01 Mythos found 271 zero-days in Firefox 150. Mozilla says developers are in for a rough few years anyway.

The Firefox 150 codebase went through something new this quarter: a full-coverage scan by Anthropic's Mythos model. The result was 271 security vulnerabilities, all patched before the release shipped, according to Mozilla's disclosure this week.

Mozilla ran Mythos against its own tree as a controlled deployment. The model flagged candidate bugs. Human engineers on the security team triaged, confirmed, and fixed them through Mozilla's existing patch pipeline. Ars Technica reported Anthropic's CTO called the model "every bit as capable" as top security researchers. Mozilla's engineers were more measured.

Speaking to Wired, the Firefox security team said they do not expect emerging AI capabilities to upend cybersecurity in the long run. Attackers and defenders will get the same tools; equilibrium returns. Their shorter-term view was bleaker. Software developers, they told Wired, are in for a rocky transition.

That warning carries weight from this particular vantage point. Mozilla is not a cloud vendor pitching a demo. It is a browser foundation on a six-week release cadence that cannot afford to mis-triage an AI-flagged finding. When its own team says the next few years will be painful, it is describing the operational reality it just lived through.

The friction is in the workflow, not the detection. A 271-bug audit means every finding had to be reviewed, reproduced, prioritized, and patched. Reviewers must now assume any codebase, including third-party dependencies, contains latent issues a sufficiently capable model will surface. Maintainers of smaller projects, without Mozilla's security headcount, will get the same flood without the triage budget.

Mozilla is the first large open-source project to publicly report running a frontier model across its entire tree. The foundation has not said whether Mythos will become a standing part of Firefox's release pipeline or whether the audit was a one-off trial. Neither Ars Technica's nor Wired's reporting specified the Mythos variant used or the commercial terms.

Firefox 150 shipped with the 271 fixes included.

02 Atlassian flipped customer tickets to AI training by default. Meta will log every employee keystroke. Same week.

Two consent defaults flipped in seven days. Atlassian enabled customer data collection for AI model training by default, leaving admins to find and turn off the opt-out toggle themselves. Meta will begin capturing employee mouse movements and keystrokes as AI training data, Reuters reported on April 21.

The announcements sit on different sides of a boundary companies used to respect. Atlassian's data is governed by enterprise contracts. Meta's is governed by the employment relationship. Both just got rewritten toward the same end: feeding models.

Neither company started collecting data this week. Atlassian already processed customer tickets, wikis, and code repositories for product functionality. Internal security teams at Meta already captured employee telemetry for their own purposes. What changed is the default position of the switch. An admin who does nothing is now training Atlassian's models on their company's Jira and Confluence. Inside Meta, the same inaction routes an employee's keystrokes to the training pipeline.

Opt-in was the prior convention, at least in public-facing policy. Anthropic, OpenAI, and most major SaaS vendors introduced AI training consent as explicit toggles. Business tiers typically defaulted the toggle off. The assumption was that enterprise buyers and employees retained some baseline control over what got ingested.

That assumption is under pressure. Training-grade corpora of domain-specific enterprise workflows and expert human-computer interaction patterns are scarce. Public web scrapes do not produce them. Atlassian sits on roughly two decades of tracked engineering work across Jira and Confluence. Meta's workforce generates the kind of interface behavior that is hard to buy at any price.

For administrators, the question this week is where the switches live. Atlassian's AI training toggle sits in organization-level data privacy settings; turning it off requires admin access and applies only going forward, not retroactively. Meta employees had no documented opt-out at the time of reporting.

03 A med student built a MAGA influencer who doesn't exist, and says "super dumb" men paid him thousands

A medical student told Wired he has earned thousands of dollars selling photos and videos of a young conservative woman he fabricated entirely with generative tools. The character does not exist. The buyers, he told the reporter, are "super dumb" men who believe she does. He uses off-the-shelf image and video models, wraps the output in MAGA iconography, and routes payments through standard creator platforms.

He is not an outlier. Wired found a working market of operators selling access to AI-generated women built around specific political identities, with conservative personas among the most lucrative. The product is not a one-off deepfake of a real person. It is a persistent fictional creator with a posting schedule, a follower base, and a paywall.

Defenders are describing the problem at a higher altitude. MIT Technology Review this week listed "supercharged scams" among the ten AI developments it is tracking for 2026, citing the shift from generic phishing spam to targeted fraud written, voiced, and now performed by generative models. The magazine framed it as a category-level risk that begins with malicious email and extends into synthetic personas. Its piece is a taxonomy.

The mismatch is in the operating tempo. The med student is already running a business. Platforms still rely on reports of impersonation, which do not apply when no real person is being impersonated. Payment processors flag fraud patterns tied to chargebacks, not to whether the performer on camera exists. Identity verification tools check that a face is live, not that the face belongs to someone.

The new combination is what makes this harder than prior deepfake waves. A fabricated person wearing a partisan identity bypasses the two checks detection systems were built around: matching against a real individual, and matching against known synthetic media libraries. There is nothing to match.

Wired's subject said he plans to keep operating. He described the work as easier than medical school.

Anthropic takes $5B from Amazon, commits $100B back to AWS

Amazon invested $5 billion in Anthropic, and Anthropic agreed to spend $100 billion on AWS compute over the term. The circular flow ties Claude training capacity directly to Amazon's cloud revenue line. techcrunch.com

SpaceX structures $60B Cursor deal with $10B walk-away fee

SpaceX announced an option to acquire Cursor for $60 billion or pay a $10 billion break fee, ahead of the planned SpaceX/xAI/X combined IPO. The structure folds an AI coding tool into Musk's pre-IPO portfolio without committing to close. theverge.com

Tesla hid thousands of autonomous-driving incidents, some fatal, Swiss outlet reports

RTS reports Tesla concealed thousands of incidents involving its autonomous driving systems, including fatal ones, to keep testing on public roads. The disclosures arrive while regulators in multiple jurisdictions weigh approval expansions. rts.ch

OpenAI hits 4M weekly Codex users, signs Accenture, PwC, Infosys

OpenAI launched Codex Labs and named Accenture, PwC, and Infosys as deployment partners for enterprise rollouts. Weekly Codex users reached 4 million, giving OpenAI scale data on how integrators package coding agents for Fortune 500 buyers. openai.com

OpenAI ships ChatGPT Images 2.0

OpenAI released a new version of its in-chat image generation, with updated rendering and editing inside ChatGPT. The launch follows competitive releases from Google and Black Forest Labs over the past month. openai.com

Clarifai deletes 3M OkCupid photos under FTC settlement

Clarifai deleted 3 million OkCupid user photos it had used to train facial recognition models, complying with an FTC settlement. Court documents show OkCupid executives invested in Clarifai before sharing user data in 2014. techcrunch.com

YouTube opens deepfake takedown tool to celebrities

YouTube extended its likeness detection program to Hollywood public figures, letting enrolled celebrities scan the platform for AI-generated videos of themselves and request removal. The tool was previously limited to creators in YouTube's Partner Program. theverge.com

NeoCognition raises $40M seed for human-style learning agents

Founded by an Ohio State researcher, NeoCognition closed a $40 million seed to build agents that acquire domain expertise through continual learning rather than fine-tuning. The check size is large for a pre-product seed in agent research. techcrunch.com

The Verge: Starbucks ChatGPT ordering app fails on a standing order

A Verge reporter tried Starbucks' ChatGPT-powered ordering app for a drink she has ordered for years, and the bot mishandled the request. The review documents friction in voice-driven QSR ordering, a category multiple chains piloted this year. theverge.com

The Verge: AI is missing from US midterm campaign agendas despite voter concern

US polling shows broad public concern about AI, opposition to data center projects, and online anger at AI executives. Campaign messaging for the 2026 midterms has not picked up the issue, leaving a gap between voter sentiment and stated policy priorities. theverge.com

Bond launches AI social app aimed at reducing scroll time

Bond opened a social platform whose AI prompts users toward offline activities and logs them as memories rather than feed posts. The product positions itself against engagement-maximizing feeds at a moment when several US states are weighing minor-use restrictions. techcrunch.com