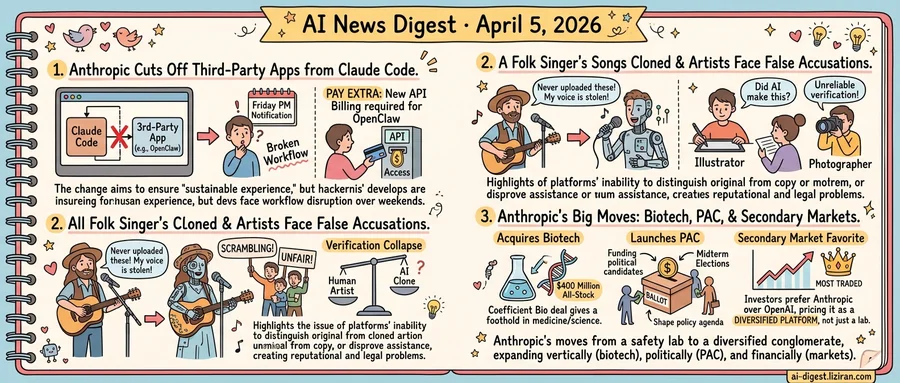

01 Anthropic Cuts Off OpenClaw From Claude Code, Demands Extra Payment

On Friday evening, Anthropic sent an email that broke thousands of developer workflows by Monday morning. Starting April 4th at 3PM ET, Claude Code subscribers can no longer apply their subscription limits to third-party harnesses, including OpenClaw. Anyone who wants to keep using OpenClaw with Claude will need to pay separately for API access.

The reaction was immediate. A Hacker News thread pulled in over 1,000 points and 771 comments, placing it among the site's most-discussed posts this year. The thread reads less like a technical discussion and more like a grievance session. Developers described scrambling over the weekend to reroute CI pipelines, rewrite editor configurations, and evaluate alternatives.

OpenClaw had become, for many, the preferred way to run Claude Code. It wrapped Anthropic's coding assistant in a flexible, open interface that let developers embed it in their own toolchains and customize behavior beyond what Anthropic's first-party client supports. The arrangement worked because Claude Code subscriptions covered the underlying model calls regardless of which client made them. Anthropic's new policy severs that link. The subscription still covers Anthropic's own client, but third-party access now requires separate API billing.

Anthropic framed the change as necessary to "ensure a sustainable and high-quality experience for all Claude Code users," according to the email obtained by The Verge. The company did not specify what triggered the timing. TechCrunch reported that the policy applies to all third-party harnesses, not just OpenClaw, suggesting a broad boundary drawn around the subscription product.

For developers, the practical cost extends beyond the price difference between subscription and API tiers. Many had built OpenClaw into daily workflows over weeks or months. Switching to direct API billing replaces a flat monthly fee with metered usage charges, which hits hardest for teams that budgeted around subscription pricing. Several HN commenters noted that the Friday-evening notice, with enforcement the next afternoon, left almost no transition window.

OpenAI faced similar pushback when it restricted plugin access in 2024. The sequence recurs: a platform lets third-party tools grow on its infrastructure, then tightens access once the dependency is established. No developer who built around OpenClaw had a contractual guarantee the arrangement would last. Anthropic has not said whether it plans to offer a discounted API tier for affected users or any migration path beyond standard pricing.

02 A Folk Singer's Songs Were Cloned by AI. Other Artists Can't Prove Theirs Weren't.

Murphy Campbell found songs on her Spotify profile that she never uploaded. Across the internet, illustrators and photographers keep hearing four words about their handmade work: "this looks like AI." One creator can't stop a machine from impersonating her. Others can't prove a machine didn't help them. Both problems share a root: no one can verify who made what anymore.

Campbell, a folk musician, discovered in January that someone had pulled her YouTube performances, run them through an AI vocal generator, and posted the results to her Spotify page. She filed takedown requests. The uploader responded with copyright counter-claims against her own songs. Spotify's content system treated the dispute as symmetric: two parties, competing claims, no mechanism to distinguish original from copy.

The Verge reported separately on creators facing the opposite accusation. Illustrators post hand-drawn work and get told it must be AI-generated. Unedited photographs draw the same suspicion. Platforms that don't label actual AI content have conditioned audiences to suspect everything. The burden now falls on creators to demonstrate authenticity, but no reliable process exists for doing so.

Campbell's case exposes how the procedural machinery fails. She performed the songs and posted them to YouTube. Someone else reprocessed the vocals with AI and claimed ownership. The platform's dispute system doesn't evaluate originality; it processes counter-notifications. A human artist and an AI-generated copy receive equal standing.

For visual artists, the harm is reputational rather than legal, but the failure mode is the same: authentication collapse. Generative models produce output indistinguishable from human work. "I made this" becomes unfalsifiable in both directions. An impersonator can assert creation; the real artist can't disprove assistance.

Impersonation and false accusation aren't separate crises. They flow from a single gap: platforms and audiences lack any reliable method to verify creative origin. Copyright infrastructure was built to mediate between humans, and content moderation to enforce policy. Neither answers the question generative AI now forces on every upload: who made this?

03 Anthropic Acquires Biotech, Launches PAC, and Tops Secondary Markets in One Week

Three moves from Anthropic landed within days of each other. Individually, each is routine for a company valued above $60 billion. Together, they trace something specific: an AI lab acquiring the institutional architecture of a platform company.

Anthropic purchased Coefficient Bio, a stealth biotech AI startup, in a $400 million all-stock deal, according to The Information and Eric Newcomer. Coefficient is early-stage. The price is not. A $400 million stock transaction for a pre-revenue biotech buys application territory, not technology. The deal gives Anthropic a domain-specific foothold where its models could generate revenue outside software.

The same week, Anthropic formed a political action committee ahead of the 2026 midterms, positioning it to fund candidates aligned with its policy agenda. AI companies have lobbied for years. A PAC is structurally different: it means picking sides in elections, writing checks to specific races, and building political relationships that outlast individual policy fights.

Capital markets delivered the third signal. Rainmaker Securities president Glen Anderson told TechCrunch that Anthropic has become the most actively traded name on the private secondary market. OpenAI, long the default prestige holding in AI secondaries, is losing ground. Investors are no longer buying Anthropic for pure AI exposure. They're pricing it as a diversified platform.

Each move is incremental in isolation, but the pattern points in one direction. The biotech deal secures a vertical beyond software. By forming a PAC before the midterms, Anthropic positions itself to shape regulation before those verticals face it. Capital markets have already priced the result: investors now trade Anthropic as a platform, not a lab.

Google followed a version of this sequence in 2014 and 2015: acquiring DeepMind, expanding its policy operation across Washington and Brussels, watching its valuation break from peers. That comparison has limits. But the playbook of simultaneous vertical expansion, political capital accumulation, and financial re-rating has precedent.

Anthropic started as a safety-focused research lab. What showed up this week looked more like a conglomerate taking shape.

Claude Code Found a 23-Year-Old Heap Overflow in the Linux Kernel's NFS Driver

Anthropic researcher Nicholas Carlini pointed Claude Code at Linux kernel source files, and it flagged a heap buffer overflow in the NFSv4.0 LOCK replay cache, present since 2003. A 112-byte buffer accepted a 1,056-byte response containing a user-controlled owner ID field, letting remote attackers read kernel memory. Kernel maintainers have merged the fix. Source: mtlynch.io
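To make the bug class concrete, here is a minimal C sketch of a fixed-size replay cache guarded by a length check. It is not the kernel's code or the merged patch: the struct `replay_cache`, the function `replay_save_checked`, and the choice to cache nothing on oversized input are hypothetical, picked only to illustrate the missing check. The 112-byte size mirrors the buffer described above.

```c
#include <stddef.h>
#include <string.h>

/* Hypothetical size mirroring the 112-byte replay buffer in the report. */
#define REPLAY_BUF_SIZE 112

/* Hypothetical cache entry; not the kernel's actual data structure. */
struct replay_cache {
    unsigned char buf[REPLAY_BUF_SIZE];
    size_t len;
};

/*
 * Copy an encoded response into the fixed-size cache only if it fits.
 * Returns 0 on success, -1 if the response is too large to cache.
 * Without the length test, memcpy would write past the end of buf.
 */
int replay_save_checked(struct replay_cache *rc,
                        const unsigned char *resp, size_t len)
{
    if (len > sizeof(rc->buf)) {
        rc->len = 0; /* mark the entry empty rather than truncating */
        return -1;
    }
    memcpy(rc->buf, resp, len);
    rc->len = len;
    return 0;
}
```

Without the `len > sizeof(rc->buf)` test, a 1,056-byte response would be written 944 bytes past the end of the buffer. Refusing to cache is only one option; a real fix might instead allocate a larger buffer for oversized responses, and this sketch makes no claim about which approach the kernel maintainers chose.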

Meta, Microsoft, and Google Build Natural Gas Plants to Power AI Data Centers

Meta, Microsoft, and Google are each constructing dedicated natural gas power plants to supply electricity to AI data centers. The projects commit billions of dollars to fossil fuel infrastructure as AI workloads push energy demand far beyond what renewables and grid capacity can deliver today. Source: techcrunch.com

Poll: Americans Prefer an Amazon Warehouse Next Door Over a Data Center

A new survey found that U.S. residents would rather live near an Amazon fulfillment center than a data center. The results reflect growing local opposition to data center construction as AI-driven demand accelerates site approvals across the country. Source: techcrunch.com

Moonbounce Raises $12M to Turn Content Moderation Policies Into Enforceable AI Behavior

Moonbounce, founded by former Facebook employee Brett Levenson and Ash Bhardwaj, raised $12 million from Amplify Partners and StepStone Group. The platform converts an organization's written content moderation rules into machine learning models that enforce those standards consistently across AI applications. Source: techcrunch.com

Self-Distillation Without Verifiers or Teachers Boosts Code Generation by 13 Points

Researchers fine-tuned Qwen3-30B-Instruct on its own sampled code outputs using standard supervised learning, with no verifier, teacher model, or reinforcement learning involved. Pass@1 on LiveCodeBench v6 jumped from 42.4% to 55.3%, with gains concentrated on harder problems. The method generalizes across Qwen and Llama models at 4B, 8B, and 30B parameter scales. Source: arxiv.org

CORAL Framework Lets LLM Agents Autonomously Evolve Strategies for Open-Ended Problems

CORAL replaces fixed heuristics in LLM-based discovery with long-running agents that explore, reflect, and collaborate through shared memory. The agents accumulate knowledge and adapt strategies without hard-coded exploration rules. The authors describe it as the first framework to target fully autonomous multi-agent evolution on open-ended research tasks. Source: huggingface.co

SKILL0 Internalizes Agent Skills Into Model Weights via In-Context Reinforcement Learning

Current LLM agents load skill packages at inference time, but retrieval noise and token overhead limit performance. SKILL0 fine-tunes skills directly into model parameters through agentic reinforcement learning, eliminating the need to retrieve and inject procedural knowledge at runtime. Source: huggingface.co

Steerable Visual Representations Let Users Direct ViT Attention With Text Prompts

Pretrained vision transformers like DINOv2 default to the most visually prominent features, with no way to redirect focus. This work adds text-based steering to ViT representations, letting users point the model at less obvious visual concepts without sacrificing spatial detail the way multimodal LLMs do. Source: huggingface.co