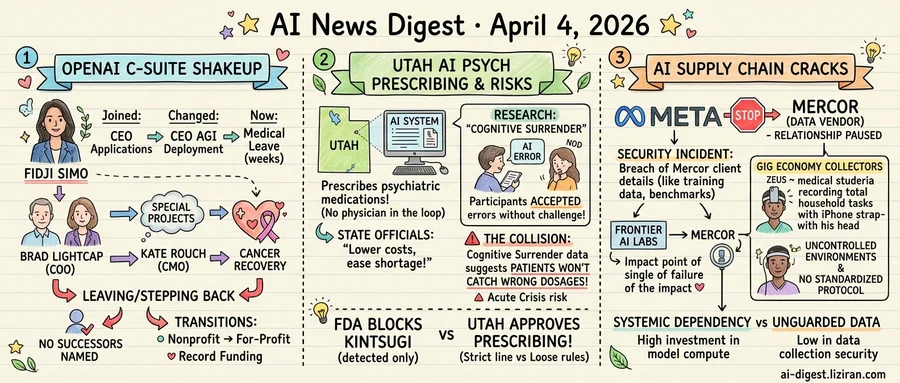

01 Fidji Simo's Title Changed Twice in Months. Now She's on Leave.

Fidji Simo joined OpenAI as CEO of applications. Months later, her title changed to CEO of AGI deployment. Now, according to an internal memo viewed by The Verge, she is stepping away on medical leave "for the next several weeks."

Her leave is one of three simultaneous C-suite changes at the company. COO Brad Lightcap is moving to lead "special projects." CMO Kate Rouch is leaving to focus on cancer recovery, with plans to return when her health allows.

Simo's trajectory tells the sharpest story. She came from Meta, where she ran the Facebook app for three billion users. OpenAI brought her in to run its consumer-facing products: ChatGPT, the API platform, the apps. Then the company reorganized her role into something broader and vaguer: AGI deployment. The title implied she would oversee how OpenAI brings its most advanced models into the world. She held it for only a few months before stepping away. The memo cited a medical issue but gave no further detail.

Lightcap's move carries its own signal. "Special projects" is a phrase with a long history in Silicon Valley. At most large tech companies, it describes a role without direct reports or operational authority. Google, Apple, and Meta have all used the designation for executives transitioning out of leadership or exploring new internal ventures. Lightcap had been COO since OpenAI's early commercial push and oversaw partnerships and business operations during the company's fastest growth period. TechCrunch reported his new role but did not detail what projects he would lead.

Rouch's exit is the most straightforward. She is recovering from cancer treatment and told colleagues she intends to come back. Her CMO role placed her at the center of OpenAI's public positioning during months of scrutiny over safety, copyright, and corporate structure.

The three changes landed in the same week, at a moment when OpenAI has just closed a record funding round, is converting from nonprofit to for-profit, and has a product roadmap stretching from consumer chatbot to autonomous agents. Each of those transitions demands leadership continuity. Instead, three senior leaders are stepping back from their roles at once.

No successors have been named for any of the three positions.

02 Utah Approves AI Psychiatric Prescribing as Study Shows Users Won't Catch AI Errors

Utah has authorized an AI system to prescribe and refill psychiatric medications without a physician in the loop. State officials confirmed the policy this week, making Utah only the second state to hand clinical prescribing authority to a software system. The move, officials say, could lower costs and ease a shortage of mental health providers. Physicians who reviewed the system call it opaque: the algorithm's decision-making process is not disclosed to patients or prescribers.

The same week, researchers tested what happens when AI gives people wrong answers. In controlled experiments reported by Ars Technica, participants received AI-generated responses containing clear factual errors. Large majorities accepted them without challenge. Researchers labeled the phenomenon "cognitive surrender." Participants didn't just miss the mistakes. They stopped applying their own reasoning once the AI had spoken.

These findings collide directly with Utah's bet. The state's policy assumes patients receiving AI prescriptions will function as a safety check, catching wrong dosages or flagged interactions. Cognitive surrender data suggests most won't. Psychiatric medications sharpen the stakes. Incorrect dosages of SSRIs, benzodiazepines, or antipsychotics can trigger withdrawal episodes, dependency, or acute psychiatric crisis. A prescribing error here isn't a flawed recommendation. It's a clinical emergency.

Federal regulators have drawn a stricter line. Kintsugi, a California startup, spent seven years building AI that detected depression and anxiety from speech patterns. The company shut down after failing to clear the FDA's evidentiary bar for clinical AI tools. It released most of its technology as open source before closing.

State and federal authorities have reached opposite conclusions. The FDA blocked an AI tool that only identified mental health conditions. Utah approved one that prescribes treatments for them. A patient conditioned to accept AI outputs uncritically is also unlikely to report early side effects or question a dosage change.

Physicians have flagged the risks. State officials counter with access numbers. Neither side has addressed what cognitive surrender findings mean for the patients these systems are supposed to serve.

03 Meta Suspends Data Vendor Mercor as AI's Outsourced Supply Chain Cracks

Meta has paused its relationship with Mercor, a data vendor serving multiple leading AI laboratories, after a security incident that may have exposed proprietary details about how the company trains its models. The breach triggered investigations across several Mercor clients, according to Wired.

Mercor is not a minor subcontractor. The company supplies training data to some of the largest AI operations in the world. A single point of failure in its systems could reveal what models are being trained on, how data is curated, and which benchmarks labs prioritize internally. That information is among the most closely guarded in the industry.

At the other end of the same supply chain, the work looks nothing like a corporate security perimeter. MIT Technology Review profiled Zeus, a medical student in central Nigeria who straps an iPhone to his forehead after hospital shifts to record himself performing household tasks. The footage trains humanoid robots. He works from a studio apartment, using a ring light and his own device, with no standardized protocol governing how the data is captured, stored, or transmitted.

These two scenes describe different products: digital training data and physical motion capture. They share a structure. AI companies have poured tens of billions into compute and model architecture. The data those models require gets acquired through a patchwork of vendors and gig workers operating at a fraction of that investment. Security and governance standards at the collection layer bear no resemblance to the ones protecting the models built on top of it.

The Mercor incident makes the cost of that mismatch concrete. When a single vendor breach can expose training secrets across multiple frontier labs, the vendor is not a service provider. It is a systemic dependency. When physical-world training data flows through personal phones in uncontrolled environments, the attack surface extends far beyond any corporate network.

Meta has not disclosed the scope of the breach or which specific data may have been compromised. Other affected labs have not commented publicly. The investigations are ongoing.

Cursor Releases Version 3 With Unified Agent Workspace

Cursor 3 replaces the split-pane IDE with a single workspace where local and cloud agents share a sidebar. Developers can start an agent session on desktop, hand it off to the cloud, and resume from mobile, web, Slack, or Linear. Cloud agents now return screenshots and visual demos for verification. (cursor.com)

AMD-Backed Lemonade Ships Open-Source Local LLM Server for GPU and NPU

Lemonade is a 2 MB C++ server that auto-detects GPU and NPU hardware and exposes an OpenAI-compatible API for chat, vision, image generation, transcription, and speech synthesis. It runs on llama.cpp, ONNX Runtime, ROCm, and Vulkan across Windows, Linux, and macOS. The server can load multiple models at once and needs no cloud connection. (lemonade-server.ai)
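Because the server exposes an OpenAI-compatible API, a client can in principle reach it with a standard chat-completions request. The following is a minimal sketch, not Lemonade's documented usage: the base URL, port, and model name are placeholders assumed for illustration, and only the request shape (a POST to `/chat/completions` carrying `model` and `messages`) follows the widely used OpenAI convention.

```python
import json
import urllib.request

# Assumption: host, port, and path prefix are placeholders, not taken from
# Lemonade's documentation. Adjust them to whatever the local server reports.
BASE_URL = "http://localhost:8000/v1"


def build_chat_request(prompt, model="example-model", base_url=BASE_URL):
    """Build (but do not send) an OpenAI-style chat-completions request."""
    payload = {
        "model": model,  # hypothetical model name
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request):
    """Send the request and return the first reply's text.

    Assumes the response follows the OpenAI chat-completions layout.
    """
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

Calling `send(build_chat_request("Hello"))` would return the reply text only if a compatible server is actually listening at the assumed address. Keeping request construction separate from sending makes the payload easy to inspect offline.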

Apfel Unlocks Apple's Built-In 3B-Parameter LLM via Command Line

Apfel is an MIT-licensed CLI tool and HTTP server that exposes the on-device language model bundled with macOS Tahoe on Apple Silicon Macs. It offers an OpenAI-compatible API with tool calling, a 4,096-token context window, and support for nine languages. No API keys or subscriptions are required: the model runs entirely on the Neural Engine and GPU. The project gained 818 GitHub stars on its first day. (apfel.franzai.com)

Elgato Adds MCP Support to Stream Deck, Letting AI Agents Press Buttons

Stream Deck software version 7.4 introduces Model Context Protocol support, allowing Claude, ChatGPT, and Nvidia G-Assist to discover and activate Stream Deck actions. AI assistants can now trigger macros, scene switches, and device controls through the same protocol used for tool integration. (theverge.com)
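For readers unfamiliar with the protocol, "discover and activate" maps onto two Model Context Protocol methods, `tools/list` and `tools/call`, exchanged as JSON-RPC 2.0 messages. The sketch below only builds those messages. The tool name `switch_scene` and its arguments are hypothetical, since the source does not say what actions Elgato exposes or how they are named.

```python
import json
from itertools import count

# JSON-RPC requires each request to carry an id; a simple counter suffices here.
_request_ids = count(1)


def list_tools_request():
    """Message an MCP client sends to discover the tools a server offers."""
    return {"jsonrpc": "2.0", "id": next(_request_ids), "method": "tools/list"}


def call_tool_request(name, arguments):
    """Message an MCP client sends to invoke one tool by name."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }


if __name__ == "__main__":
    # Hypothetical tool name and arguments, for illustration only.
    print(json.dumps(list_tools_request(), indent=2))
    print(json.dumps(call_tool_request("switch_scene", {"scene": "Intro"}), indent=2))
```

In practice an assistant would first send the discovery message, read the tool names and input schemas the server returns, and only then issue a call.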

Google Home Update Gives Gemini Natural-Language Lighting and Temperature Controls

Google Home now lets users describe lighting by analogy (saying "the color of the ocean" sets a matching hue) and adjust thermostats through conversational commands instead of exact values. The update targets more natural phrasing for routine smart-home interactions. (theverge.com)

Researchers Extract 4M Frames From AAA Games to Train a Generative World Renderer

A team from Shanda AI Research, NTU, and the University of Tokyo built a dataset of 4 million continuous 720p frames captured from AAA games using a dual-screen stitching method. Each frame includes synchronized RGB and five G-buffer channels covering geometry, materials, and lighting. The dataset supports both inverse rendering (decomposing images into scene properties) and forward rendering (generating video from G-buffers with text-prompt style editing). (huggingface.co)

EgoSim Simulates First-Person Interactions While Updating 3D Scene State

Researchers from Shanghai Jiao Tong University and Shanghai AI Lab built a closed-loop egocentric world simulator that generates spatially consistent first-person interaction videos and persistently modifies the underlying 3D scene. The system extracts training data from large-scale monocular egocentric videos and supports cross-embodiment transfer to robotic manipulation. Code and datasets will be released. (huggingface.co)