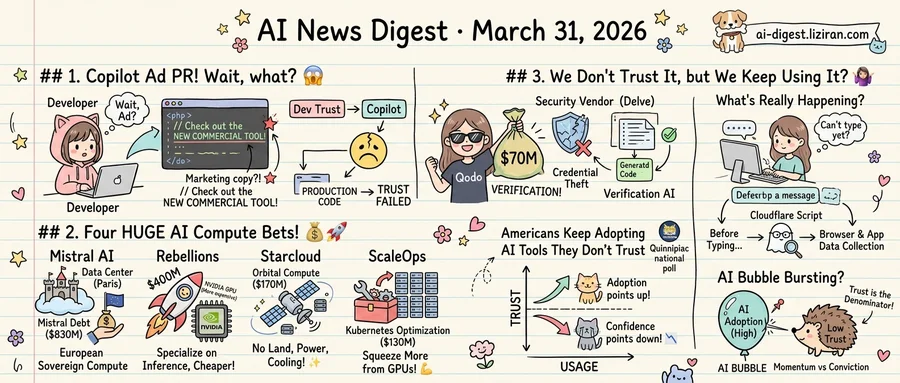

01 A Developer Found an Ad in His Copilot-Generated Pull Request

Zach Manson was reviewing a pull request when he spotted something that didn't belong. A line generated by GitHub Copilot contained what appeared to be a product recommendation. It wasn't a dependency or library call. The text read like marketing copy for a commercial tool, slotted into otherwise functional code.

He published his findings. The Hacker News thread that followed drew 1,430 points and 595 comments, making it one of the platform's most active discussions that week.

Manson's post documented his debugging process. This wasn't a hallucination in the usual sense. The model didn't fabricate a function or misname a variable. It produced a string resembling an advertisement, placed inside a code suggestion. Whether the insertion came from training data contamination (promotional text in the model's corpus) or from something else remains unresolved. GitHub has not publicly explained the mechanism.

The community response split along familiar lines. Some developers pointed to known cases of models regurgitating training data verbatim. Others raised a harder question: if an AI coding assistant can insert an ad once, what prevents it from doing so at scale? No one outside GitHub can verify whether this was a bug or a feature.

The same week, a separate failure hit a different part of the AI toolchain. LiteLLM, a widely used AI gateway startup, disclosed that credential-stealing malware had compromised its systems. The breach was traced back to Delve, a company LiteLLM had hired to handle two security compliance certifications. A vendor that was supposed to verify security instead became the attack's entry point. LiteLLM has since cut ties with Delve, according to TechCrunch.

Both incidents follow the same pattern. Developers extended default trust to tools in their workflow without independent checks. Teams assumed Copilot was safe for writing production code. No one audited Delve before handing it credentials. In both cases, that trust failed in ways users didn't anticipate.

The market is responding with capital. The same week, Qodo, an Israeli startup building AI code verification tools, announced a $70 million round. The company's pitch: as AI generates more production code, someone needs to verify it independently. That funding signals a shift: AI-assisted development has entered a phase where auditing AI output matters as much as generating it.

02 Four AI Compute Bets Draw $1.5 Billion in a Single Day

On March 30, investors poured $1.53 billion into four AI infrastructure companies. None of them compete with each other. That's the point.

The largest check went to Mistral AI: $830 million in debt financing to build a data center outside Paris. Debt, not equity. Mistral chose to borrow rather than dilute because it intends to own the facility outright, with operations targeted for Q2 2026. The structure signals a bet on long-term physical control over compute, not a bridge to the next equity round. It also carries a sovereignty argument: Europe's most prominent AI lab will run on European soil, financed by European capital markets.

At the chip layer, South Korean startup Rebellions closed $400 million at a $2.3 billion valuation. The company designs processors built for AI inference and plans to go public later this year. Inference workloads now consume more compute than training in many production deployments. Rebellions is betting specialized silicon can undercut Nvidia on cost-per-token for that work.

Then there is the most radical proposal. Starcloud raised a $170 million Series A to put data centers in orbit, reaching unicorn status 17 months after Y Combinator demo day. No YC company has gotten there faster. Its pitch is that orbital compute sidesteps the land acquisition, grid connection, and cooling constraints of terrestrial sites. Whether it can deliver competitive latency and uptime is unproven. Investors valued the company at over $1 billion on a premise: terrestrial sites are running out of power and permits faster than demand is growing.

ScaleOps took the opposite approach. Its $130 million Series C funds software that optimizes Kubernetes clusters in real time, squeezing more work from GPUs that already exist. No new facilities, no new chips. Just higher utilization on current hardware.

Four deals, four layers of the same problem: ownership, silicon, location, efficiency. Investors are not converging on a single answer to the compute shortage. They are funding every layer at once because no single layer can close the gap alone.

03 Americans Keep Adopting AI Tools They Don't Trust

A Quinnipiac national poll released March 30 found AI usage among Americans climbing while trust in the technology is falling. Most respondents said AI cannot be trusted to deliver accurate results. A majority also called for stronger federal regulation. Separately, 15% said they'd be willing to report to an AI supervisor at work. That's roughly one in seven American adults.

Set that willingness against the rest of the data: a growing user base that considers its own tools unreliable, opaque, and under-regulated. Adoption points up. Confidence points down.

The distrust got specific the same week. A security researcher published a reverse-engineering analysis of ChatGPT's front-end code. The finding: a Cloudflare script executes before a user types a single character, reading the React application's internal state. Users cannot type until the script finishes. The researcher traced the behavior step by step, showing that browser and application data are collected as a precondition for using the chat interface. The post collected 935 points and 599 comments on Hacker News. Developers in the thread expressed less surprise than confirmation: many had already suspected data collection before the first keystroke.

Neither OpenAI nor Cloudflare has publicly addressed the analysis. Researchers had flagged Cloudflare's Turnstile system as opaque before, but the specifics were speculative. This time someone decrypted the program and published exactly what it reads and when.

Quinnipiac respondents who said they don't trust AI were expressing a feeling. The reverse-engineering post converted that feeling into auditable code.

A separate essay, "How the AI Bubble Bursts," appeared on Hacker News the same day. Its argument: AI adoption driven by momentum rather than conviction follows a recognizable investment pattern. Usage metrics climb because switching costs are high and defaults are sticky. Confidence in the underlying value erodes. If adoption is the numerator and trust is the denominator, the ratio is climbing from both ends: the top keeps growing while the bottom shrinks.

The poll, the code teardown, and the bubble thesis all surfaced within 48 hours. None references the others.

Microsoft and Amazon Race to Ship Consumer AI Health Tools
Microsoft launched Copilot Health, letting users connect medical records and ask questions inside its Copilot app. Amazon expanded Health AI, previously limited to One Medical members, to a broader audience. Both tools rely on LLMs to interpret personal health data, but independent validation of their accuracy remains thin. (technologyreview.com)

Court Gives Authors Easier Path to Challenge Meta's Torrenting of Training Data
A federal judge lowered the legal bar for authors suing Meta over torrenting copyrighted books to train AI models. Meta now hopes a recent Supreme Court piracy ruling will block the class action. The decision could shape how courts handle bulk data acquisition for AI training. (arstechnica.com)

Okta Builds Identity Layer for AI Agents
Okta CEO Todd McKinnon is steering the company toward managing identity and access for autonomous AI agents, not just human employees. As enterprises deploy agents that act across internal apps and external APIs, authenticating non-human actors becomes a core security problem. (theverge.com)

Mantis Biotech Uses Synthetic Data to Build Digital Twins of the Human Body
Mantis Biotech combines disparate medical data sources to generate synthetic datasets representing human anatomy, physiology, and behavior. The approach targets a persistent bottleneck: real patient data is scarce, siloed, and legally restricted. The company aims to give drug developers and researchers simulated populations for testing. (techcrunch.com)

OpenAI and Gates Foundation Train Asian Disaster Response Teams on AI Tools
OpenAI ran a workshop with the Gates Foundation focused on applying AI to disaster response across Asia. The program targets emergency teams that lack the technical capacity to adopt AI independently. (openai.com)

Trace2Skill Automates Skill Extraction for LLM Agents
Researchers released Trace2Skill, a framework that distills reusable skills from agent task trajectories without manual authoring. The system mirrors how human experts write procedures: it analyzes full execution traces, identifies generalizable patterns, and packages them as transferable agent skills. (huggingface.co)