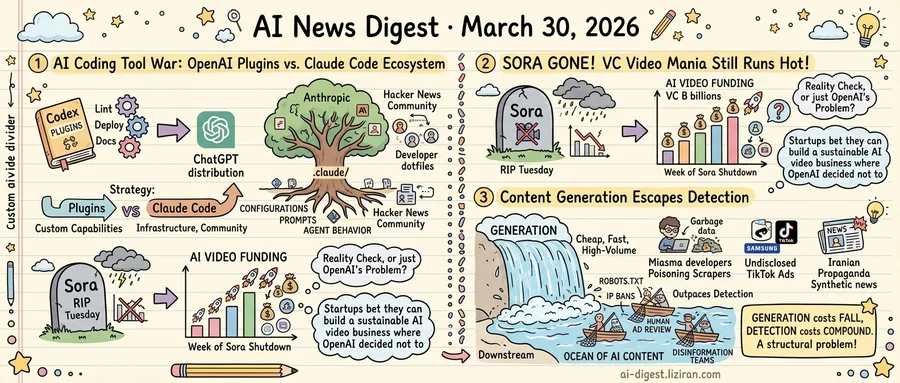

01 OpenAI Adds Plugins to Codex as Claude Code's Developer Ecosystem Digs In

OpenAI shipped a plugin system for Codex this week, pushing the tool past code generation into broader development workflows. "Competitors have offered something similar for a while," Ars Technica noted.

The chief competitor is Anthropic's Claude Code. A blog post dissecting its .claude/ configuration folder drew 612 points and 257 comments on Hacker News, placing it among the most-discussed developer tooling posts this quarter. The comments weren't product reviews. They were architecture discussions: how to structure prompt files, version-control agent behavior, and share configurations across teams. Developers are treating Claude Code as infrastructure, not a feature.

That's the gap OpenAI is trying to close. Codex plugins let developers add custom capabilities on top of the base coding agent: linting rules, deployment scripts, documentation generators. The system mirrors what Claude Code's .claude/ directory already enables: persistent configuration, reusable commands, and project-specific context that survive between sessions. OpenAI is building the feature. Anthropic already has the community using it.

The two companies are running different plays. OpenAI controls distribution. Codex sits inside ChatGPT, giving it a channel no coding-focused competitor can match. A plugin system turns that user base into a development platform without requiring anyone to download a CLI or configure a local environment.

Anthropic's advantage is stickier but slower. Claude Code requires terminal setup and manual configuration. That friction filters for committed developers who invest time customizing their environments, writing CLAUDE.md instruction files, and building workflows they won't easily abandon. Once a team's agent configuration is version-controlled alongside the codebase, switching tools means rewriting that layer from scratch. The Hacker News thread shows what that investment looks like: developers sharing configuration patterns the way they once shared dotfiles.

OpenAI is betting breadth converts to depth. Ship plugins to millions, and some fraction build habits. Anthropic's wager runs opposite: depth creates lock-in that breadth can't displace. A developer who has spent hours tuning agent behavior through config files faces real switching costs.

Both strategies have clear failure modes. Plugins without an engaged community produce an empty marketplace. A devoted niche without distribution stays niche. The AI coding tool war has moved past which model writes better code. It now turns on which ecosystem developers choose to build inside.

02 OpenAI Killed Sora on a Tuesday. That Same Week, VCs Poured Billions Into AI Video.

Tuesday morning at OpenAI started like any other. The Sora team was running its video-generation app. Plans for video integration in ChatGPT were moving forward. By evening, all of it was dead.

The speed caught even insiders off guard. According to The Verge's reconstruction, the shutdown compressed into a single day: cost pressures and competitive realities overtook whatever technical vision had kept Sora alive. An executive was reshuffled. The app was scrapped, and the ChatGPT video roadmap was reversed. OpenAI didn't scale back. It killed the product entirely and wound down partnerships tied to it.

The trigger was economics. Sora's debut in February 2024 had set off a wave of AI video startups and billions in venture funding. But its own compute costs were enormous, and the gap between output quality and users' willingness to pay never closed. Chinese startups and open-source projects caught up, generating comparable video at a fraction of the cost. That early lead evaporated before anyone could monetize it. Twenty-five months after stunning the internet, the company behind Sora concluded the business wasn't there.

TechCrunch framed the shutdown as a potential "reality check moment" for AI video as a category. The question isn't whether Sora failed. It's whether the failure was specific to OpenAI's approach or structural to the entire market.

Venture capital offered its own answer that same week. Billions continued flowing into AI video startups, according to TechCrunch's reporting. The company that created the AI video category had walked away from it. Capital did not follow it out. Investors are betting smaller, focused teams can build the business that a frontier lab with the world's largest compute budget decided wasn't worth the burn.

OpenAI can redirect engineering toward its core products. Startups still in the race don't have that fallback. They need AI video to work as a standalone business, not a research showcase. The company that could most afford to keep trying was the first to stop.

03 AI Content Generation Now Outpaces Every Layer of Detection

Three stories from unrelated corners of the internet surfaced this week. Each exposed the same structural flaw.

A developer released Miasma, an open-source tool that feeds AI web scrapers an endless stream of poisoned data. The project drew 269 points and 202 comments on Hacker News, reflecting frustration among developers who have run out of conventional defenses. Robots.txt files and IP bans no longer stop crawlers. Miasma concedes the point: if you can't block the bots, drown them in garbage. It generates plausible but false content faster than scrapers can sort through it.
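
The general idea of an endless decoy stream can be illustrated with a short sketch. This is not Miasma's actual implementation, which the reporting does not describe in detail; the function name `decoy_page`, the word list, and the URL scheme are all hypothetical, and random word salad stands in for the "plausible but false" text a real tool would produce.

```python
import hashlib
import random

# Hypothetical vocabulary; a real tool would draw on a far larger corpus.
WORDS = ["quantum", "harvest", "ledger", "orbit", "granite", "protocol",
         "meadow", "cipher", "lantern", "turbine", "archive", "signal"]


def decoy_page(path: str, sentences: int = 5, links: int = 3) -> str:
    """Return a deterministic nonsense HTML page for the given URL path."""
    # Seed from the path so the same URL always yields the same page,
    # which makes the decoy look like stable, real content to a crawler.
    seed = int.from_bytes(hashlib.sha256(path.encode()).digest()[:8], "big")
    rng = random.Random(seed)

    body = []
    for _ in range(sentences):
        words = [rng.choice(WORDS) for _ in range(rng.randint(6, 12))]
        body.append(" ".join(words).capitalize() + ".")

    # Every page links to further generated paths, so the crawl never ends.
    base = path.rstrip("/")
    hrefs = [f"{base}/{rng.getrandbits(32):08x}" for _ in range(links)]
    anchors = "".join(f'<a href="{h}">{h}</a>' for h in hrefs)
    return f"<html><body><p>{' '.join(body)}</p>{anchors}</body></html>"
```

Calling `decoy_page("/trap")` returns a page whose links lead to more generated pages, each produced on demand at negligible cost. That cost gap between generating and sorting is what the approach relies on.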

On TikTok, a Verge reporter spent several weeks documenting AI-generated ads running without disclosure labels. Samsung promotions and other campaigns showed visible signs of synthetic generation. The reporter could spot them. TikTok's systems could not, or at least did not, flag them. Its ad review pipeline, built for human-produced content, has no reliable way to catch AI material at scale.

Then 404 Media reported that Iranian propaganda operations have adopted AI-generated content as a primary tool. The output is cheap, fast, and high-volume. State-backed operators produce synthetic news and social posts that platforms struggle to distinguish from organic content. Detection teams built for human-written disinformation face a volume problem they were never designed to handle.

Three cases at three different scales: individual, platform, geopolitical. They share a root cause. Generating synthetic content requires one model and minimal compute. Detection must cover every generation method, every model version, every output format. That asymmetry is structural, not temporary. Each new release widens it.

Miasma is a developer building a moat by hand. TikTok's labeling gap reveals governance falling behind its own ad pipeline. When that gap scales to geopolitics, it becomes a weapon. The progression from self-defense to platform failure to weaponization follows one logic: generation costs fall, detection costs compound.

NeurIPS Reversed a Policy Barring Chinese-Affiliated Researchers After Backlash

The leading AI research conference announced a policy change that would have restricted submissions from researchers with Chinese institutional ties. Chinese AI researchers pushed back publicly within days, and NeurIPS reversed course. The episode exposed how export-control politics are spilling into academic peer review. Source: wired.com

OpenAI Rolls Out Ads Across ChatGPT's Free Tier in the US

Wired tested 500 prompts on ChatGPT and cataloged the ads appearing alongside responses. The ads are contextual, tied to prompt topics, and limited to free-tier users for now. OpenAI joins Google and Meta in building an ad-supported AI product. Source: wired.com

Apple Marks 50th Anniversary With Bet That iPhones Will Outlast the AI Shift

Apple executives told Wired the company plans to keep the iPhone as its core product for decades, layering AI capabilities on top rather than replacing the form factor. The interviews came as the company turns 50 and faces pressure to show a coherent AI strategy beyond Siri. Source: wired.com

New AI Documentary Interviews CEOs but Pulls Its Punches

The AI Doc: Or How I Became an Apocaloptimist puts Sam Altman and other executives on camera to address existential risk. Wired's review argues the film lets its subjects frame the conversation on their own terms. Source: wired.com