01 Google Launched Three AI Search Features in One Week, All Skipping the Text Box

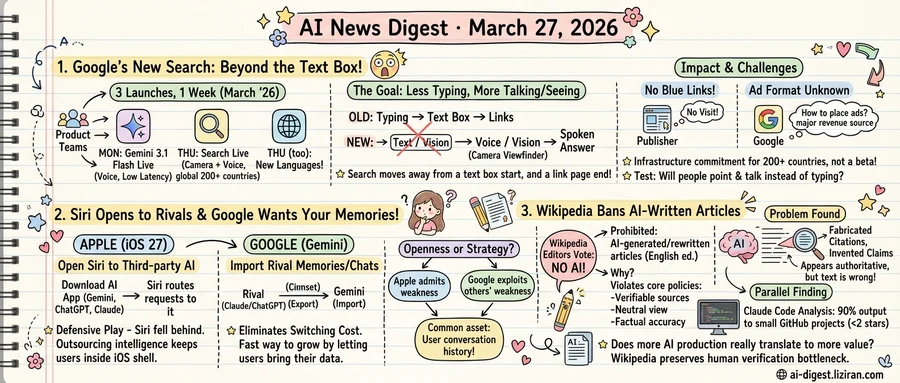

On Monday, Google released Gemini 3.1 Flash Live, a model tuned for low-latency voice interaction, across its products. Search Live followed on Thursday, expanding from the US to more than 200 countries and territories. The same day, Google added support for dozens of new languages. That makes three moves from separate product teams in four days.

They share a structural premise: the next version of search won't start with a text box and won't end with a page of links.

Flash Live makes talking to Gemini less awkward. Google says the model handles interruptions, pauses, and conversational turn-taking more naturally than its predecessor. Search Live goes further. Users point their phone camera at an object or scene, describe what they see, and receive a spoken answer in real time. The feature first launched as a US-only English tool. This week it expanded to every market where Google's AI Mode is available, now spanning more than 200 countries, according to The Verge.

Each launch chips away at a different part of traditional search. Flash Live swaps typing for speech. With Search Live, the query box becomes a camera viewfinder. Multilingual support removes the English-only constraint that kept both features in a single market. Together, the three describe search built on voice and vision, not text.

When the answer is spoken, there are no blue links to click. There's no featured snippet to optimize. The user asks, the AI answers, and the session closes without a page load. Publishers that built distribution around search rankings still get ranked. They just don't get the visit.

Google hasn't said how it will handle attribution or ad placement in these conversational sessions. Search Live shows sources in some visual responses, but spoken answers don't carry clickable URLs. No company has shipped a proven ad format for real-time voice search, and Google hasn't previewed one. Search ads account for the majority of Google's revenue.

Google is rolling this out to every market where AI Mode exists, all at once. That's not a beta expansion; it's an infrastructure commitment. The test is whether users will swap two decades of typing for pointing a camera and talking.

02 Apple Opens Siri to Rival Chatbots; Google Moves to Poach Their Users

Apple's iOS 27 will let users plug third-party AI chatbots into Siri, Bloomberg's Mark Gurman reports. Download Gemini, Claude, or ChatGPT from the App Store, link it, and Siri routes requests it can't handle to your chosen model. The mechanism resembles how Apple already integrates ChatGPT, but now any chatbot developer can compete for that slot.

This is a defensive play. Siri has fallen behind every major AI assistant in reasoning, generation, and multi-turn conversation. Rather than close the gap alone, Apple is outsourcing the hard part. Users stay inside the iOS shell. Apple keeps its distribution lock. But the intelligence powering Siri's answers will increasingly belong to someone else.

Google is playing the opposite game. Gemini's new desktop features, "Import Memory" and "Import Chat History," let users copy over everything a rival chatbot already knows about them. The process is manual: copy your memory export from Claude or ChatGPT, paste it into Gemini. The intent is unmistakable. Google wants to eliminate the switching cost that keeps users loyal to a competitor.

Anthropic rolled out a similar memory import tool for Claude earlier this month. Google followed within weeks. Memory portability is now a standard competitive weapon. Every major AI lab recognizes that months of accumulated preferences, context, and conversation history create real lock-in. The fastest way to grow is to let users bring that data with them.

The two strategies reveal where each company thinks it stands. Apple lacks a competitive model and knows it. Opening Siri to third parties preserves the iPhone as the default interface without requiring Apple to win the AI race on its own. Google has a competitive model and wants more users on it. Lowering migration friction is a bet that Gemini can retain anyone who tries it.

Both companies call this openness. Apple's version admits weakness; Google's exploits it in others. The common denominator: both treat user conversation history as the asset worth fighting over.

03 Wikipedia Bans AI-Written Articles After Editors Find Fabricated Citations

English Wikipedia's volunteer editors have spent two decades building the internet's largest encyclopedia entry by entry. Last week, they voted to keep it that way.

Wikipedia formally prohibited AI-generated or AI-rewritten articles in an update to its editing guidelines. The policy, added late last week, states that AI-written content tends to violate "several of Wikipedia's core content policies." Those policies require verifiability, a neutral point of view, and no original research. Large language models, the community concluded, fail those standards consistently enough to warrant a blanket prohibition rather than case-by-case review.

Editors had been catching AI-generated submissions for months before the rule took shape. Those articles looked polished on the surface. They read fluently, cited sources, and followed Wikipedia's formatting conventions. But when volunteers dug in, they found fabricated citations, invented claims, and confident assertions unsupported by the referenced material. For an encyclopedia built on volunteer verification, this was a new kind of failure: text that appeared authoritative but demanded more scrutiny than human-written drafts ever required.

The policy covers the English-language edition, which holds over 6.8 million articles and serves as the project's flagship. Other language versions will decide independently. English Wikipedia's editorial norms, however, tend to set the baseline for the broader project.

Wikipedia's decision landed in a week when separate data surfaced a parallel finding in software. An analysis of Claude Code activity showed that 90% of AI-generated code output flows to GitHub repositories with fewer than two stars. Wikipedia's concern is factual integrity. The code data reflects something distinct: how AI output concentrates in low-visibility projects with little apparent reuse. Both findings test the same assumption: that more AI production translates to more value.

Wikipedia answered by preserving its bottleneck. It remains one of the last large-scale platforms where unpaid human volunteers, not automated systems, review what gets contributed.

Senators Warren and Hawley Demand Mandatory Energy Reporting for Data Centers: The bipartisan pair sent a letter to the Energy Information Administration requesting "comprehensive, annual energy-use disclosures" from data centers, made publicly available. The push would establish mandatory reporting requirements amid growing concern over AI infrastructure power consumption. (theverge.com)

Meta Prepares Two New Ray-Ban AI Glasses Models: FCC filings reveal Meta and EssilorLuxottica are readying the next generation of Ray-Ban AI glasses. The filings point to two distinct models, building on the current smart glasses line that added multimodal AI features last year. (theverge.com)

Google DeepMind Publishes Research on AI Manipulation Risks: DeepMind released a study examining how AI systems could harmfully manipulate users across domains including finance and health. The research led to new internal safety measures targeting persuasion and deception vectors. (deepmind.google)

Google Research Introduces TurboQuant for Extreme Model Compression: TurboQuant is a new quantization method aimed at compressing AI models far below standard precision levels while preserving output quality. The technique targets deployment scenarios where memory and compute budgets are tight. (research.google)

Webtoon Adds AI Translation and Localization to Creator Platform: Webtoon's Canvas platform for user-uploaded comics will roll out AI-powered localization tools to help creators reach international audiences. The update is part of a broader overhaul designed to increase creator revenue and global distribution. (theverge.com)

Apple Music's AI Playlist Playground Struggles with Genre Accuracy: Apple's new AI-powered playlist feature frequently mismatches genres, returning vocal tracks for instrumental requests and mixing unrelated styles. Early testing shows the tool fails on specific subgenre prompts that streaming competitors handle better. (theverge.com)

CUA-Suite Releases Largest Open Dataset for Computer-Use Agents: Researchers published CUA-Suite, a collection of human-annotated video demonstrations for training desktop automation agents. The dataset addresses a key bottleneck: prior open datasets topped out at roughly 20 hours of video, far too little for training general-purpose computer-use agents. (huggingface.co)

UniGRPO Proposes Unified Reinforcement Learning for Text-and-Image Generation: A new framework applies group-relative policy optimization to models that interleave text reasoning with image generation via flow matching. The method trains the model to reason about a prompt before generating the image, improving prompt adherence. (huggingface.co)

EVA Uses Reinforcement Learning to Make Video Understanding Models More Efficient: EVA replaces manually designed video-agent workflows with a learned policy that selects which frames to process, cutting redundant computation. The approach outperforms uniform sampling and prior agent-based methods on standard video QA benchmarks. (huggingface.co)