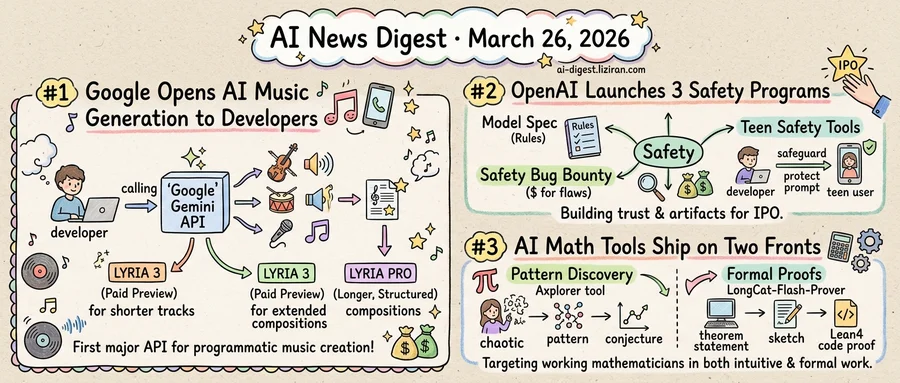

01 Google Opens AI Music Generation to Developers with Lyria 3 API

For two years, AI music generation lived behind closed doors. Companies showed demos at conferences, posted sample clips on social media, and invited select testers to try controlled interfaces. Nobody shipped an API.

Google changed that this week. The company released Lyria 3 through the Gemini API as a paid preview, making it the first major AI music model available as callable developer infrastructure. Any developer with API access can now generate music programmatically through the same integration layer they'd use for text or image generation.

Google didn't ship one model. It shipped two. Lyria 3 targets developers directly, accessible through the Gemini API and testable in Google AI Studio. The Pro version, announced the same day by DeepMind, handles longer compositions with what the company calls structural awareness. It can maintain musical coherence across extended tracks rather than generating loops or fragments.

Releasing both simultaneously signals that Google sees music generation as a product line, not a research curiosity. The paid preview pricing reinforces that read. Free research previews attract academics and hobbyists. Paid previews attract companies planning to build products on top of the technology. Google is pricing for the second group.

The practical shift is concrete. A developer building a video editing tool can now call an API to generate a soundtrack. Game studios can prototype adaptive music systems without licensing a catalog or hiring composers for placeholder tracks. Social media platforms can offer users AI-generated background music the way they already offer AI-generated stickers. Six months ago these were theoretical use cases. Now they're integration projects.

The music industry has watched this space with visible anxiety. Major labels have pushed back against AI-generated content that mimics specific artists, and licensing frameworks for AI training data remain unresolved. Google's decision to open a commercial API moves those conversations from hypothetical to operational.

DeepMind noted that Lyria is also expanding to more Google products and surfaces beyond the developer API. Internal deployment across Google's own properties runs in parallel with external access.

The text and image generation markets made this same transition in 2023. Music generation just caught up.

02 OpenAI Launches Three Safety Programs in One Day Amid IPO Preparations

OpenAI published a model behavior framework, a paid bug bounty for agent vulnerabilities, and teen safety tools for developers on the same day. All three arrived while the company prepares for its anticipated public offering.

The Model Spec sets public rules governing how OpenAI's models handle refusals, user autonomy, and accountability. It reads as an auditable constitution for model decision-making, specifying where safety overrides user intent and where it does not. Separately, the Safety Bug Bounty extends OpenAI's existing cybersecurity bounty into new territory. It pays outside researchers to probe agentic systems for prompt injection, data exfiltration, and abuse escalation. No major AI lab has formalized a paid program targeting agent-specific vulnerabilities at this scope. The third release, gpt-oss-safeguard, gives developers prompt-based policies to moderate age-specific risks when teens use apps built on OpenAI's APIs.

Each initiative fills a gap the company has been criticized for leaving open. The bug bounty puts money behind OpenAI's claim that it welcomes external scrutiny of its agent infrastructure. For regulators and researchers, the Model Spec makes behavioral guidelines externally auditable for the first time. And the teen toolkit converts corporate pledges on youth safety into code a developer can ship.

Regulatory pressure strengthens the case that the programs are substantive. Washington and Brussels are both advancing AI safety legislation. Investor due diligence on AI companies now covers safety governance as standard practice. The programs create artifacts for every relevant audience: a citable spec, a funded vulnerability pipeline, a deployable moderation layer.

The counterargument requires no speculation. Three staggered announcements produce three news cycles; three simultaneous ones produce a single, larger story. A company staging its market debut understands the difference. OpenAI's safety releases read well in an S-1 footnote. They would read just as well published weeks apart.

The programs are real. So is the timing.

03 AI Math Tools Ship on Two Fronts in a Single Week

Two independent teams released AI tools for working mathematicians in the same week, each targeting a different half of mathematical work. One builds a pattern-discovery engine for conjecture generation. The other open-sources a 560-billion-parameter model for writing formal proofs in Lean 4. Neither team coordinated with the other. Both decided the technology was ready at the same time.

Axiom Math, a Palo Alto startup, released Axplorer, a free tool designed to help mathematicians spot structural patterns that could point toward unsolved problems. The tool is a redesign of PatternBoost, which Axiom research scientist François Charton co-developed in 2024. MIT Technology Review reported on the launch, noting the tool's focus on mathematical discovery rather than computation. Axplorer targets the intuitive side of math: the moment a researcher notices a regularity and forms a conjecture worth pursuing.

Separately, a research group published LongCat-Flash-Prover, an open-source Mixture-of-Experts model built for formal reasoning in Lean 4. The system decomposes proof work into three stages: auto-formalization, sketching, and proving. It uses reinforcement learning with tool-integrated reasoning to generate proof trajectories. At 560 billion total parameters, it ranks among the largest open-source models aimed specifically at theorem proving.
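To make the three stages concrete, here is a toy illustration in Lean 4. It is not output from LongCat-Flash-Prover; the statement, the theorem names (`even_add_sketch`, `even_add`), and the way the stages are separated are assumptions chosen only to show what each stage might produce for a very simple claim.

```lean
-- Informal statement (the input): "the sum of two even natural numbers is even."

-- Stage 1, auto-formalization: the claim restated as a Lean 4 proposition.
-- Stage 2, sketching: the proof outline is fixed, with the hard step left as `sorry`.
theorem even_add_sketch (a b : Nat)
    (ha : ∃ m, a = 2 * m) (hb : ∃ n, b = 2 * n) :
    ∃ k, a + b = 2 * k := by
  cases ha with
  | intro m hm =>
    cases hb with
    | intro n hn =>
      -- The witness is chosen; the arithmetic is deferred.
      exact ⟨m + n, sorry⟩

-- Stage 3, proving: the remaining gap is closed.
theorem even_add (a b : Nat)
    (ha : ∃ m, a = 2 * m) (hb : ∃ n, b = 2 * n) :
    ∃ k, a + b = 2 * k := by
  cases ha with
  | intro m hm =>
    cases hb with
    | intro n hn =>
      -- Rewrite a and b, then distribute 2 * (m + n) so both sides match.
      exact ⟨m + n, by rw [hm, hn, Nat.mul_add]⟩
```

The sketch compiles with a warning because of `sorry`, which is what makes the intermediate stage checkable: the outline can be validated before any effort goes into the remaining gap.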

The timing is the signal. Discovery and verification require different cognitive modes and different software. Until recently, AI contributions to both lived in academic papers, not shipping products. Professional mathematicians treated computational aids with skepticism, preferring intuition over algorithmic suggestions. That two groups independently judged the market ready suggests both the underlying capability and the audience have shifted.

What changed: large language models now handle the symbolic manipulation and long-context reasoning that math demands. PatternBoost existed as a research prototype in 2024. Two years later, Axiom Math has hired one of its co-developers and rebuilt the tool for daily use. On the proof side, open-source models have closed enough of the gap with proprietary systems that a formal prover can target Lean 4 workflows, not just benchmarks.

Both tools are aimed at working mathematicians, not AI researchers. Axplorer is free. LongCat-Flash-Prover's weights are open-source.

MinerU-Diffusion Treats Document OCR as Inverse Rendering, Drops Autoregressive Decoding

Researchers reframe document parsing as a diffusion-based inverse rendering task instead of left-to-right token generation. The approach removes sequential decoding bottlenecks that compound errors across long documents with tables, formulas, and mixed layouts. (huggingface.co)

SpecEyes Cuts Agentic Vision Model Latency with Speculative Perception

A new framework breaks the sequential loop of perceive-reason-act in agentic multimodal LLMs by speculatively executing perception and planning steps in parallel. The method targets the "agentic depth" problem: cascaded tool calls that throttle real-world throughput in systems like o3 and Gemini Agentic Vision. (huggingface.co)

mSFT Algorithm Detects and Stops Per-Task Overfitting During Multi-Task Fine-Tuning

Standard multi-task SFT applies equal compute to every sub-dataset, letting fast-learning tasks overfit while slow ones stay underfitted. mSFT iteratively monitors each task's loss curve, removes overfitting datasets from the active mixture, and reallocates budget to lagging ones. (huggingface.co)
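The monitor, remove, and reallocate loop can be sketched in a few lines of Python. This is a minimal illustration, not the mSFT authors' implementation: the overfitting test (validation loss rising for `patience` consecutive evaluations), the equal redistribution of freed budget, and all function and task names are assumptions made for the example.

```python
from typing import Dict, List


def is_overfitting(val_losses: List[float], patience: int = 2) -> bool:
    """Assumed criterion: loss rose at each of the last `patience` evaluations."""
    if len(val_losses) < patience + 1:
        return False
    recent = val_losses[-(patience + 1):]
    return all(later > earlier for earlier, later in zip(recent, recent[1:]))


def reallocate(
    budget: Dict[str, float],
    history: Dict[str, List[float]],
    patience: int = 2,
) -> Dict[str, float]:
    """Zero out overfitting tasks and split their budget among the rest."""
    active = [task for task, amount in budget.items() if amount > 0]
    dropped = [task for task in active if is_overfitting(history[task], patience)]
    remaining = [task for task in active if task not in dropped]
    if not dropped or not remaining:
        # Nothing to move, or nobody left to receive it: leave the budget as is.
        return dict(budget)

    freed = sum(budget[task] for task in dropped)
    share = freed / len(remaining)  # assumption: equal split of freed budget
    new_budget = dict(budget)
    for task in dropped:
        new_budget[task] = 0.0
    for task in remaining:
        new_budget[task] += share
    return new_budget


# Hypothetical per-task budgets and validation-loss histories.
budget = {"math": 100.0, "code": 100.0, "chat": 100.0}
history = {
    "math": [1.0, 0.8, 0.9, 1.0],  # loss turned upward twice in a row
    "code": [2.0, 1.8, 1.6, 1.5],  # still improving
    "chat": [1.5, 1.4, 1.3, 1.2],  # still improving
}
new_budget = reallocate(budget, history)
print(new_budget)
```

In this example the hypothetical "math" task is removed from the mixture and its budget is shared between the two tasks that are still improving. A real schedule would rerun this check after every evaluation round.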

SIMART Converts Static 3D Meshes into Simulation-Ready Articulated Objects via MLLM

A single-stage multimodal LLM pipeline decomposes monolithic meshes into parts with joints, enabling physics simulation without the error-prone multi-module pipelines used today. The work targets the gap between abundant static 3D assets and the articulated objects that embodied AI and robotics actually need. (huggingface.co)

PEARL Introduces Personalized Streaming Video Understanding for Real-Time AI Assistants

Current personalization methods handle only static images or pre-recorded video. PEARL processes continuous video streams while recognizing and remembering new identities on the fly, bridging a gap between human-like streaming cognition and today's offline models. (huggingface.co)

AwaRes Framework Makes VLMs Fetch High-Resolution Crops Only Where Needed

AwaRes runs vision-language models on a low-resolution global view first, then retrieves high-resolution patches only for regions that matter, such as small text. This spatial-on-demand approach sidesteps the usual tradeoff between accuracy and compute cost in high-resolution image processing. (huggingface.co)

WildWorld Dataset Pairs Actions with Explicit State for Training Game World Models

Existing video world model datasets lack diverse action spaces and tie actions directly to pixels rather than underlying game state. WildWorld provides action-conditioned dynamics data with explicit state annotations, aimed at training generative models for action RPGs. (huggingface.co)

SpatialBoost Adds 3D Spatial Reasoning to Pre-Trained Vision Encoders via Language Guidance

Pre-trained image models fail to capture 3D spatial relationships because they train only on 2D data. SpatialBoost injects spatial awareness into frozen vision encoders using language-guided reasoning, improving downstream tasks that depend on object-to-background geometry. (huggingface.co)

DA-Flow Handles Blur, Noise, and Compression in Real-World Optical Flow Estimation

Optical flow models trained on clean data collapse on corrupted real-world video. DA-Flow repurposes intermediate features from image restoration diffusion models, which already encode corruption awareness, and adds temporal modeling for dense correspondence across degraded frames. (huggingface.co)

Survey Maps LLM Agent Workflows from Static Templates to Runtime-Optimized Graphs

A comprehensive survey organizes the growing literature on LLM agent workflow design around "agentic computation graphs." It classifies methods by when structure is decided (at design time, at compile time, or dynamically at runtime), covering tool use, retrieval, code execution, and verification. (huggingface.co)