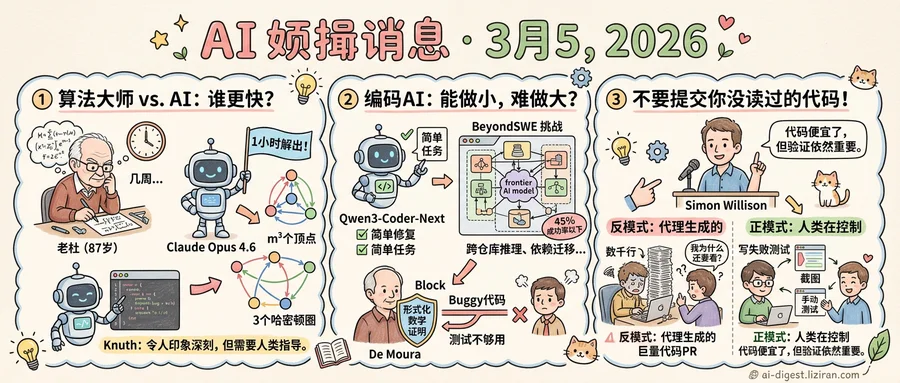

01 Donald Knuth Spent Weeks on an Open Problem. Claude Solved It in an Hour.

"Shock! Shock!" Donald Knuth wrote on February 28. The 88-year-old computer scientist, widely regarded as the father of algorithm analysis, had just learned that an open problem he'd been grinding on for weeks had been solved by an AI model released three weeks earlier.

The problem arose from Knuth's ongoing work on directed Hamiltonian cycles for a future volume of The Art of Computer Programming. It asks whether, for general m, the arcs of a specific digraph with m³ vertices can always be decomposed into three directed Hamiltonian cycles. Knuth had solved the case m=3 and posed the general question as an exercise. His colleague Filip Stappers found solutions computationally for m up to 16. Neither had a proof.
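The problem is easy to state in code, even though it was hard to solve. The sketch below assumes the standard formulation: vertices are triples (i, j, k) with each coordinate taken mod m, and every vertex has three outgoing arcs, one that increments each coordinate. In the spirit of the permutation-per-vertex reformulation Claude used, a candidate decomposition assigns each vertex a permutation telling cycles 0, 1, and 2 which arc to take. The function names and the `assign` interface are hypothetical; this is a checker for candidate answers, not Claude's or Knuth's construction.

```python
from itertools import product
from typing import Callable, Tuple

Vertex = Tuple[int, int, int]
# Hypothetical interface: assign(m, v) returns a permutation (d0, d1, d2) of
# (0, 1, 2); cycle c leaves vertex v along the arc that increments coordinate d[c].
Assignment = Callable[[int, Vertex], Tuple[int, int, int]]


def successor(m: int, v: Vertex, direction: int) -> Vertex:
    """Follow the arc from v that increments coordinate `direction` (mod m)."""
    w = list(v)
    w[direction] = (w[direction] + 1) % m
    return (w[0], w[1], w[2])


def is_hamiltonian_decomposition(m: int, assign: Assignment) -> bool:
    """True if the three cycles induced by `assign` are each Hamiltonian."""
    n = m ** 3
    # Every vertex must hand its three out-arcs to three different cycles.
    for v in product(range(m), repeat=3):
        if sorted(assign(m, v)) != [0, 1, 2]:
            return False
    for c in range(3):
        start: Vertex = (0, 0, 0)
        seen = set()
        v = start
        for _ in range(n):
            if v in seen:
                return False  # cycle closed before visiting all m**3 vertices
            seen.add(v)
            v = successor(m, v, assign(m, v)[c])
        if v != start:
            return False  # the walk did not close up into a cycle
    return True


# Always sending cycle c along coordinate c fails: cycle 0 closes after m steps.
print(is_hamiltonian_decomposition(3, lambda m, v: (0, 1, 2)))  # False
```

Because each vertex splits its three arcs among the three cycles, the arcs are partitioned automatically; the checker only has to confirm that each cycle visits all m³ vertices before returning to its start.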

Stappers fed the problem to Claude Opus 4.6 using Knuth's exact wording. He gave it one structural instruction: document every exploration before starting the next. What followed was 31 explorations over roughly one hour.

Claude's path was not a straight line. It reformulated the problem as assigning permutations at each vertex of a Cayley digraph. It tried linear functions, brute-force search, and what it called "serpentine patterns." It recognized the digraph's structure as layered by fiber coordinates. It ran simulated annealing. After exploration 25, it concluded: "SA can find solutions but cannot give a general construction. Need pure math."

Then it shifted strategy. Exploration 27 produced a near miss: three cycles, each individually Hamiltonian and generated by coordinate rotation, that conflicted on only 3(m−1) of the m³ vertices. When exploration 29 proved those conflicts were unresolvable, Claude went back to its earlier simulated-annealing output and noticed that the choice at each fiber depended on only a single coordinate. Exploration 31 produced a working construction for all odd m, which Stappers verified for every odd value from 3 to 101.

Knuth then did what Knuth does. He wrote a rigorous proof, generalized the result, and enumerated exactly 760 valid "Claude-like" decompositions that work for all odd m. Of the 11,502 Hamiltonian cycles that exist for m=3, only 996 generalize to all odd m. The proof fills five pages of characteristically precise mathematics. The even case remains open, though Knuth's postscript notes that GPT-5.3-Codex separately generated code producing decompositions for every even m ≥ 8, tested up to m=2000: a graph with 2000³, or 8 billion, vertices.

Knuth's paper reads less like a concession and more like a collaboration report. He calls the session "definitely an impressive success story" while noting the process required human coaching: Stappers had to restart Claude through errors and repeatedly remind it to document its progress. The construction Claude found was valid. Whether it was the "nicest" of the 760 possibilities, Knuth writes, he couldn't say.

02 Code Agents Ace the Easy Test. The Hard One Breaks Them.

Alibaba's Qwen team released Qwen3-Coder-Next this week, an 80-billion-parameter model for coding agents that activates only 3 billion of those parameters at inference time. The pitch: near-frontier coding ability at a fraction of the compute. The model ships with open weights and was trained with reinforcement learning on synthesized coding tasks paired with executable environments. On standard benchmarks like SWE-bench, it performs competitively against far larger models.
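A gap between total and active parameters is characteristic of sparse mixture-of-experts (MoE) models, where a router sends each token to a few experts and the rest stay idle. The article does not describe Qwen3-Coder-Next's internals, so treat this as a generic, hypothetical top-k routing sketch with toy scalar "experts", not the model's actual architecture. Router logits are passed in directly here; in a real layer they would be computed from the token's hidden state.

```python
import math
from typing import Callable, List, Sequence, Tuple


def softmax(xs: Sequence[float]) -> List[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_logits: Sequence[float], k: int) -> List[Tuple[int, float]]:
    """Pick the k highest-scoring experts and renormalize their weights."""
    top = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)[:k]
    weights = softmax([router_logits[i] for i in top])
    return list(zip(top, weights))


def moe_forward(
    x: float,
    experts: Sequence[Callable[[float], float]],
    router_logits: Sequence[float],
    k: int,
) -> float:
    """Weighted sum of the selected experts' outputs; unselected experts never run."""
    return sum(w * experts[i](x) for i, w in route_top_k(router_logits, k))


def active_fraction(shared_params: float, params_per_expert: float, num_experts: int, k: int) -> float:
    """Share of all parameters touched per token (toy accounting, equal-size experts)."""
    total = shared_params + num_experts * params_per_expert
    active = shared_params + k * params_per_expert
    return active / total


# Toy numbers: 8 equal experts, 2 active per token, no shared layers.
print(active_fraction(0, 10, 8, 2))  # 0.25
```

Only the k selected experts are ever called, which is why per-token compute tracks active parameters rather than total parameters.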

The same week, a separate research group published results that reframe what "competitively" means. BeyondSWE, a new benchmark of 500 real-world coding tasks, tests what SWE-bench does not: cross-repository reasoning, dependency migration, domain-specialized problem solving, and generating entire repositories from specifications. Where current agents solve over 80% of SWE-bench Verified tasks, frontier models plateau below 45% on BeyondSWE. No single model dominates across task types. Code-specialized models sometimes perform worse than general-purpose ones. Search augmentation, which was expected to help, yielded inconsistent gains and in some cases degraded performance.

The gap is structural, not incremental. SWE-bench asks agents to fix isolated bugs in a single repository with full context provided. BeyondSWE requires agents to reason across codebases, understand dependency chains, and produce working software from scratch. These are the tasks that occupy most of a senior engineer's week.

Leo de Moura, creator of the Lean theorem prover and chief architect of the Lean Focused Research Organization, argued in a recent blog post that the verification problem will only sharpen. Google and Microsoft report that 25-30% of their new code is AI-generated. De Moura cited research showing nearly half of AI-generated code fails basic security tests. His proposal: formal mathematical proof, verified by machine, must replace testing as the standard for AI-written software. His team demonstrated the approach by converting the zlib compression library to Lean and proving that decompressing a compressed buffer always returns the original data at every compression level.

Testing, de Moura wrote, provides confidence. Proof provides a guarantee. The distinction matters when no human reads the code before it ships.
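De Moura's distinction can be made concrete with the round-trip property his team proved. The sketch below checks that property the conventional way: it uses Python's standard-library `zlib` binding (a wrapper around the C library, not the Lean port) on a finite set of sample inputs at every compression level from 0 to 9. The input generator is a hypothetical helper. A passing run raises confidence for the inputs tried; only a proof covers every possible input.

```python
import random
import zlib
from typing import List


def roundtrip_holds(data: bytes) -> bool:
    """Check decompress(compress(data)) == data at every zlib level 0-9."""
    return all(
        zlib.decompress(zlib.compress(data, level)) == data
        for level in range(10)
    )


def sample_inputs(count: int, max_len: int, seed: int = 0) -> List[bytes]:
    """Hypothetical test-input generator: a few edge cases plus seeded random bytes."""
    rng = random.Random(seed)
    inputs = [b"", b"a" * 10_000, bytes(range(256))]
    for _ in range(count):
        length = rng.randrange(max_len)
        inputs.append(bytes(rng.randrange(256) for _ in range(length)))
    return inputs


if __name__ == "__main__":
    samples = sample_inputs(count=200, max_len=4096)
    # Testing: evidence for these samples only, not a guarantee for all inputs.
    print(all(roundtrip_holds(d) for d in samples))
```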

The industry is building agents that write code faster. It has not built the tools to know whether that code is correct.

03 Simon Willison Publishes an Agentic Engineering Field Guide, Starting With What Not to Do

The first rule of Simon Willison's new agentic engineering guide isn't a pattern. It's a warning: stop filing pull requests full of code you never read.

Willison, an independent developer and longtime voice in the Python community, published a structured guide this week covering how to work effectively with AI coding agents like Claude Code and OpenAI Codex. The guide organizes practices into principles, testing methods, and code comprehension techniques. But the section drawing the sharpest response is the anti-patterns list, which reads less like advice and more like a catalog of things already going wrong in production teams.

The core anti-pattern targets a specific behavior: submitting agent-generated pull requests containing hundreds or thousands of lines without verifying the code works. "They could have prompted an agent themselves," Willison writes of the reviewers left holding the bag. "What value are you even providing?" His fix is blunt. Small PRs, confirmed functionality, manual testing notes, screenshots. Evidence that a human was in the loop before the code reached a collaborator's review queue.

That particular failure mode reveals a problem no individual guide can solve. AI agents produce code fast enough that the bottleneck has shifted from writing to reviewing. When developers treat agent output as finished work, they transfer their verification burden to teammates who had no say in the matter. The trust contract that makes code review functional breaks down.

The guide's other sections follow a more constructive arc. Willison advocates red-green TDD cycles where developers write failing tests first, then let agents generate implementations. He recommends "linear walkthroughs" for reading agent-produced code systematically rather than skimming. Each technique assumes the same premise: agent output is a draft, not a deliverable.
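A minimal red-green cycle, sketched in Python with a hypothetical `slugify` function (not an example taken from Willison's guide): the human writes the test first and confirms it fails; only then is the agent asked for an implementation, and the same test decides whether that implementation is accepted.

```python
import re


# Red: written by the human first. Running it before slugify exists fails
# with a NameError, which confirms the test actually exercises something.
def test_slugify() -> None:
    assert slugify("Hello, World!") == "hello-world"
    assert slugify("  Agentic   Engineering ") == "agentic-engineering"
    assert slugify("") == ""


# Green: the implementation the agent is asked to produce. It is accepted
# because the pre-existing test passes, not because it looks plausible.
def slugify(text: str) -> str:
    """Lowercase, collapse runs of non-alphanumerics to '-', trim stray dashes."""
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")


test_slugify()  # passes silently once the implementation is in place
```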

What gives the guide outsized weight is context. No industry body has published standards for agentic software engineering. No certification exists. The practices forming now come from individual practitioners documenting what works after months of daily use. Willison's guide, which attracted 471 points and 274 comments on Hacker News, functions as field notes from early adoption, filling a vacuum that formal institutions haven't touched.

The guide's structure tells its own story. "Writing code is cheap now" opens the principles section. The anti-patterns section follows immediately, as if to say: cheap doesn't mean free.

Kuaishou Releases Kling-MotionControl for Character Animation

Kuaishou published a DiT-based framework that transfers motion from a driving video to a reference image, producing full-body character animation. The system handles face, hands, and body motion through separate control modules unified in a single pipeline. huggingface.co

Researchers Map the Design Space for Native Multimodal Pretraining

A team ran controlled from-scratch pretraining experiments isolating the factors that govern multimodal learning without prior language pretraining. The study uses the Transfusion framework (next-token prediction for language, diffusion for vision) to identify which design choices actually matter. huggingface.co
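For reference, the original Transfusion paper trains one model on a single combined objective: a language-modeling loss on text tokens plus a diffusion (DDPM) noise-prediction loss on image data, balanced by a coefficient λ. The brief does not say whether this study keeps that weighting, so read the form below as the baseline framework's objective, not the new paper's. Here y are text tokens, x_t is the noised image representation at timestep t, ε is the added noise, ε_θ is the model's noise prediction, and c is the conditioning context.

$$
\begin{aligned}
\mathcal{L}_{\text{Transfusion}} &= \mathcal{L}_{\text{LM}} + \lambda\,\mathcal{L}_{\text{DDPM}} \\
\mathcal{L}_{\text{LM}} &= \mathbb{E}_{y}\Big[-\textstyle\sum_i \log P_\theta(y_i \mid y_{<i})\Big] \\
\mathcal{L}_{\text{DDPM}} &= \mathbb{E}_{x_0,\,t,\,\epsilon}\Big[\big\lVert \epsilon - \epsilon_\theta(x_t, t, c) \big\rVert^2\Big]
\end{aligned}
$$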

PRISM Uses Process Reward Models to Fix Deep Reasoning Failures

A new inference framework addresses a core flaw in deep-thinking methods: longer deliberation often amplifies errors rather than correcting them. PRISM injects correctness signals during inference through process reward models, preventing wrong candidates from dominating the solution pool. huggingface.co
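The failure mode described here is easy to reproduce with plain majority voting. The sketch below is a generic illustration, not PRISM's algorithm: each hypothetical candidate is a list of reasoning steps plus a final answer, a stand-in `step_scorer` plays the role of a process reward model, and a candidate is scored by its weakest step (the min aggregation is an assumption). Three candidates share a flawed step and the same wrong answer, so they outvote the two correct ones until step-level scores prune them.

```python
from collections import Counter
from typing import Callable, List, Tuple

# Hypothetical candidate: (reasoning steps, final answer).
Candidate = Tuple[List[str], str]


def majority_vote(pool: List[Candidate]) -> str:
    return Counter(answer for _, answer in pool).most_common(1)[0][0]


def prm_score(steps: List[str], step_scorer: Callable[[str], float]) -> float:
    """Score a reasoning chain by its weakest step (assumed aggregation)."""
    return min((step_scorer(s) for s in steps), default=0.0)


def prm_filtered_vote(pool: List[Candidate], step_scorer: Callable[[str], float], keep: int) -> str:
    """Keep only the best-scoring chains before voting, so flawed ones cannot dominate."""
    ranked = sorted(pool, key=lambda c: prm_score(c[0], step_scorer), reverse=True)
    return majority_vote(ranked[:keep])


# Toy data: a stand-in step scorer that flags one bad step.
scores = {"setup": 0.9, "sound step": 0.9, "flawed step": 0.1}
pool: List[Candidate] = (
    [(["setup", "flawed step"], "41")] * 3 + [(["setup", "sound step"], "42")] * 2
)

print(majority_vote(pool))                                  # 41
print(prm_filtered_vote(pool, scores.__getitem__, keep=2))  # 42
```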

Utonia Trains One Point Cloud Encoder Across Five Domains

A self-supervised point transformer encoder learns shared representations across remote sensing, outdoor LiDAR, indoor RGB-D, CAD models, and RGB-lifted point clouds. Despite radically different sensing geometries and densities, the single model matches or beats domain-specific encoders. huggingface.co

UniG2U-Bench Tests Whether Generation Actually Helps Understanding

A new benchmark systematically evaluates when multimodal generation improves comprehension, covering 7 regimes and 30 subtasks. Its tasks require varying degrees of visual transformation, filling a gap left by benchmarks that test generation and understanding separately. huggingface.co

SteerEval Benchmarks LLM Controllability Across Language, Sentiment, and Personality

Researchers introduced a hierarchical evaluation framework testing how well LLMs follow behavioral specifications at three levels: what to express, how to express it, and how to instantiate it. The benchmark targets deployment in socially sensitive domains where unpredictable output poses direct risk. huggingface.co

Mix-GRM Separates Breadth and Depth in Chain-of-Thought Reward Models

Current generative reward models scale reasoning by making chains of thought longer, ignoring that breadth (multi-dimensional coverage) and depth (judgment soundness) serve different functions. Mix-GRM structures these two mechanisms separately, improving evaluation reliability without relying on raw length. huggingface.co

InSight Replaces Difficulty Heuristics with Information-Guided Data Selection for RL

Standard RL training for LLMs picks data by difficulty, favoring problems with mid-range success rates, which conflates hard problems with informative ones. InSight instead uses weighted mutual information to select training samples, accounting for the epistemic uncertainty that comes from limited evidence. huggingface.co
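Why a success rate alone can mislead: a problem solved once in two attempts and one solved 50 times in 100 get the same mid-range difficulty score, yet the first estimate rests on far less evidence. The sketch below contrasts one such heuristic, p(1 − p), with the spread of a Beta posterior over the true success rate (a uniform prior is assumed). It only illustrates the epistemic-uncertainty point; it is not InSight's weighted mutual-information criterion.

```python
import math


def difficulty_score(successes: int, trials: int) -> float:
    """One mid-range heuristic: p(1 - p) peaks at a 50% empirical success rate."""
    p = successes / trials
    return p * (1 - p)


def posterior_std(successes: int, trials: int, prior: float = 1.0) -> float:
    """Std. dev. of the Beta(successes + prior, failures + prior) posterior."""
    a = successes + prior
    b = trials - successes + prior
    total = a + b
    return math.sqrt(a * b / (total ** 2 * (total + 1)))


for s, n in [(1, 2), (50, 100)]:
    print(s, n, difficulty_score(s, n), round(posterior_std(s, n), 3))
# Same difficulty score (0.25); posterior std about 0.224 vs 0.049.
```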

Kiwi-Edit Adds Reference Image Guidance to Video Editing

A new pipeline generates high-quality paired training data to enable reference-guided video editing, bypassing the bottleneck of scarce training pairs. The system accepts both text instructions and reference images, giving editors precise visual control that language alone cannot specify. huggingface.co

Adaptive Test-Time Scaling Tackles Image Editing Efficiency

Image Chain-of-Thought methods improve generation quality by extending inference time but waste compute on editing tasks where the solution space is already constrained. This work introduces adaptive sampling budgets and early-stage verification tuned specifically for instruction-based image editing. huggingface.co
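A generic sketch of the two ideas named here, an adaptive sampling budget and early verification, under stated assumptions: `generate` and `verify` are hypothetical stand-ins for an image-editing model and a verifier that scores how well an edit follows the instruction. Sampling stops as soon as one candidate clears the acceptance threshold, so easy, well-constrained edits consume less compute than hard ones. This is not the paper's algorithm.

```python
from typing import Callable, Optional, Tuple


def adaptive_sample(
    generate: Callable[[int], str],
    verify: Callable[[str], float],
    max_budget: int,
    accept_threshold: float,
) -> Tuple[Optional[str], int]:
    """Sample until a candidate passes verification or the budget runs out.

    Returns (chosen candidate, number of samples actually used).
    """
    best: Optional[str] = None
    best_score = float("-inf")
    for used in range(1, max_budget + 1):
        candidate = generate(used - 1)
        score = verify(candidate)
        if score > best_score:
            best, best_score = candidate, score
        if score >= accept_threshold:
            return candidate, used  # early stop: good enough, skip remaining samples
    return best, max_budget  # budget exhausted: fall back to the best candidate seen


# Toy stand-ins: the second candidate already satisfies the verifier.
toy_scores = {"edit-0": 0.4, "edit-1": 0.92, "edit-2": 0.97}
result = adaptive_sample(lambda i: f"edit-{i}", toy_scores.__getitem__, max_budget=3, accept_threshold=0.9)
print(result)  # ('edit-1', 2)
```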