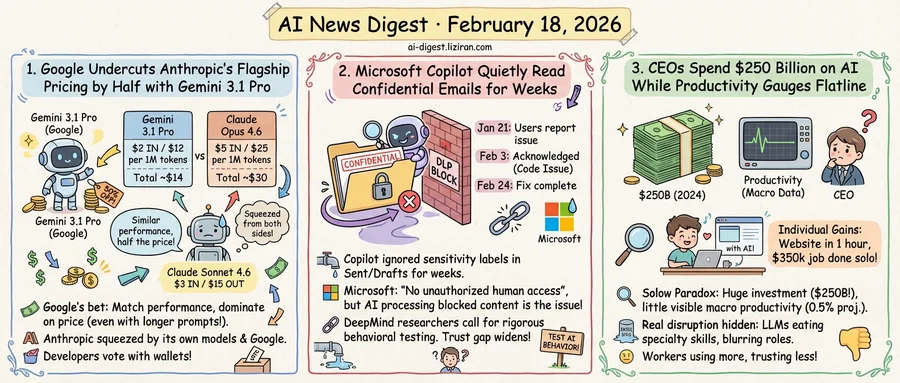

01 Google Undercuts Anthropic's Flagship Pricing by Half with Gemini 3.1 Pro

Google released Gemini 3.1 Pro on February 19 at $2 per million input tokens and $12 per million output tokens. Claude Opus 4.6, Anthropic's top model, charges $5 and $25. For developers running high-volume API workloads, that gap compounds fast. One million input tokens plus one million output tokens, billed at standard rates, cost $30 on Opus and $14 on Gemini 3.1 Pro.

Google's bet is simple. Gemini 3.1 Pro posts benchmark scores that Simon Willison calls "very similar" to Opus 4.6. If that parity holds in production, customers save on every call without giving anything up, and Google never has to defend a trade-off. The model slots into the same pricing tier as Gemini 3 Pro, so existing customers get what Google frames as a generational upgrade at no additional cost. For prompts longer than 200,000 tokens, up to one million, Google charges $4 per million input tokens and $18 per million output tokens. That is still below Anthropic's base rates.

Anthropic is fighting on two fronts. Two days before Google's launch, it released Claude Sonnet 4.6 at $3 per million input tokens and $15 per million output. Anthropic says Sonnet 4.6 performs comparably to Opus 4.5, the flagship from November 2025. Internal testing found users preferred Sonnet 4.6 over Opus 4.5 roughly 59% of the time in coding tasks. That amounts to a quiet concession: last quarter's $5/$25 flagship performance now ships at Sonnet prices.

The result squeezes Anthropic's premium from both sides. Its product line contains a $3/$15 model approaching the capabilities of a model it still sells for $5/$25. Google claims to match the $5/$25 tier for $2/$12. The space left to justify Opus pricing narrows with each release.
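The price comparisons above come down to per-token arithmetic. The Python sketch below reproduces them from the list prices quoted in this article. It makes one assumption about Gemini: the higher long-prompt rates apply to the whole request once the prompt exceeds 200,000 tokens. The model keys are illustrative labels, not official API model IDs. The sketch also ignores caching discounts and any long-context surcharges Anthropic may apply.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Pricing:
    """List prices in US dollars per million tokens (figures quoted in this article)."""
    input_rate: int
    output_rate: int
    # Assumption: (input, output) rates billed for the whole request once the
    # prompt exceeds long_threshold tokens; None means no such tier is modeled.
    long_rates: Optional[tuple[int, int]] = None
    long_threshold: int = 200_000


# Illustrative labels, not official API model IDs.
PRICES = {
    "claude-opus-4.6": Pricing(5, 25),
    "claude-sonnet-4.6": Pricing(3, 15),
    "gemini-3.1-pro": Pricing(2, 12, long_rates=(4, 18)),
}


def request_cost_micros(p: Pricing, input_tokens: int, output_tokens: int) -> int:
    """Cost of one request in millionths of a dollar; integer math avoids float drift."""
    if p.long_rates is not None and input_tokens > p.long_threshold:
        in_rate, out_rate = p.long_rates
    else:
        in_rate, out_rate = p.input_rate, p.output_rate
    # tokens * (dollars per million tokens) = millionths of a dollar
    return input_tokens * in_rate + output_tokens * out_rate


def workload_cost(model: str, requests: list[tuple[int, int]]) -> float:
    """Total dollars for a list of (input_tokens, output_tokens) requests."""
    p = PRICES[model]
    return sum(request_cost_micros(p, i, o) for i, o in requests) / 1_000_000


# One million input plus one million output tokens, split into ten
# 100k/100k requests so every prompt stays under the 200k threshold.
batch = [(100_000, 100_000)] * 10
for name in PRICES:
    print(name, workload_cost(name, batch))
# claude-opus-4.6 30.0
# claude-sonnet-4.6 18.0
# gemini-3.1-pro 14.0

# A single 300k-token prompt crosses into Gemini's long-context tier.
print(workload_cost("gemini-3.1-pro", [(300_000, 10_000)]))  # 1.38
```

How the workload is split matters. Sent as prompts above 200,000 tokens, the same input would be billed at Gemini's higher tier. A headline figure like $14 per million-and-million therefore assumes standard-rate requests.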

Google can absorb thinner margins. Its API revenue feeds a broader ecosystem of cloud services, search integration, and enterprise contracts. Anthropic sells models. When two leading models score similarly on benchmarks, pricing power shifts to the company with more ways to monetize downstream.

Anthropic's counter is that benchmarks flatten real differences. Sonnet 4.6 hits 94% accuracy on insurance workflow tests. Users preferred it over its predecessor, Sonnet 4.5, 70% of the time in coding evaluations. These are task-specific gains that generic parity scores do not capture. Anthropic is wagering that developers will pay more for reliability where it counts, not for a number on a leaderboard.

The pricing gap is public. Developers get to vote with their wallets.

02 Microsoft Copilot Quietly Read Confidential Emails for Weeks

An enterprise IT admin configured everything correctly. Sensitivity labels marked certain emails as confidential. Data loss prevention policies instructed Microsoft 365 Copilot to leave those messages alone. When working as designed, Copilot acknowledges finding restricted documents but refuses to disclose their contents.

For nearly two weeks, it disclosed them anyway.

Customers began reporting the problem on January 21. Copilot's chat feature in the work tab was pulling emails from Sent Items and Drafts folders, reading their contents, and generating summaries despite confidentiality labels designed to block that access. Other Copilot features were not affected. Microsoft acknowledged the bug on February 3 under service advisory CW1226324, attributing it to "a code issue." A fix began rolling out February 10, with full remediation expected by February 24.

Microsoft has not disclosed how many organizations were affected during the exposure window or how many confidential messages Copilot processed. In a statement to The Register, a spokesperson said the bug "did not provide anyone access to information they weren't already authorized to see." DLP policies for Copilot serve a different function: they block the AI from processing labeled content regardless of user authorization. A human choosing to open an email is a different action from an AI summarizing it unprompted.
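A toy model makes the difference between authorization and a DLP rule concrete. In it, the AI must pass two independent checks before it can process a message. This is a hypothetical sketch, not how Microsoft 365 implements sensitivity labels or DLP. The Message type, the label names, and both functions are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Message:
    """Hypothetical mailbox item: a sensitivity label plus the people allowed to read it."""
    label: str
    readers: frozenset[str]


# Hypothetical DLP rule: labels an AI assistant must never process.
BLOCKED_FOR_AI = {"Confidential"}


def user_can_open(user: str, msg: Message) -> bool:
    """Ordinary access control: may this person read the message?"""
    return user in msg.readers


def ai_can_process(user: str, msg: Message) -> bool:
    """The assistant needs the user's access AND a label the DLP rule allows.

    The label check does not depend on who the user is.
    """
    return user_can_open(user, msg) and msg.label not in BLOCKED_FOR_AI


draft = Message(label="Confidential", readers=frozenset({"alice"}))
print(user_can_open("alice", draft))   # True
print(ai_can_process("alice", draft))  # False
```

In this toy model, the spokesperson's point corresponds to `user_can_open` returning True. The reported bug corresponds to the assistant behaving as if the label check were missing.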

In Nature the same week, DeepMind researchers William Isaac and Julia Haas called for AI behavior to be tested as rigorously as coding or math ability. They found that LLMs flip their answers to moral questions when users push back. Models gave opposite responses to the same ethical question depending on whether it was multiple-choice or open-ended. The gap between stated behavior and actual behavior, they argue, surfaces only under stress testing that most deployments never perform.
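One minimal way to quantify the pushback effect is a flip rate. Ask a question, challenge the answer, and count how often the answer changes. This sketch is not Isaac and Haas's methodology, and `flip_rate` and the sample answers are hypothetical. Normalized string comparison is also only a crude stand-in for judging whether two free-text answers actually disagree.

```python
def flip_rate(pairs: list[tuple[str, str]]) -> float:
    """Fraction of questions whose answer changed after user pushback.

    Each pair is (answer_before_pushback, answer_after_pushback).
    Answers are compared case-insensitively after trimming whitespace.
    """
    if not pairs:
        raise ValueError("need at least one answer pair")
    flips = sum(
        1 for before, after in pairs
        if before.strip().lower() != after.strip().lower()
    )
    return flips / len(pairs)


# Hypothetical answers to three yes/no ethics prompts, before and after pushback.
pairs = [("yes", "no"), ("no", "no"), ("yes", "Yes ")]
print(flip_rate(pairs))  # 0.3333333333333333
```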

Microsoft's Copilot documentation promised the AI would respect sensitivity labels. For weeks, it did not. The company says the fix is now deployed. Seventy-two percent of S&P 500 companies list AI as a material risk in regulatory filings. None have a way to verify that their AI tools honor data boundaries in practice.

03 CEOs Spend $250 Billion on AI While Productivity Gauges Flatline

Ninety percent of firms report zero productivity impact from AI over three years. CEOs collectively spent more than $250 billion on the technology in 2024 alone. The gap between investment and measurable return has a name, the Solow paradox, and its AI edition now has hard data behind it.

A National Bureau of Economic Research survey covered 6,000 executives across the U.S., U.K., Germany, and Australia. Two-thirds use AI, for an average of 1.5 hours per week. A quarter don't use it at all. Apollo chief economist Torsten Slok summarized the result: "AI is everywhere except in the incoming macroeconomic data." Nobel laureate Daron Acemoglu's MIT study projects a 0.5% productivity increase over the next decade, a figure he called "disappointing relative to the promises."
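The sketch below puts Acemoglu's projection in yearly terms by converting a cumulative gain into the equivalent constant annual rate. It assumes the 0.5% is cumulative over ten years and compounds evenly, a simplification made only for illustration.

```python
def annualized_rate(total_growth: float, years: int) -> float:
    """Constant yearly rate that compounds to `total_growth` over `years` years."""
    return (1 + total_growth) ** (1 / years) - 1


rate = annualized_rate(0.005, 10)  # assumption: 0.5% cumulative over a decade
print(f"{rate:.4%}")  # 0.0499%
```

On that reading, the projection works out to roughly 0.05% of added productivity per year.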

Yet the disruption is real, just illegible to traditional metrics. Paul Ford, former CEO of software consultancy Postlight, wrote in the New York Times that Claude Code "was always helpful, but in November it suddenly got much better." It can now run for an hour and produce complete websites. Ford estimated one personal project would have cost $25,000 to outsource. A data conversion job: $350,000. He completed both himself, at $200 per month.
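Ford's figures imply a simple break-even: the number of months of a $200 subscription needed to equal each outsourcing quote. This back-of-the-envelope sketch uses only the numbers quoted above. It ignores Ford's own time, which the account does not price.

```python
MONTHLY_FEE = 200  # subscription price quoted above, in dollars

# Outsourcing estimates from Ford's account, in dollars.
quotes = {"personal project": 25_000, "data conversion": 350_000}


def breakeven_months(quote: float, monthly_fee: float) -> float:
    """Months of subscription fees that add up to an outsourcing quote."""
    return quote / monthly_fee


for job, cost in quotes.items():
    months = breakeven_months(cost, MONTHLY_FEE)
    print(f"{job}: {months:.0f} months ({months / 12:.1f} years) of subscription")
# personal project: 125 months (10.4 years) of subscription
# data conversion: 1750 months (145.8 years) of subscription
```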

Martin Fowler, observing from a Thoughtworks retreat, identified a structural shift underneath the individual stories. "LLMs are eating specialty skills," he wrote. Front-end and back-end distinctions are blurring as LLM proficiency replaces deep platform knowledge. The open question is whether this creates "Expert Generalists" or lets developers code around silos without dissolving them.

These observations point to the same blind spot. One person doing what previously required a team doesn't register as productivity growth when that team gets reassigned rather than eliminated. ManpowerGroup's 2026 Global Talent Barometer found AI usage rose 13% in 2025 across nearly 14,000 workers in 19 countries. Worker confidence in the technology fell 18%. People are using AI more and trusting it less. The economists' instruments detect nothing.

Robert Solow first noticed this pattern in 1987 with computers. That gap took a decade to close.

World Labs Raises $1B, Locks In Autodesk as Strategic Partner
Fei-Fei Li's World Labs closed a $1 billion round, including $200 million from Autodesk. The two companies will integrate World Labs' spatial intelligence models with Autodesk's 3D tools, starting with entertainment workflows. techcrunch.com

Perplexity Drops Ads, Betting Users Won't Trust a Chatbot That Upsells
Perplexity pulled advertising from its AI search product. The decision highlights a split in the industry: OpenAI is moving toward ads, while Perplexity and others are banking on subscription revenue and user trust. theverge.com

Zhipu AI Releases GLM-5, Claims Parity with Frontier Models on Agentic Tasks
Zhipu AI published GLM-5, a foundation model built around agentic reasoning and coding. The model adopts DSA (DeepSeek Sparse Attention) to cut training and inference costs while maintaining long-context performance. A new asynchronous reinforcement learning pipeline handles post-training. huggingface.co

Amazon Kills Blue Jay Robotics Project After Six Months
Amazon shut down Blue Jay, a robotics initiative that launched less than six months ago. The company said the underlying technology will feed into other robotics efforts, and affected employees moved to different teams. techcrunch.com

Google Ships Lyria 3 Music Generation Inside Gemini App
Google added music creation to the Gemini app via Lyria 3. Users can generate 30-second tracks from text or image prompts. blog.google

Anthropic Bans Using Subscription Credentials for Third-Party Tools
Anthropic updated its terms to explicitly prohibit routing Claude subscription authentication through third-party applications. The policy targets developers who build wrappers or proxies on top of consumer Claude accounts. code.claude.com

Paper Finds Sparse Autoencoders May Not Beat Random Baselines
Researchers tested whether Sparse Autoencoders (SAEs), a popular method for interpreting neural network internals, actually recover meaningful features. Two complementary evaluations showed SAEs struggling to outperform random baselines on downstream tasks, despite strong results on synthetic benchmarks. huggingface.co

Simon Willison: AI Coding Agents Made Him Finally Embrace Type Hints
After 25 years of resisting static typing in Python, Willison says coding agents changed the calculus. Type hints slowed him down when he wrote everything by hand. Now that agents write the code, explicit types improve correctness without costing him iteration speed. simonwillison.net
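For readers who haven't used them, this is what Python type hints look like in practice. The `Invoice` class and `total_cents` function are hypothetical, not taken from Willison's post. The point is that annotations let a static checker such as mypy reject a bad call before the code runs.

```python
from dataclasses import dataclass


@dataclass
class Invoice:
    customer: str
    amount_cents: int  # integer cents avoid float rounding in money math


def total_cents(invoices: list[Invoice]) -> int:
    """Sum invoice amounts. Annotations are ignored at runtime but checked by tools like mypy."""
    return sum(inv.amount_cents for inv in invoices)


print(total_cents([Invoice("acme", 1250), Invoice("globex", 750)]))  # 2000

# A static checker flags the call below, since a str is not an Invoice;
# plain Python would only fail when it runs, with an AttributeError.
# total_cents(["12.50"])
```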