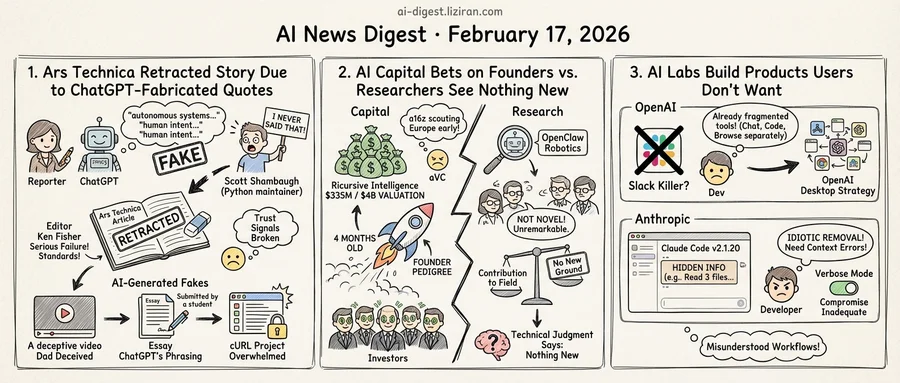

01 Ars Technica Retracted a Story About AI After Its Own Reporter Fabricated Quotes With ChatGPT

Scott Shambaugh spent his Friday reading quotes attributed to him that he never said. Shambaugh, a volunteer maintainer of the Python plotting library matplotlib, had recently rejected a code contribution from an AI agent. That rejection became the subject of an Ars Technica piece by Benj Edwards and Kyle Orland. The problem: Edwards had used ChatGPT to paraphrase Shambaugh's blog post, then inserted the AI-generated text as direct quotes.

One fabricated passage had Shambaugh musing about "autonomous systems" and "the boundary between human intent and machine output." He wrote no such thing. Shambaugh updated his blog to say he had never contacted Ars Technica and never made those statements.

The piece covered an AI agent that published a critical response after a human rejected its code. A story about AI behaving badly, in other words, contained AI-fabricated material itself. Ars Technica pulled it Friday evening. Editor-in-chief Ken Fisher published a note Saturday calling the incident "a serious failure of our standards" and stating that company policy requires clear labeling of any AI-generated material. Edwards acknowledged responsibility on Bluesky.

This landed in a media environment already buckling under AI-generated content. Ars Technica has covered technology for nearly three decades and employs experienced reporters. It is not a content farm. Yet its editorial process still couldn't catch a reporter passing off ChatGPT output as a source's words. AI-generated text slipped through professional gates built for a pre-AI world.

That failure resonated beyond journalism. Anthony, a programmer who writes about his relationship with AI tools, cataloged the accumulating damage in a blog post titled "I guess I kinda get why people hate AI," which drew attention on Hacker News. His father was deceived by an AI-generated fake video. A friend who TAs at a university described students submitting ChatGPT essays with the model's signature phrasing still intact. The cURL project shut down its bug bounty program after AI-generated fake vulnerability reports overwhelmed it.

Anthony uses AI daily for coding. He plans to use it at his next job. But he argued that traditional trust signals, like production quality in video or coherent prose in text, no longer indicate human origin. The same tools that help him write Haskell let anyone generate plausible content at zero effort and zero accountability.

Fisher's editor's note promised accountability. Shambaugh got an apology. The quotes are gone. But the editorial process that missed them looks much like the one running at most other newsrooms.

02 AI Capital Bets on Famous Founders While Researchers See Nothing New

Three signals from the same week. Ricursive Intelligence closed $335 million at a $4 billion valuation, four months after its founding. Andreessen Horowitz announced it is scouting European AI startups to find deals before local funds can. And several AI researchers told TechCrunch that OpenClaw, a high-profile open-source AI agent, breaks no new scientific ground. "From an AI research perspective, this is nothing novel," one said.

Capital and technical judgment are moving in opposite directions. Investors price AI startups on founder pedigree and market narrative. Researchers judge work by its contribution to the field. The two systems produce very different answers.

Ricursive's raise illustrates the first system. The company has disclosed little about its product or technical approach. What attracted investors: co-founders whose prior work at major AI labs made them recruitment targets across the industry. VCs lined up on the strength of a roster, not a demo. Four months from incorporation to a valuation exceeding that of most public SaaS companies is a pace with few precedents, even in AI.

The a16z move adds a geographic layer. The firm told TechCrunch it wants to "spot companies as early as local funds might." In practice, the U.S. AI deal pipeline has grown so competitive that a firm managing tens of billions now sources startups globally. When capital supply outstrips the supply of credible AI bets, geography stops being a filter.

The technical side runs cooler. OpenClaw drew wide public attention as an open-source AI agent. Researchers who reviewed it told TechCrunch the underlying methods aren't new. One called it unremarkable from a research standpoint. In their telling, the gap between public excitement and expert evaluation was wide.

These aren't reports about separate industries; they describe the same one. The investment thesis rewards conviction and speed; peer review demands novelty and proof. Both frameworks are internally coherent. They just reach different verdicts about the same field.

03 AI Labs Keep Building Products Their Users Don't Want

A prominent commentator wants OpenAI to replace Slack. Anthropic tried to clean up Claude Code's interface. Both ideas ran into the same thing: sharp developer pushback.

The OpenAI pitch came from Swyx at Latent Space, who argued that Sam Altman should build a Slack competitor. The logic: Slack is expensive, its AI features are weak, and OpenAI already hired former Slack CEO Denise Dresser in December 2025. A chat platform would give OpenAI durable enterprise entrenchment and a multiplayer surface for AI agents. The proposal drew 324 comments on Hacker News, where the community split sharply. Supporters saw a natural extension of OpenAI's chat capabilities into collaboration software. The other side pointed to a company that can't unify its own desktop apps (separate tools for chat, browsing, and coding) presuming to build connective tissue for everyone else.

Anthropic's misstep was smaller in scope but drew a sharper reaction. In Claude Code version 2.1.20, the company collapsed file operation displays into summary lines like "Read 3 files (ctrl+o to expand)." The intent, according to Claude Code creator Boris Cherny, was reducing "noise" so users could focus on diffs and outputs. Developers disagreed. One called it an "idiotic removal of valuable information." Others pointed out that visible file names let them catch context errors early, saving thousands of tokens by interrupting wrong approaches before they snowballed.

Cherny told users to "try it for a few days" and said internal developers appreciated the quieter interface. He eventually repurposed verbose mode to show file paths, a compromise many found inadequate, since seeing file names now meant switching on an all-or-nothing toggle.

The two episodes sit at different scales: a strategic land grab versus a settings toggle. But the developer complaint is the same: you built the frontier models, and you still don't understand how we work. OpenAI's fragmented desktop strategy undercuts the case for letting it unify someone else's workplace. The "noise" Anthropic removed was signal its users relied on.

Alibaba Releases Qwen3.5 With Native Multimodal Agent Support

Alibaba's Qwen team launched Qwen3.5, a model built for natively multimodal agent workflows. The release targets growing demand for models that handle multiple input types while executing multi-step tasks autonomously. qwen.ai

NVIDIA Claims Blackwell Ultra Delivers 50x Performance Gain for Agentic AI

NVIDIA cited SemiAnalysis InferenceX benchmarks showing Blackwell Ultra achieves up to 50x better performance and 35x lower costs on agentic AI workloads. Inference providers Baseten, DeepInfra, Fireworks AI, and Together AI already run the current Blackwell platform, which NVIDIA says has cut cost per token by up to 10x. blogs.nvidia.com

Western Digital Says Hard Drives Are Sold Out for 2026

Western Digital reported its HDD inventory is fully allocated for the year. AI data center buildouts are driving storage demand past manufacturing capacity. mashable.com

Study Finds AI Agents' Self-Generated Skills Provide No Benefit

An arXiv paper tested whether skills that AI agents generate for themselves actually improve performance. The researchers concluded these self-generated skills are effectively useless. arxiv.org

MedXIAOHE Medical Vision-Language Model Beats Closed-Source Systems

Researchers released MedXIAOHE, a medical vision-language model that outperforms leading closed-source multimodal systems across multiple clinical benchmarks. The model uses entity-aware continual pretraining to organize heterogeneous medical data and broaden knowledge coverage. huggingface.co

Flapping Airplanes Pursues Alternative Approaches to AI Architecture

AI startup Flapping Airplanes told TechCrunch it is exploring "radically different" methods for building AI systems outside the dominant scaling paradigm. The company described its work as investigating a different set of tradeoffs from mainstream labs. techcrunch.com

Favia Agent Automates CVE-to-Commit Matching in Large Repositories

Researchers introduced Favia, an AI agent that links disclosed CVEs to their fixing commits across repositories with millions of commits. Existing methods, both traditional ML and LLM-based, struggle with the precision-recall tradeoff when the vast majority of commits are unrelated to security. huggingface.co

Essay Argues AI Optimism Tracks With Economic Privilege

Developer Josh Collinsworth published a post examining how enthusiasm for AI tools correlates with socioeconomic class. The essay argues AI's benefits and harms distribute unevenly across income levels. joshcollinsworth.com

Simon Willison Describes Claude Code Desktop Workflow

Simon Willison detailed his setup using Anthropic's cloud-based Claude Code exclusively through native Mac and iPhone apps. He prefers the container-based environment over local execution, citing reduced risk to his own machines. simonwillison.net

Paper Proposes Feature Activation Coverage to Measure Training Data Diversity

Researchers introduced Feature Activation Coverage (FAC), which quantifies post-training data diversity in an interpretable feature space. Standard text-based diversity metrics capture linguistic variation but are weak predictors of downstream task performance. huggingface.co