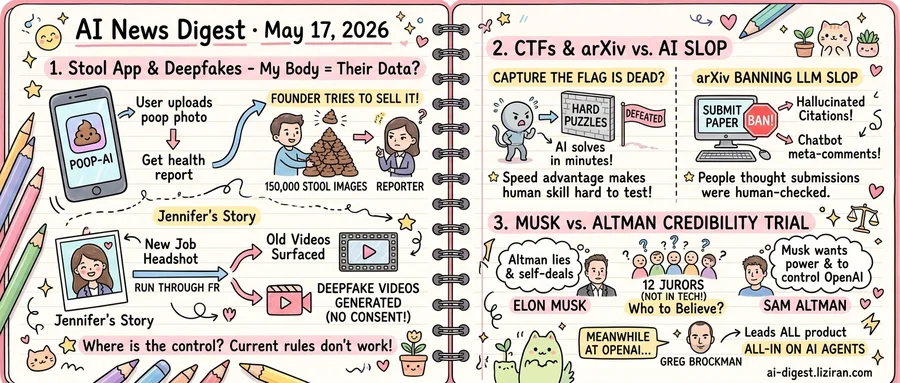

01 The founder of a stool-analysis app tried to sell 150,000 user photos to a reporter

"I hoarded a large database of something valuable, just not what you expect… 150k stools images," the founder of an AI poop-analysis app told a 404 Media reporter who had posed as a buyer. The pitch came unprompted. The founder offered to package and sell images that paying users had uploaded to have their bowel movements analyzed by a machine learning model, according to 404 Media's report.

The app markets itself as a health tool. Users photograph their stool, and the model returns notes on shape, color, and possible digestive issues. The transaction users thought they were making was diagnostic. The transaction the founder described to the reporter was wholesale.

Nothing in the exchange suggested the founder considered this a breach. He framed the 150,000 images as inventory. The reporter did not buy.

The same week, MIT Technology Review published the account of a woman identified as Jennifer, who in 2023 ran a new professional headshot through a facial recognition service before starting a nonprofit research job. She wanted to know whether the tool would surface porn videos she had filmed more than a decade earlier, when she was in her early 20s. It did. It also surfaced deepfake videos generated from her face that she had never filmed and never consented to.

The two cases approach the same door from opposite directions. One set of users handed over images of their bodies in exchange for a service, only to have the operator treat the archive as a sellable asset. Jennifer never handed over anything, and discovered her face had been scraped and recombined into sexual content circulating on sites she had no relationship with.

Existing remedies map poorly onto either path. Takedown systems built around copyright assume the person depicted owns or has licensed the original footage. Jennifer told MIT Technology Review she has spent years filing requests against deepfakes that legally have no clear copyright holder, because no one filmed the underlying act. Consent frameworks built around upload, meanwhile, assume the operator who collected the data will not turn around and sell it. The 404 Media exchange suggests that assumption is doing more work than it can carry.

No one in either story has a clear lever to pull. The stool-app users do not know their images were offered for sale. Jennifer knows exactly what is out there, and the tools she has are slower than the generators producing new clips.

02 The CTF scene declared itself dead the same week arXiv started banning AI slop

A blog post titled "The CTF scene is dead" circulated this week. The author, a longtime player, argues that frontier AI models have broken Capture The Flag competitions as a meaningful test of security skill. Days later, arXiv announced it would start banning researchers who upload preprints with "incontrovertible evidence that the authors did not check the results of LLM generation." Examples include hallucinated citations and chatbot meta-comments left in the text.

The two announcements come from opposite ends of technical culture. CTFs are timed solve-the-puzzle competitions used to rank offensive security talent. arXiv is the preprint server that physics, math, and CS researchers use to share work before peer review. The two have little in common operationally. Both were built on the same assumption: that the people on the other end were human, working under human constraints of time and effort.

That assumption is what made the gatekeeping cheap. CTF organizers trusted that a hard challenge would take hours, not seconds. arXiv assumed submitting a paper carried enough reputational cost that authors would not flood the system with low-effort drafts. Models that can solve binary exploitation problems in minutes, or generate plausible 30-page manuscripts in an afternoon, dissolve both constraints at once.

The responses diverge. arXiv is adding a rule and a penalty: papers showing clear LLM artifacts get rejected, and repeat offenders get banned. The CTF post argues no such fix exists for competitive solving. The format rewards speed, and there is no way to prove a human did the work without watching them do it. The author's conclusion is that the scene's value as a credential is over, and what remains is a training exercise.

Neither institution has said it will redesign from scratch. arXiv's policy still relies on catching obvious tells. CTF rule sets continue to use solve times.

03 A jury with no stake in AI is about to rule on Musk and Altman's credibility

For three weeks in a San Francisco courtroom, lawyers have argued one question: whether Sam Altman or Elon Musk is more credible. The jury hearing them does not work in AI. According to MIT Technology Review, Altman faced repeated questioning this week over alleged lying and self-dealing involving companies that do business with OpenAI. He pushed back by casting Musk as a power-seeker who tried to take control of OpenAI's development.

The setting breaks from a years-long pattern. Disputes over Altman's conduct have until now played out in tech press, board statements, and X threads: the November 2023 board firing, the for-profit conversion, equity ownership questions. The Musk lawsuit moves them in front of jurors with no industry stake and no prior coverage to defend.

Both sides leaned into character. Musk's lawyers built their case on Altman's prior business dealings. OpenAI's lawyers built theirs on Musk's 2018 bid to fold OpenAI into Tesla and his pattern of public attacks since. Jurors are being asked to weigh one founder's allegations against another's, with a verdict turning on which version of events they find more honest.

Inside the company, the week brought a separate signal. OpenAI announced on Friday that president Greg Brockman would take over as the official lead of all product, The Verge reported. Brockman's memo framed the reorganization around going "all-in on AI agents" and consolidated product areas under him, one of OpenAI's remaining co-founders. The move places product authority with a co-founder just as the trial enters its closing days.

Closing arguments are expected in the coming days. The jury will return a verdict on contract and fiduciary claims. But the record they heard ranged wider: weeks of testimony about who said what, when, and whether either man can be believed.

Databricks deploys GPT-5.5 for enterprise agent workflows

Databricks integrated OpenAI's GPT-5.5 into its enterprise agent stack after the model topped the OfficeQA Pro benchmark. The deal routes a major data-warehouse customer base directly to OpenAI for agentic workloads. (openai.com)

OpenAI gives every Maltese citizen ChatGPT Plus

OpenAI signed a country-level agreement with Malta to provide ChatGPT Plus access and training to all residents. The deal is OpenAI's first nationwide consumer rollout and positions it ahead of EU-level procurement frameworks. (openai.com)

Andon Labs hands four radio stations to Claude, ChatGPT, Gemini, and Grok

Andon Labs put Anthropic's Claude, OpenAI's ChatGPT, Google's Gemini, and xAI's Grok in charge of separate 24/7 radio stations with no human oversight. The stations are the company's latest unsupervised-business experiment after its earlier vending-machine trial. (theverge.com)

Citation-padding turns AI-generated papers into a peer-review crisis

A 2017 epidemiology paper was flagged after receiving anomalous citation spikes traced to AI-written manuscripts that mass-cite earlier work. Reviewers now face submissions designed to game citation graphs rather than communicate findings. (theverge.com)

Campbell Brown questions who curates AI answers for users

Meta's former news partnerships head told StrictlyVC that Silicon Valley and end users hold opposite views on what AI assistants should say. Brown argued editorial decisions inside chatbots are being made without the public scrutiny applied to news platforms. (techcrunch.com)

Sony walks back Xperia 1 XIII AI Camera Assistant claims

Sony clarified that the Xperia 1 XIII feature does not edit photos but offers four suggestions based on lighting, depth, and subject. The clarification followed backlash from a demo post that implied automatic edits. (theverge.com)

OpenAI scaling recipe reaches gold-medal Olympiad performance

Researchers published a unified SFT-and-RL pipeline that converts a reasoning backbone into a solver hitting gold-medal scores on IMO and IPhO problems. The recipe uses a reverse-perplexity curriculum during fine-tuning. (huggingface.co)

Causal Forcing++ pushes interactive video generation to 1-2 sampling steps

The paper distills bidirectional diffusion models into frame-wise autoregressive students that generate video in one or two steps. The setup targets real-time streaming use cases where current 4-step methods still introduce sampling latency. (huggingface.co)

MIT Tech Review maps data-sovereignty gaps in agentic AI

The piece documents how enterprises fed proprietary data into third-party models under a "capability now, control later" bargain that now lacks governance. It cites autonomous systems as the trigger forcing companies to renegotiate where their data sits. (technologyreview.com)

Financial services hit data-readiness wall before deploying agents

MIT Tech Review reports that agentic AI rollouts in regulated finance stall on data lineage and second-by-second update requirements rather than on model quality. The finding shifts vendor selection toward data infrastructure providers over model labs. (technologyreview.com)