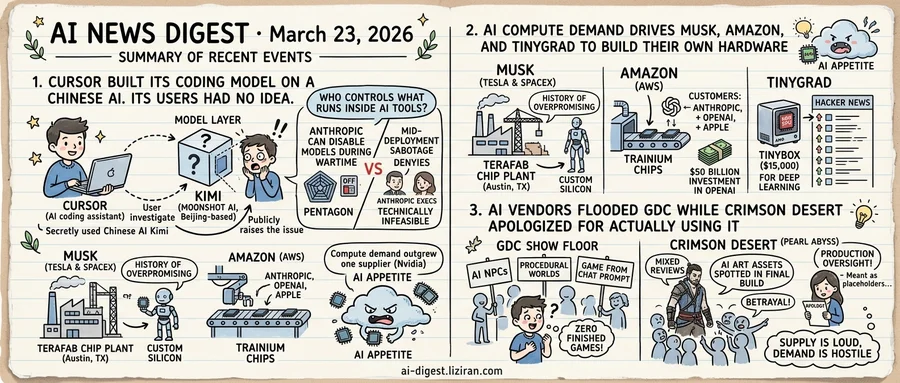

01 Cursor Built Its Coding Model on a Chinese AI. Its Users Had No Idea.

Cursor, an AI coding assistant competing with GitHub Copilot for developer adoption, confirmed this week that its newest model runs on top of Kimi, built by Beijing-based Moonshot AI. The company had not disclosed the model's provenance when it shipped the feature. It acknowledged the connection only after users investigated and raised the issue publicly.

This admission arrives alongside a related but distinct argument unfolding in a federal courtroom. Pentagon attorneys allege that Anthropic retains the technical ability to manipulate or disable AI models already deployed in critical systems during wartime. Anthropic executives deny this is possible.

Two disputes, one question: who controls what runs inside the AI tools that people depend on?

Cursor's users were writing production code with a model whose origins they couldn't verify. Nothing in the tool's interface indicated that a Chinese-developed model powered their experience. Building on a model from China is especially sensitive given ongoing U.S. trade restrictions and national-security reviews targeting Chinese AI companies. For most developers, though, the brand on the product was the entire trust signal. What sat underneath was invisible.

That opacity isn't unique to Cursor. Most AI development tools abstract away the model layer entirely. Users pick a product, not a model. They evaluate autocomplete speed, tab-completion accuracy, context window size. Which company trained the weights, and under which jurisdiction, rarely comes up in a product review. No industry standard requires vendors to disclose this information.

The Pentagon's allegation against Anthropic adds a second dimension. Even when a model's origin is known, the DoD argues, the vendor retains ongoing control. Models served through APIs can be updated, degraded, or withdrawn at any time. Anthropic executives counter that their architecture makes mid-deployment sabotage technically infeasible. Yet the allegation signals that the U.S. military now treats vendor control over deployed AI as a live operational risk.

The Cursor case reveals a gap in knowledge; the Anthropic dispute, a gap in control. Both rest on the same architecture: AI tools consumed as services, with users unable to verify what runs underneath.

Cursor has not said whether it will continue using Kimi or offer model-selection controls. No comment has come from Moonshot AI. For developers shipping code through AI assistants every day, the tool works identically to last week. The only difference is what they now know about it.

02 AI Compute Demand Drives Musk, Amazon, and Tinygrad to Build Their Own Hardware

Three announcements landed within days of each other, from companies that share almost nothing in scale or resources.

Musk's is the grandest. Tesla and SpaceX will jointly build a "Terafab" chip plant in Austin, Texas, producing custom silicon for humanoid robots, xAI training runs, and orbital data centers. Musk cited the chip industry's inability to keep pace with AI and robotics demand as motivation. TechCrunch noted his "history of overpromising." Building a chip fab from scratch requires billions in capital and years of execution. No timeline or budget has been disclosed.

Amazon has made the most progress. Its Trainium chips are already in production. Anthropic, OpenAI, and Apple have adopted the silicon, according to AWS. The company opened its chip lab to reporters shortly after announcing a $50 billion investment in OpenAI, framing Trainium as the infrastructure backbone of that deal. If customers of that scale are willing to train on non-Nvidia hardware, the switching cost may be lower than the industry assumed.

Tinygrad operates at the opposite extreme. The tinybox is a $15,000 desktop computer designed for deep learning, built on AMD GPUs. It hit the top of Hacker News with 571 points and 330 comments. The thread read like a live demand signal: individual developers want non-Nvidia training hardware and will pay for it.

A single pattern connects all three. A trillion-dollar company announces a fab. The largest cloud provider designs custom training chips and lands major AI developers as customers. An open-source project sells AMD boxes to developers at $15,000 each. These are not coordinated moves. The same force is producing independent responses at every tier: compute demand has outgrown what one supplier can feed.

None of these bets require Nvidia to have failed. AI's appetite for silicon has simply outstripped what any single vendor can satisfy.

03 AI Vendors Flooded GDC While Crimson Desert Apologized for Actually Using It

Walk the GDC show floor this year and you'd think generative AI had already conquered game development. Vendors occupied prominent booth space, pitching AI-driven NPCs, procedural world-building, and tools that claimed to generate entire games from a chat prompt. One Verge reporter spent ten minutes playing a pixel-art fantasy world generated entirely by Tencent's AI tools. Polished pitches, packed booths, zero finished games to show for it.

As The Verge reported, AI saturated GDC 2026 as a sales proposition: tooling, middleware, workflow automation. It was conspicuously absent from the finished titles on display. Developers sat through the presentations and browsed the demos. Few had shipped anything built with the technology being sold to them.

Then Crimson Desert provided the counterexample nobody wanted. Pearl Abyss's open-world action game launched to mixed reviews, but the real firestorm started when players spotted what appeared to be AI-generated art assets in the final build. The discovery tore through gaming communities. Pearl Abyss initially denied the claims. Days later, the studio reversed course and issued a public apology, acknowledging that AI-generated art had been used during development. The assets were placeholders meant to be replaced before release, according to Pearl Abyss.

That framing mattered less than the outcome: AI art shipped in a retail product without disclosure. Pearl Abyss called it a production oversight; players called it a betrayal. The apology emphasized intent to replace rather than intent to use, a distinction that only highlighted how toxic AI-generated content remains for paying audiences.

At GDC, vendors had answers for everything: faster iteration, lower costs, smaller teams building bigger worlds. What they lacked was a single success story to point to. The one high-profile game that actually shipped AI art spent the same week apologizing for it.

Supply has never been louder, and demand has never been more hostile.

EFF Warns Publisher Blocks on Internet Archive Threaten One Trillion Archived Pages

The New York Times and The Guardian now block the Internet Archive's Wayback Machine crawlers, ostensibly to fight AI scraping. The Archive holds over one trillion web pages collected across three decades. Wikipedia links to 2.6 million news articles preserved by the Archive across 249 languages, all now at risk. eff.org

DoorDash Pays Gig Workers to Record Themselves Doing Chores for AI Training

DoorDash's new Tasks app pays workers to film mundane activities (laundry, cooking, walking) to generate training data for AI models. The app turns gig workers into on-demand data laborers alongside their delivery shifts. wired.com

Companies Start Offering AI Token Budgets as Part of Engineering Compensation

Some employers now bundle AI API token allowances into engineering job offers alongside salary, equity, and benefits. TechCrunch reports the trend raises questions about whether tokens are a genuine perk or an expense companies are shifting onto workers. techcrunch.com